You can now use VPC Network bindings with `network_id: "cf1:network"` to reach your full private network from Workers, including:

- Cloudflare Mesh nodes and client devices

- Subnet routes and hostname routes announced through Cloudflare Tunnel or Cloudflare Mesh

- Destinations connected through Cloudflare WAN on-ramps — GRE, IPsec, and CNI

This means a single VPC Network binding can route Worker requests to private services regardless of how those services are connected to Cloudflare: through a Cloudflare Tunnel from a cloud VPC, a Mesh node on a private subnet, or a Cloudflare WAN on-ramp from your data center or branch site.

JSONC

    {
      "vpc_networks": [
        {
          "binding": "PRIVATE_NETWORK",
          "network_id": "cf1:network",
          "remote": true,
        },
      ],
    }

TOML

    [[vpc_networks]]
    binding = "PRIVATE_NETWORK"
    network_id = "cf1:network"
    remote = true

At runtime, the URL you pass to `fetch()` determines the destination:

JavaScript

    // Reach a service behind a Cloudflare WAN IPsec on-ramp
    const response = await env.PRIVATE_NETWORK.fetch("http://10.50.0.100:8080/api");

For configuration options, refer to VPC Networks.
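Because the destination is chosen by the URL alone, one binding can front several private services. The following is a minimal sketch of that idea, not an official pattern: the `ROUTES` table, the `resolvePrivateUrl` helper, and the second address (`10.60.0.20:9000`) are hypothetical and exist only for illustration.

```typescript
// Hypothetical route table: path prefix -> private origin.
// Only 10.50.0.100:8080 appears in the example above; the other address is a placeholder.
const ROUTES: Array<{ prefix: string; origin: string }> = [
  { prefix: "/api/", origin: "http://10.50.0.100:8080" },
  { prefix: "/reports/", origin: "http://10.60.0.20:9000" },
];

// Build the private URL to pass to env.PRIVATE_NETWORK.fetch().
// Returns null when no route matches, so the caller can answer with a 404.
function resolvePrivateUrl(requestUrl: string): string | null {
  const incoming = new URL(requestUrl);
  for (const route of ROUTES) {
    if (incoming.pathname.startsWith(route.prefix)) {
      return route.origin + incoming.pathname + incoming.search;
    }
  }
  return null;
}

// Inside a Worker's fetch handler this could be used roughly as:
//   const target = resolvePrivateUrl(request.url);
//   if (target === null) return new Response("Not found", { status: 404 });
//   return env.PRIVATE_NETWORK.fetch(target);
```

Keeping the lookup in a pure function makes the routing decision easy to test without a live binding.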

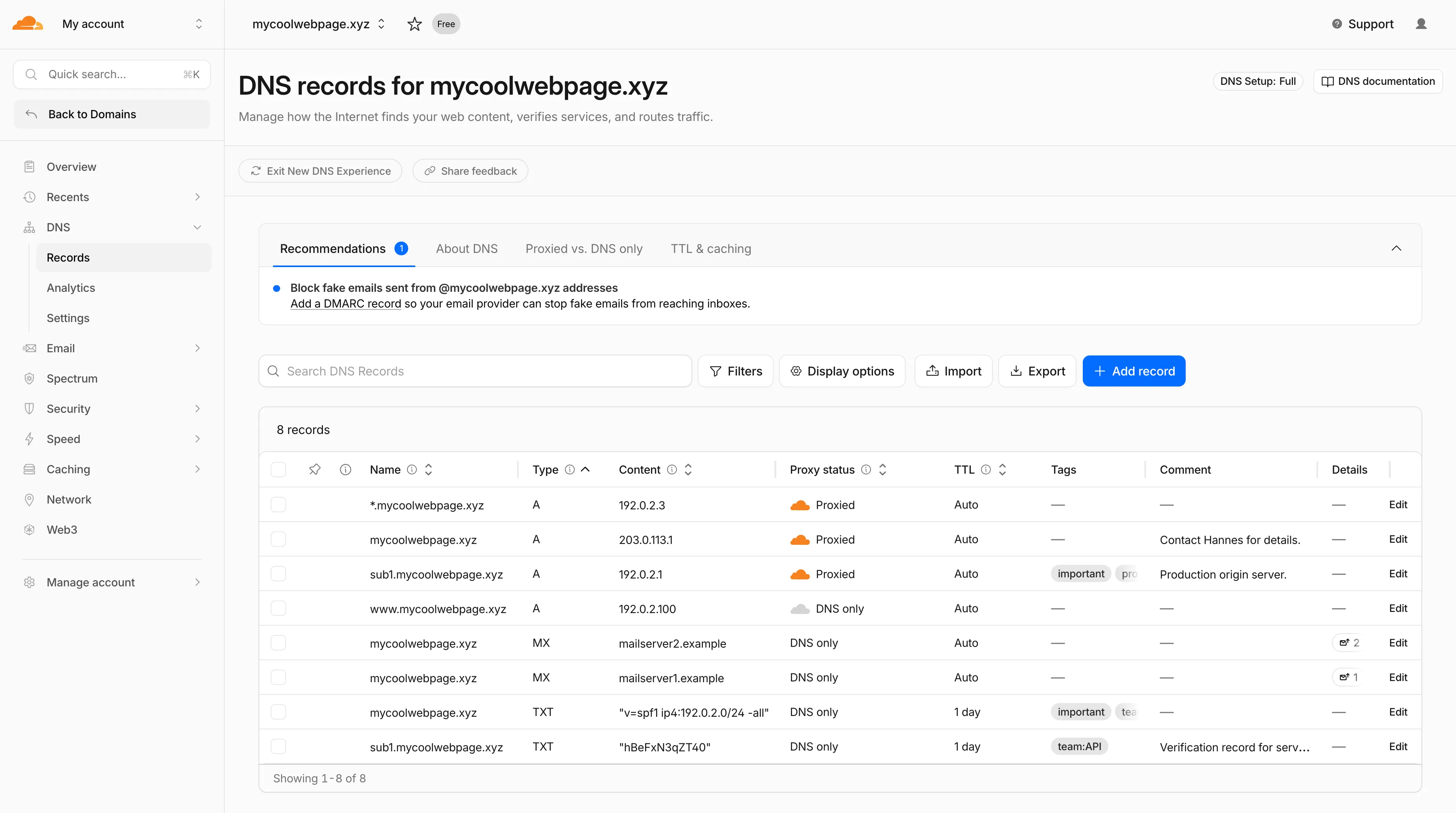

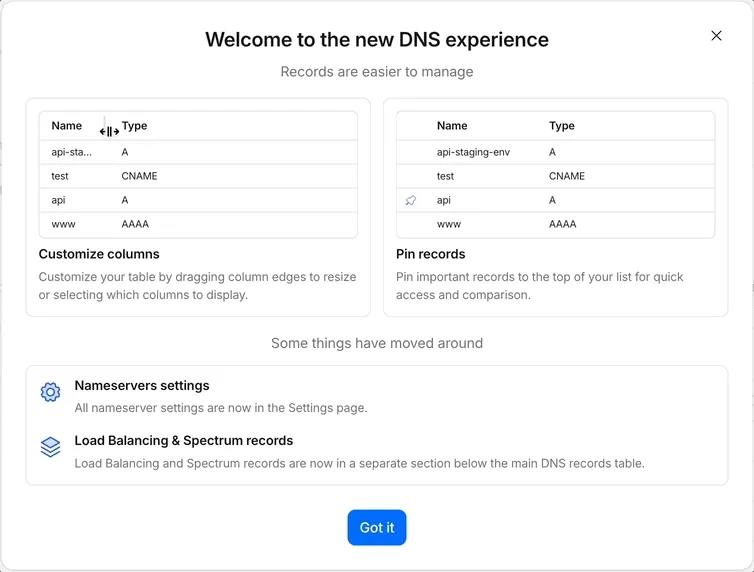

Starting today, everyone can opt in to a refreshed DNS records page in the Cloudflare dashboard. Over the coming weeks, the new experience will become the default for Free plan users first, followed by paid plans.

- Better table experience: resizable and hideable columns, row pinning, advanced filters with logical operators (AND/OR), configurable pagination, and expanded input fields so long values are no longer cut off.

- First-class mobile experience: responsive layout with a touch-friendly, card-based UI and compact controls for small screens.

- DNS quick reference: bite-sized explainers for DNS, proxy status, and TTL, available directly in the product to help users configure records without leaving the page.

- Modern frontend: a refactor onto Cloudflare's new UI framework that improves performance and lays the foundation for future improvements.

Dates are subject to change based on feedback received during the rollout.

- 20 May - 05 June: ramped rollout to Free, then Pro and Business plans.

- 08 June - 03 July: ramped rollout to Enterprise plans.

Once the new experience is turned on for your account, look for the feedback link at the top of the DNS records page in the Cloudflare dashboard and let us know what you think. Your input helps us prioritize the next round of improvements.

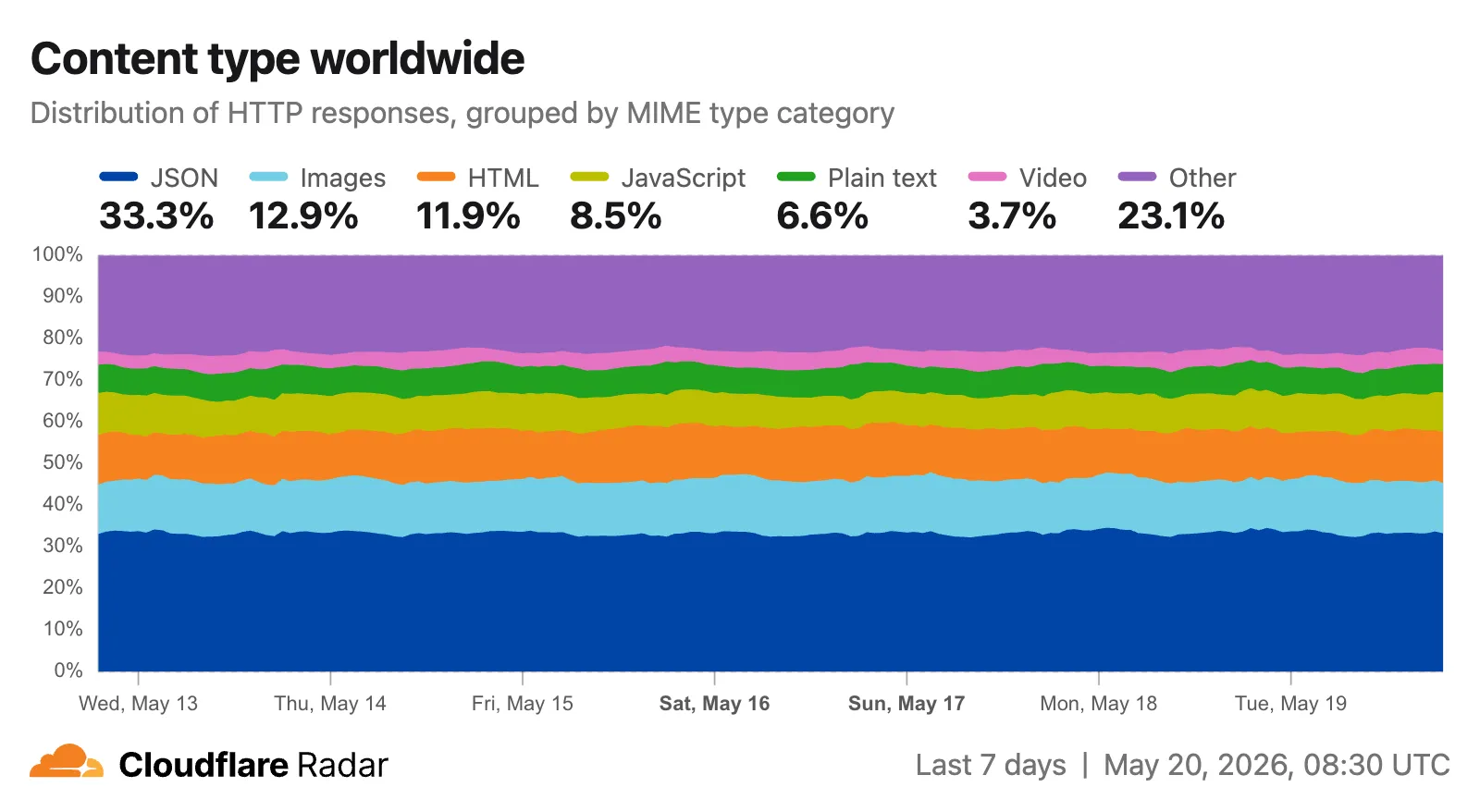

Radar now includes two new charts on the traffic page ↗ that provide deeper insights into the composition of HTTP traffic: a content type distribution chart and an API traffic share chart.

The new Content type ↗ chart displays the distribution of HTTP response content types, grouped into high-level categories. A traffic type selector allows filtering by human, bot, or all traffic. The existing Bot vs. Human ↗ chart also gained a content type category filter, allowing users to see the bot/human split for specific content categories.

Content type categories:

- HTML: Web pages (`text/html`)

- Images: All image formats (`image/*`)

- JSON: JSON data and API responses (`application/json`, `*+json`)

- JavaScript: Scripts (`application/javascript`, `text/javascript`)

- CSS: Stylesheets (`text/css`)

- Plain Text: Unformatted text (`text/plain`)

- Fonts: Web fonts (`font/*`, `application/font-*`)

- XML: XML documents and feeds (`text/xml`, `application/xml`, `application/rss+xml`, `application/atom+xml`)

- YAML: Configuration files (`text/yaml`, `application/yaml`)

- Video: Video content and streaming (`video/*`, `application/ogg`, `*mpegurl`)

- Audio: Audio content (`audio/*`)

- Markdown: Markdown documents (`text/markdown`)

- Documents: PDFs, Office documents, ePub, CSV (`application/pdf`, `application/msword`, `text/csv`)

- Binary: Executables, archives, WebAssembly (`application/octet-stream`, `application/zip`, `application/wasm`)

- Serialization: Binary API formats (`application/protobuf`, `application/grpc`, `application/msgpack`)

- Other: All other content types
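To make the grouping concrete, here is a rough sketch of how a `Content-Type` header could be mapped onto these categories. It is an illustration only, not Radar's implementation: it covers just the MIME patterns named in the list above, and the first-match-wins ordering and the `classifyContentType` name are assumptions.

```typescript
interface CategoryRule {
  category: string;
  patterns: string[]; // exact types, "prefix*" patterns, or "*suffix" patterns
}

// Patterns copied from the category list; rule order is an assumption.
const CATEGORY_RULES: CategoryRule[] = [
  { category: "HTML", patterns: ["text/html"] },
  { category: "Images", patterns: ["image/*"] },
  { category: "JSON", patterns: ["application/json", "*+json"] },
  { category: "JavaScript", patterns: ["application/javascript", "text/javascript"] },
  { category: "CSS", patterns: ["text/css"] },
  { category: "Plain Text", patterns: ["text/plain"] },
  { category: "Fonts", patterns: ["font/*", "application/font-*"] },
  { category: "XML", patterns: ["text/xml", "application/xml", "application/rss+xml", "application/atom+xml"] },
  { category: "YAML", patterns: ["text/yaml", "application/yaml"] },
  { category: "Video", patterns: ["video/*", "application/ogg", "*mpegurl"] },
  { category: "Audio", patterns: ["audio/*"] },
  { category: "Markdown", patterns: ["text/markdown"] },
  { category: "Documents", patterns: ["application/pdf", "application/msword", "text/csv"] },
  { category: "Binary", patterns: ["application/octet-stream", "application/zip", "application/wasm"] },
  { category: "Serialization", patterns: ["application/protobuf", "application/grpc", "application/msgpack"] },
];

// A trailing "*" means prefix match, a leading "*" means suffix match.
function matchesPattern(pattern: string, mime: string): boolean {
  if (pattern.endsWith("*")) return mime.startsWith(pattern.slice(0, -1));
  if (pattern.startsWith("*")) return mime.endsWith(pattern.slice(1));
  return mime === pattern;
}

function classifyContentType(header: string): string {
  // Drop parameters such as "; charset=utf-8" and normalize case.
  const mime = (header.split(";")[0] ?? "").trim().toLowerCase();
  for (const rule of CATEGORY_RULES) {
    if (rule.patterns.some((p) => matchesPattern(p, mime))) return rule.category;
  }
  return "Other";
}
```

For example, `classifyContentType("application/ld+json")` falls into JSON through the `*+json` suffix pattern, while an unlisted type such as `application/x-unknown` falls through to Other.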

The

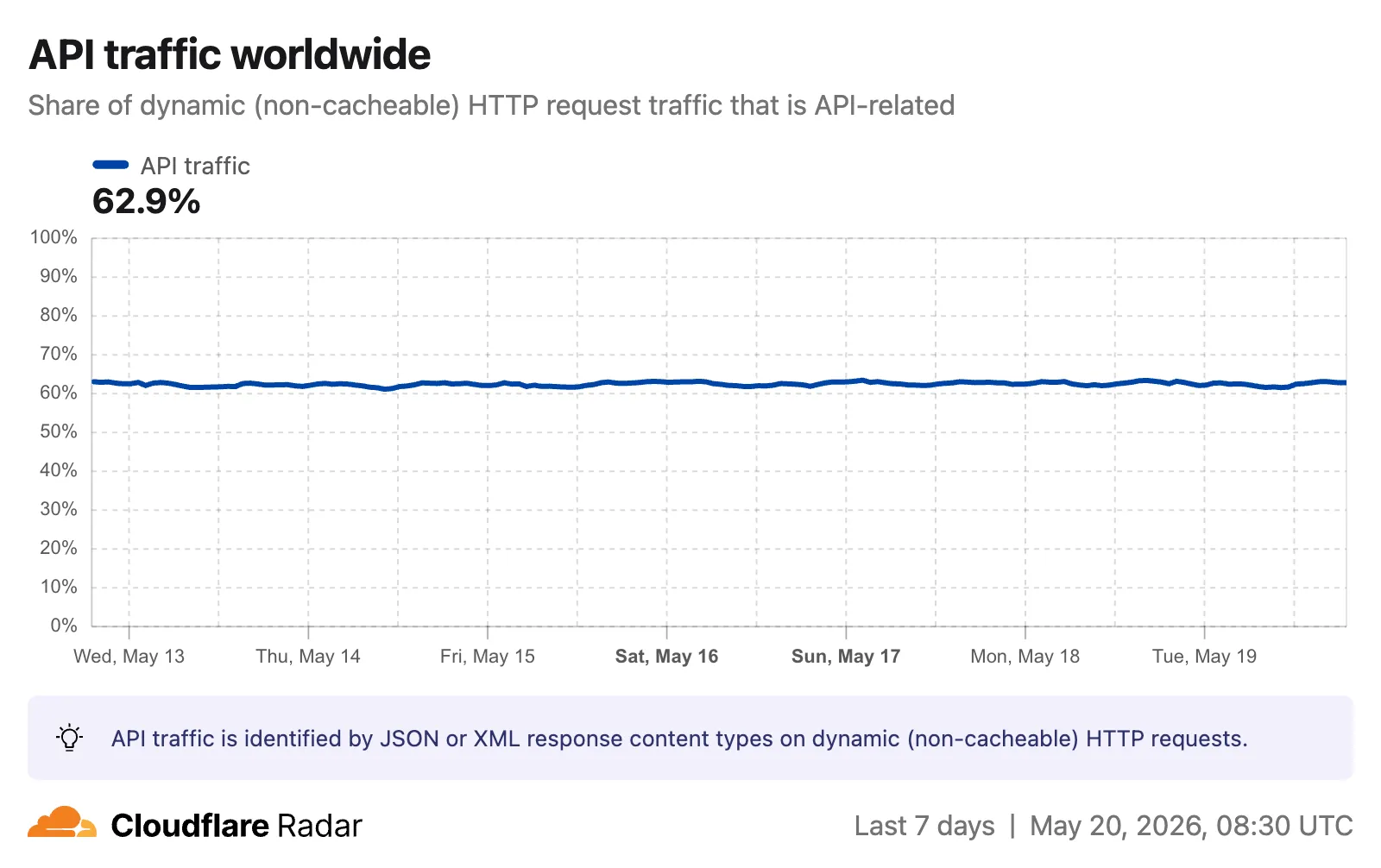

CONTENT_TYPEdimension andcontentTypefilter are available on the HTTP summary, timeseries groups, and timeseries endpoints.The new API traffic ↗ chart shows the percentage of dynamic (non-cacheable) HTTP request traffic that is API-related. API traffic is identified by JSON or XML response content types (

application/json,application/xml,text/xml) on HTTP requests that returned a 200 status code. A traffic type selector allows switching between human traffic, bot traffic, or all traffic.

The

API_TRAFFICdimension is available on the existing HTTP summary and timeseries groups endpoints. AnapiTrafficfilter (APIorNON_API) can also be applied to HTTP timeseries requests to retrieve raw request counts for API-only or non-API traffic.Visit the Radar traffic page ↗ to explore these new charts.
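The API traffic definition above can be restated as a small calculation. This is a sketch under stated assumptions, not how Radar computes the chart: the `ObservedRequest` shape and its `cacheable` flag are hypothetical, and content types are matched exactly against the three types named above.

```typescript
interface ObservedRequest {
  contentType: string; // response Content-Type header value
  status: number;      // HTTP response status code
  cacheable: boolean;  // hypothetical flag: true for cacheable (non-dynamic) traffic
}

// The three response content types named in the changelog entry.
const API_CONTENT_TYPES = new Set(["application/json", "application/xml", "text/xml"]);

function isApiRequest(req: ObservedRequest): boolean {
  const mime = (req.contentType.split(";")[0] ?? "").trim().toLowerCase();
  return req.status === 200 && API_CONTENT_TYPES.has(mime);
}

// Percentage of dynamic (non-cacheable) requests that count as API traffic.
function apiTrafficShare(requests: ObservedRequest[]): number {
  const dynamic = requests.filter((r) => !r.cacheable);
  if (dynamic.length === 0) return 0;
  const api = dynamic.filter(isApiRequest).length;
  return (api / dynamic.length) * 100;
}
```

Note that cacheable requests are excluded from both the numerator and the denominator, so the share is relative to dynamic traffic only.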


Key Findings

- Existing rule enhancements have been deployed to improve detection resilience against broad classes of web attacks and strengthen behavioral coverage.

Continuous Rule Improvements

We are continuously refining our managed rules to provide more resilient protection and deeper insights into attack patterns. To ensure an optimal security posture, we recommend consistently monitoring the Security Events dashboard and adjusting rule actions as these enhancements are deployed.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
| --- | --- | --- | --- | --- | --- | --- |
| Cloudflare Managed Ruleset | | N/A | Sitecore - Cache Poisoning - CVE:CVE-2025-53693 Beta | N/A | Block | This rule is merged into the original rule "Sitecore - Cache Poisoning - CVE:CVE-2025-53693" (ID: |

Cloudflare Access now supports using Cloudflare itself as an identity provider. If you publish an Access application and select Cloudflare as the login method, users can sign in with their existing Cloudflare account — no one-time PINs, no third-party IdP configuration, and no shared email inboxes. Authentication is backed by Cloudflare's own account security (including multi-factor authentication), making it both simpler to set up and more secure than OTP-based login for most use cases.

Cloudflare is now the default identity provider for all newly created Zero Trust accounts, replacing One-time PIN.

This also enables two new capabilities:

- Cloudflare Account Member selector — A new policy selector that matches users based on their membership in a Cloudflare account. You can target the current account or specify a different account ID for cross-account access scenarios.

- Restrict to account members — An identity provider configuration option that limits authentication to users who are members of your Cloudflare account.

To get started, add Cloudflare as an identity provider in your Zero Trust settings.

You can now receive event notifications for Artifacts repository changes and consume them from a Worker to build commit-driven automation.

This allows you to:

- Run custom workflows when a repository is created or imported

- Kick off a build and deploy a change when an agent pushes to a repo

- Trigger a review agent on every push

Available events include:

- Account-level events (`artifacts` source): `repo.created`, `repo.deleted`, `repo.forked`, `repo.imported`

- Repository-level events (`artifacts.repo` source): `pushed`, `cloned`, `fetched`
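As an illustration of commit-driven automation, a consumer could map each event to a follow-up action. The sketch below is hedged: only the `source` and event type values come from the list above, while the `ArtifactsEvent` field names, the `Action` labels, and the `chooseAction` helper are hypothetical. Check the Artifacts documentation for the real payload shape.

```typescript
// Assumed event envelope. The field names are hypothetical; the values are from the list above.
interface ArtifactsEvent {
  source: "artifacts" | "artifacts.repo";
  type: string;
}

type Action = "run-setup-workflow" | "build-and-review" | "ignore";

function chooseAction(event: ArtifactsEvent): Action {
  // Account-level: run a custom workflow when a repo is created or imported.
  if (
    event.source === "artifacts" &&
    (event.type === "repo.created" || event.type === "repo.imported")
  ) {
    return "run-setup-workflow";
  }
  // Repository-level: kick off a build and a review agent on every push.
  if (event.source === "artifacts.repo" && event.type === "pushed") {
    return "build-and-review";
  }
  // Everything else (for example cloned, fetched, repo.deleted) is ignored in this sketch.
  return "ignore";
}
```

A Worker that receives these notifications could call a function like this for each message and then start the matching workflow.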

To learn more, refer to Artifacts documentation.

Cloudflare CASB now integrates with the Claude Compliance API ↗. This enhancement gives security teams visibility into Claude usage patterns, admin activity, and compliance-relevant events across their organization.

The Claude Compliance API provides structured access to audit logs and administrative actions within Claude Enterprise and Claude Platform. Cloudflare CASB ingests this data to surface security findings that help organizations enhance their security posture and enforce AI governance.

Starting today, security teams can scan for security findings across the following assets:

- Public projects — Projects set to public visibility

- Project attachment — Files and documents added to projects that violate DLP policies

- Chat files — User-uploaded and provider-generated files that violate DLP policies

- Chat messages — User prompts and provider responses that violate DLP policies

- Artifacts — Provider-generated documents and files that violate DLP policies

This integration is available to all Cloudflare One customers. New Cloudflare customers can sign up and start with their first two integrations for free. Existing customers can enable the integration directly in the dashboard. The integration begins scanning immediately and surfaces findings in the dashboard within minutes.

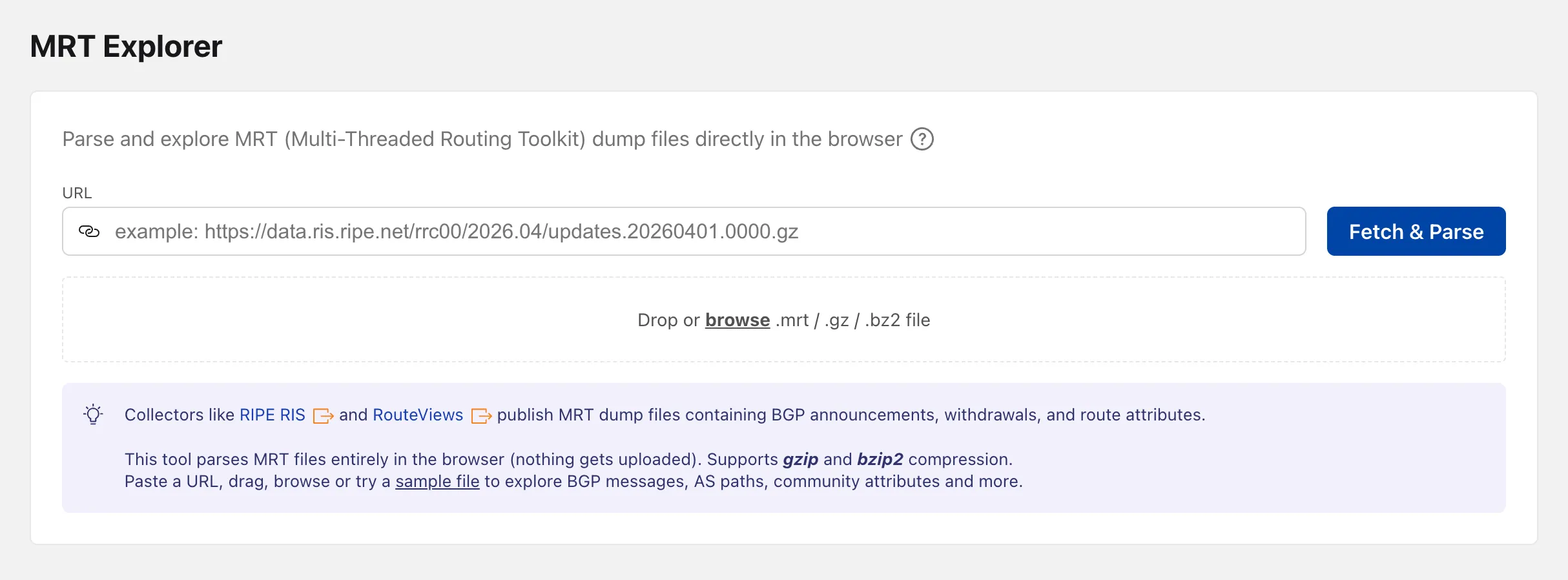

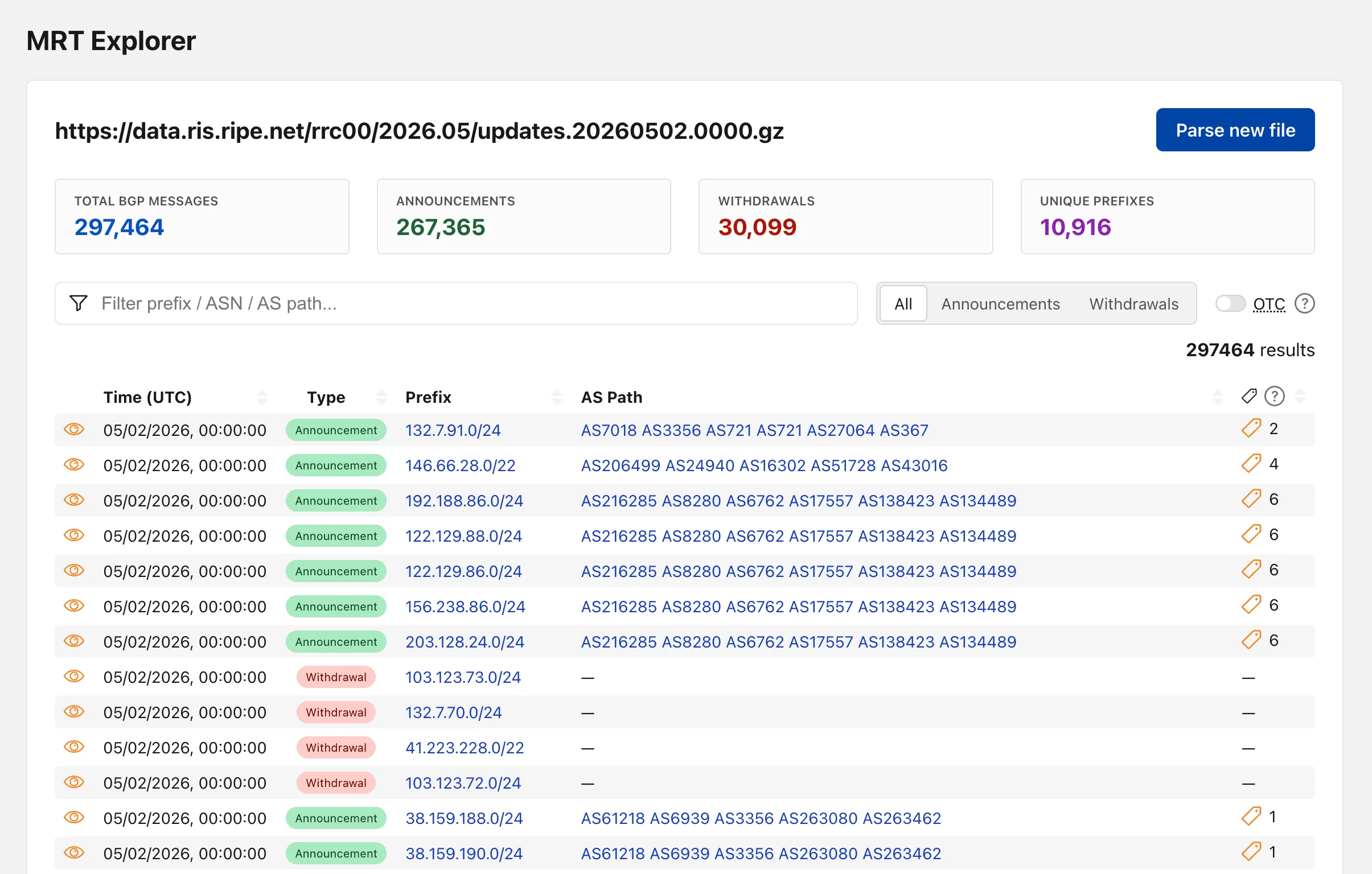

Radar now includes an MRT Explorer ↗ tool in the Routing section. Route collectors like RIPE RIS and RouteViews publish MRT (Multi-Threaded Routing Toolkit) dump files containing BGP announcements, withdrawals, and route attributes. The new tool parses these files entirely in the browser — nothing gets uploaded.

Paste a URL to fetch an MRT file remotely, drag and drop one onto the page, or browse for a local file. Gzip and bzip2 compressed files are supported. A sample file is also available to get started right away.

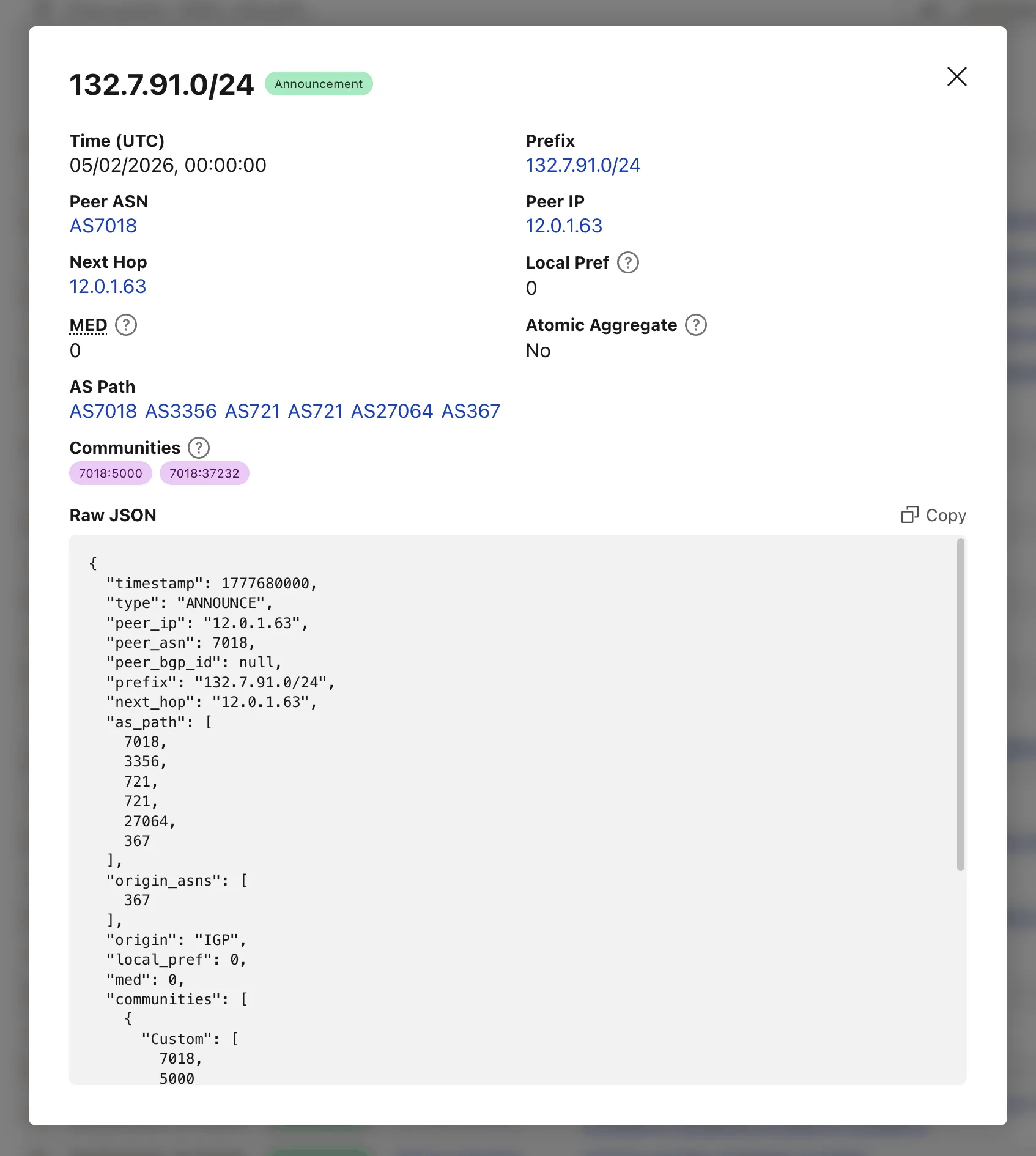

Once parsed, the tool lists every BGP event with its timestamp, prefix, AS path, OTC (Only to Customer), and community attributes.

Clicking on the "View details" action opens a modal with additional properties and the full event JSON.

When loading a file by URL, the query string captures the source so the link can be shared directly — the recipient's browser immediately fetches and parses the same file.

Try the MRT Explorer on Cloudflare Radar ↗.

You can now manage Artifacts namespaces, repos, and repo-scoped tokens directly from Wrangler CLI.

Available commands:

- `wrangler artifacts namespaces list`: List Artifacts namespaces in your account.

- `wrangler artifacts namespaces get`: Get metadata for a namespace.

- `wrangler artifacts repos create`: Create a repo in a namespace.

- `wrangler artifacts repos list`: List repos in a namespace.

- `wrangler artifacts repos get`: Get metadata for a repo.

- `wrangler artifacts repos delete`: Delete a repo.

- `wrangler artifacts repos issue-token`: Issue a repo-scoped token for Git access.

To get started, refer to the Wrangler Artifacts commands documentation.

Network Analytics is now fully supported for accounts using Unified Routing mode. Traffic that traverses Unified Routing on-ramps and off-ramps is now visible in Network Analytics with the same dimensions and filters as traffic on the standard data plane.

This closes a parity gap for customers who had moved tunnels onto Unified Routing and lost visibility into their data plane traffic in the Network Analytics dashboard. No configuration change is required: analytics data is collected automatically for all accounts with Unified Routing enabled.

For the remaining beta limitations, refer to Traffic steering beta limitations.

You can now share local dev sessions through Cloudflare Tunnel and get a public URL when using either Wrangler or the Cloudflare Vite plugin. This is useful when you need to share a preview, test a webhook, or access your app from another device.

This lets you either:

- start a temporary Quick tunnel with a random `*.trycloudflare.com` hostname, or

- use an existing named tunnel for a stable hostname and to restrict access with Cloudflare Access.

To start a tunnel, press `t` in Wrangler or `t + Enter` in Vite while your dev server is running. For details on setting up a named tunnel, refer to Share a local dev server.

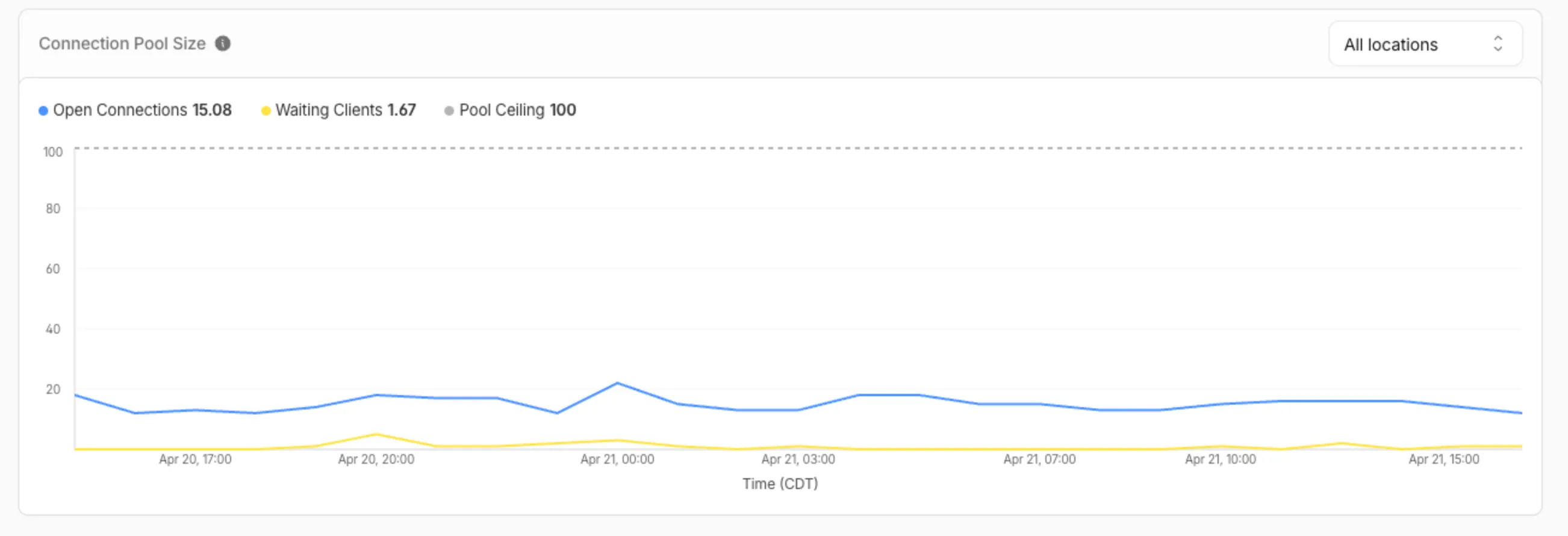

You can now view the size of your Hyperdrive database connection pools, giving you the ability to self-diagnose connection issues. Using the Cloudflare dashboard or the `hyperdrivePoolSizesAdaptiveGroups` dataset in the GraphQL Analytics API, you can see `waitingClients`, `currentPoolSize`, `availablePoolSlots`, and `maxPoolSize` for each of your configurations.

A new Pool connections chart has been added to the Metrics tab of each Hyperdrive configuration in the Cloudflare dashboard. You can use the location selector to drill down into specific locations hosting your connection pool by airport code.

The chart shows:

- Waiting clients: Client requests waiting for an available connection.

- Open connections: Active connections to your database.

- Pool size maximum: Your configured origin connection limit.

Connection contention appears as a spike in waiting clients, or when open connections consistently approach the pool size maximum. If your open connections regularly approach this limit, consider contacting Cloudflare to increase your Hyperdrive connection limit.
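The two contention signals described above can be checked programmatically once the metrics are exported. The following is a minimal sketch, not an official heuristic: the `PoolSample` shape, the `hasContention` helper, and the 90% `NEAR_LIMIT_RATIO` threshold are assumptions, and "any waiting client" is a simplification of "a spike in waiting clients".

```typescript
interface PoolSample {
  waitingClients: number;  // clients waiting for a connection in this interval
  currentPoolSize: number; // open connections in this interval
  maxPoolSize: number;     // configured pool size maximum
}

// Hypothetical threshold for "approaching the pool size maximum".
const NEAR_LIMIT_RATIO = 0.9;

function hasContention(samples: PoolSample[]): boolean {
  if (samples.length === 0) return false;
  // Signal 1: clients had to wait for a connection in at least one interval.
  const anyWaiting = samples.some((s) => s.waitingClients > 0);
  // Signal 2: open connections stayed near the maximum in every interval.
  const consistentlyNearLimit = samples.every(
    (s) => s.maxPoolSize > 0 && s.currentPoolSize / s.maxPoolSize >= NEAR_LIMIT_RATIO,
  );
  return anyWaiting || consistentlyNearLimit;
}
```

Tune the threshold and the definition of a spike to your own workload before alerting on the result.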

The `hyperdrivePoolSizesAdaptiveGroups` dataset in the GraphQL Analytics API exposes the following key connection pool metrics for each Hyperdrive configuration:

Under `avg`:

- `currentPoolSize`: Average number of connections currently open in the pool.

- `availablePoolSlots`: Average number of pool connections available for checkout.

- `waitingClients`: Average number of clients waiting for a connection from the pool.

Under `max`:

- `maxPoolSize`: Configured maximum size of the connection pool.

- `currentPoolSize`: Peak number of connections open in the pool.

- `waitingClients`: Peak number of clients waiting for a connection from the pool.

For more information, refer to Metrics and analytics and Connection pooling.

R2 SQL is Cloudflare's serverless, distributed SQL engine for querying Apache Iceberg ↗ tables stored in R2 Data Catalog. R2 SQL runs directly on Cloudflare's global network with no infrastructure to manage, so you can analyze data in R2 without exporting it to an external warehouse.

R2 SQL now supports joining multiple Iceberg tables in a single query. You can combine tables with JOINs, filter with subqueries, and define multi-table CTEs to build complex analytical queries.

- JOINs: `INNER JOIN`, `LEFT JOIN`, `RIGHT JOIN`, `FULL OUTER JOIN`, `CROSS JOIN`, and implicit joins (comma-separated `FROM` with conditions in `WHERE`)

- Subqueries: `IN` / `NOT IN`, `EXISTS` / `NOT EXISTS`, scalar subqueries in `SELECT` / `WHERE` / `HAVING`, and derived tables (subqueries in `FROM`)

- Multi-table CTEs: `WITH` clauses can reference different tables and include JOINs

- Self-joins: join a table with itself using different aliases

- Multi-way joins — join three or more tables in a single query

Join two tables:

    SELECT z.domain, z.plan, COUNT(*) AS request_count
    FROM my_namespace.zones z
    INNER JOIN my_namespace.http_requests h ON z.zone_id = h.zone_id
    WHERE z.plan = 'enterprise'
    GROUP BY z.domain, z.plan
    ORDER BY request_count DESC
    LIMIT 20

Filter with an `EXISTS` subquery:

    SELECT z.domain, z.plan
    FROM my_namespace.zones z
    WHERE EXISTS (
      SELECT 1 FROM my_namespace.firewall_events f
      WHERE f.zone_id = z.zone_id AND f.action = 'block'
    )
    ORDER BY z.domain
    LIMIT 20

Combine multi-table CTEs with a join:

    WITH top_zones AS (
      SELECT zone_id, COUNT(*) AS req_count
      FROM my_namespace.http_requests
      GROUP BY zone_id
      ORDER BY req_count DESC
      LIMIT 50
    ),
    zone_threats AS (
      SELECT zone_id, COUNT(*) AS threat_count
      FROM my_namespace.firewall_events
      WHERE risk_score > 0.5
      GROUP BY zone_id
    )
    SELECT tz.zone_id, tz.req_count, COALESCE(zt.threat_count, 0) AS threat_count
    FROM top_zones tz
    LEFT JOIN zone_threats zt ON tz.zone_id = zt.zone_id
    ORDER BY tz.req_count DESC
    LIMIT 20

For the full syntax reference, refer to the SQL reference. For performance guidance with joins, refer to Limitations and best practices.


This emergency release introduces two new rules to detect nginx heap buffer overflow and heap spray exploitation attempts targeting the rewrite module's `is_args` stale-state bug (CVE-2026-42945).

Key Findings

CVE-2026-42945: nginx Heap Buffer Overflow via Stale `is_args` in Rewrite Module

Successful exploitation allows remote attackers to trigger a heap buffer overflow in nginx's rewrite module by sending crafted URIs containing escapable characters. A length/copy pass mismatch in `ngx_http_script_copy_capture_code()` causes the copy pass to write escaped data into an undersized buffer, leading to heap corruption. This enables denial of service (worker process crash) and, with heap feng shui techniques, potential remote code execution.

We strongly recommend upgrading to nginx 1.30.1 (or later) immediately to address the underlying vulnerability. If you cannot upgrade immediately, avoid `rewrite` directives with `?` in the replacement string followed by `set` or `if` referencing capture groups.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
| --- | --- | --- | --- | --- | --- | --- |
| Cloudflare Managed Ruleset | | N/A | nginx - Remote Code Execution - Buffer Overread - CVE:CVE-2026-42945 | N/A | Block | This is a new detection. |
| Cloudflare Managed Ruleset | | N/A | nginx - Remote Code Execution - Heap Spray - CVE:CVE-2026-42945 | N/A | Block | This is a new detection. |

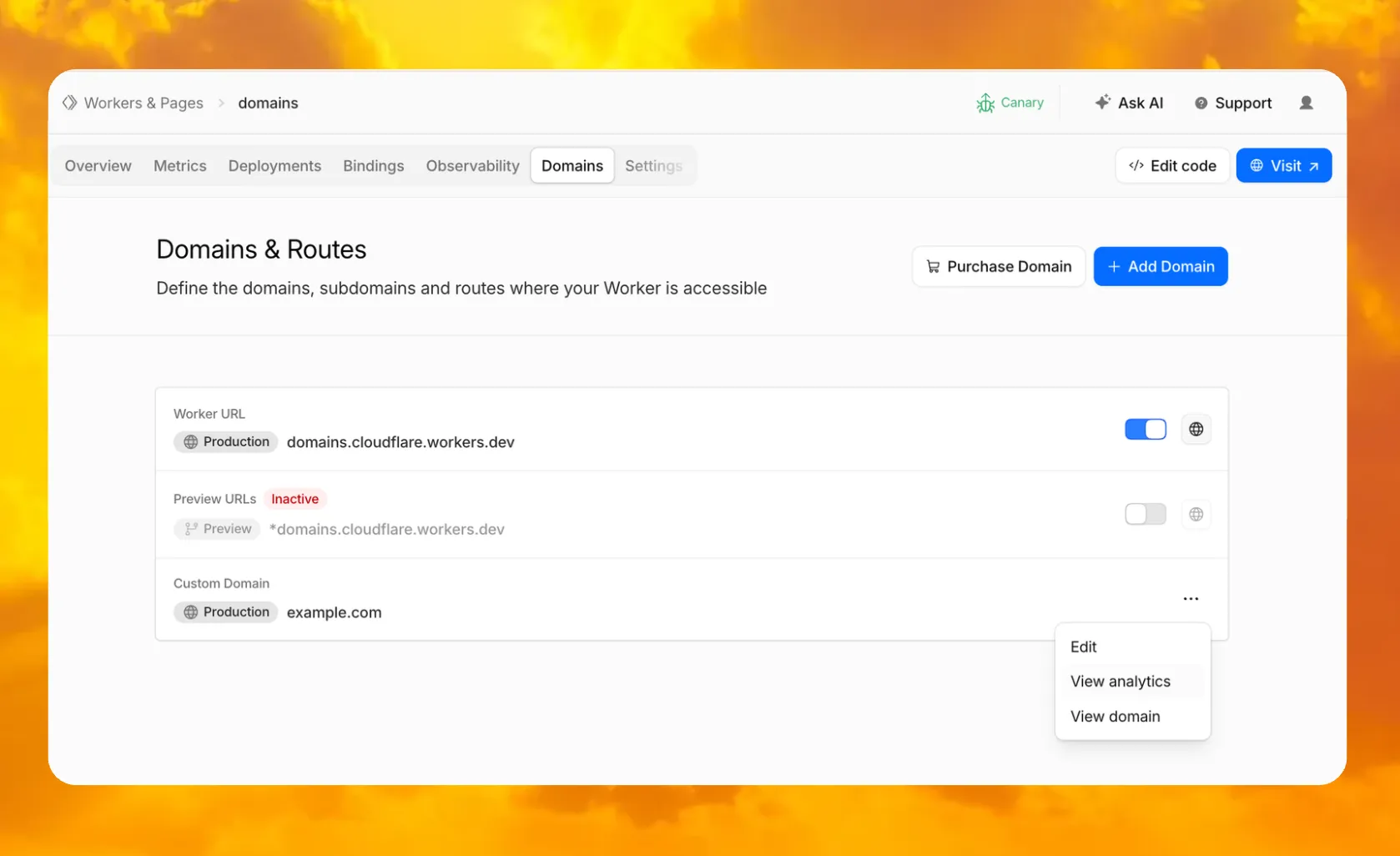

In your Worker's dashboard, there is now a dedicated Domains tab where you can purchase a new domain through Cloudflare Registrar and have it automatically connected, add an existing domain, and manage all of your Worker's routing in one place.

You can also enable or disable your `workers.dev` subdomain and Preview URLs, put them behind Cloudflare Access to require sign-in, and jump directly to analytics or domain overview for any connected domain.

To get started, go to Workers & Pages, select a Worker, and open the Domains tab.


The latest release of the Agents SDK ↗ brings more reliable chat recovery, fixes Agent state synchronization during reconnects, adds durable submissions for Think, exposes routing retry configuration, and adds connection control for Voice agents.

`@cloudflare/ai-chat` now keeps server turns running when a browser or client stream is interrupted. This is useful for long-running AI responses where users refresh the page, close a tab, or temporarily lose connection. Calling `stop()` still cancels the server turn.

Set `cancelOnClientAbort: true` if browser or client aborts should also cancel the server turn:

JavaScript / TypeScript

    const chat = useAgentChat({
      agent: "assistant",
      name: "user-123",
      cancelOnClientAbort: true,
    });

Notable bug fixes:

- Chat stream resume negotiation no longer throws when replay races with a closed WebSocket connection.

- Recovered chat continuations no longer leave `useAgentChat` stuck in a streaming state when the original socket disconnects before a terminal response.

- Approval auto-continuation preserves reasoning parts and persists continuation reasoning in the final message.

- `isServerStreaming` now resets correctly when a resumed stream moves from the fallback observer path to a transport-owned stream.

`agents@0.12.4` prevents duplicate initial state frames during WebSocket connection setup. This avoids stale initial state messages overwriting state updates already sent by the client.

Agent recovery is also more reliable when tool calls span a Durable Object restart. Recovery now defers user finish hooks until after agent startup and isolates hook failures, so one failed hook does not block other recovered runs from finalizing.

`getAgentByName()` now supports `routingRetry` for transient Durable Object routing failures:

JavaScript / TypeScript

    import { getAgentByName } from "agents";

    const agent = await getAgentByName(env.AssistantAgent, "user-123", {
      routingRetry: {
        maxAttempts: 3,
      },
    });

`@cloudflare/think` now supports durable programmatic submissions. `submitMessages()` provides durable acceptance, idempotent retries, status inspection, cancellation, and cleanup for server-driven turns that should continue after the caller returns.

`Think.chat()` RPC turns now run inside chat recovery fibers and persist their stream chunks. Interrupted sub-agent turns can recover partial output instead of starting over.

`ChatOptions.tools` has been removed from the TypeScript API. Define durable tools on the child agent or use agent tools for orchestration. Runtime `options.tools` values passed by legacy callers are ignored with a warning.

`@cloudflare/think` no longer applies `pruneMessages({ toolCalls: "before-last-2-messages" })` to model context by default. The previous default could strip client-side tool results from longer multi-turn flows. `truncateOlderMessages` still runs as before, so context cost remains bounded. Subclasses that relied on the old aggressive pruning can opt back in from `beforeTurn`:

JavaScript

    import { Think } from "@cloudflare/think";
    import { pruneMessages } from "ai";

    export class MyAgent extends Think {
      beforeTurn(ctx) {
        return {
          messages: pruneMessages({
            messages: ctx.messages,
            toolCalls: "before-last-2-messages",
          }),
        };
      }
    }

TypeScript

    import { Think } from "@cloudflare/think";
    import { pruneMessages } from "ai";

    export class MyAgent extends Think<Env> {
      beforeTurn(ctx) {
        return {
          messages: pruneMessages({
            messages: ctx.messages,
            toolCalls: "before-last-2-messages",
          }),
        };
      }
    }

`@cloudflare/voice` adds an `enabled` option to `useVoiceAgent`. React apps can now delay creating and connecting a `VoiceClient` until prerequisites such as capability tokens are ready.

JavaScript / TypeScript

    const voice = useVoiceAgent({
      agent: "MyVoiceAgent",
      enabled: Boolean(token),
    });

This release also fixes Workers AI speech-to-text session edge cases and `withVoice` text streaming from AI SDK `textStream` responses.

- Streamable HTTP routing: Server-to-client requests now route through the originating POST stream when no standalone SSE stream is available.

- Structured tool output: Tool output shapes are preserved when truncating older messages or oversized persisted rows.

- Non-chat Think tool steps: Think agent-tool children can complete without emitting assistant text and can return structured output through `getAgentToolOutput`.

- Sub-agent schedules: Stale sub-agent schedule rows are pruned when their owning facet registry entry no longer exists.

- `@cloudflare/codemode`: Adds a browser-safe export with an iframe sandbox executor and resolves OpenAPI specs inside the sandbox to avoid Worker Loader RPC size limits.

To update to the latest version:

    npm i agents@latest @cloudflare/ai-chat@latest @cloudflare/think@latest @cloudflare/voice@latest

Refer to the Agents API reference and Chat agents documentation for more information.

Cloudflare has updated Logpush datasets:

- Email Security Post-Delivery Events: A new dataset with fields including `AlertID`, `CompletedAt`, `Destination`, `FinalDisposition`, `Folder`, `From`, `FromName`, `MessageID`, `MessageTimestamp`, `MicrosoftTenantID`, `Operation`, `PostfixID`, `Reasons`, `Recipient`, `RequestedAt`, `RequestedBy`, `RequestedDisposition`, `Status`, `Subject`, `Success`, and `To`.

- Magic Network Monitoring Flow Logs: A new dataset with fields including `AWSVPCFlowJSON`, `Bits`, `DestinationAS`, `DestinationAddress`, `DestinationPort`, `DeviceID`, `EgressBits`, `EgressPackets`, `Ethertype`, `FlowProtocol`, `FlowTimestamp`, `NumFlows`, `PacketID`, `Packets`, `Protocol`, `RuleIDs`, `SampleRate`, `SampleRateType`, `SamplerAddress`, `SourceAS`, `SourceAddress`, `SourcePort`, `TcpFlags`, and `Timestamp`.

- Firewall events (added): `AISecurityInjectionScore`, `AISecurityPIICategories`, `AISecurityTokenCount`, and `AISecurityUnsafeTopicCategories`.

- HTTP requests (added): `AISecurityInjectionScore`, `AISecurityPIICategories`, `AISecurityTokenCount`, `AISecurityUnsafeTopicCategories`, and `Subrequests`.

For the complete field definitions for each dataset, refer to Logpush datasets.


The `/cdn-cgi/rum` beacon endpoint now returns `405 Method Not Allowed` for non-POST requests instead of `404 Not Found`. The response includes an `Allow: POST, OPTIONS` header per RFC 9110 §15.5.6.

Previously, sending a `GET` or other non-POST request to this endpoint returned a `404`, which was misleading because it suggested the endpoint did not exist. The new `405` response clearly indicates that the endpoint exists but only accepts `POST` requests.

The Web Analytics beacon (`beacon.min.js`) already uses `POST` for all metric submissions, so this change does not affect normal beacon operation. `OPTIONS` requests for CORS preflight continue to work as before.

For more information, refer to the Web Analytics FAQ.
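The method check described above can be pictured as follows. This is a sketch of the observable behavior only, not Cloudflare's implementation: the `BeaconResponse` shape and the `respondToBeaconMethod` helper are hypothetical, and handling of accepted methods is left out because the changelog does not describe it.

```typescript
interface BeaconResponse {
  status: number;
  headers: Record<string, string>;
}

// Returns the rejection response for a disallowed method, or null when the
// method is accepted (POST for metrics, OPTIONS for CORS preflight) and is
// handled elsewhere.
function respondToBeaconMethod(method: string): BeaconResponse | null {
  const normalized = method.toUpperCase();
  if (normalized === "POST" || normalized === "OPTIONS") {
    return null;
  }
  // RFC 9110 requires a 405 response to list the supported methods in Allow.
  return { status: 405, headers: { Allow: "POST, OPTIONS" } };
}
```

Clients that probe the endpoint with `GET` can read the `Allow` header to learn which methods are supported.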

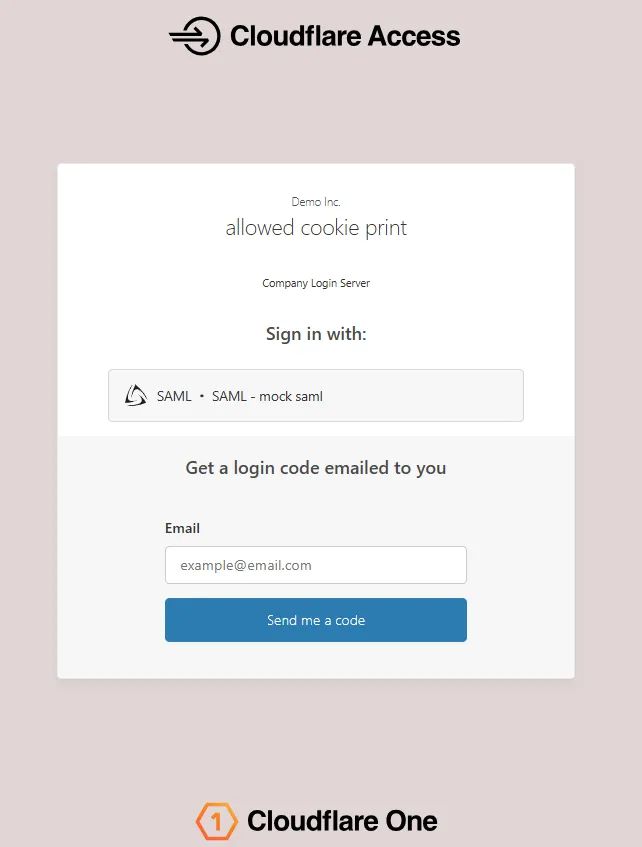

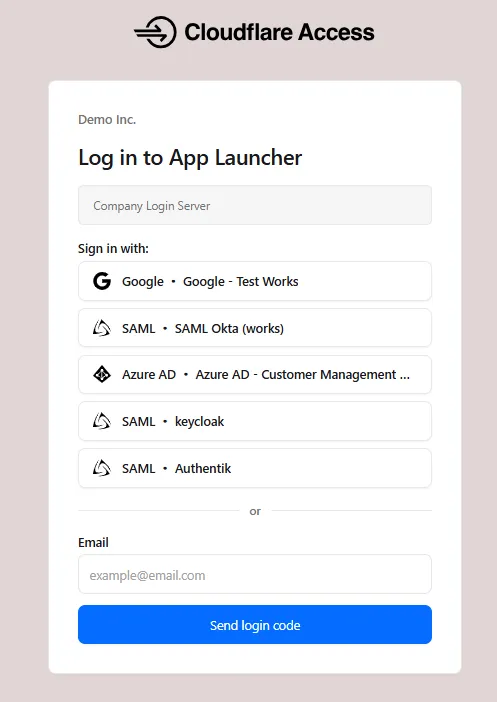

The Access login page and one-time password (OTP) page now feature a refreshed design that improves visual consistency, user trust, and mobile responsiveness.

Before:

After:

The updated login experience includes:

- Unified authentication card - All sign-in options (identity provider buttons, email input, OTP) now appear in a single card with consistent styling, replacing the previous multi-section layout.

- Consistent button styling - Identity provider buttons use a uniform size and layout for easier scanning and selection.

- Better mobile experience - Responsive layout improvements ensure the login page renders correctly on phones and tablets.

- Dark mode support - The login page now supports dark mode.

New Magic Transit and Cloudflare WAN accounts are now assigned a single IPv4 anycast address by default.

Cloudflare handles failures on its network automatically by advertising your endpoint IP from multiple nodes across many globally distributed data centers. To handle failures on your network, configure two tunnels from separate routers.

To request additional anycast IP addresses for your account, contact your account team.

For tunnel configuration guidance, refer to Configure tunnel endpoints for Cloudflare WAN or Configure tunnel endpoints for Magic Transit.

SSH through Wrangler is now enabled by default for Containers. Previously, you had to set `ssh.enabled` to `true` in your Container configuration before you could connect.

This change does not expose any publicly accessible ports on your Container. The SSH service is reachable only through `wrangler containers ssh`, which authenticates against your Cloudflare account. You also need to add an `ssh-ed25519` public key to `authorized_keys` before anyone can connect, so enabling SSH alone does not grant access.

To connect, add a public key to your Container configuration and run `wrangler containers ssh <INSTANCE_ID>`:

JSONC

    {
      "containers": [
        {
          "authorized_keys": [
            {
              "name": "<NAME>",
              "public_key": "<YOUR_PUBLIC_KEY_HERE>",
            },
          ],
        },
      ],
    }

TOML

    [[containers]]
    [[containers.authorized_keys]]
    name = "<NAME>"
    public_key = "<YOUR_PUBLIC_KEY_HERE>"

To disable SSH, set `ssh.enabled` to `false` in your Container configuration:

JSONC

    {
      "containers": [
        {
          "ssh": {
            "enabled": false,
          },
        },
      ],
    }

TOML

    [[containers]]
    [containers.ssh]
    enabled = false

For more information, refer to the SSH documentation.

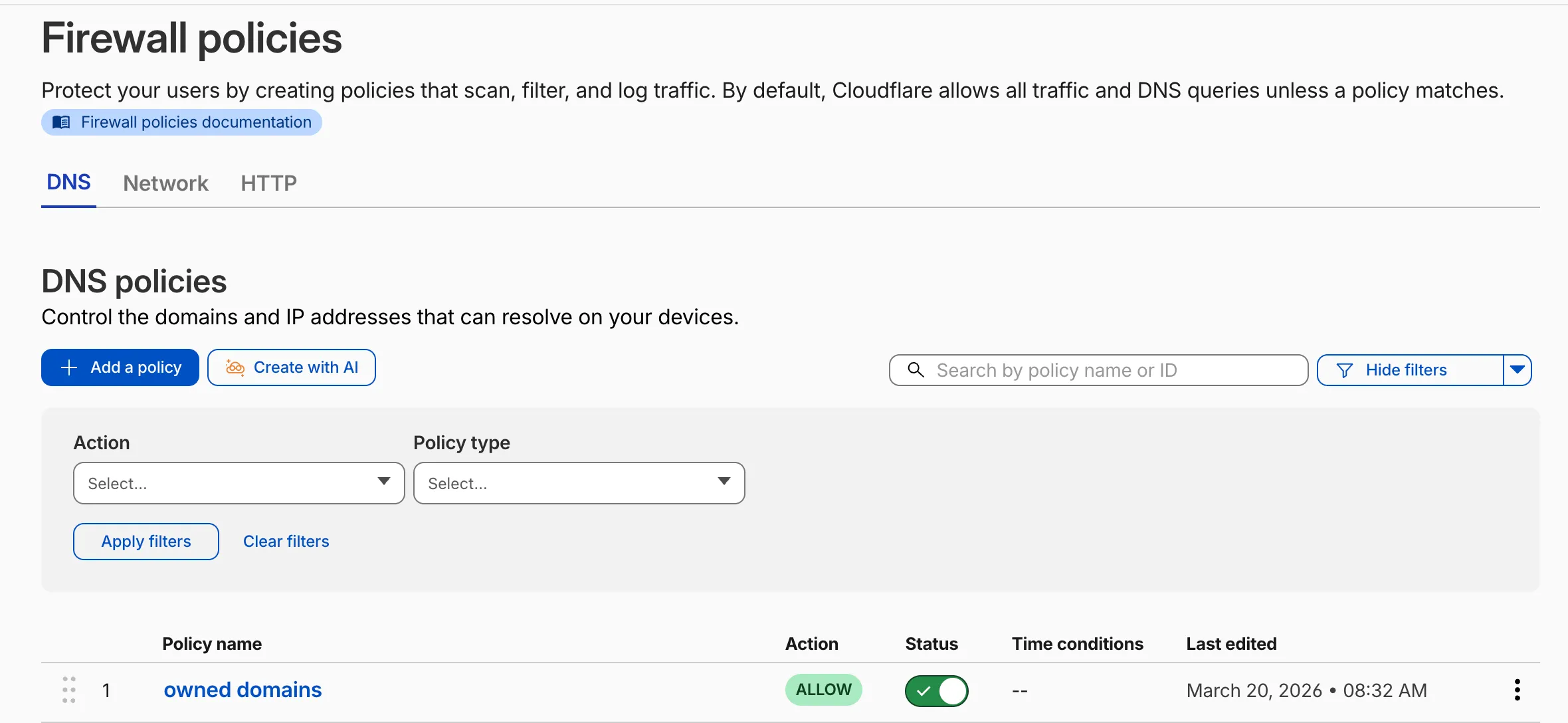

Cloudflare Gateway now supports natural language policy creation for DNS, HTTP, and Network firewall policies. Administrators can describe the outcome they want in plain language, and Cloudflare will generate a complete policy rule that populates the policy builder form.

To create a policy with natural language, select Create with AI on any Gateway firewall policy tab. Choose a policy type, describe what the policy should do, and a fully configured rule will appear in the policy builder for review. You can edit any field before saving, or re-generate with a different prompt.

The generated policy incorporates your account context - including lists, DLP profiles, applications, and device posture checks - so that references to your existing resources resolve automatically.

A built-in feedback mechanism allows you to rate each generated policy and provide optional comments, which Cloudflare uses to improve output quality over time.

For more information, refer to Gateway firewall policies.

R2 Data Catalog is a managed Apache Iceberg data catalog built directly into your R2 bucket that allows you to connect query engines like R2 SQL, Spark, Snowflake, and DuckDB to your data in R2.

You can now query analytics for your R2 Data Catalog warehouses via Cloudflare's GraphQL Analytics API. Two new datasets are available:

- `r2CatalogDataOperationsAdaptiveGroups` tracks Iceberg REST API requests made to your catalog, including operation type, request duration, HTTP status, and request body bytes. Use this to monitor request volume and latency across warehouses, namespaces, and tables.

- `r2CatalogTableMaintenanceAdaptiveGroups` tracks table maintenance jobs such as compaction and snapshot expiration. Use this to monitor job success rates, files processed, bytes read and written, and job duration.

Both datasets support filtering by warehouse name, namespace, table name, and time range. They also include percentile aggregations for duration metrics.

For detailed schema information and example queries, refer to the R2 Data Catalog metrics and analytics documentation.

We’ve added a new Agent Readiness tab to URL Scanner reports accessible via the Cloudflare dashboard. This feature evaluates your site against emerging AI standards and provides six specialized scores to help you optimize for the next generation of AI agents and automated discovery.

The Internet is shifting from a human-read web to a machine-read web. AI agents now browse, interact with, and even perform transactions on websites. If a site isn't "agent-ready," these bots may consume excessive bandwidth, fail to find critical information, or be unable to navigate your services efficiently.

The tab breaks readiness down into six actionable categories:

- Basic Web Presence

- Discoverability

- Content Accessibility

- Bot Access Control

- Protocol Discovery

- Commerce

You can view these scores for any scanned URL directly in the dashboard or via our API.

- Dashboard: Go to Protect & Connect > Application Security > Investigate. After running a scan, select the Agent Readiness tab in the report.

- API: Use the URL Scanner API ↗ to programmatically retrieve these scores for your infrastructure.

To learn more about the methodology behind these scores, refer to the blog post.

A new GA release for the Windows Cloudflare One Client is now available on the stable releases downloads page.

This release introduces the new Cloudflare One Client UI for Windows! You can expect a cleaner and more intuitive design as well as easier access to common actions and information. Here are some of the many things we have found our users appreciate:

- Right click context menu to access the most common client actions quickly

- Built-in captive portal login experience

Additional Changes and improvements

- Added a new CLI command: warp-cli mdm refresh. This command executes an immediate refresh of the Mobile Device Management (MDM) configuration file.

Known issues

- Registration authentication for devices via the integrated WebView2 browser is unavailable in this version as a temporary measure. As a result, the client will utilize the default browser on the device to complete the authentication process.

- An error indicating that Microsoft Edge can't read and write to its data directory may be displayed during captive portal login; this error is benign and can be dismissed.

- Registration may hang at "Checking your organization configuration" due to IPC errors. A system reboot should resolve the error, allowing registration to proceed.

- Split tunnel list configuration is not available in the new UI. Management of Split Tunnel entries is currently only possible via `warp-cli tunnel ip` and `warp-cli tunnel host`. UI support will be added in a future release.

- Windows ARM may prompt the user to close running applications while trying to install this version. Select "Ok" with the default highlighted option.

- DNS resolution may be broken when the following conditions are all true:

  - The client is in Secure Web Gateway without DNS filtering (tunnel-only) mode.

  - A custom DNS server address is configured on the primary network adapter.

  - The custom DNS server address on the primary network adapter is changed while the client is connected.

  To work around this issue, reconnect the client by selecting "disconnect" and then "connect" in the client user interface.