
New updates and improvements at Cloudflare.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-3 week cadence to ensure its stability and reliability, including the v5.15 release. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach: we will focus on specific resources, not only to stabilize each resource but also to ensure it is migration-friendly for those migrating from v4 to v5.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes bug fixes, the stabilization of even more popular resources, and more.

- startup_time_ms (286ab55)
- upload_status (7dc0fe3)
- forensic_copy (5741fd0)

We suggest waiting to migrate to v5 while we work on stabilization. This helps avoid blocking issues while the Terraform resources are actively being stabilized. We will be releasing a new migration tool in March 2026 to help support v4 to v5 transitions for our most popular resources.

TanStack Start apps can now prerender routes to static HTML at build time, with access to build-time environment variables and bindings, and serve them as static assets. To enable prerendering, configure the prerender option of the TanStack Start plugin in your Vite config:

    import { defineConfig } from "vite";
    import { cloudflare } from "@cloudflare/vite-plugin";
    import { tanstackStart } from "@tanstack/react-start/plugin/vite";

    export default defineConfig({
      plugins: [
        cloudflare({ viteEnvironment: { name: "ssr" } }),
        tanstackStart({
          prerender: {
            enabled: true,
          },
        }),
      ],
    });

This feature requires @tanstack/react-start v1.138.0 or later. See the TanStack Start framework guide for more details.

R2 Data Catalog now supports automatic snapshot expiration for Apache Iceberg tables.

In Apache Iceberg, a snapshot is metadata that represents the state of a table at a given point in time. Every mutation creates a new snapshot. Snapshots enable powerful features like time travel queries and rollback, but they accumulate over time.

Without regular cleanup, these accumulated snapshots can lead to:

Snapshot expiration in R2 Data Catalog automatically removes old table snapshots based on your configured retention policy, improving performance and reducing storage costs.

    # Enable catalog-level snapshot expiration
    # Expire snapshots older than 7 days, always retain at least 10 recent snapshots
    npx wrangler r2 bucket catalog snapshot-expiration enable my-bucket \
      --older-than-days 7 \
      --retain-last 10

Snapshot expiration uses two parameters to determine which snapshots to remove:

- --older-than-days: age threshold in days
- --retain-last: minimum snapshot count to retain

Both conditions must be met before a snapshot is expired, ensuring you always retain recent snapshots even if they exceed the age threshold.
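As a rough illustration of how the two parameters combine, here is a minimal TypeScript sketch. It is not R2 Data Catalog's implementation: the `Snapshot` shape, the `committedAt` field, and the `selectExpired` function are hypothetical names chosen for this example. A snapshot is selected only if it falls outside the newest `retainLast` snapshots and is also older than the age threshold.

```typescript
// Illustrative only: mirrors the rule described above, not the service's actual code.
interface Snapshot {
  id: number;
  committedAt: Date; // hypothetical field name
}

function selectExpired(
  snapshots: Snapshot[],
  olderThanDays: number,
  retainLast: number,
  now: Date,
): Snapshot[] {
  const cutoff = now.getTime() - olderThanDays * 24 * 60 * 60 * 1000;
  // Sort newest first so the first `retainLast` entries are always protected.
  const newestFirst = [...snapshots].sort(
    (a, b) => b.committedAt.getTime() - a.committedAt.getTime(),
  );
  // Of the remaining snapshots, expire only those older than the cutoff.
  return newestFirst
    .slice(retainLast)
    .filter((s) => s.committedAt.getTime() < cutoff);
}

// Twelve daily snapshots: id 0 is from today, id 11 is eleven days old.
const now = new Date("2026-01-15T00:00:00Z");
const daily: Snapshot[] = Array.from({ length: 12 }, (_, i) => ({
  id: i,
  committedAt: new Date(now.getTime() - i * 24 * 60 * 60 * 1000),
}));

// With --older-than-days 7 and --retain-last 10, only ids 10 and 11 qualify.
console.log(selectExpired(daily, 7, 10, now).map((s) => s.id)); // [ 10, 11 ]
```

Snapshots that are eight or nine days old exceed the age threshold here, but they survive because they are among the ten most recent.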

This feature complements automatic compaction, which optimizes query performance by combining small data files into larger ones. Together, these automatic maintenance operations keep your Iceberg tables performant and cost-efficient without manual intervention.

To learn more about snapshot expiration and how to configure it, visit our table maintenance documentation or see how to manage catalogs.

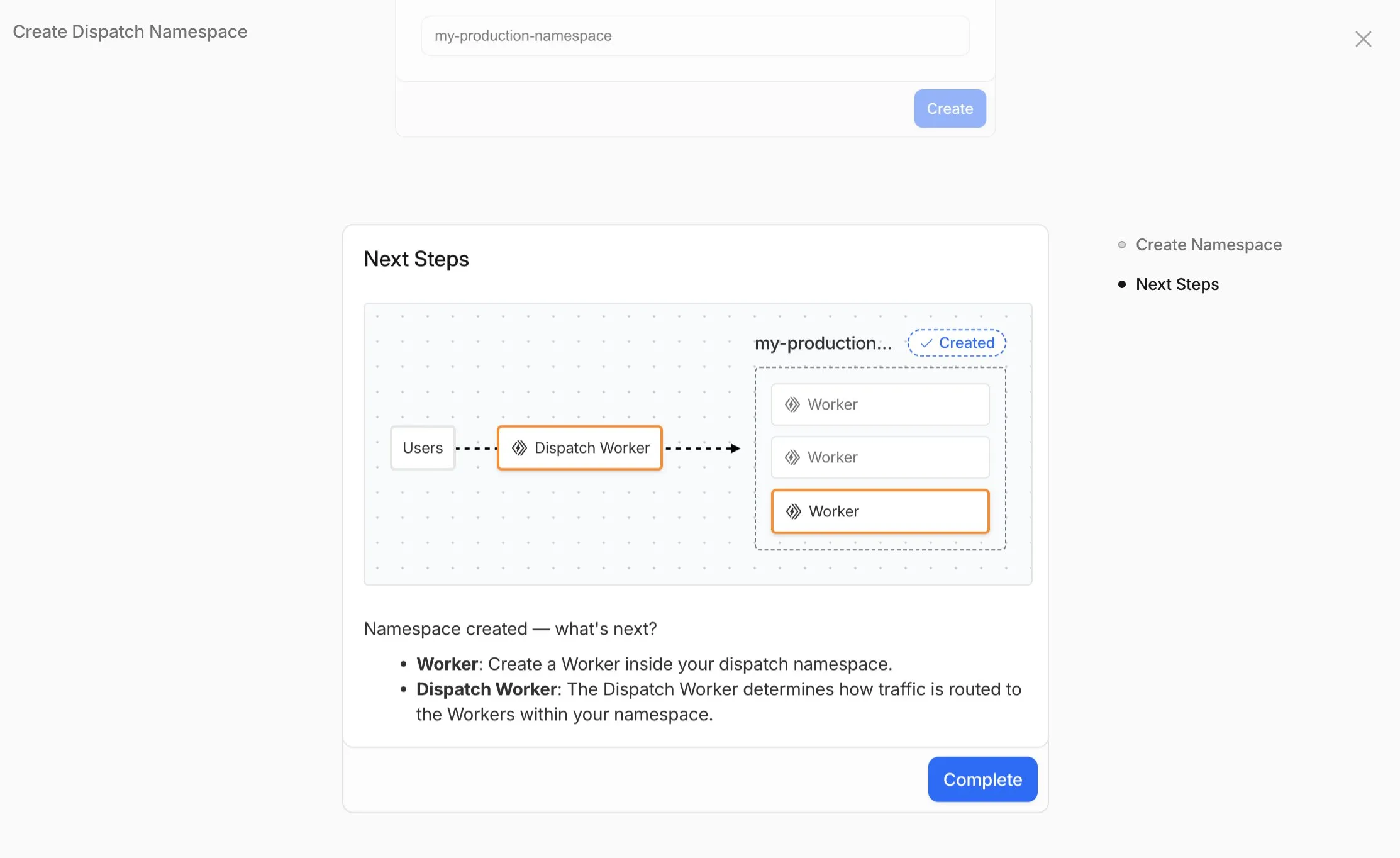

Workers for Platforms lets you build multi-tenant platforms on Cloudflare Workers, allowing your end users to deploy and run their own code on your platform. It's designed for anyone building an AI vibe coding platform, e-commerce platform, website builder, or any product that needs to securely execute user-generated code at scale.

Previously, setting up Workers for Platforms required using the API. Now, the Workers for Platforms UI supports namespace creation, dispatch worker templates, and tag management, making it easier for Workers for Platforms customers to build and manage multi-tenant platforms directly from the Cloudflare dashboard.

To get started, go to Workers for Platforms under Compute & AI in the Cloudflare dashboard ↗.

We've published build image policies for Workers Builds and Cloudflare Pages, which establish:

To prepare for updates, monitor the Cloudflare Changelog ↗, dashboard notifications, and email. You can also override default versions to maintain specific versions.

Wrangler now includes a new wrangler auth token command that retrieves your current authentication token or credentials for use with other tools and scripts.

    wrangler auth token

The command returns whichever authentication method is currently configured, in priority order: API token from CLOUDFLARE_API_TOKEN, or OAuth token from wrangler login (automatically refreshed if expired).

Use the --json flag to get structured output including the token type:

    wrangler auth token --json

The JSON output includes the authentication type:

    // API token
    { "type": "api_token", "token": "..." }

    // OAuth token
    { "type": "oauth", "token": "..." }

    // API key/email (only available with --json)
    { "type": "api_key", "key": "...", "email": "..." }

API key/email credentials from CLOUDFLARE_API_KEY and CLOUDFLARE_EMAIL require the --json flag since this method uses two values instead of a single token.
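If you consume the `--json` output from a script, the three shapes map naturally onto a discriminated union. The sketch below is one possible way to turn the parsed output into request headers for the Cloudflare API; the `WranglerAuth` type and `toAuthHeaders` helper are hypothetical names for this example and are not part of Wrangler. It assumes bearer authentication for tokens and the `X-Auth-Key` / `X-Auth-Email` header pair for API key credentials.

```typescript
// The three shapes shown in the JSON output above.
type WranglerAuth =
  | { type: "api_token"; token: string }
  | { type: "oauth"; token: string }
  | { type: "api_key"; key: string; email: string };

// Hypothetical helper: build Cloudflare API request headers from the parsed output.
function toAuthHeaders(auth: WranglerAuth): Record<string, string> {
  switch (auth.type) {
    case "api_token":
    case "oauth":
      // Both token types are sent as a bearer token.
      return { Authorization: `Bearer ${auth.token}` };
    case "api_key":
      // API key credentials need two headers, which is why they require --json.
      return { "X-Auth-Key": auth.key, "X-Auth-Email": auth.email };
  }
}

// Example with placeholder values standing in for real `wrangler auth token --json` output.
const parsed = JSON.parse(
  '{ "type": "api_key", "key": "example-key", "email": "me@example.com" }',
) as WranglerAuth;
console.log(toAuthHeaders(parsed));
```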

The @cloudflare/vitest-pool-workers package now supports the ctx.exports API, allowing you to access your Worker's top-level exports during tests.

You can access ctx.exports in unit tests by calling createExecutionContext():

    import { createExecutionContext } from "cloudflare:test";
    import { it, expect } from "vitest";

    it("can access ctx.exports", async () => {
      const ctx = createExecutionContext();
      const result = await ctx.exports.MyEntryPoint.myMethod();
      expect(result).toBe("expected value");
    });

Alternatively, you can import exports directly from cloudflare:workers:

    import { exports } from "cloudflare:workers";
    import { it, expect } from "vitest";

    it("can access imported exports", async () => {
      const result = await exports.MyEntryPoint.myMethod();
      expect(result).toBe("expected value");
    });

See the context-exports fixture for a complete example.

Wrangler now supports automatic configuration for popular web frameworks in experimental mode, making it even easier to deploy to Cloudflare Workers.

Previously, if you wanted to deploy an application using a popular web framework like Next.js or Astro, you had to follow tutorials to set up your application for deployment to Cloudflare Workers. This usually involved creating a Wrangler file, installing adapters, or changing configuration options.

Now wrangler deploy does this for you. Starting with Wrangler 4.55, you can use npx wrangler deploy --x-autoconfig in the directory of any web application using one of the supported frameworks. Wrangler will then proceed to configure and deploy it to your Cloudflare account.

You can also configure your application without deploying it by using the new npx wrangler setup command. This enables you to easily review what changes we are making so your application is ready for Cloudflare Workers.

The following application frameworks are supported starting today:

Automatic configuration also supports static sites by detecting the assets directory and build command. From a single index.html file to the output of a generator like Jekyll or Hugo, you can just run npx wrangler deploy --x-autoconfig to upload to Cloudflare.

We're really excited to bring you automatic configuration so you can do more with Workers. Please let us know if you run into challenges using this experimentally. We’ve opened a GitHub discussion ↗ and would love to hear your feedback.

A new Rules of Durable Objects guide is now available, providing opinionated best practices for building effective Durable Objects applications. This guide covers design patterns, storage strategies, concurrency, and common anti-patterns to avoid.

Key guidance includes:

blockConcurrencyWhile().

The testing documentation has also been updated with modern patterns using @cloudflare/vitest-pool-workers, including examples for testing SQLite storage, alarms, and direct instance access:

    import { env, runDurableObjectAlarm } from "cloudflare:test";
    import { it, expect } from "vitest";

    it("can test Durable Objects with isolated storage", async () => {
      const stub = env.COUNTER.getByName("test");

      // Call RPC methods directly on the stub
      await stub.increment();
      expect(await stub.getCount()).toBe(1);

      // Trigger alarms immediately without waiting
      await runDurableObjectAlarm(stub);
    });

Storage billing for SQLite-backed Durable Objects will be enabled in January 2026, with a target date of January 7, 2026 (no earlier).

To view your SQLite storage usage, go to the Durable Objects page.

If you do not want to incur costs, take action such as optimizing queries or deleting unnecessary stored data to reduce your SQLite storage usage ahead of the January 7th target. Only usage on and after the billing target date will incur charges.

Developers on the Workers Paid plan whose Durable Objects SQLite storage usage exceeds the included limits will incur charges according to the SQLite storage pricing announced in September 2024 with the public beta. Developers on the Workers Free plan will not be charged.

Compute billing for SQLite-backed Durable Objects has been enabled since the initial public beta. SQLite-backed Durable Objects currently incur charges for requests and duration, and no changes are being made to compute billing.

For more information about SQLite storage pricing and limits, refer to the Durable Objects pricing documentation.

R2 SQL now supports aggregation functions, GROUP BY, and HAVING, along with schema discovery commands that make it easy to explore your data catalog.

You can now perform aggregations on Apache Iceberg tables in R2 Data Catalog using standard SQL functions including COUNT(*), SUM(), AVG(), MIN(), and MAX(). Combine these with GROUP BY to analyze data across dimensions, and use HAVING to filter aggregated results.
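For readers less familiar with how WHERE, GROUP BY, HAVING, and ORDER BY interact, the following TypeScript sketch reproduces the same steps in memory. It is only an illustration of the semantics, not how R2 SQL executes queries; the `Sale` shape, the `summarize` function, and the sample rows are made up for this example.

```typescript
interface Sale {
  department: string;
  region: string;
  total_amount: number;
}

interface DeptSummary {
  department: string;
  count: number;
  avg: number;
}

// In-memory equivalent of:
//   SELECT department, COUNT(*), AVG(total_amount) FROM sales
//   WHERE region = <region> GROUP BY department
//   HAVING COUNT(*) > <minCount> ORDER BY AVG(total_amount) DESC
function summarize(rows: Sale[], region: string, minCount: number): DeptSummary[] {
  const groups = new Map<string, { count: number; sum: number }>();
  for (const row of rows) {
    if (row.region !== region) continue; // WHERE filters rows before grouping
    const g = groups.get(row.department) ?? { count: 0, sum: 0 };
    g.count += 1;
    g.sum += row.total_amount;
    groups.set(row.department, g); // GROUP BY accumulates per department
  }
  return [...groups.entries()]
    .filter(([, g]) => g.count > minCount) // HAVING filters groups after aggregation
    .map(([department, g]) => ({ department, count: g.count, avg: g.sum / g.count }))
    .sort((a, b) => b.avg - a.avg); // ORDER BY AVG(...) DESC
}

// Made-up sample rows for illustration.
const sales: Sale[] = [
  { department: "Toys", region: "North", total_amount: 10 },
  { department: "Toys", region: "North", total_amount: 30 },
  { department: "Books", region: "North", total_amount: 50 },
  { department: "Books", region: "South", total_amount: 5 },
];

// Only Toys has more than one sale in the North region.
console.log(summarize(sales, "North", 1)); // [ { department: 'Toys', count: 2, avg: 20 } ]
```

The key distinction is ordering: WHERE removes individual rows before any aggregate is computed, while HAVING removes whole groups afterwards.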

    -- Calculate average transaction amounts by department
    SELECT department, COUNT(*), AVG(total_amount)
    FROM my_namespace.sales_data
    WHERE region = 'North'
    GROUP BY department
    HAVING COUNT(*) > 50
    ORDER BY AVG(total_amount) DESC

    -- Find high-value departments
    SELECT department, SUM(total_amount)
    FROM my_namespace.sales_data
    GROUP BY department
    HAVING SUM(total_amount) > 50000

New metadata commands make it easy to explore your data catalog and understand table structures:

- SHOW DATABASES or SHOW NAMESPACES - List all available namespaces
- SHOW TABLES IN namespace_name - List tables within a namespace
- DESCRIBE namespace_name.table_name - View table schema and column types

    ❯ npx wrangler r2 sql query "{ACCOUNT_ID}_{BUCKET_NAME}" "DESCRIBE default.sales_data;"

    ⛅️ wrangler 4.54.0

| column_name | type | required | initial_default | write_default | doc |
|---|---|---|---|---|---|
| sale_id | BIGINT | false | | | Unique identifier for each sales transaction |
| sale_timestamp | TIMESTAMPTZ | false | | | Exact date and time when the sale occurred (used for partitioning) |
| department | TEXT | false | | | Product department (8 categories: Electronics, Beauty, Home, Toys, Sports, Food, Clothing, Books) |
| category | TEXT | false | | | Product category grouping (4 categories: Premium, Standard, Budget, Clearance) |
| region | TEXT | false | | | Geographic sales region (5 regions: North, South, East, West, Central) |
| product_id | INT | false | | | Unique identifier for the product sold |
| quantity | INT | false | | | Number of units sold in this transaction (range: 1-50) |
| unit_price | DECIMAL(10, 2) | false | | | Price per unit in dollars (range: $5.00-$500.00) |
| total_amount | DECIMAL(10, 2) | false | | | Total sale amount before tax (quantity × unit_price with discounts applied) |
| discount_percent | INT | false | | | Discount percentage applied to this sale (0-50%) |
| tax_amount | DECIMAL(10, 2) | false | | | Tax amount collected on this sale |
| profit_margin | DECIMAL(10, 2) | false | | | Profit margin on this sale as a decimal percentage |
| customer_id | INT | false | | | Unique identifier for the customer who made the purchase |
| is_online_sale | BOOLEAN | false | | | Boolean flag indicating if sale was made online (true) or in-store (false) |
| sale_date | DATE | false | | | Calendar date of the sale (extracted from sale_timestamp) |

    Read 0 B across 0 files from R2
    On average, 0 B / s

To learn more about the new aggregation capabilities and schema discovery commands, check out the SQL reference. If you're new to R2 SQL, visit our getting started guide to begin querying your data.

Python Workers now feature improved cold start performance, reducing initialization time for new Worker instances. This improvement is particularly noticeable for Workers with larger dependency sets or complex initialization logic.

Every time you deploy a Python Worker, a memory snapshot is captured after the top level of the Worker is executed. This snapshot captures all imports, including package imports that are often costly to load. The memory snapshot is loaded when the Worker is first started, avoiding the need to reload the Python runtime and all dependencies on each cold start.

We set up a benchmark that imports common packages (httpx ↗, fastapi ↗ and pydantic ↗) to see how Python Workers stack up against other platforms:

| Platform | Mean Cold Start (ms) |
|---|---|
| Cloudflare Python Workers | 1027 |
| AWS Lambda | 2502 |
| Google Cloud Run | 3069 |

These benchmarks run continuously. You can view the results and the methodology on our benchmark page ↗.

In additional testing, we have found that without any memory snapshot, the cold start for this benchmark takes around 10 seconds, so this change improves cold start performance by roughly a factor of 10.

To get started with Python Workers, check out our Python Workers overview.

We are introducing a brand new tool called Pywrangler, which simplifies package management in Python Workers by automatically installing Workers-compatible Python packages into your project.

With Pywrangler, you specify your Worker's Python dependencies in your pyproject.toml file:

    [project]
    name = "python-beautifulsoup-worker"
    version = "0.1.0"
    description = "A simple Worker using beautifulsoup4"
    requires-python = ">=3.12"
    dependencies = [
      "beautifulsoup4"
    ]

    [dependency-groups]
    dev = [
      "workers-py",
      "workers-runtime-sdk"
    ]

You can then develop and deploy your Worker using the following commands:

    uv run pywrangler dev
    uv run pywrangler deploy

Pywrangler automatically downloads and vendors the necessary packages for your Worker, and these packages are bundled with the Worker when you deploy.

Consult the Python packages documentation for full details on Pywrangler and Python package management in Workers.

When using the Cloudflare Vite plugin to build and deploy Workers, a Wrangler configuration file is now optional for assets-only (static) sites. If no wrangler.toml, wrangler.json, or wrangler.jsonc file is found, the plugin generates sensible defaults for an assets-only site. The name is based on the package.json or the project directory name, and the compatibility_date uses the latest date supported by your installed Miniflare version.

This allows easier setup for static sites using Vite. Note that SPAs will still need to set assets.not_found_handling to single-page-application ↗ in order to function correctly.

The Cloudflare Vite plugin now supports programmatic configuration of Workers without a Wrangler configuration file. You can use the config option to define Worker settings directly in your Vite configuration, or to modify existing configuration loaded from a Wrangler config file. This is particularly useful when integrating with other build tools or frameworks, as it allows them to control Worker configuration without needing users to manage a separate config file.

The Vite plugin's new config option accepts either a partial configuration object or a function that receives the current configuration and returns overrides. This option is applied after any config file is loaded, allowing the plugin to override specific values or define Worker configuration entirely in code.
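To make the "object or function" contract concrete, here is a simplified TypeScript sketch of how such an option could be applied to an already loaded configuration. This is not the plugin's implementation: `WorkerConfig`, `ConfigOption`, and `applyConfigOption` are hypothetical names, and the shallow merge is an assumption made for brevity, so the plugin's real merge rules may differ.

```typescript
// Hypothetical, simplified config shape; the real plugin types are richer.
type WorkerConfig = {
  name?: string;
  main?: string;
  compatibility_flags?: string[];
};

type ConfigOption =
  | Partial<WorkerConfig>
  | ((current: WorkerConfig) => Partial<WorkerConfig> | void);

function applyConfigOption(loaded: WorkerConfig, option?: ConfigOption): WorkerConfig {
  // Work on a copy so the loaded config object itself is not modified.
  const current: WorkerConfig = { ...loaded };
  if (option === undefined) return current;

  // A function receives the current config; it may mutate it, return overrides, or both.
  const overrides = (typeof option === "function" ? option(current) : option) as
    | Partial<WorkerConfig>
    | undefined;

  // Assumption: a shallow merge where override values win.
  return { ...current, ...overrides };
}

const fromFile: WorkerConfig = { name: "my-worker", compatibility_flags: ["legacy_flag"] };

// Object form: values are merged over what was loaded.
console.log(applyConfigOption(fromFile, { compatibility_flags: ["nodejs_compat"] }));

// Function form: mutate in place and return nothing.
console.log(
  applyConfigOption(fromFile, (cfg) => {
    delete cfg.compatibility_flags;
  }),
);
```

Accepting both forms lets simple projects pass static values while frameworks can inspect what the user already configured before deciding what to change.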

Set config to an object to provide configuration values that merge with defaults and config file settings:

    import { defineConfig } from "vite";
    import { cloudflare } from "@cloudflare/vite-plugin";

    export default defineConfig({
      plugins: [
        cloudflare({
          config: {
            name: "my-worker",
            compatibility_flags: ["nodejs_compat"],
            send_email: [
              {
                name: "EMAIL",
              },
            ],
          },
        }),
      ],
    });

Use a function to modify the existing configuration:

    import { defineConfig } from "vite";
    import { cloudflare } from "@cloudflare/vite-plugin";

    export default defineConfig({
      plugins: [
        cloudflare({
          config: (userConfig) => {
            delete userConfig.compatibility_flags;
          },
        }),
      ],
    });

Return an object with values to merge:

    import { defineConfig } from "vite";
    import { cloudflare } from "@cloudflare/vite-plugin";

    export default defineConfig({
      plugins: [
        cloudflare({
          config: (userConfig) => {
            if (!userConfig.compatibility_flags.includes("no_nodejs_compat")) {
              return { compatibility_flags: ["nodejs_compat"] };
            }
          },
        }),
      ],
    });

Auxiliary Workers also support the config option, enabling multi-Worker architectures without config files.

Define auxiliary Workers without config files using config inside the auxiliaryWorkers array:

    import { defineConfig } from "vite";
    import { cloudflare } from "@cloudflare/vite-plugin";

    export default defineConfig({
      plugins: [
        cloudflare({
          config: {
            name: "entry-worker",
            main: "./src/entry.ts",
            services: [{ binding: "API", service: "api-worker" }],
          },
          auxiliaryWorkers: [
            {
              config: {
                name: "api-worker",
                main: "./src/api.ts",
              },
            },
          ],
        }),
      ],
    });

For more details and examples, see Programmatic configuration.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-3 week cadence to ensure its stability and reliability, including the v5.14 release. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach: we will focus on specific resources, not only to stabilize each resource but also to ensure it is migration-friendly for those migrating from v4 to v5.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes bug fixes, the stabilization of even more popular resources, and more.

Resource affected: api_shield_discovery_operation

Cloudflare continuously discovers and updates API endpoints and web assets of your web applications. To improve the maintainability of these dynamic resources, we are working on reducing the need to actively engage with discovered operations.

The corresponding public API endpoint of discovered operations ↗ is not affected and will continue to be supported.

- dlp_custom_profile
- partners_ent as valid enum for rate_plan.id (#6505)

We suggest waiting to migrate to v5 while we work on stabilization. This helps avoid blocking issues while the Terraform resources are actively being stabilized. We will be releasing a new migration tool in March 2026 to help support v4 to v5 transitions for our most popular resources.

You can now connect directly to remote databases and databases requiring TLS with wrangler dev.

This lets you run your Worker code locally while connecting to remote databases, without needing to use wrangler dev --remote.

The localConnectionString field and CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_<BINDING_NAME> environment variable can be used to configure the connection string used by wrangler dev.
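As a small illustration of the naming pattern and of what the connection string carries, the TypeScript sketch below builds the environment variable name for a binding and inspects a connection string with the standard `URL` parser. Both helper functions are hypothetical names for this example and are not part of Wrangler.

```typescript
// Builds the environment variable name for a binding, following the pattern above.
function localConnectionEnvVar(bindingName: string): string {
  return `CLOUDFLARE_HYPERDRIVE_LOCAL_CONNECTION_STRING_${bindingName}`;
}

// Hypothetical helper: report where a connection string points and which sslmode it requests.
function describeConnection(connectionString: string) {
  const url = new URL(connectionString);
  return {
    host: url.hostname,
    port: url.port,
    database: url.pathname.replace(/^\//, ""),
    sslmode: url.searchParams.get("sslmode"), // null when the parameter is absent
  };
}

console.log(localConnectionEnvVar("HYPERDRIVE"));
console.log(
  describeConnection(
    "postgres://user:password@remote-host.example.com:5432/database?sslmode=require",
  ),
);
```

A check like this can be handy in a setup script to confirm that a remote connection string requests TLS before you start `wrangler dev`.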

    {
      "hyperdrive": [
        {
          "binding": "HYPERDRIVE",
          "id": "your-hyperdrive-id",
          "localConnectionString": "postgres://user:password@remote-host.example.com:5432/database?sslmode=require"
        }
      ]
    }

Learn more about local development with Hyperdrive.

Workers applications now use reusable Cloudflare Access policies to reduce duplication and simplify access management across multiple Workers.

Previously, enabling Cloudflare Access on a Worker created per-application policies, unique to each application. Now, we create reusable policies that can be shared across applications:

Preview URLs: All Workers preview URLs share a single "Cloudflare Workers Preview URLs" policy across your account. This policy is automatically created the first time you enable Access on any preview URL. By sharing a single policy across all preview URLs, you can configure access rules once and have them apply company-wide to all Workers which protect preview URLs. This makes it much easier to manage who can access preview environments without having to update individual policies for each Worker.

Production workers.dev URLs: When enabled, each Worker gets its own reusable policy (named <worker-name> - Production) by default. We recognize production services often have different access requirements and having individual policies here makes it easier to configure service-to-service authentication or protect internal dashboards or applications with specific user groups. Keeping these policies separate gives you the flexibility to configure exactly the right access rules for each production service. When you disable Access on a production Worker, the associated policy is automatically cleaned up if it's not being used by other applications.

This change reduces policy duplication, simplifies cross-company access management for preview environments, and provides the flexibility needed for production services. You can still customize access rules by editing the reusable policies in the Zero Trust dashboard.

To enable Cloudflare Access on your Worker:

workers.dev or Preview URLs, click Enable Cloudflare Access.

For more information on configuring Cloudflare Access for Workers, refer to the Workers Access documentation.

The latest release of @cloudflare/agents ↗ brings resumable streaming, significant MCP client improvements, and critical fixes for schedules and Durable Object lifecycle management.

AIChatAgent now supports resumable streaming, allowing clients to reconnect and continue receiving streamed responses without losing data. This is useful for:

Streams are maintained across page refreshes and broken connections, and are synced across open tabs and devices.

The MCPClientManager API has been redesigned for better clarity and control:

- registerServer() method: Register MCP servers without immediately connecting
- connectToServer() method: Establish connections to registered servers
- restoreConnectionsFromStorage() now properly handles failed connections

    // Register a server to Agent
    const { id } = await this.mcp.registerServer({
      name: "my-server",
      url: "https://my-mcp-server.example.com",
    });

    // Connect when ready
    await this.mcp.connectToServer(id);

    // Discover tools, prompts and resources
    await this.mcp.discoverIfConnected(id);

The SDK now includes a formalized MCPConnectionState enum with states: idle, connecting, authenticating, connected, discovering, and ready.

MCP discovery fetches the available tools, prompts, and resources from an MCP server so your agent knows what capabilities are available. The MCPClientConnection class now includes a dedicated discover() method with improved reliability:

this.schedule(new Date(), ...) would not fire

To update to the latest version:

    npm i agents@latest

We've partnered with Black Forest Labs (BFL) to bring their latest FLUX.2 [dev] model to Workers AI! This model excels in generating high-fidelity images with physical world grounding, multi-language support, and digital asset creation. You can also create specific super images with granular controls like JSON prompting.

Read the BFL blog ↗ to learn more about the model itself. Read our Cloudflare blog ↗ to see the model in action, or try it out yourself on our multi modal playground ↗.

Pricing documentation is available on the model page or pricing page. Note, we expect to drop pricing in the next few days after iterating on the model performance.

The model hosted on Workers AI is able to support up to 4 image inputs (512x512 per input image). Note, this image model is one of the most powerful in the catalog and is expected to be slower than the other image models we currently support. One catch to look out for is that this model takes multipart form data inputs, even if you just have a prompt.

With the REST API, the multipart form data input looks like this:

    curl --request POST \
      --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-dev' \
      --header 'Authorization: Bearer {TOKEN}' \
      --header 'Content-Type: multipart/form-data' \
      --form 'prompt=a sunset at the alps' \
      --form steps=25 \
      --form width=1024 \
      --form height=1024

With the Workers AI binding, you can use it as such:

    const form = new FormData();
    form.append('prompt', 'a sunset with a dog');
    form.append('width', '1024');
    form.append('height', '1024');

    // This dummy request is a temporary hack
    // We're pushing a change to address this soon
    const formRequest = new Request('http://dummy', {
      method: 'POST',
      body: form
    });
    const formStream = formRequest.body;
    const formContentType = formRequest.headers.get('content-type') || 'multipart/form-data';

    const resp = await env.AI.run("@cf/black-forest-labs/flux-2-dev", {
      multipart: {
        body: formStream,
        contentType: formContentType
      }
    });

The parameters you can send to the model are detailed here:

- prompt (string) - Text description of the image to generate

Optional Parameters

- input_image_0 (string) - Binary image
- input_image_1 (string) - Binary image
- input_image_2 (string) - Binary image
- input_image_3 (string) - Binary image
- steps (integer) - Number of inference steps. Higher values may improve quality but increase generation time
- guidance (float) - Guidance scale for generation. Higher values follow the prompt more closely
- width (integer) - Width of the image, default 1024. Range: 256-1920
- height (integer) - Height of the image, default 768. Range: 256-1920
- seed (integer) - Seed for reproducibility

## Multi-Reference Images

The FLUX.2 model is great at generating images based on reference images. You can use this feature to apply the style of one image to another, add a new character to an image, or iterate on previously generated images. You would use it with the same multipart form data structure, with the input images in binary.

For the prompt, you can reference the images based on the index, like `take the subject of image 1 and style it like image 0` or even use natural language like `place the dog beside the woman`.

Note: you have to name the input parameter as `input_image_0`, `input_image_1`, `input_image_2` for it to work correctly. All input images must be smaller than 512x512.
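Since the field names and limits are easy to get wrong, a small helper can assemble the form for you. The TypeScript sketch below is one possible approach: `buildFluxForm` is a hypothetical name, and it assumes the limits stated in this post (up to 4 input images, width and height within 256-1920, treated here as inclusive). It does not check image dimensions.

```typescript
const MAX_INPUT_IMAGES = 4;

// Hypothetical helper: assemble the multipart fields described above.
function buildFluxForm(
  prompt: string,
  images: Blob[] = [],
  size: { width?: number; height?: number } = {},
): FormData {
  if (images.length > MAX_INPUT_IMAGES) {
    throw new RangeError(`At most ${MAX_INPUT_IMAGES} input images are supported`);
  }
  for (const [name, value] of Object.entries(size)) {
    // Assumption: the documented 256-1920 range is inclusive at both ends.
    if (value !== undefined && (value < 256 || value > 1920)) {
      throw new RangeError(`${name} must be between 256 and 1920`);
    }
  }

  const form = new FormData();
  form.append("prompt", prompt);
  // Field names must be input_image_0, input_image_1, ... in index order.
  images.forEach((image, i) => form.append(`input_image_${i}`, image));
  if (size.width !== undefined) form.append("width", String(size.width));
  if (size.height !== undefined) form.append("height", String(size.height));
  return form;
}

// Example with placeholder image data standing in for real PNG bytes.
const styleRef = new Blob([new Uint8Array([1, 2, 3])], { type: "image/png" });
const subject = new Blob([new Uint8Array([4, 5, 6])], { type: "image/png" });
const fluxForm = buildFluxForm(
  "take the subject of image 1 and style it like image 0",
  [styleRef, subject],
  { width: 1024, height: 1024 },
);
console.log(fluxForm.has("input_image_0"), fluxForm.has("input_image_1"));
```

The resulting `FormData` can then be sent with the REST API or passed through the Workers AI binding in the same way as the other examples in this post.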

```bash
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-dev' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=take the subject of image 1 and style it like image 0' \
  --form input_image_0=@/Users/johndoe/Desktop/icedoutkeanu.png \
  --form input_image_1=@/Users/johndoe/Desktop/me.png \
  --form steps=25 \
  --form width=1024 \
  --form height=1024
```

Through Workers AI Binding:

```ts
// Helper function to convert a ReadableStream to a Blob
async function streamToBlob(stream: ReadableStream, contentType: string): Promise<Blob> {
  const reader = stream.getReader();
  const chunks = [];

  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    chunks.push(value);
  }

  return new Blob(chunks, { type: contentType });
}

const image0 = await fetch("http://image-url");
const image1 = await fetch("http://image-url");
const form = new FormData();

const image_blob0 = await streamToBlob(image0.body, "image/png");
const image_blob1 = await streamToBlob(image1.body, "image/png");
form.append('input_image_0', image_blob0);
form.append('input_image_1', image_blob1);
form.append('prompt', 'take the subject of image 1 and style it like image 0');

// This dummy request is a temporary hack;
// we're pushing a change to address this soon
const formRequest = new Request('http://dummy', {
  method: 'POST',
  body: form
});
const formStream = formRequest.body;
const formContentType = formRequest.headers.get('content-type') || 'multipart/form-data';

// Pass the serialized form stream and its content type,
// which carries the multipart boundary
const resp = await env.AI.run("@cf/black-forest-labs/flux-2-dev", {
  multipart: {
    body: formStream,
    contentType: formContentType
  }
});
```

The model supports prompting in JSON to get more granular control over images. You would pass the JSON as the value of the 'prompt' field in the multipart form data. See the JSON schema below for the base parameters you can pass to the model.
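As a rough illustration of JSON prompting, the sketch below builds a prompt object from a subset of the schema fields in this section and serializes it into the 'prompt' form field. The interface names and all field values are made up for the example; only `scene` and `subjects` are required by the schema.

```typescript
// Illustrative types covering a subset of the JSON prompt schema.
interface FluxSubject {
  type: string;
  description: string;
  pose: string;
  position: "foreground" | "midground" | "background";
}

interface FluxJsonPrompt {
  scene: string;
  subjects: FluxSubject[];
  style?: string;
  color_palette?: [string, string, string]; // the schema asks for exactly 3 colors
  lighting?: string;
  mood?: string;
}

// Example values are hypothetical.
const jsonPrompt: FluxJsonPrompt = {
  scene: "rooftop garden at dusk",
  subjects: [
    {
      type: "DJ",
      description: "oversized headphones, denim jacket",
      pose: "leaning over a mixing deck",
      position: "foreground",
    },
  ],
  style: "lifestyle photo",
  color_palette: ["navy", "neon yellow", "magenta"],
  lighting: "moonlight with star glints",
};

// The JSON is passed as the string value of the 'prompt' field.
const jsonForm = new FormData();
jsonForm.append("prompt", JSON.stringify(jsonPrompt));
```

Typing `color_palette` as a three-element tuple lets the compiler catch palettes of the wrong length before the request is sent.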

```json
{
  "type": "object",
  "properties": {
    "scene": { "type": "string", "description": "Overall scene setting or location" },
    "subjects": {
      "type": "array",
      "items": {
        "type": "object",
        "properties": {
          "type": { "type": "string", "description": "Type of subject (e.g., desert nomad, blacksmith, DJ, falcon)" },
          "description": { "type": "string", "description": "Physical attributes, clothing, accessories" },
          "pose": { "type": "string", "description": "Action or stance" },
          "position": {
            "type": "string",
            "enum": ["foreground", "midground", "background"],
            "description": "Depth placement in scene"
          }
        },
        "required": ["type", "description", "pose", "position"]
      }
    },
    "style": {
      "type": "string",
      "description": "Artistic rendering style (e.g., digital painting, photorealistic, pixel art, noir sci-fi, lifestyle photo, wabi-sabi photo)"
    },
    "color_palette": {
      "type": "array",
      "items": { "type": "string" },
      "minItems": 3,
      "maxItems": 3,
      "description": "Exactly 3 main colors for the scene (e.g., ['navy', 'neon yellow', 'magenta'])"
    },
    "lighting": {
      "type": "string",
      "description": "Lighting condition and direction (e.g., fog-filtered sun, moonlight with star glints, dappled sunlight)"
    },
    "mood": {
      "type": "string",
      "description": "Emotional atmosphere (e.g., harsh and determined, playful and modern, peaceful and dreamy)"
    },
    "background": { "type": "string", "description": "Background environment details" },
    "composition": {
      "type": "string",
      "enum": [
        "rule of thirds",
        "circular arrangement",
        "framed by foreground",
        "minimalist negative space",
        "S-curve",
        "vanishing point center",
        "dynamic off-center",
        "leading leads",
        "golden spiral",
        "diagonal energy",
        "strong verticals",
        "triangular arrangement"
      ],
      "description": "Compositional technique"
    },
    "camera": {
      "type": "object",
      "properties": {
        "angle": {
          "type": "string",
          "enum": ["eye level", "low angle", "slightly low", "bird's-eye", "worm's-eye", "over-the-shoulder", "isometric"],
          "description": "Camera perspective"
        },
        "distance": {
          "type": "string",
          "enum": ["close-up", "medium close-up", "medium shot", "medium wide", "wide shot", "extreme wide"],
          "description": "Framing distance"
        },
        "focus": {
          "type": "string",
          "enum": ["deep focus", "macro focus", "selective focus", "sharp on subject", "soft background"],
          "description": "Focus type"
        },
        "lens": {
          "type": "string",
          "enum": ["14mm", "24mm", "35mm", "50mm", "70mm", "85mm"],
          "description": "Focal length (wide to telephoto)"
        },
        "f-number": {
          "type": "string",
          "description": "Aperture (e.g., f/2.8, the smaller the number the more blurry the background)"
        },
        "ISO": {
          "type": "number",
          "description": "Light sensitivity value (comfortable range between 100 & 6400, lower = less sensitivity)"
        }
      }
    },
    "effects": {
      "type": "array",
      "items": { "type": "string" },
      "description": "Post-processing effects (e.g., 'lens flare small', 'subtle film grain', 'soft bloom', 'god rays', 'chromatic aberration mild')"
    }
  },
  "required": ["scene", "subjects"]
}
```

Containers now support mounting R2 buckets as FUSE (Filesystem in Userspace) volumes, allowing applications to interact with R2 using standard filesystem operations.

Common use cases include:

FUSE adapters like tigrisfs ↗, s3fs ↗, and gcsfuse ↗ can be installed in your container image and configured to mount buckets at startup.

```dockerfile
FROM alpine:3.20

# Install FUSE and dependencies
RUN apk update && \
    apk add --no-cache ca-certificates fuse curl bash

# Install tigrisfs
RUN ARCH=$(uname -m) && \
    if [ "$ARCH" = "x86_64" ]; then ARCH="amd64"; fi && \
    if [ "$ARCH" = "aarch64" ]; then ARCH="arm64"; fi && \
    VERSION=$(curl -s https://api.github.com/repos/tigrisdata/tigrisfs/releases/latest | grep -o '"tag_name": "[^"]*' | cut -d'"' -f4) && \
    curl -L "https://github.com/tigrisdata/tigrisfs/releases/download/${VERSION}/tigrisfs_${VERSION#v}_linux_${ARCH}.tar.gz" -o /tmp/tigrisfs.tar.gz && \
    tar -xzf /tmp/tigrisfs.tar.gz -C /usr/local/bin/ && \
    rm /tmp/tigrisfs.tar.gz && \
    chmod +x /usr/local/bin/tigrisfs

# Create startup script that mounts bucket
RUN printf '#!/bin/sh\n\
    set -e\n\
    mkdir -p /mnt/r2\n\
    R2_ENDPOINT="https://${R2_ACCOUNT_ID}.r2.cloudflarestorage.com"\n\
    /usr/local/bin/tigrisfs --endpoint "${R2_ENDPOINT}" -f "${BUCKET_NAME}" /mnt/r2 &\n\
    sleep 3\n\
    ls -lah /mnt/r2\n\
    ' > /startup.sh && chmod +x /startup.sh

CMD ["/startup.sh"]
```

See the Mount R2 buckets with FUSE example for a complete guide on mounting R2 buckets and other S3-compatible storage buckets within your containers.

Containers and Sandboxes pricing for CPU time is now based on active usage only, instead of provisioned resources.

This means that you now pay less for Containers and Sandboxes.

Imagine running the standard-2 instance type, which can use up to 1 vCPU, for one hour, but on average you use only 20% of your CPU capacity.

CPU-time is priced at $0.00002 per vCPU-second.

Previously, you would be charged for the CPU allocated to the instance multiplied by the time it was active, in this case 1 hour.

CPU cost would have been: $0.072 — 1 vCPU * 3600 seconds * $0.00002

Now, since you are only using 20% of your CPU capacity, your CPU cost is cut to 20% of the previous amount.

CPU cost is now: $0.0144 — 1 vCPU * 3600 seconds * $0.00002 * 20% utilization
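The arithmetic above can be reproduced with a short sketch. The function and constant names are illustrative; the price and the 20% utilization figure come from the example in the text.

```typescript
// USD per vCPU-second, as quoted above.
const PRICE_PER_VCPU_SECOND = 0.00002;

// Cost of CPU time for a number of vCPUs over a duration.
// utilization = 1 models the previous provisioned-based billing;
// a fraction below 1 models billing on active usage only.
function cpuCost(vcpus: number, seconds: number, utilization: number = 1): number {
  return vcpus * seconds * PRICE_PER_VCPU_SECOND * utilization;
}

const previousCost = cpuCost(1, 3600); // about $0.072
const currentCost = cpuCost(1, 3600, 0.2); // about $0.0144

console.log(`previous: $${previousCost.toFixed(4)}, current: $${currentCost.toFixed(4)}`);
```

This only models the CPU portion of the bill discussed in the example.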

This can significantly reduce costs for Containers and Sandboxes.

See the documentation to learn more about Containers, Sandboxes, and associated pricing.

Workers Builds now supports up to 64 environment variables, and each environment variable can be up to 5 KB in size. The previous limit was 5 KB total across all environment variables.

This change enables better support for complex build configurations, larger application settings, and more flexible CI/CD workflows.
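A pre-flight check against the new limits might look like the sketch below. It is not part of Workers Builds or Wrangler; `checkBuildVariables` is a hypothetical helper, and it assumes that 5 KB means 5,120 bytes and that the limit applies to the UTF-8 encoded value of each variable.

```typescript
const MAX_BUILD_VARIABLES = 64;
// Assumption: 5 KB is counted as 5 * 1024 bytes of the UTF-8 encoded value.
const MAX_VARIABLE_BYTES = 5 * 1024;

// Returns a list of human-readable problems; an empty list means the
// variables fit within the assumed limits.
function checkBuildVariables(vars: Record<string, string>): string[] {
  const problems: string[] = [];
  const names = Object.keys(vars);

  if (names.length > MAX_BUILD_VARIABLES) {
    problems.push(`${names.length} variables defined, limit is ${MAX_BUILD_VARIABLES}`);
  }

  const encoder = new TextEncoder();
  for (const name of names) {
    const size = encoder.encode(vars[name]).length;
    if (size > MAX_VARIABLE_BYTES) {
      problems.push(`${name} is ${size} bytes, limit is ${MAX_VARIABLE_BYTES}`);
    }
  }
  return problems;
}
```

Running a check like this in CI before triggering a build surfaces oversized values early rather than at build time.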

For more details, refer to the build limits documentation.

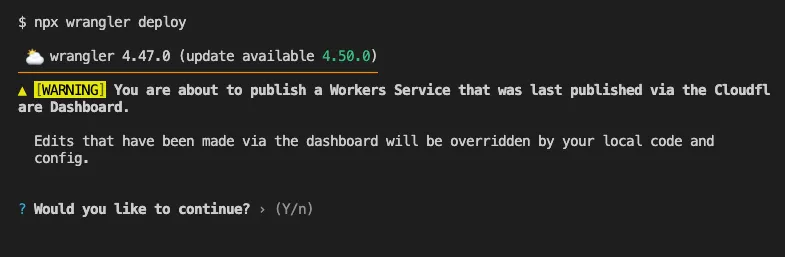

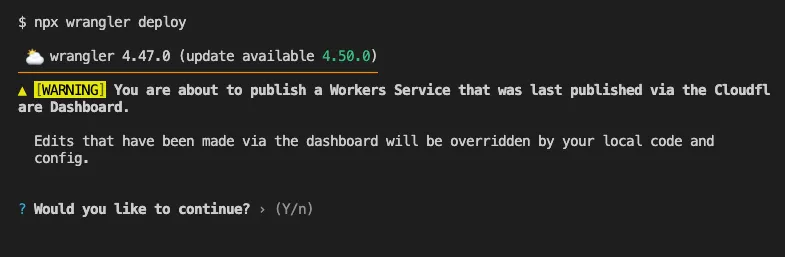

Until now, if a Worker had previously been deployed via the Cloudflare Dashboard ↗, a subsequent deployment via Wrangler, the Cloudflare Workers CLI (through the deploy command), would allow the user to override the Worker's dashboard settings without providing details on what dashboard settings would be lost.

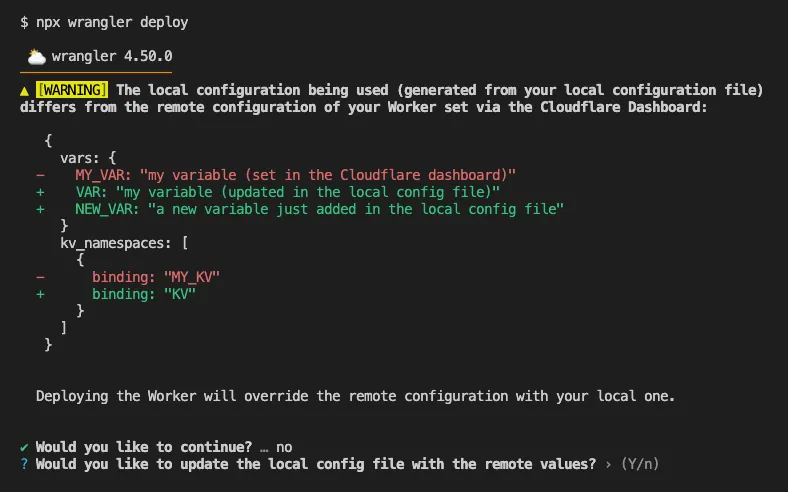

Now, `wrangler deploy` instead presents a helpful representation of the differences between the local configuration and the remote dashboard settings, and offers to update your local configuration file for you.

See the example below showing a before and after for `wrangler deploy` when a local configuration is expected to override a Worker's dashboard settings:

Before

After

If Wrangler instead detects that a deployment would override remote dashboard settings only in an additive way, without modifying or removing any of them, it simply proceeds with the deployment without requesting any user interaction.
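To make the idea of an additive override concrete, here is a minimal sketch of how such a check could be expressed. This is not Wrangler's actual implementation; `isAdditiveOverride` and the setting names are hypothetical, and comparing values via `JSON.stringify` is a simplification that is sensitive to key order in nested objects.

```typescript
type Settings = Record<string, unknown>;

// An override is treated as additive when every remote setting is still
// present in the local configuration with an identical value. Extra local
// settings are allowed.
function isAdditiveOverride(remote: Settings, local: Settings): boolean {
  return Object.entries(remote).every(
    ([key, value]) =>
      key in local && JSON.stringify(local[key]) === JSON.stringify(value),
  );
}

// Hypothetical settings for illustration only.
const remoteSettings: Settings = { compatibility_date: "2025-01-01" };
const additiveLocal: Settings = { compatibility_date: "2025-01-01", vars: { MODE: "prod" } };
const destructiveLocal: Settings = { vars: { MODE: "prod" } };

const proceedsSilently = isAdditiveOverride(remoteSettings, additiveLocal);
const needsConfirmation = !isAdditiveOverride(remoteSettings, destructiveLocal);
```

In this model, only the second case would require showing a diff, because the local configuration drops a setting that exists remotely.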

Update to Wrangler v4.50.0 or greater to take advantage of this improved deploy flow.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-3 week cadence ↗ to ensure its stability and reliability, including the v5.13 release. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach ↗ - we will be focusing on specific resources to not only stabilize the resource but also ensure it is migration-friendly for those migrating from v4 to v5.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes new features, new resources and data sources, bug fixes, updates to our Developer Documentation, and more.

Please be aware that there are breaking changes for the cloudflare_api_token and cloudflare_account_token resources. These changes eliminate configuration drift caused by policy ordering differences in the Cloudflare API.

For more specific information about the changes or the actions required, please see the detailed Repository changelog ↗.

We suggest holding off on migration to v5 while we work on stabilization. This will help you avoid any blocking issues while the Terraform resources are actively being stabilized. We will be releasing a new migration tool in March 2026 to help support v4 to v5 transitions for our most popular resources.