Changelog

New updates and improvements at Cloudflare.

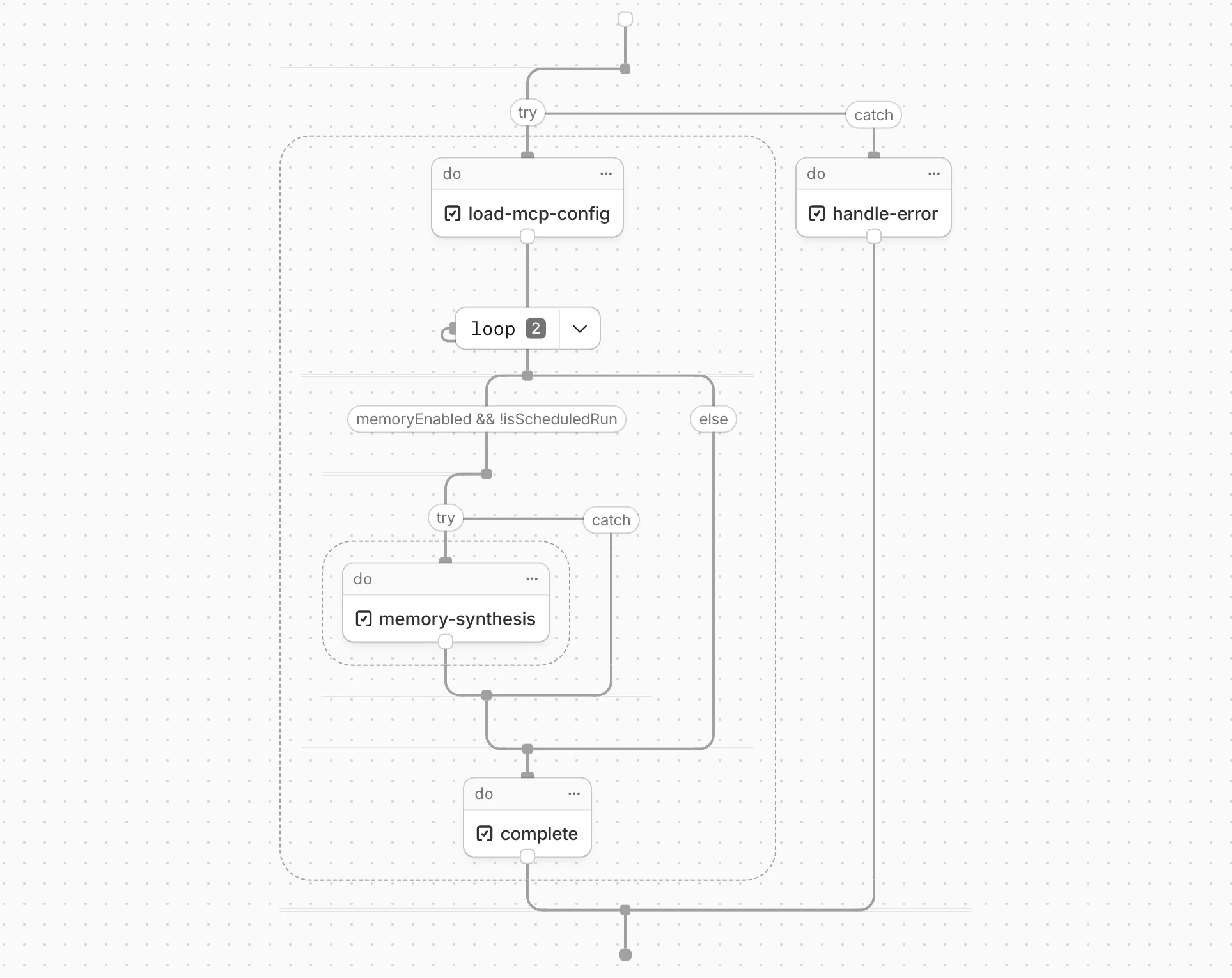

Cloudflare Workflows now automatically generates visual diagrams from your code

Your Workflow is parsed to provide a visual map of the Workflow structure, allowing you to:

- Understand how steps connect and execute

- Visualize loops and nested logic

- Follow branching paths for conditional logic

You can collapse loops and nested logic to see the high-level flow, or expand them to see every step.

Workflow diagrams are available in beta for all JavaScript and TypeScript Workflows. Find your Workflows in the Cloudflare dashboard ↗ to see their diagrams.

The latest release of the Agents SDK ↗ brings first-class support for Cloudflare Workflows, synchronous state management, and new scheduling capabilities.

Agents excel at real-time communication and state management. Workflows excel at durable execution. Together, they enable powerful patterns where Agents handle WebSocket connections while Workflows handle long-running tasks, retries, and human-in-the-loop flows.

Use the new `AgentWorkflow` class to define workflows with typed access to your Agent:

```js
import { AgentWorkflow } from "agents/workflows";

export class ProcessingWorkflow extends AgentWorkflow {
  async run(event, step) {
    // Call Agent methods via RPC
    await this.agent.updateStatus(event.payload.taskId, "processing");

    // Non-durable: progress reporting to clients
    await this.reportProgress({ step: "process", percent: 0.5 });
    this.broadcastToClients({ type: "update", taskId: event.payload.taskId });

    // Durable via step: idempotent, won't repeat on retry
    await step.mergeAgentState({ taskProgress: 0.5 });

    const result = await step.do("process", async () => {
      return processData(event.payload.data);
    });

    await step.reportComplete(result);
    return result;
  }
}
```

```ts
import { AgentWorkflow } from "agents/workflows";
import type { AgentWorkflowEvent, AgentWorkflowStep } from "agents/workflows";

export class ProcessingWorkflow extends AgentWorkflow<MyAgent, TaskParams> {
  async run(event: AgentWorkflowEvent<TaskParams>, step: AgentWorkflowStep) {
    // Call Agent methods via RPC
    await this.agent.updateStatus(event.payload.taskId, "processing");

    // Non-durable: progress reporting to clients
    await this.reportProgress({ step: "process", percent: 0.5 });
    this.broadcastToClients({ type: "update", taskId: event.payload.taskId });

    // Durable via step: idempotent, won't repeat on retry
    await step.mergeAgentState({ taskProgress: 0.5 });

    const result = await step.do("process", async () => {
      return processData(event.payload.data);
    });

    await step.reportComplete(result);
    return result;
  }
}
```

Start workflows from your Agent with `runWorkflow()` and handle lifecycle events:

```js
export class MyAgent extends Agent {
  async startTask(taskId, data) {
    const instanceId = await this.runWorkflow("PROCESSING_WORKFLOW", {
      taskId,
      data,
    });
    return { instanceId };
  }

  async onWorkflowProgress(workflowName, instanceId, progress) {
    this.broadcast(JSON.stringify({ type: "progress", progress }));
  }

  async onWorkflowComplete(workflowName, instanceId, result) {
    console.log(`Workflow ${instanceId} completed`);
  }

  async onWorkflowError(workflowName, instanceId, error) {
    console.error(`Workflow ${instanceId} failed:`, error);
  }
}
```

```ts
export class MyAgent extends Agent {
  async startTask(taskId: string, data: string) {
    const instanceId = await this.runWorkflow("PROCESSING_WORKFLOW", {
      taskId,
      data,
    });
    return { instanceId };
  }

  async onWorkflowProgress(
    workflowName: string,
    instanceId: string,
    progress: unknown,
  ) {
    this.broadcast(JSON.stringify({ type: "progress", progress }));
  }

  async onWorkflowComplete(
    workflowName: string,
    instanceId: string,
    result?: unknown,
  ) {
    console.log(`Workflow ${instanceId} completed`);
  }

  async onWorkflowError(
    workflowName: string,
    instanceId: string,
    error: unknown,
  ) {
    console.error(`Workflow ${instanceId} failed:`, error);
  }
}
```

Key workflow methods on your Agent:

- `runWorkflow(workflowName, params, options?)`: Start a workflow with optional metadata
- `getWorkflow(workflowId)` / `getWorkflows(criteria?)`: Query workflows with cursor-based pagination
- `approveWorkflow(workflowId)` / `rejectWorkflow(workflowId)`: Human-in-the-loop approval flows
- `pauseWorkflow()`, `resumeWorkflow()`, `terminateWorkflow()`: Workflow control

State updates are now synchronous with a new `validateStateChange()` validation hook:

```js
export class MyAgent extends Agent {
  validateStateChange(oldState, newState) {
    // Return false to reject the change
    if (newState.count < 0) return false;
    // Return modified state to transform
    return { ...newState, lastUpdated: Date.now() };
  }
}
```

```ts
export class MyAgent extends Agent<Env, State> {
  validateStateChange(oldState: State, newState: State): State | false {
    // Return false to reject the change
    if (newState.count < 0) return false;
    // Return modified state to transform
    return { ...newState, lastUpdated: Date.now() };
  }
}
```

The new `scheduleEvery()` method enables fixed-interval recurring tasks with built-in overlap prevention:

```js
// Run every 5 minutes
await this.scheduleEvery("syncData", 5 * 60 * 1000, { source: "api" });
```

- Client-side RPC timeout: Set timeouts on callable method invocations
- `StreamingResponse.error(message)`: Graceful stream error signaling
- `getCallableMethods()`: Introspection API for discovering callable methods
- Connection close handling: Pending calls are automatically rejected on disconnect

```js
await agent.call("method", [args], {
  timeout: 5000,
  stream: { onChunk, onDone, onError },
});
```

Secure email reply routing: Email replies are now secured with HMAC-SHA256 signed headers, preventing unauthorized routing of emails to agent instances.

Routing improvements:

- `basePath` option to bypass default URL construction for custom routing
- Server-sent identity: Agents send `name` and `agent` type on connect
- New `onIdentity` and `onIdentityChange` callbacks on the client

```js
const agent = useAgent({
  basePath: "user",
  onIdentity: (name, agentType) => console.log(`Connected to ${name}`),
});
```

To update to the latest version:

```sh
npm i agents@latest
```

For the complete Workflows API reference and patterns, see Run Workflows.
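To make the `validateStateChange()` contract from this release concrete, the sketch below uses a small stand-in class rather than the SDK's `Agent`. `MiniAgent` and the boolean returned by its `setState()` are hypothetical and exist only for illustration; how the real SDK surfaces a rejected change is not shown here.

```javascript
// Hypothetical stand-in for illustration only; this is NOT the Agents SDK's Agent class.
class MiniAgent {
  constructor(initialState) {
    this.state = initialState;
  }

  // Same contract as the hook described above:
  // return false to reject, or return the (possibly modified) state to store.
  validateStateChange(oldState, newState) {
    if (newState.count < 0) return false;
    return { ...newState, lastUpdated: Date.now() };
  }

  // Assumption: a rejected change leaves the state untouched and reports false.
  setState(newState) {
    const validated = this.validateStateChange(this.state, newState);
    if (validated === false) return false;
    this.state = validated;
    return true;
  }
}

const demoAgent = new MiniAgent({ count: 0 });
const accepted = demoAgent.setState({ count: 5 }); // stored, with lastUpdated added
const rejected = demoAgent.setState({ count: -1 }); // rejected, state keeps count 5
```

Because the hook runs synchronously before the state is stored, a single function can cover both rejection and transformation.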

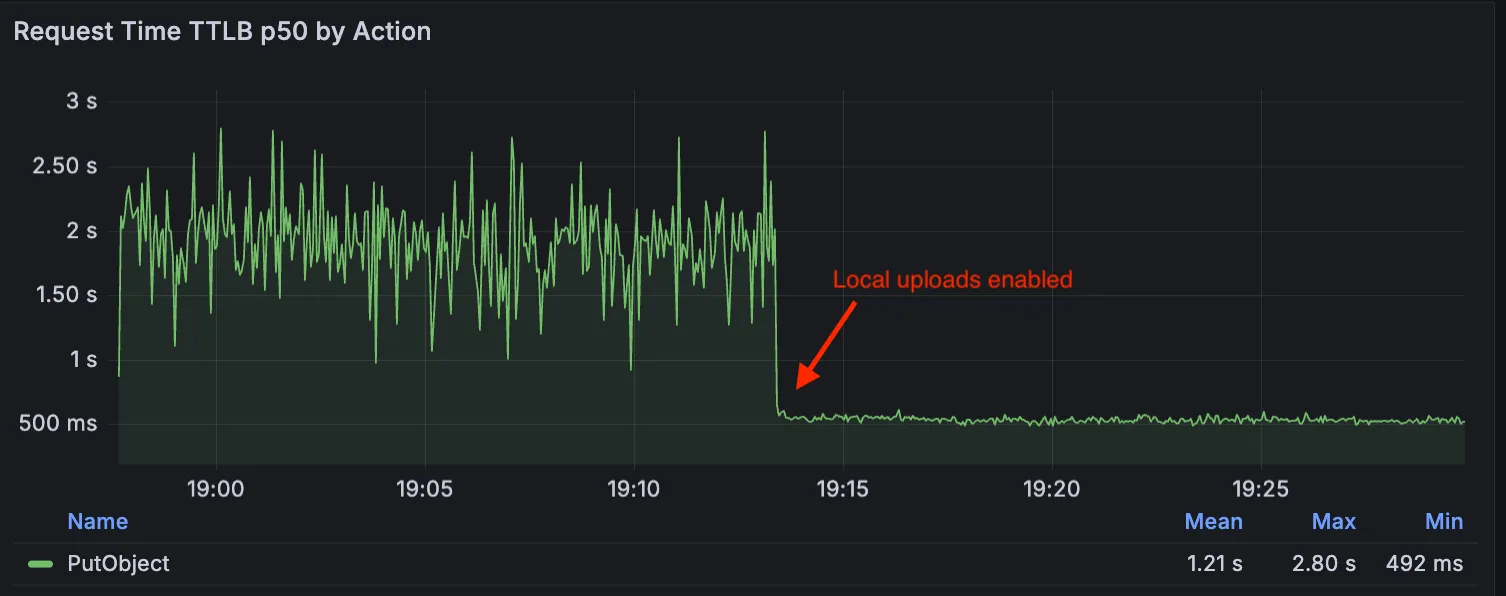

Local Uploads is now available in open beta. Enable it on your R2 bucket to improve upload performance when clients upload data from a different region than your bucket. With Local Uploads enabled, object data is written to storage infrastructure near the client, then asynchronously replicated to your bucket. The object is immediately accessible and remains strongly consistent throughout. Refer to How R2 works for details on how data is written to your bucket.

In our tests, we observed up to 75% reduction in Time to Last Byte (TTLB) for upload requests when Local Uploads is enabled.

This feature is ideal when:

- Your users are globally distributed

- Upload performance and reliability are critical to your application

- You want to optimize write performance without changing your bucket's primary location

To enable Local Uploads on your bucket, find Local Uploads in your bucket settings in the Cloudflare Dashboard ↗, or run:

```sh
npx wrangler r2 bucket local-uploads enable <BUCKET_NAME>
```

Enabling Local Uploads on a bucket is seamless: existing uploads will complete as expected and there’s no interruption to traffic. There is no additional cost to enable Local Uploads. Upload requests incur the standard Class A operation costs, the same as upload requests made without Local Uploads.

For more information, refer to Local Uploads.

The minimum `cacheTtl` parameter for Workers KV has been reduced from 60 seconds to 30 seconds. This change applies to both `get()` and `getWithMetadata()` methods.

This reduction allows you to maintain more up-to-date cached data and have finer-grained control over cache behavior. Applications requiring faster data refresh rates can now configure cache durations as low as 30 seconds instead of the previous 60-second minimum.

The `cacheTtl` parameter defines how long a KV result is cached at the global network location it is accessed from:

```js
// Read with custom cache TTL
const value = await env.NAMESPACE.get("my-key", {
  cacheTtl: 30, // Cache for minimum 30 seconds (previously 60)
});

// getWithMetadata also supports the reduced cache TTL
const valueWithMetadata = await env.NAMESPACE.getWithMetadata("my-key", {
  cacheTtl: 30, // Cache for minimum 30 seconds
});
```

The default cache TTL remains unchanged at 60 seconds. Upgrade to the latest version of Wrangler to be able to use a 30-second `cacheTtl`.

This change affects all KV read operations using the binding API. For more information, consult the Workers KV cache TTL documentation.
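If your code computes cache TTLs dynamically, a small guard can keep values within the limits stated above. This is a minimal sketch: `resolveCacheTtl` is a hypothetical helper, not part of the KV binding, and throwing on values below the minimum is an assumption about how you might want your own code to behave.

```javascript
const MIN_CACHE_TTL_SECONDS = 30; // new minimum (previously 60)
const DEFAULT_CACHE_TTL_SECONDS = 60; // default is unchanged

// Hypothetical helper: returns a cacheTtl that respects the documented minimum.
function resolveCacheTtl(requestedSeconds) {
  if (requestedSeconds === undefined) return DEFAULT_CACHE_TTL_SECONDS;
  if (!Number.isInteger(requestedSeconds) || requestedSeconds < MIN_CACHE_TTL_SECONDS) {
    throw new RangeError(
      `cacheTtl must be an integer of at least ${MIN_CACHE_TTL_SECONDS} seconds`,
    );
  }
  return requestedSeconds;
}
```

The result could then be passed along as `{ cacheTtl: resolveCacheTtl(30) }` in a `get()` or `getWithMetadata()` call.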

We have partnered with Black Forest Labs (BFL) again to bring their optimized FLUX.2 [klein] 9B model to Workers AI. This distilled model offers enhanced quality compared to the 4B variant, while maintaining cost-effective pricing. With a fixed 4-step inference process, Klein 9B is ideal for rapid prototyping and real-time applications where both speed and quality matter.

Read the BFL blog to learn more about the model itself, or try it out yourself on our multimodal playground.

Pricing documentation is available on the model page or pricing page.

The model hosted on Workers AI is optimized for speed with a fixed 4-step inference process and supports up to 4 image inputs. Since this is a distilled model, the `steps` parameter is fixed at 4 and cannot be adjusted. Like FLUX.2 [dev] and FLUX.2 [klein] 4B, this image model uses multipart form data inputs, even if you just have a prompt.

With the REST API, the multipart form data input looks like this:

```sh
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-klein-9b' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=a sunset at the alps' \
  --form width=1024 \
  --form height=1024
```

With the Workers AI binding, you can use it as follows:

```js
const form = new FormData();
form.append("prompt", "a sunset with a dog");
form.append("width", "1024");
form.append("height", "1024");

// FormData doesn't expose its serialized body or boundary. Passing it to a
// Request (or Response) constructor serializes it and generates the Content-Type
// header with the boundary, which is required for the server to parse the multipart fields.
const formResponse = new Response(form);
const formStream = formResponse.body;
const formContentType = formResponse.headers.get("content-type");

const resp = await env.AI.run("@cf/black-forest-labs/flux-2-klein-9b", {
  multipart: {
    body: formStream,
    contentType: formContentType,
  },
});
```

The parameters you can send to the model are detailed here:

JSON Schema for Model

Required Parameters

- `prompt` (string) - Text description of the image to generate

Optional Parameters

- `input_image_0` (string) - Binary image
- `input_image_1` (string) - Binary image
- `input_image_2` (string) - Binary image
- `input_image_3` (string) - Binary image
- `guidance` (float) - Guidance scale for generation. Higher values follow the prompt more closely
- `width` (integer) - Width of the image, default `1024`. Range: 256-1920
- `height` (integer) - Height of the image, default `768`. Range: 256-1920
- `seed` (integer) - Seed for reproducibility

Note: Since this is a distilled model, the `steps` parameter is fixed at 4 and cannot be adjusted.

The FLUX.2 klein-9b model supports generating images based on reference images, just like FLUX.2 [dev] and FLUX.2 [klein] 4B. You can use this feature to apply the style of one image to another, add a new character to an image, or iterate on past generated images. You would use it with the same multipart form data structure, with the input images in binary. The model supports up to 4 input images.

For the prompt, you can reference the images based on the index, like `take the subject of image 1 and style it like image 0`, or even use natural language like `place the dog beside the woman`.

You must name the input parameters `input_image_0`, `input_image_1`, `input_image_2`, `input_image_3` for it to work correctly. All input images must be smaller than 512x512.

```sh
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-klein-9b' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=take the subject of image 1 and style it like image 0' \
  --form input_image_0=@/Users/johndoe/Desktop/icedoutkeanu.png \
  --form input_image_1=@/Users/johndoe/Desktop/me.png \
  --form width=1024 \
  --form height=1024
```

Through the Workers AI binding:

```js
// Helper function to convert a ReadableStream to a Blob
async function streamToBlob(stream, contentType) {
  const reader = stream.getReader();
  const chunks = [];
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    chunks.push(value);
  }
  return new Blob(chunks, { type: contentType });
}

const image0 = await fetch("http://image-url");
const image1 = await fetch("http://image-url");

const form = new FormData();
const image_blob0 = await streamToBlob(image0.body, "image/png");
const image_blob1 = await streamToBlob(image1.body, "image/png");
form.append("input_image_0", image_blob0);
form.append("input_image_1", image_blob1);
form.append("prompt", "take the subject of image 1 and style it like image 0");

// FormData doesn't expose its serialized body or boundary. Passing it to a
// Request (or Response) constructor serializes it and generates the Content-Type
// header with the boundary, which is required for the server to parse the multipart fields.
const formResponse = new Response(form);
const formStream = formResponse.body;
const formContentType = formResponse.headers.get("content-type");

const resp = await env.AI.run("@cf/black-forest-labs/flux-2-klein-9b", {
  multipart: {
    body: formStream,
    contentType: formContentType,
  },
});
```
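Before building the form, it can help to check inputs against the parameter list above. The following is a minimal sketch in plain JavaScript; `validateFluxKleinParams` is a hypothetical helper (not part of Workers AI), and it only checks constraints stated in this entry: a required `prompt`, `width` and `height` between 256 and 1920, and input images named `input_image_0` through `input_image_3`.

```javascript
const ALLOWED_IMAGE_KEYS = ["input_image_0", "input_image_1", "input_image_2", "input_image_3"];

// Hypothetical helper: returns a list of problems, empty when the params look valid.
function validateFluxKleinParams(params) {
  const errors = [];
  if (typeof params.prompt !== "string" || params.prompt.length === 0) {
    errors.push("prompt is required");
  }
  for (const dimension of ["width", "height"]) {
    const value = params[dimension];
    if (value === undefined) continue; // optional parameter
    if (!Number.isInteger(value) || value < 256 || value > 1920) {
      errors.push(`${dimension} must be an integer between 256 and 1920`);
    }
  }
  for (const key of Object.keys(params)) {
    if (key.startsWith("input_image_") && !ALLOWED_IMAGE_KEYS.includes(key)) {
      errors.push(`${key} is not a supported input image name`);
    }
  }
  return errors;
}
```

Returning a list of messages rather than throwing makes it straightforward to report every problem in one pass.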

Paid plans can now have up to 100,000 files per Pages site, increased from the previous limit of 20,000 files.

To enable this increased limit, set the environment variable `PAGES_WRANGLER_MAJOR_VERSION=4` in your Pages project settings.

The Free plan remains at 20,000 files per site.

For more details, refer to the Pages limits documentation.

You can now store up to 10 million vectors in a single Vectorize index, doubling the previous limit of 5 million vectors. This enables larger-scale semantic search, recommendation systems, and retrieval-augmented generation (RAG) applications without splitting data across multiple indexes.

Vectorize continues to support indexes with up to 1,536 dimensions per vector at 32-bit precision. Refer to the Vectorize limits documentation for complete details.
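For a rough sense of scale, the raw size of the vector values alone at these limits can be worked out directly. This back-of-the-envelope sketch counts only 4 bytes per 32-bit dimension; it ignores metadata and any index overhead, and it makes no claim about how Vectorize stores data internally.

```javascript
const BYTES_PER_FLOAT32 = 4; // 32-bit precision

// Raw bytes needed for the vector values only.
function rawVectorBytes(vectorCount, dimensions) {
  return vectorCount * dimensions * BYTES_PER_FLOAT32;
}

const bytesAtLimit = rawVectorBytes(10000000, 1536);
const gigabytesAtLimit = bytesAtLimit / 1e9; // decimal gigabytes
```

At 10 million vectors of 1,536 dimensions each, this comes to 61,440,000,000 bytes, or about 61.44 GB of raw values.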

You can now configure Workers to run close to infrastructure in legacy cloud regions to minimize latency to existing services and databases. This is most useful when your Worker makes multiple round trips.

To set a placement hint, set the `placement.region` property in your Wrangler configuration file:

```jsonc
{
  "placement": {
    "region": "aws:us-east-1",
  },
}
```

```toml
[placement]
region = "aws:us-east-1"
```

Placement hints support Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure region identifiers. Workers run in the Cloudflare data center with the lowest latency to the specified cloud region.

If your existing infrastructure is not in these cloud providers, expose it to placement probes with `placement.host` for layer 4 checks or `placement.hostname` for layer 7 checks. These probes are designed to locate single-homed infrastructure and are not suitable for anycasted or multicasted resources.

```jsonc
{
  "placement": {
    "host": "my_database_host.com:5432",
  },
}
```

```toml
[placement]
host = "my_database_host.com:5432"
```

```jsonc
{
  "placement": {
    "hostname": "my_api_server.com",
  },
}
```

```toml
[placement]
hostname = "my_api_server.com"
```

This is an extension of Smart Placement, which automatically places your Workers closer to back-end APIs based on measured latency. When you do not know the location of your back-end APIs or have multiple back-end APIs, set `mode: "smart"`:

```jsonc
{
  "placement": {
    "mode": "smart",
  },
}
```

```toml
[placement]
mode = "smart"
```
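The four placement styles described above can be told apart by which key is present. The sketch below is a hypothetical helper (`describePlacement` is not part of Wrangler) that classifies an already-parsed `placement` object; treating the keys as mutually exclusive is an assumption made for this example.

```javascript
const PLACEMENT_KEYS = ["region", "host", "hostname", "mode"];

// Hypothetical helper: summarizes which kind of placement a config object requests.
function describePlacement(placement) {
  const present = PLACEMENT_KEYS.filter((key) => placement[key] !== undefined);
  if (present.length !== 1) {
    throw new Error(`expected exactly one of ${PLACEMENT_KEYS.join(", ")}`);
  }
  switch (present[0]) {
    case "region":
      return `cloud region hint: ${placement.region}`;
    case "host":
      return `layer 4 probe: ${placement.host}`;
    case "hostname":
      return `layer 7 probe: ${placement.hostname}`;
    default:
      return `mode: ${placement.mode}`;
  }
}
```

For example, `describePlacement({ region: "aws:us-east-1" })` returns `"cloud region hint: aws:us-east-1"`.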

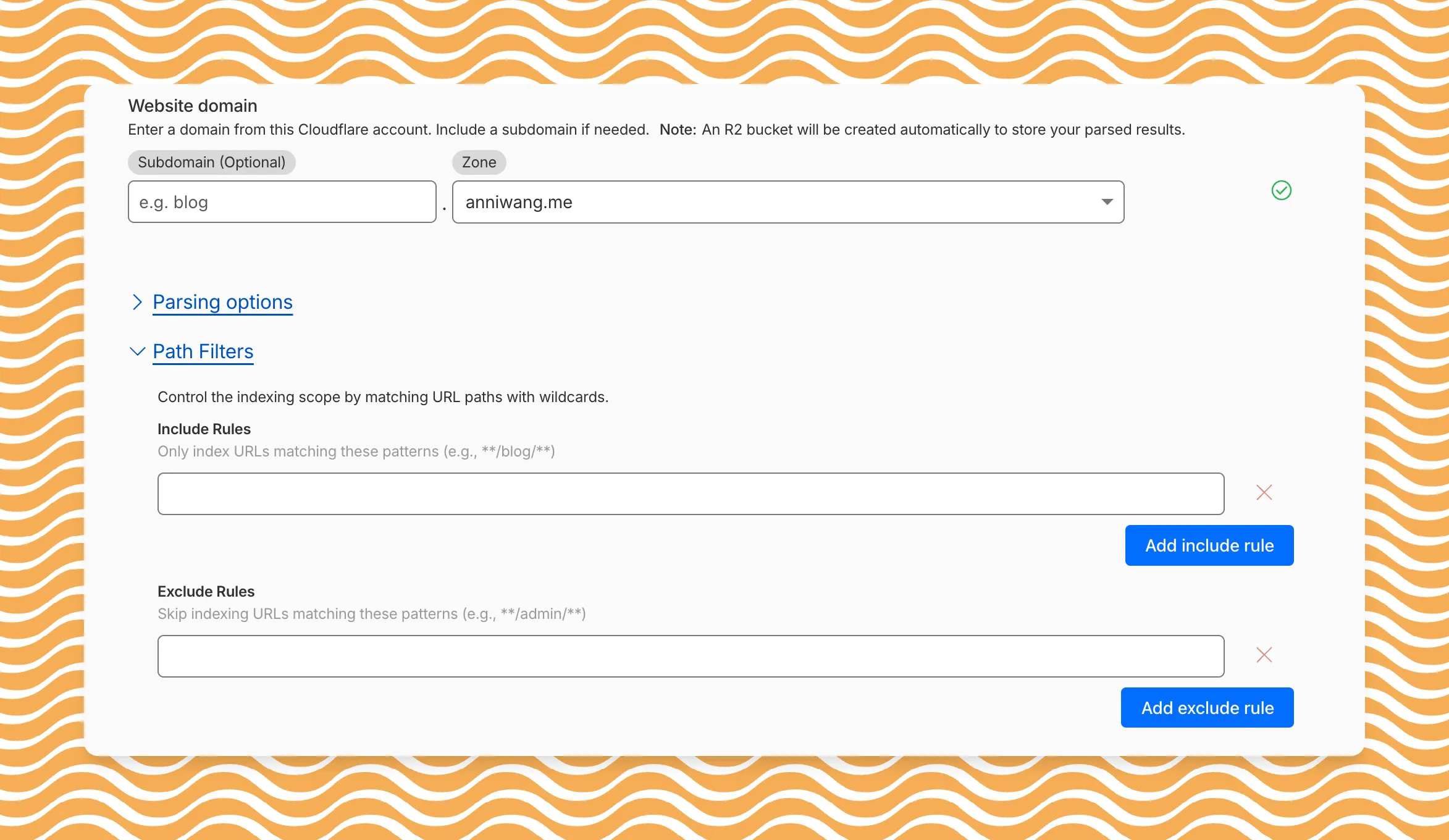

AI Search now includes path filtering for both website and R2 data sources. You can now control which content gets indexed by defining include and exclude rules for paths.

By controlling what gets indexed, you can improve the relevance and quality of your search results. You can also use path filtering to split a single data source across multiple AI Search instances for specialized search experiences.

Path filtering uses micromatch patterns, so you can use `*` to match within a directory and `**` to match across directories.

| Use case | Include | Exclude |
| --- | --- | --- |
| Index docs but skip drafts | `**/docs/**` | `**/docs/drafts/**` |
| Keep admin pages out of results | (none) | `**/admin/**` |
| Index only English content | `**/en/**` | (none) |

Configure path filters when creating a new instance or update them anytime from Settings. Check out path filtering to learn more.
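To illustrate how include and exclude rules like these interact, here is a minimal sketch with a deliberately simplified glob matcher. It is not micromatch and covers only `*` and `**`; `globToRegExp` and `shouldIndex` are hypothetical names, and the rules that exclude takes precedence and that an empty include list means "everything" are assumptions inferred from the use cases above.

```javascript
// Simplified glob support for illustration: "**" crosses directories, "*" stays within one.
// This is NOT micromatch and does not cover its full syntax (braces, negation, and so on).
function globToRegExp(glob) {
  let pattern = "";
  let i = 0;
  while (i < glob.length) {
    if (glob.startsWith("**/", i)) {
      pattern += "(?:.*/)?"; // zero or more leading directories
      i += 3;
    } else if (glob.startsWith("**", i)) {
      pattern += ".*"; // anything, including "/"
      i += 2;
    } else if (glob[i] === "*") {
      pattern += "[^/]*"; // anything except "/"
      i += 1;
    } else {
      pattern += glob[i].replace(/[.+?^${}()|[\]\\]/g, "\\$&"); // escape regex characters
      i += 1;
    }
  }
  return new RegExp(`^${pattern}$`);
}

// Assumption: exclude rules win over include rules; no include rules means "index everything".
function shouldIndex(path, { include = [], exclude = [] }) {
  const matches = (globs) => globs.some((glob) => globToRegExp(glob).test(path));
  if (matches(exclude)) return false;
  return include.length === 0 || matches(include);
}

const docsRules = { include: ["**/docs/**"], exclude: ["**/docs/drafts/**"] };
const indexesGuide = shouldIndex("example.com/docs/guide/intro", docsRules);
const indexesDraft = shouldIndex("example.com/docs/drafts/wip", docsRules);
```

With these rules, the guide page is indexed and the draft is skipped, matching the first row of the table.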

You can now create AI Search instances programmatically using the API. For example, use the API to create instances for each customer in a multi-tenant application or manage AI Search alongside your other infrastructure.

If you have created an AI Search instance via the dashboard before, you already have a service API token registered and can start creating instances programmatically right away. If not, follow the API guide to set up your first instance.

For example, you can now create separate search instances for each language on your website:

```sh
for lang in en fr es de; do
  curl -X POST "https://api.cloudflare.com/client/v4/accounts/$ACCOUNT_ID/ai-search/instances" \
    -H "Authorization: Bearer $API_TOKEN" \
    -H "Content-Type: application/json" \
    --data '{
      "id": "docs-'"$lang"'",
      "type": "web-crawler",
      "source": "example.com",
      "source_params": {
        "path_include": ["**/'"$lang"'/**"]
      }
    }'
done
```

Refer to the REST API reference for additional configuration options.
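The JSON body assembled in the shell loop can be easier to read without the quoting. The sketch below only builds the same payloads in JavaScript and sends nothing; `buildInstancePayload` is a hypothetical helper, and the field names are taken from the request above.

```javascript
// Hypothetical helper: builds the request body for one language-specific instance.
function buildInstancePayload(lang) {
  return {
    id: `docs-${lang}`,
    type: "web-crawler",
    source: "example.com",
    source_params: { path_include: [`**/${lang}/**`] },
  };
}

const instancePayloads = ["en", "fr", "es", "de"].map(buildInstancePayload);
```

Each entry in `instancePayloads` could then be serialized with `JSON.stringify` and used as the body of one request.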

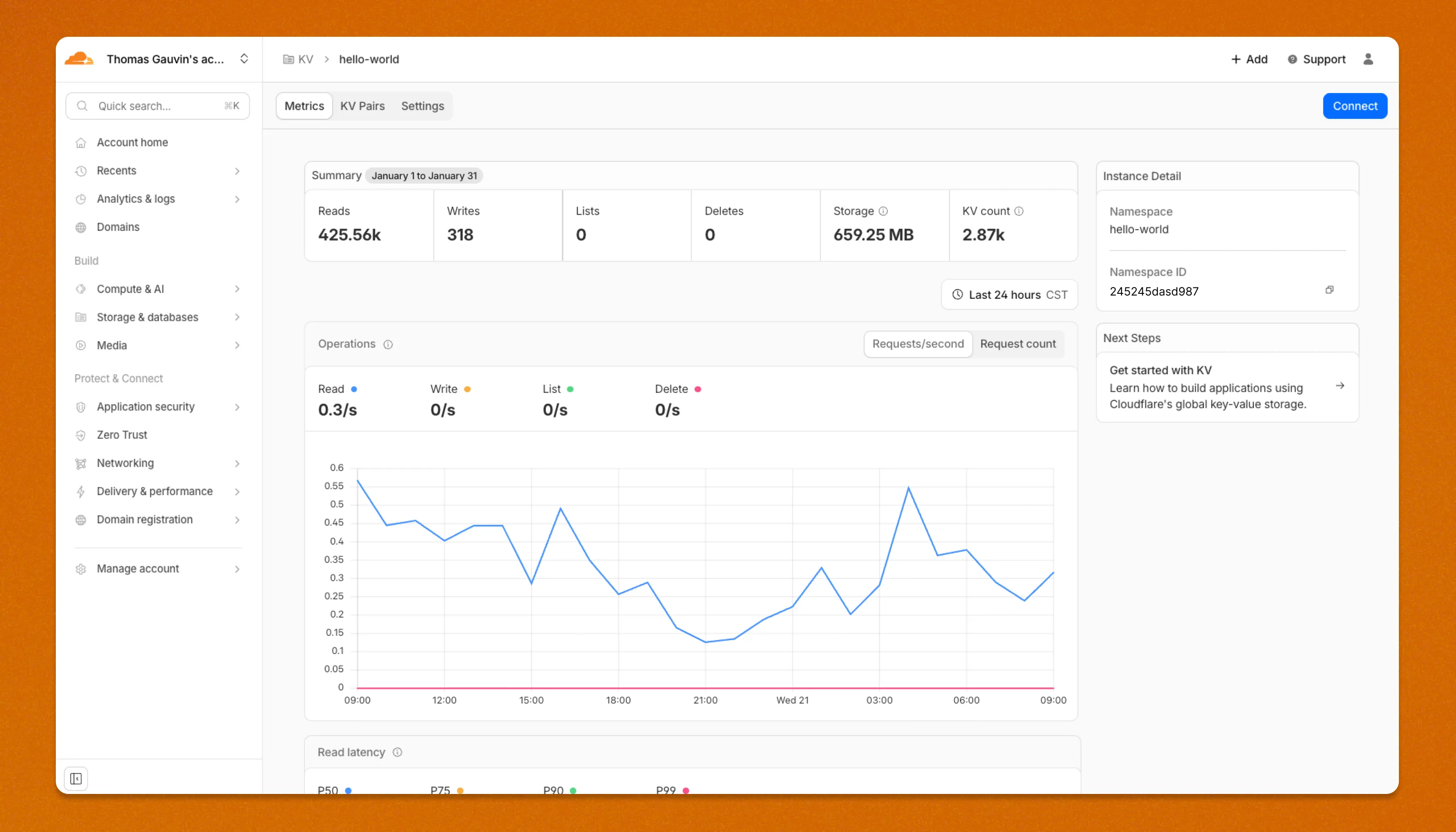

Workers KV has an updated dashboard UI with new dashboard styling that makes it easier to navigate and see analytics and settings for a KV namespace.

The new dashboard features a streamlined homepage for easy access to your namespaces and key operations, with a design consistent with the rest of the dashboard UI updates. It also provides an improved analytics view.

The updated dashboard is now available for all Workers KV users. Log in to the Cloudflare Dashboard ↗ to start exploring the new interface.

Disclaimer: Please note that v6.0.0-beta.1 is in Beta and we are still testing it for stability.

Full Changelog: v5.2.0...v6.0.0-beta.1 ↗

In this release, you'll see a large number of breaking changes. This is primarily due to a change in OpenAPI definitions, which our libraries are based off of, and codegen updates that we rely on to read those OpenAPI definitions and produce our SDK libraries. As the codegen is always evolving and improving, so are our code bases.

Some breaking changes were introduced due to bug fixes, also listed below.

Please ensure you read through the list of changes below before moving to this version. This will help you understand any downstream or upstream issues it may cause in your environments.

- `BGPPrefixCreateParams.cidr`: optional → required
- `PrefixCreateParams.asn`: `number | null` → `number`
- `PrefixCreateParams.loa_document_id`: required → optional
- `ServiceBindingCreateParams.cidr`: optional → required
- `ServiceBindingCreateParams.service_id`: optional → required

- `ConfigurationUpdateResponse` removed
- `PublicSchema` → `OldPublicSchema`
- `SchemaUpload` → `UserSchemaCreateResponse`
- `ConfigurationUpdateParams.properties` removed; use `normalize`

- `ThreatEventBulkCreateResponse`: `number` → complex object with counts and errors

- `DatabaseQueryParams`: simple interface → union type (`D1SingleQuery | MultipleQueries`)
- `DatabaseRawParams`: same change
- Supports batch queries via `batch` array

All record type interfaces renamed from `*Record` to short names:

- `RecordResponse.ARecord` → `RecordResponse.A`
- `RecordResponse.AAAARecord` → `RecordResponse.AAAA`
- `RecordResponse.CNAMERecord` → `RecordResponse.CNAME`
- `RecordResponse.MXRecord` → `RecordResponse.MX`
- `RecordResponse.NSRecord` → `RecordResponse.NS`
- `RecordResponse.PTRRecord` → `RecordResponse.PTR`
- `RecordResponse.TXTRecord` → `RecordResponse.TXT`
- `RecordResponse.CAARecord` → `RecordResponse.CAA`
- `RecordResponse.CERTRecord` → `RecordResponse.CERT`
- `RecordResponse.DNSKEYRecord` → `RecordResponse.DNSKEY`
- `RecordResponse.DSRecord` → `RecordResponse.DS`
- `RecordResponse.HTTPSRecord` → `RecordResponse.HTTPS`
- `RecordResponse.LOCRecord` → `RecordResponse.LOC`
- `RecordResponse.NAPTRRecord` → `RecordResponse.NAPTR`
- `RecordResponse.SMIMEARecord` → `RecordResponse.SMIMEA`
- `RecordResponse.SRVRecord` → `RecordResponse.SRV`
- `RecordResponse.SSHFPRecord` → `RecordResponse.SSHFP`
- `RecordResponse.SVCBRecord` → `RecordResponse.SVCB`
- `RecordResponse.TLSARecord` → `RecordResponse.TLSA`
- `RecordResponse.URIRecord` → `RecordResponse.URI`
- `RecordResponse.OpenpgpkeyRecord` → `RecordResponse.Openpgpkey`
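Since every rename in this group follows the same pattern, existing references can be updated mechanically. The following is a minimal text-based sketch, assuming references appear literally as `RecordResponse.<Type>Record` in your source; `renameRecordTypes` is a hypothetical helper, and a TypeScript-aware refactoring tool may be a safer choice for a real migration.

```javascript
// Rewrites old-style references such as "RecordResponse.ARecord" to "RecordResponse.A".
// Hypothetical helper for illustration; it operates on plain text, not on a parsed AST.
function renameRecordTypes(source) {
  return source.replace(/\bRecordResponse\.([A-Za-z]+)Record\b/g, "RecordResponse.$1");
}

const migratedSnippet = renameRecordTypes(
  "type Address = RecordResponse.ARecord | RecordResponse.AAAARecord;",
);
```

Running the function on already-migrated code leaves it unchanged, so it can be applied more than once.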

- `ResourceGroupCreateResponse.scope`: optional single → required array
- `ResourceGroupCreateResponse.id`: optional → required

- `OriginCACertificateCreateParams.csr`: optional → required
- `OriginCACertificateCreateParams.hostnames`: optional → required
- `OriginCACertificateCreateParams.request_type`: optional → required

- Renamed: `DeploymentsSinglePage` → `DeploymentListResponsesV4PagePaginationArray`
- Domain response fields: many optional → required

- Entire v0 API deprecated; use v1 methods (`createV1`, `listV1`, etc.)
- New sub-resources: `Sinks`, `Streams`

- `EventNotificationUpdateParams.rules`: optional → required
- Super Slurper: `bucket`, `secret` now required in source params

- `dataSource`: `string` → typed enum (23 values)
- `eventType`: `string` → typed enum (6 values)
- V2 methods require `dimension` parameter (breaking signature change)

- Removed: `status_message` field from all recipient response types

- Consolidated `SchemaCreateResponse`, `SchemaListResponse`, `SchemaEditResponse`, `SchemaGetResponse` → `PublicSchema`
- Renamed: `SchemaListResponsesV4PagePaginationArray` → `PublicSchemasV4PagePaginationArray`

- Renamed union members: `AppListResponse.UnionMember0` → `SpectrumConfigAppConfig`
- Renamed union members: `AppListResponse.UnionMember1` → `SpectrumConfigPaygoAppConfig`

- Removed: `WorkersBindingKindTailConsumer` type (all occurrences)
- Renamed: `ScriptsSinglePage` → `ScriptListResponsesSinglePage`
- Removed: `DeploymentsSinglePage`

- `datasets.create()`, `update()`, `get()` return types changed
- `PredefinedGetResponse` union members renamed to `UnionMember0-5`

- Removed: `CloudflaredCreateResponse`, `CloudflaredListResponse`, `CloudflaredDeleteResponse`, `CloudflaredEditResponse`, `CloudflaredGetResponse`
- Removed: `CloudflaredListResponsesV4PagePaginationArray`

- Reports: `create`, `list`, `get`
- Mitigations: sub-resource for abuse mitigations

- Instances: `create`, `update`, `list`, `delete`, `read`, `stats`
- Items: `list`, `get`
- Jobs: `create`, `list`, `get`, `logs`
- Tokens: `create`, `update`, `list`, `delete`, `read`

- Directory Services: `create`, `update`, `list`, `delete`, `get`
- Supports IPv4, IPv6, dual-stack, and hostname configurations

- Organizations: `create`, `update`, `list`, `delete`, `get`
- OrganizationProfile: `update`, `get`
- Hierarchical organization support with parent/child relationships

- Catalog: `list`, `enable`, `disable`, `get`
- Credentials: `create`
- MaintenanceConfigs: `update`, `get`
- Namespaces: `list`
- Tables: `list`, maintenance config management
- Apache Iceberg integration

- Apps: `get`, `post`
- Meetings: `create`, `get`, participant management
- Livestreams: 10+ methods for streaming
- Recordings: start, pause, stop, get
- Sessions: transcripts, summaries, chat
- Webhooks: full CRUD
- ActiveSession: polls, kick participants
- Analytics: organization analytics

- Configuration: `create`, `list`, `delete`, `edit`, `get`
- Credentials: `update`
- Rules: `create`, `list`, `delete`, `bulkCreate`, `bulkEdit`, `edit`, `get`
- JWT validation with RS256/384/512, PS256/384/512, ES256, ES384

- `create`, `update`, `list`, `delete`, `get`

- `create`, `update`, `list`, `delete`, `get`, `beginVerification`

- Sinks: `create`, `list`, `delete`, `get`
- Streams: `create`, `update`, `list`, `delete`, `get`

- Portals: `create`, `update`, `list`, `delete`, `read`
- Servers: `create`, `update`, `list`, `delete`, `read`, `sync`

- `managed_by` field with `parent_org_id`, `parent_org_name`

- `auto_generated` field on `LOADocumentCreateResponse`

- `delegate_loa_creation`, `irr_validation_state`, `ownership_validation_state`, `ownership_validation_token`, `rpki_validation_state`

- Added `toMarkdown.supported()` method to get all supported conversion formats

- `zdr` field added to all responses and params

- New alert type: `abuse_report_alert`
- `type` field added to PolicyFilter

- `ContentCreateParams`: refined to discriminated union (`Variant0 | Variant1`)
- Split into URL-based and HTML-based parameter variants for better type safety

- `reactivate` parameter in edit

- `ThreatEventCreateParams.indicatorType`: required → optional
- `hasChildren` field added to all threat event response types
- `datasetIds` query parameter on `AttackerListParams`, `CategoryListParams`, `TargetIndustryListParams`
- `categoryUuid` field on `TagCreateResponse`
- `indicators` array for multi-indicator support per event
- `uuid` and `preserveUuid` fields for UUID preservation in bulk create
- `format` query parameter (`'json' | 'stix2'`) on `ThreatEventListParams`
- `createdAt`, `datasetId` fields on `ThreatEventEditParams`

- Added `create()`, `update()`, `get()` methods

- New page types: `basic_challenge`, `under_attack`, `waf_challenge`

- `served_by_colo` - colo that handled query
- `jurisdiction` - `'eu' | 'fedramp'`
- Time Travel (`client.d1.database.timeTravel`): `getBookmark()`, `restore()` - point-in-time recovery

- New fields on `InvestigateListResponse`/`InvestigateGetResponse`: `envelope_from`, `envelope_to`, `postfix_id_outbound`, `replyto`
- New detection classification: `'outbound_ndr'`
- Enhanced `Finding` interface with `attachment`, `detection`, `field`, `portion`, `reason`, `score`
- Added `cursor` query parameter to `InvestigateListParams`

- New list types: `CATEGORY`, `LOCATION`, `DEVICE`

- New issue type: `'configuration_suggestion'`
- `payload` field: `unknown` → typed `Payload` interface with `detection_method`, `zone_tag`

- Added `detections.get()` method

- New datasets: `dex_application_tests`, `dex_device_state_events`, `ipsec_logs`, `warp_config_changes`, `warp_toggle_changes`

- `Monitor.port`: `number` → `number | null`
- `Pool.load_shedding`: `LoadShedding` → `LoadShedding | null`
- `Pool.origin_steering`: `OriginSteering` → `OriginSteering | null`

- `license_key` field on connectors
- `provision_license` parameter for auto-provisioning
- IPSec: `custom_remote_identities` with FQDN support
- Snapshots: Bond interface, `probed_mtu` field

- New response types: `ProjectCreateResponse`, `ProjectListResponse`, `ProjectEditResponse`, `ProjectGetResponse`
- Deployment methods return specific response types instead of generic `Deployment`

- Added `subscriptions.get()` method
- Enhanced `SubscriptionGetResponse` with typed event source interfaces
- New event source types: Images, KV, R2, Vectorize, Workers AI, Workers Builds, Workflows

- Sippy: new provider `s3` (S3-compatible endpoints)
- Sippy: `bucketUrl` field for S3-compatible sources
- Super Slurper: `keys` field on source response schemas (specify specific keys to migrate)
- Super Slurper: `pathPrefix` field on source schemas
- Super Slurper: `region` field on S3 source params

- Added `geolocations.list()`, `geolocations.get()` methods
- Added V2 dimension-based methods (`summaryV2`, `timeseriesGroupsV2`) to radar sub-resources

- Added `terminal` boolean field to Resource Error interfaces

- Added `id` field to `ItemDeleteParams.Item`

- New buffering fields on `SetConfigRule`: `request_body_buffering`, `response_body_buffering`

- New scopes: `'dex'`, `'access'` (in addition to `'workers'`, `'ai_gateway'`)

- Response types now proper interfaces (was `unknown`)
- Fields now required: `id`, `certificates`, `hosts`, `status`, `type`

- `payload` field: `unknown` → typed `Payload` interface with `detection_method`, `zone_tag`

- Added: `CloudflareTunnelsV4PagePaginationArray` pagination class

- Added `subdomains.delete()` method
- `Worker.references` - track external dependencies (domains, Durable Objects, queues)
- `Worker.startup_time_ms` - startup timing
- `Script.observability` - observability settings with logging
- `Script.tag`, `Script.tags` - immutable ID and tags
- Placement: support for region, hostname, host-based placement
- `tags`, `tail_consumers` now accept `| null`
- Telemetry: `traces` field, `$containers` event info, `durableObjectId`, `transactionName`, `abr_level` fields

- `ScriptUpdateResponse`: new fields `entry_point`, `observability`, `tag`, `tags`
- `placement` field now union of 4 variants (smart mode, region, hostname, host)
- `tags`, `tail_consumers` now nullable
- `TagUpdateParams.body` now accepts `null`

- `instance_retention`: `unknown` → typed `InstanceRetention` interface with `error_retention`, `success_retention`
- New status option: `'restart'` added to `StatusEditParams.status`

- External emergency disconnect settings (4 new fields)
- `antivirus` device posture check type
- `os_version_extra` documentation improvements

- New response types: `SubscriptionCreateResponse`, `SubscriptionUpdateResponse`, `SubscriptionGetResponse`

- New `ApplicationType` values: `'mcp'`, `'mcp_portal'`, `'proxy_endpoint'`
- New destination type: `ViaMcpServerPortalDestination` for MCP server access

- Added `rules.listTenant()` method

- `ProxyEndpoint`: interface → discriminated union (`ZeroTrustGatewayProxyEndpointIP | ZeroTrustGatewayProxyEndpointIdentity`)
- `ProxyEndpointCreateParams`: interface → union type
- Added `kind` field: `'ip' | 'identity'`

- `WARPConnector*Response`: union type → interface

- API Gateway: `UserSchemas`, `Settings`, `SchemaValidation` resources
- Audit Logs: `auditLogId.not` (use `id.not`)
- CloudforceOne: `ThreatEvents.get()`, `IndicatorTypes.list()`
- Devices: `public_ip` field (use DEX API)
- Email Security: `item_count` field in Move responses
- Pipelines: v0 methods (use v1)
- Radar: old `summary()` and `timeseriesGroups()` methods (use V2)
- Rulesets: `disable_apps`, `mirage` fields
- WARP Connector: `connections` field
- Workers: `environment` parameter in Domains
- Zones: `ResponseBuffering` page rule

- mcp: correct code tool API endpoint (599703c ↗)

- mcp: return correct lines on typescript errors (5d6f999 ↗)

- organization_profile: fix bad reference (d84ea77 ↗)

- schema_validation: correctly reflect model to openapi mapping (bb86151 ↗)

- workers: fix tests (2ee37f7 ↗)

In January 2025, we announced the launch of the new Terraform v5 Provider. We greatly appreciate the proactive engagement and valuable feedback from the Cloudflare community following the v5 release. In response, we've established a consistent and rapid 2-3 week cadence ↗ for releasing targeted improvements, demonstrating our commitment to stability and reliability.

With the help of the community, we have a growing number of resources that we have marked as stable, with that list continuing to grow with every release. The most used resources are on track to be stable by the end of March 2026, when we will also be releasing a new migration tool to help you migrate from v4 to v5 with ease.

Thank you for continuing to raise issues. They make our provider stronger and help us build products that reflect your needs.

This release includes bug fixes, the stabilization of even more popular resources, and more.

- custom_pages: add "waf_challenge" as new supported error page type identifier in both resource and data source schemas

- list: enhance CIDR validator to check for normalized CIDR notation requiring network address for IPv4 and IPv6

- magic_wan_gre_tunnel: add automatic_return_routing attribute for automatic routing control

- magic_wan_gre_tunnel: add BGP configuration support with new BGP model attribute

- magic_wan_gre_tunnel: add bgp_status computed attribute for BGP connection status information

- magic_wan_gre_tunnel: enhance schema with BGP-related attributes and validators

- magic_wan_ipsec_tunnel: add automatic_return_routing attribute for automatic routing control

- magic_wan_ipsec_tunnel: add BGP configuration support with new BGP model attribute

- magic_wan_ipsec_tunnel: add bgp_status computed attribute for BGP connection status information

- magic_wan_ipsec_tunnel: add custom_remote_identities attribute for custom identity configuration

- magic_wan_ipsec_tunnel: enhance schema with BGP and identity-related attributes

- ruleset: add request body buffering support

- ruleset: enhance ruleset data source with additional configuration options

- workers_script: add observability logs attributes to list data source model

- workers_script: enhance list data source schema with additional configuration options

- account_member: fix resource importability issues

- dns_record: remove unnecessary fmt.Sprintf wrapper around LoadTestCase call in test configuration helper function

- load_balancer: fix session_affinity_ttl type expectations to match Float64 in initial creation and Int64 after migration

- workers_kv: handle special characters correctly in URL encoding

- account_subscription: update schema description for rate_plan.sets attribute to clarify it returns an array of strings

- api_shield: add resource-level description for API Shield management of auth ID characteristics

- api_shield: enhance auth_id_characteristics.name attribute description to include JWT token configuration format requirements

- api_shield: specify JSONPath expression format for JWT claim locations

- hyperdrive_config: add description attribute to name attribute explaining its purpose in dashboard and API identification

- hyperdrive_config: apply description improvements across resource, data source, and list data source schemas

- hyperdrive_config: improve schema descriptions for cache settings to clarify default values

- hyperdrive_config: update port description to clarify defaults for different database types
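The `list` entry above mentions a validator that requires normalized CIDR notation, meaning the address part must be the network address with all host bits set to zero. The sketch below illustrates that idea for IPv4 only. It is not the provider's code (the provider is written in Go), and the function name `isNormalizedIPv4Cidr` is a hypothetical one chosen for this example.

```typescript
// Illustrative IPv4-only check; the provider's real validator also covers IPv6.
function isNormalizedIPv4Cidr(cidr: string): boolean {
  const match = /^(\d{1,3})\.(\d{1,3})\.(\d{1,3})\.(\d{1,3})\/(\d{1,2})$/.exec(cidr);
  if (!match) return false;

  const octets = match.slice(1, 5).map(Number);
  const prefix = Number(match[5]);
  if (octets.some((octet) => octet > 255) || prefix > 32) return false;

  // Pack the four octets into one unsigned 32-bit integer.
  const address =
    ((octets[0] << 24) | (octets[1] << 16) | (octets[2] << 8) | octets[3]) >>> 0;

  // A /0 prefix has an all-zero mask; handle it separately because
  // JavaScript shift counts wrap at 32.
  const mask = prefix === 0 ? 0 : (0xffffffff << (32 - prefix)) >>> 0;

  // Normalized means that masking off the host bits changes nothing.
  return ((address & mask) >>> 0) === address;
}
```

Under this check, `10.0.0.0/8` passes while `10.0.0.1/8` does not, because the latter has a host bit set.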

Auxiliary Workers are now fully supported when using full-stack frameworks, such as React Router and TanStack Start, that integrate with the Cloudflare Vite plugin. They are included alongside the framework's build output in the build output directory. Note that this feature requires Vite 7 or above.

Auxiliary Workers are additional Workers that can be called via service bindings from your main (entry) Worker. They are defined in the plugin config, as in the example below:

vite.config.ts

```typescript
import { defineConfig } from "vite";
import { tanstackStart } from "@tanstack/react-start/plugin/vite";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    tanstackStart(),
    cloudflare({
      viteEnvironment: { name: "ssr" },
      auxiliaryWorkers: [{ configPath: "./wrangler.aux.jsonc" }],
    }),
  ],
});
```

See the Vite plugin API docs for more info.

The `.sql` file extension is now automatically configured to be importable in your Worker code when using Wrangler or the Cloudflare Vite plugin. This is particularly useful for importing migrations in Durable Objects and means you no longer need to configure custom rules when using Drizzle ↗.

SQL files are imported as JavaScript strings:

```typescript
// `example` will be a JavaScript string
import example from "./example.sql";
```
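Because the import is a plain string, a migration file can be split into statements and run one at a time. The sketch below shows one way this might look. The `SqlExecutor` interface, the helper names, and the sample SQL are all hypothetical, and a string literal stands in for the imported file so that the example is self-contained.

```typescript
// In a Worker this string would come from an import such as
//   import migration from "./0001_init.sql";   (hypothetical file name)
const migration = `
CREATE TABLE IF NOT EXISTS notes (id INTEGER PRIMARY KEY, body TEXT);
CREATE INDEX IF NOT EXISTS notes_body ON notes (body);
`;

// Naive splitter: assumes no semicolons inside string literals or comments.
function splitStatements(sql: string): string[] {
  return sql
    .split(";")
    .map((statement) => statement.trim())
    .filter((statement) => statement.length > 0);
}

// Hypothetical minimal interface: anything that can execute one SQL statement.
interface SqlExecutor {
  exec(statement: string): void;
}

// Runs each statement in order and returns how many were executed.
function applyMigration(db: SqlExecutor, sql: string): number {
  const statements = splitStatements(sql);
  for (const statement of statements) {
    db.exec(statement);
  }
  return statements.length;
}
```

Any object with a compatible `exec` method can be passed as `db`; a recording stub is enough for local testing.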

We have made it easier to validate connectivity when deploying WARP Connector as part of your software-defined private network.

You can now `ping` the WARP Connector host directly on its LAN IP address immediately after installation. This provides a fast, familiar way to confirm that the Connector is online and reachable within your network before testing access to downstream services.

Starting with version 2025.10.186.0, WARP Connector responds to traffic addressed to its own LAN IP, giving you immediate visibility into Connector reachability.

Learn more about deploying WARP Connector and building private network connectivity with Cloudflare One.

We've partnered with Black Forest Labs (BFL) again to bring their optimized FLUX.2 [klein] 4B model to Workers AI! This distilled model offers faster generation and cost-effective pricing, while maintaining great output quality. With a fixed 4-step inference process, Klein 4B is ideal for rapid prototyping and real-time applications where speed matters.

Read the BFL blog ↗ to learn more about the model itself, or try it out yourself on our multimodal playground ↗.

Pricing documentation is available on the model page or pricing page.

The model hosted on Workers AI is optimized for speed with a fixed 4-step inference process and supports up to 4 image inputs. Since this is a distilled model, the `steps` parameter is fixed at 4 and cannot be adjusted. Like FLUX.2 [dev], this image model uses multipart form data inputs, even if you just have a prompt.

With the REST API, the multipart form data input looks like this:

```bash
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-klein-4b' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=a sunset at the alps' \
  --form width=1024 \
  --form height=1024
```

With the Workers AI binding, you can use it as such:

```javascript
const form = new FormData();
form.append("prompt", "a sunset with a dog");
form.append("width", "1024");
form.append("height", "1024");

// FormData doesn't expose its serialized body or boundary. Passing it to a
// Request (or Response) constructor serializes it and generates the Content-Type
// header with the boundary, which is required for the server to parse the multipart fields.
const formResponse = new Response(form);
const formStream = formResponse.body;
const formContentType = formResponse.headers.get('content-type');

const resp = await env.AI.run("@cf/black-forest-labs/flux-2-klein-4b", {
  multipart: {
    body: formStream,
    contentType: formContentType,
  },
});
```

The parameters you can send to the model are detailed here:

JSON Schema for Model

Required Parameters

- `prompt` (string) - Text description of the image to generate

Optional Parameters

- `input_image_0` (string) - Binary image

- `input_image_1` (string) - Binary image

- `input_image_2` (string) - Binary image

- `input_image_3` (string) - Binary image

- `guidance` (float) - Guidance scale for generation. Higher values follow the prompt more closely

- `width` (integer) - Width of the image, default `1024`. Range: 256-1920

- `height` (integer) - Height of the image, default `768`. Range: 256-1920

- `seed` (integer) - Seed for reproducibility
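Since every field travels as a multipart string, it can help to validate values before building the form. The following is a minimal sketch that checks `width` and `height` against the 256-1920 range listed above (assumed here to be inclusive) and returns name/value pairs. The `FluxKleinOptions` type and the `toFormFields` helper are hypothetical names for this example and are not part of Workers AI.

```typescript
interface FluxKleinOptions {
  prompt: string;
  width?: number;
  height?: number;
  seed?: number;
  guidance?: number;
}

// Bounds taken from the parameter list; treated as inclusive (an assumption).
const MIN_SIDE = 256;
const MAX_SIDE = 1920;

// Validates the options and returns them as multipart field pairs.
function toFormFields(options: FluxKleinOptions): Array<[string, string]> {
  if (options.prompt.trim() === "") {
    throw new Error("prompt is required");
  }
  const fields: Array<[string, string]> = [["prompt", options.prompt]];

  const sides: Array<["width" | "height", number | undefined]> = [
    ["width", options.width],
    ["height", options.height],
  ];
  for (const [name, value] of sides) {
    if (value === undefined) continue; // omitted: the model applies its default
    if (!Number.isInteger(value) || value < MIN_SIDE || value > MAX_SIDE) {
      throw new RangeError(`${name} must be an integer from ${MIN_SIDE} to ${MAX_SIDE}`);
    }
    fields.push([name, String(value)]);
  }

  if (options.seed !== undefined) fields.push(["seed", String(options.seed)]);
  if (options.guidance !== undefined) fields.push(["guidance", String(options.guidance)]);
  return fields;
}
```

Each returned pair can then be passed to `form.append(name, value)` on a `FormData` instance before serializing it as shown in the binding example.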

Note: Since this is a distilled model, the `steps` parameter is fixed at 4 and cannot be adjusted.

## Multi-Reference Images

The FLUX.2 klein-4b model supports generating images based on reference images, just like FLUX.2 [dev]. You can use this feature to apply the style of one image to another, add a new character to an image, or iterate on past generated images. You would use it with the same multipart form data structure, with the input images in binary. The model supports up to 4 input images.

For the prompt, you can reference the images based on the index, like `take the subject of image 1 and style it like image 0` or even use natural language like `place the dog beside the woman`.

Note: you have to name the input parameter as `input_image_0`, `input_image_1`, `input_image_2`, `input_image_3` for it to work correctly. All input images must be smaller than 512x512.

```bash
curl --request POST \
  --url 'https://api.cloudflare.com/client/v4/accounts/{ACCOUNT}/ai/run/@cf/black-forest-labs/flux-2-klein-4b' \
  --header 'Authorization: Bearer {TOKEN}' \
  --header 'Content-Type: multipart/form-data' \
  --form 'prompt=take the subject of image 1 and style it like image 0' \
  --form input_image_0=@/Users/johndoe/Desktop/icedoutkeanu.png \
  --form input_image_1=@/Users/johndoe/Desktop/me.png \
  --form width=1024 \
  --form height=1024
```

Through Workers AI Binding:

```typescript
// Helper function to convert a ReadableStream to a Blob
async function streamToBlob(stream: ReadableStream, contentType: string): Promise<Blob> {
  const reader = stream.getReader();
  const chunks: Uint8Array[] = [];
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    chunks.push(value);
  }
  return new Blob(chunks, { type: contentType });
}

const image0 = await fetch("http://image-url");
const image1 = await fetch("http://image-url");

const form = new FormData();
const image_blob0 = await streamToBlob(image0.body, "image/png");
const image_blob1 = await streamToBlob(image1.body, "image/png");
form.append('input_image_0', image_blob0);
form.append('input_image_1', image_blob1);
form.append('prompt', 'take the subject of image 1 and style it like image 0');

// FormData doesn't expose its serialized body or boundary. Passing it to a
// Request (or Response) constructor serializes it and generates the Content-Type
// header with the boundary, which is required for the server to parse the multipart fields.
const formResponse = new Response(form);
const formStream = formResponse.body;
const formContentType = formResponse.headers.get('content-type');

const resp = await env.AI.run("@cf/black-forest-labs/flux-2-klein-4b", {
  multipart: {
    body: formStream,
    contentType: formContentType
  }
});
```

The `wrangler types` command now generates TypeScript types for bindings from all environments defined in your Wrangler configuration file by default.

Previously, `wrangler types` only generated types for bindings in the top-level configuration (or a single environment when using the `--env` flag). This meant that if you had environment-specific bindings (for example, a KV namespace only in production or an R2 bucket only in staging), those bindings would be missing from your generated types, causing TypeScript errors when accessing them.

Now, running `wrangler types` collects bindings from all environments and includes them in the generated `Env` type. This ensures your types are complete regardless of which environment you deploy to.

If you want the previous behavior of generating types for only a specific environment, you can use the `--env` flag:

```bash
wrangler types --env production
```

Learn more about generating types for your Worker in the Wrangler documentation.

Wrangler now supports a `--check` flag for the `wrangler types` command. This flag validates that your generated types are up to date without writing any changes to disk.

This is useful in CI/CD pipelines where you want to ensure that developers have regenerated their types after making changes to their Wrangler configuration. If the types are out of date, the command will exit with a non-zero status code.

```bash
npx wrangler types --check
```

If your types are up to date, the command will succeed silently. If they are out of date, you'll see an error message indicating which files need to be regenerated.

For more information, see the Wrangler types documentation.

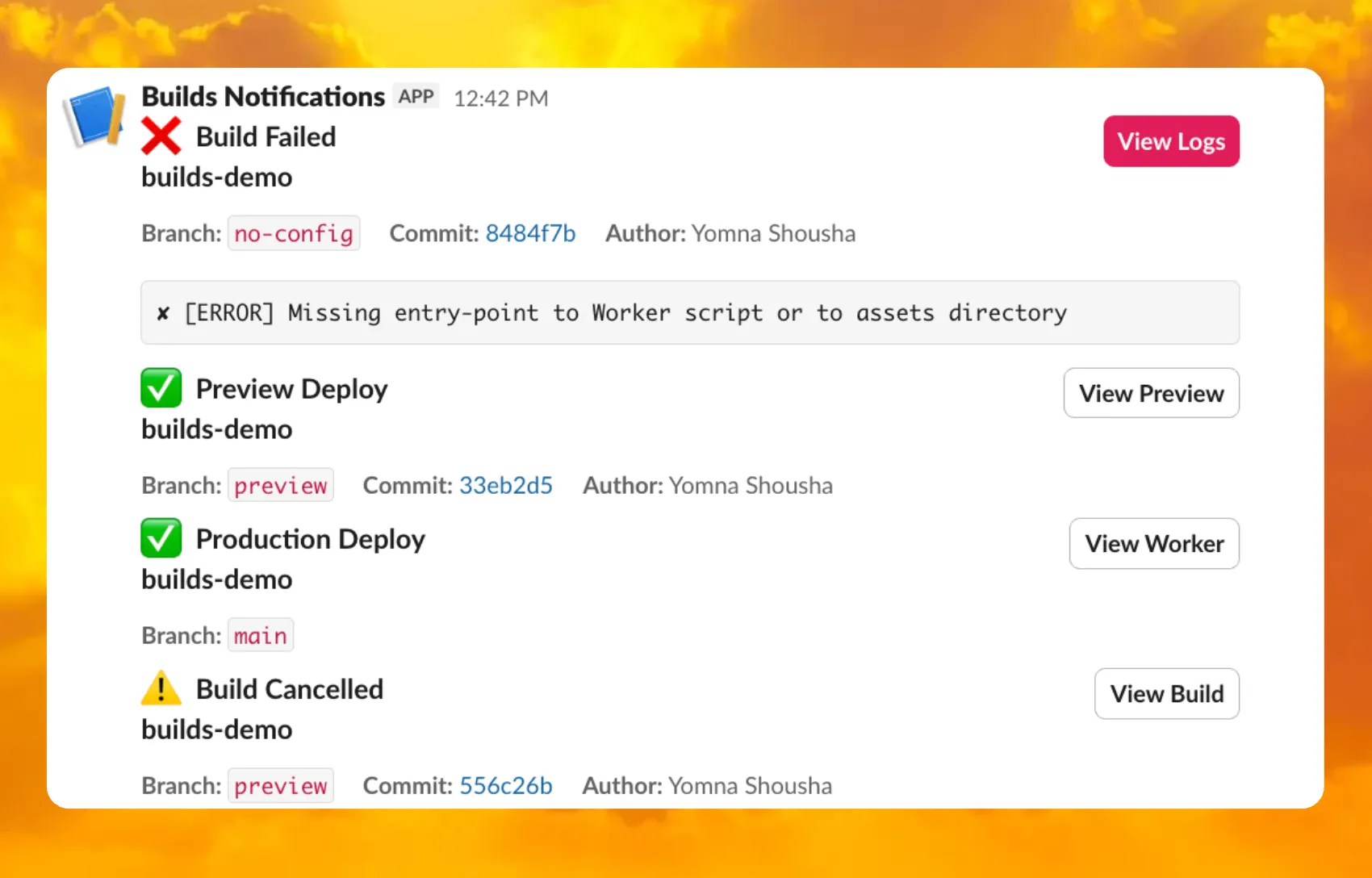

You can now receive notifications when your Workers' builds start, succeed, fail, or get cancelled using Event Subscriptions.

Workers Builds publishes events to a Queue that your Worker can read messages from, and then send notifications wherever you need — Slack, Discord, email, or any webhook endpoint.
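As a rough illustration of the consumer side, the sketch below turns a build event into a one-line notification. The `BuildEvent` shape and its field names are hypothetical stand-ins chosen for this example and do not reflect the actual Event Subscriptions payload; consult the Event Subscriptions documentation for the real schema.

```typescript
// Hypothetical event shape for illustration only.
type BuildStatus = "started" | "succeeded" | "failed" | "cancelled";

interface BuildEvent {
  status: BuildStatus;
  worker: string;
  branch: string;
  commitHash: string;
  author: string;
  error?: string; // only meaningful for failed builds
}

// Produces a short, human-readable message suitable for a chat webhook.
function formatBuildMessage(event: BuildEvent): string {
  const shortHash = event.commitHash.slice(0, 7);
  const summary =
    `${event.worker}: build ${event.status} on ${event.branch} ` +
    `(${shortHash}) by ${event.author}`;
  // Append the error text inline when a failed build provides one.
  return event.status === "failed" && event.error
    ? `${summary}\n${event.error}`
    : summary;
}
```

A queue consumer could call `formatBuildMessage` for each message in a batch and post the result to whichever webhook endpoint it is configured with.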

You can deploy this Worker ↗ to your own Cloudflare account to send build notifications to Slack:

The template includes:

- Build status with Preview/Live URLs for successful deployments

- Inline error messages for failed builds

- Branch, commit hash, and author name

For setup instructions, refer to the template README ↗ or the Event Subscriptions documentation.

Wrangler now includes built-in shell tab completion support, making it faster and easier to navigate commands without memorizing every option. Press Tab as you type to autocomplete commands, subcommands, flags, and even option values like log levels.

Tab completions are supported for Bash, Zsh, Fish, and PowerShell.

Generate the completion script for your shell and add it to your configuration file:

```bash
# Bash
wrangler complete bash >> ~/.bashrc

# Zsh
wrangler complete zsh >> ~/.zshrc

# Fish
wrangler complete fish >> ~/.config/fish/config.fish

# PowerShell
wrangler complete powershell >> $PROFILE
```

After adding the script, restart your terminal or source your configuration file for the changes to take effect. Then you can simply press Tab to see available completions:

```bash
wrangler d<TAB>    # completes to 'deploy', 'dev', 'd1', etc.
wrangler kv <TAB>  # shows subcommands: namespace, key, bulk
```

Tab completions are dynamically generated from Wrangler's command registry, so they stay up-to-date as new commands and options are added. This feature is powered by `@bomb.sh/tab` ↗.

See the `wrangler complete` documentation for more details.

You can now use the `HAVING` clause and `LIKE` pattern matching operators in Workers Analytics Engine ↗.

Workers Analytics Engine allows you to ingest and store high-cardinality data at scale and query your data through a simple SQL API.

The `HAVING` clause complements the `WHERE` clause by enabling you to filter groups based on aggregate values. While `WHERE` filters rows before aggregation, `HAVING` filters groups after aggregation is complete.

You can use `HAVING` to filter groups where the average exceeds a threshold:

```sql
SELECT
  blob1 AS probe_name,
  avg(double1) AS average_temp
FROM temperature_readings
GROUP BY probe_name
HAVING average_temp > 10
```

You can also filter groups based on aggregates such as the number of items in the group:

```sql
SELECT
  blob1 AS probe_name,
  count() AS num_readings
FROM temperature_readings
GROUP BY probe_name
HAVING num_readings > 100
```

The new pattern matching operators enable you to search for strings that match specific patterns using wildcard characters:

- `LIKE` - case-sensitive pattern matching

- `NOT LIKE` - case-sensitive pattern exclusion

- `ILIKE` - case-insensitive pattern matching

- `NOT ILIKE` - case-insensitive pattern exclusion

Pattern matching supports two wildcard characters: `%` (matches zero or more characters) and `_` (matches exactly one character).

You can match strings starting with a prefix:

```sql
SELECT *
FROM logs
WHERE blob1 LIKE 'error%'
```

You can also match file extensions (case-insensitive):

```sql
SELECT *
FROM requests
WHERE blob2 ILIKE '%.jpg'
```

Another example is excluding strings containing specific text:

```sql
SELECT *
FROM events
WHERE blob3 NOT ILIKE '%debug%'
```

Learn more about the `HAVING` clause or pattern matching operators in the Workers Analytics Engine SQL reference documentation.
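To make the wildcard semantics concrete, the sketch below translates a `LIKE` pattern into an anchored regular expression, which is handy for predicting matches locally. It is only an illustration and not how Workers Analytics Engine evaluates patterns. The helper name `likeToRegExp` is hypothetical, and escape sequences for literal `%` or `_` are not handled.

```typescript
// Converts a LIKE-style pattern to a RegExp.
//   %  -> zero or more characters
//   _  -> exactly one character
// Pass caseInsensitive = true to mimic ILIKE.
function likeToRegExp(pattern: string, caseInsensitive = false): RegExp {
  let source = "";
  for (const ch of pattern) {
    if (ch === "%") {
      source += "[\\s\\S]*";
    } else if (ch === "_") {
      source += "[\\s\\S]";
    } else {
      // Escape characters that have special meaning in a regular expression.
      source += ch.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
    }
  }
  // Anchor both ends: LIKE must match the whole string, not a substring.
  return new RegExp(`^${source}$`, caseInsensitive ? "i" : "");
}
```

For example, `likeToRegExp("error%")` accepts `error: timeout` but rejects `Error`, while `likeToRegExp("%.jpg", true)` accepts `PHOTO.JPG`. Negating the test result gives the `NOT LIKE` and `NOT ILIKE` behavior.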

Custom instance types are now enabled for all Cloudflare Containers users. You can now set exact vCPU, memory, and disk amounts, rather than being limited to pre-defined instance types. Previously, only select Enterprise customers were able to customize their instance type.

To use a custom instance type, specify the `instance_type` property as an object with `vcpu`, `memory_mib`, and `disk_mb` fields in your Wrangler configuration:

```toml
[[containers]]
image = "./Dockerfile"
instance_type = { vcpu = 2, memory_mib = 6144, disk_mb = 12000 }
```

Individual limits for custom instance types are based on the `standard-4` instance type (4 vCPU, 12 GiB memory, 20 GB disk). You must allocate at least 1 vCPU for custom instance types. For workloads requiring less than 1 vCPU, use the predefined instance types like `lite` or `basic`.

See the limits documentation for the full list of constraints on custom instance types. See the getting started guide to deploy your first Container.
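The limits stated above can be checked before deploying. The sketch below encodes only what this entry says: at least 1 vCPU, and ceilings matching `standard-4`. It assumes 12 GiB equals 12 × 1024 MiB and 20 GB equals 20 × 1000 MB. Any additional constraints listed in the limits documentation are not modeled, and `checkInstanceType` is a hypothetical helper name.

```typescript
interface CustomInstanceType {
  vcpu: number;
  memory_mib: number;
  disk_mb: number;
}

// Ceilings derived from standard-4 (4 vCPU, 12 GiB memory, 20 GB disk).
// The unit conversions are assumptions made for this sketch.
const LIMITS = {
  minVcpu: 1,
  maxVcpu: 4,
  maxMemoryMib: 12 * 1024,
  maxDiskMb: 20 * 1000,
};

// Returns a list of problems; an empty list means the values fit the stated limits.
function checkInstanceType(instance: CustomInstanceType): string[] {
  const problems: string[] = [];
  if (instance.vcpu < LIMITS.minVcpu) {
    problems.push(`vcpu must be at least ${LIMITS.minVcpu}; use a predefined type for less`);
  }
  if (instance.vcpu > LIMITS.maxVcpu) {
    problems.push(`vcpu must not exceed ${LIMITS.maxVcpu}`);
  }
  if (instance.memory_mib > LIMITS.maxMemoryMib) {
    problems.push(`memory_mib must not exceed ${LIMITS.maxMemoryMib}`);
  }
  if (instance.disk_mb > LIMITS.maxDiskMb) {
    problems.push(`disk_mb must not exceed ${LIMITS.maxDiskMb}`);
  }
  return problems;
}
```

The configuration shown earlier (`vcpu = 2`, `memory_mib = 6144`, `disk_mb = 12000`) produces no problems under this check.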

You can now deploy microfrontends to Cloudflare, splitting a single application into smaller, independently deployable units that render as one cohesive application. This lets different teams using different frameworks develop, test, and deploy each microfrontend without coordinating releases.

Microfrontends solve several challenges for large-scale applications:

- Independent deployments: Teams deploy updates on their own schedule without redeploying the entire application

- Framework flexibility: Build multi-framework applications (for example, Astro, Remix, and Next.js in one app)

- Gradual migration: Migrate from a monolith to a distributed architecture incrementally

Create a microfrontend project:

This template automatically creates a router worker with pre-configured routing logic, and lets you configure Service bindings to Workers you have already deployed to your Cloudflare account. The router Worker analyzes incoming requests, matches them against configured routes, and forwards requests to the appropriate microfrontend via service bindings. The router automatically rewrites HTML, CSS, and headers to ensure assets load correctly from each microfrontend's mount path. The router includes advanced features like preloading for faster navigation between microfrontends, smooth page transitions using the View Transitions API, and automatic path rewriting for assets, redirects, and cookies.
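The route-matching step described above can be pictured as a longest-prefix lookup from a request path to a service binding. The sketch below is one plausible way to do that and is not the template's actual implementation. The `Route` shape, the binding names, and the `matchRoute` function are hypothetical.

```typescript
// Hypothetical route entry: a mount path and the name of a service binding.
interface Route {
  mount: string;
  binding: string;
}

// Picks the route with the longest mount path that prefixes the pathname
// on a path-segment boundary, so "/docs" matches "/docs/intro" but not "/documents".
function matchRoute(pathname: string, routes: Route[]): Route | undefined {
  let best: Route | undefined;
  for (const route of routes) {
    // Normalize a trailing slash (except for the root mount).
    const mount =
      route.mount.length > 1 && route.mount.endsWith("/")
        ? route.mount.slice(0, -1)
        : route.mount;
    const matches =
      mount === "/" || pathname === mount || pathname.startsWith(mount + "/");
    if (matches && (best === undefined || route.mount.length > best.mount.length)) {
      best = route;
    }
  }
  return best;
}

const exampleRoutes: Route[] = [
  { mount: "/", binding: "ROOT" },
  { mount: "/docs", binding: "DOCS" },
  { mount: "/docs/api", binding: "API_DOCS" },
];
```

With `exampleRoutes`, a request for `/docs/api/v1` resolves to `API_DOCS`, `/docs/intro` to `DOCS`, and `/documents` falls through to `ROOT`.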

Each microfrontend can be a full-framework application, a static site with Workers Static Assets, or any other Worker-based application.

Get started with the microfrontends template ↗, or read the microfrontends documentation for implementation details.

Agents SDK v0.3.0, workers-ai-provider v3.0.0, and ai-gateway-provider v3.0.0 with AI SDK v6 support

We've shipped a new release for the Agents SDK ↗ v0.3.0 bringing full compatibility with AI SDK v6 ↗ and introducing the unified tool pattern, dynamic tool approval, and enhanced React hooks with improved tool handling.

This release includes improved streaming and tool support, dynamic tool approval (for "human in the loop" systems), enhanced React hooks with `onToolCall` callback, improved error handling for streaming responses, and seamless migration from v5 patterns.

This makes it ideal for building production AI chat interfaces with Cloudflare Workers AI models, agent workflows, human-in-the-loop systems, or any application requiring reliable tool execution and approval workflows.

Additionally, we've updated workers-ai-provider v3.0.0, the official provider for Cloudflare Workers AI models, and ai-gateway-provider v3.0.0, the provider for Cloudflare AI Gateway, to be compatible with AI SDK v6.

AI SDK v6 introduces a unified tool pattern where all tools are defined on the server using the `tool()` function. This replaces the previous client-side `AITool` pattern.

```typescript
import { tool } from "ai";
import { z } from "zod";

// Server: Define ALL tools on the server
const tools = {
  // Server-executed tool
  getWeather: tool({
    description: "Get weather for a city",
    inputSchema: z.object({ city: z.string() }),
    execute: async ({ city }) => fetchWeather(city)
  }),

  // Client-executed tool (no execute = client handles via onToolCall)
  getLocation: tool({
    description: "Get user location from browser",
    inputSchema: z.object({})
    // No execute function
  }),

  // Tool requiring approval (dynamic based on input)
  processPayment: tool({
    description: "Process a payment",
    inputSchema: z.object({ amount: z.number() }),
    needsApproval: async ({ amount }) => amount > 100,
    execute: async ({ amount }) => charge(amount)
  })
};
```

```typescript
// Client: Handle client-side tools via onToolCall callback
import { useAgentChat } from "agents/ai-react";

const { messages, sendMessage, addToolOutput } = useAgentChat({
  agent,
  onToolCall: async ({ toolCall, addToolOutput }) => {
    if (toolCall.toolName === "getLocation") {
      const position = await new Promise((resolve, reject) => {
        navigator.geolocation.getCurrentPosition(resolve, reject);
      });
      addToolOutput({
        toolCallId: toolCall.toolCallId,
        output: {
          lat: position.coords.latitude,
          lng: position.coords.longitude
        }
      });
    }
  }
});
```

Key benefits of the unified tool pattern:

- Server-defined tools: All tools are defined in one place on the server

- Dynamic approval: Use `needsApproval` to conditionally require user confirmation

- Cleaner client code: Use `onToolCall` callback instead of managing tool configs

- Type safety: Full TypeScript support with proper tool typing
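One way to reason about the three kinds of tool described above is as a small decision function over a tool definition. The sketch below is a dependency-free illustration and not the AI SDK's implementation. The `ToolDefinition` interface, the `Handling` labels, and the order of the checks are assumptions made for this example.

```typescript
// Simplified stand-in for a server-side tool definition (hypothetical shape).
interface ToolDefinition<Input> {
  description: string;
  needsApproval?: (input: Input) => boolean | Promise<boolean>;
  execute?: (input: Input) => unknown;
}

type Handling = "delegate-to-client" | "await-approval" | "run-on-server";

// Decides how a single tool call would be handled under the assumed rules:
// no execute function means the client handles it; otherwise approval is
// evaluated against the actual input before the server runs the tool.
async function classifyToolCall<Input>(
  tool: ToolDefinition<Input>,
  input: Input,
): Promise<Handling> {
  if (tool.execute === undefined) return "delegate-to-client";
  if (tool.needsApproval !== undefined && (await tool.needsApproval(input))) {
    return "await-approval";
  }
  return "run-on-server";
}

const processPayment: ToolDefinition<{ amount: number }> = {
  description: "Process a payment",
  needsApproval: async ({ amount }) => amount > 100,
  execute: async ({ amount }) => `charged ${amount}`,
};

const getLocation: ToolDefinition<Record<string, never>> = {
  description: "Get user location from browser",
};
```

Here a payment of 50 would run on the server, a payment of 500 would wait for approval, and `getLocation` would be handed to the client because it has no `execute` function.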

Creates a new chat interface with enhanced v6 capabilities.

```typescript
// Basic chat setup with onToolCall
const { messages, sendMessage, addToolOutput } = useAgentChat({
  agent,
  onToolCall: async ({ toolCall, addToolOutput }) => {
    // Handle client-side tool execution
    await addToolOutput({
      toolCallId: toolCall.toolCallId,
      output: { result: "success" }
    });
  }
});
```

Use `needsApproval` on server tools to conditionally require user confirmation:

```typescript
const paymentTool = tool({
  description: "Process a payment",
  inputSchema: z.object({
    amount: z.number(),
    recipient: z.string()
  }),
  needsApproval: async ({ amount }) => amount > 1000,
  execute: async ({ amount, recipient }) => {
    return await processPayment(amount, recipient);
  }
});
```

The `isToolUIPart` and `getToolName` functions now check both static and dynamic tool parts:

```typescript
import { isToolUIPart, getToolName } from "ai";

// Find the first tool part that is still waiting on confirmation
const pendingPart = messages
  .flatMap((m) => m.parts ?? [])
  .filter(isToolUIPart)
  .find((part) => part.state === "input-available");

// Handle tool confirmation
if (pendingPart) {
  await addToolOutput({
    toolCallId: pendingPart.toolCallId,
    output: "User approved the action"
  });
}
```

If you need the v5 behavior (static-only checks), use the new functions:

```typescript
import { isStaticToolUIPart, getStaticToolName } from "ai";
```

The `convertToModelMessages()` function is now asynchronous. Update all calls to await the result:

```typescript
import { convertToModelMessages } from "ai";

const result = streamText({
  messages: await convertToModelMessages(this.messages),
  model: openai("gpt-4o")
});
```

The `CoreMessage` type has been removed. Use `ModelMessage` instead:

```typescript
import { convertToModelMessages, type ModelMessage } from "ai";

const modelMessages: ModelMessage[] = await convertToModelMessages(messages);
```

The `mode` option for `generateObject` has been removed:

```typescript
// Before (v5)
const result = await generateObject({
  mode: "json",
  model,
  schema,
  prompt
});

// After (v6)
const result = await generateObject({
  model,
  schema,
  prompt
});
```

While `generateObject` and `streamObject` are still functional, the recommended approach is to use `generateText`/`streamText` with the `Output.object()` helper:

```typescript
import { generateText, Output, stepCountIs } from "ai";

const { output } = await generateText({
  model: openai("gpt-4"),
  output: Output.object({
    schema: z.object({ name: z.string() })
  }),
  stopWhen: stepCountIs(2),
  prompt: "Generate a name"
});
```

Note: When using structured output with `generateText`, you must configure multiple steps with `stopWhen` because generating the structured output is itself a step.

Seamless integration with Cloudflare Workers AI models through the updated workers-ai-provider v3.0.0 with AI SDK v6 support.

Use Cloudflare Workers AI models directly in your agent workflows:

```typescript
import { createWorkersAI } from "workers-ai-provider";
import { useAgentChat } from "agents/ai-react";

// Create Workers AI model (v3.0.0 - enhanced v6 internals)
const model = createWorkersAI({
  binding: env.AI,
})("@cf/meta/llama-3.2-3b-instruct");
```

Workers AI models now support v6 file handling with automatic conversion:

```typescript
// Send images and files to Workers AI models
sendMessage({
  role: "user",
  parts: [
    { type: "text", text: "Analyze this image:" },
    {
      type: "file",
      data: imageBuffer,
      mediaType: "image/jpeg",
    },
  ],
});
// Workers AI provider automatically converts to proper format
```

Enhanced streaming support with automatic warning detection:

```typescript
// Streaming with Workers AI models
const result = await streamText({
  model: createWorkersAI({ binding: env.AI })("@cf/meta/llama-3.2-3b-instruct"),
  messages: await convertToModelMessages(messages),
  onChunk: (chunk) => {
    // Enhanced streaming with warning handling
    console.log(chunk);
  },
});
```

The ai-gateway-provider v3.0.0 now supports AI SDK v6, enabling you to use Cloudflare AI Gateway with multiple AI providers including Anthropic, Azure, AWS Bedrock, Google Vertex, and Perplexity.

Use Cloudflare AI Gateway to add analytics, caching, and rate limiting to your AI applications:

```typescript
import { createAIGateway } from "ai-gateway-provider";

// Create AI Gateway provider (v3.0.0 - enhanced v6 internals)
const model = createAIGateway({
  gatewayUrl: "https://gateway.ai.cloudflare.com/v1/your-account-id/gateway",
  headers: {
    "Authorization": `Bearer ${env.AI_GATEWAY_TOKEN}`
  }
})({
  provider: "openai",
  model: "gpt-4o"
});
```

The following APIs are deprecated in favor of the unified tool pattern:

| Deprecated | Replacement |
| --- | --- |
| `AITool` type | Use AI SDK's `tool()` function on server |
| `extractClientToolSchemas()` | Define tools on server, no client schemas needed |
| `createToolsFromClientSchemas()` | Define tools on server with `tool()` |
| `toolsRequiringConfirmation` option | Use `needsApproval` on server tools |
| `experimental_automaticToolResolution` | Use `onToolCall` callback |
| `tools` option in `useAgentChat` | Use `onToolCall` for client-side execution |
| `addToolResult()` | Use `addToolOutput()` |

- Unified Tool Pattern: All tools must be defined on the server using `tool()`

- `convertToModelMessages()` is async: Add `await` to all calls

- `CoreMessage` removed: Use `ModelMessage` instead

- `generateObject` mode removed: Remove `mode` option

- `isToolUIPart` behavior changed: Now checks both static and dynamic tool parts

Update your dependencies to use the latest versions:

```bash
npm install agents@^0.3.0 workers-ai-provider@^3.0.0 ai-gateway-provider@^3.0.0 ai@^6.0.0 @ai-sdk/react@^3.0.0 @ai-sdk/openai@^3.0.0
```

- Migration Guide ↗ - Comprehensive migration documentation from v5 to v6

- AI SDK v6 Documentation ↗ - Official AI SDK migration guide

- AI SDK v6 Announcement ↗ - Learn about new features in v6

- AI SDK Documentation ↗ - Complete AI SDK reference

- GitHub Issues ↗ - Report bugs or request features

We'd love your feedback! We're particularly interested in feedback on:

- Migration experience - How smooth was the upgrade from v5 to v6?

- Unified tool pattern - How does the new server-defined tool pattern work for you?

- Dynamic tool approval - Does the `needsApproval` feature meet your needs?

- AI Gateway integration - How well does the new provider work with your setup?