Changelog

New updates and improvements at Cloudflare.

You can now receive event notifications for Artifacts repository changes and consume them from a Worker to build commit-driven automation.

This allows you to:

- Run custom workflows when a repository is created or imported

- Kick off a build and deploy a change when an agent pushes to a repo

- Trigger a review agent on every push

Available events include:

- Account-level events (`artifacts` source): `repo.created`, `repo.deleted`, `repo.forked`, `repo.imported`
- Repository-level events (`artifacts.repo` source): `pushed`, `cloned`, `fetched`

To learn more, refer to Artifacts documentation.
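The event payload format is not shown here, so the following TypeScript sketch assumes a hypothetical `ArtifactsEvent` shape (a `source`, a `type`, and a `repo` name) and hypothetical action names. It only illustrates how a consuming Worker might map the events above to the automations listed earlier; check the Artifacts documentation for the actual schema and delivery mechanism.

```ts
// Hypothetical event shape: the real Artifacts event payload may differ.
interface ArtifactsEvent {
  source: "artifacts" | "artifacts.repo";
  type: string; // e.g. "repo.created" or "pushed"
  repo: string;
}

// Hypothetical follow-up actions your automation might take.
type Action = "bootstrap-repo" | "build-and-deploy" | "run-review-agent" | "ignore";

// Map each event to the automation it should trigger. Unknown combinations
// fall through to "ignore" so new event types do not break the consumer.
function actionsFor(event: ArtifactsEvent): Action[] {
  if (event.source === "artifacts") {
    switch (event.type) {
      case "repo.created":
      case "repo.imported":
        return ["bootstrap-repo"];
      default:
        return ["ignore"];
    }
  }
  if (event.source === "artifacts.repo" && event.type === "pushed") {
    // A push both kicks off a build/deploy and triggers a review agent.
    return ["build-and-deploy", "run-review-agent"];
  }
  return ["ignore"];
}
```

Returning a list of actions rather than a single one keeps it easy to fan one push out to several independent workflows.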

You can now manage Artifacts namespaces, repos, and repo-scoped tokens directly from Wrangler CLI.

Available commands:

- `wrangler artifacts namespaces list`: List Artifacts namespaces in your account.
- `wrangler artifacts namespaces get`: Get metadata for a namespace.
- `wrangler artifacts repos create`: Create a repo in a namespace.
- `wrangler artifacts repos list`: List repos in a namespace.
- `wrangler artifacts repos get`: Get metadata for a repo.
- `wrangler artifacts repos delete`: Delete a repo.
- `wrangler artifacts repos issue-token`: Issue a repo-scoped token for Git access.

To get started, refer to the Wrangler Artifacts commands documentation.

You can now share local dev sessions through Cloudflare Tunnel and get a public URL when using either Wrangler or the Cloudflare Vite plugin. This is useful when you need to share a preview, test a webhook, or access your app from another device.

This lets you either:

- start a temporary Quick tunnel with a random `*.trycloudflare.com` hostname, or
- use an existing named tunnel for a stable hostname and to restrict access with Cloudflare Access.

To start a tunnel, press `t` in Wrangler or `t + Enter` in Vite while your dev server is running. For details on setting up a named tunnel, refer to Share a local dev server.

R2 SQL is Cloudflare's serverless, distributed SQL engine for querying Apache Iceberg tables stored in R2 Data Catalog. R2 SQL runs directly on Cloudflare's global network with no infrastructure to manage, so you can analyze data in R2 without exporting it to an external warehouse.

R2 SQL now supports joining multiple Iceberg tables in a single query. You can combine tables with JOINs, filter with subqueries, and define multi-table CTEs to build complex analytical queries.

- JOINs: `INNER JOIN`, `LEFT JOIN`, `RIGHT JOIN`, `FULL OUTER JOIN`, `CROSS JOIN`, and implicit joins (comma-separated `FROM` with conditions in `WHERE`)
- Subqueries: `IN`/`NOT IN`, `EXISTS`/`NOT EXISTS`, scalar subqueries in `SELECT`/`WHERE`/`HAVING`, and derived tables (subqueries in `FROM`)
- Multi-table CTEs: `WITH` clauses can reference different tables and include JOINs
- Self-joins: join a table with itself using different aliases
- Multi-way joins: join three or more tables in a single query

Join two tables:

```sql
SELECT z.domain, z.plan, COUNT(*) AS request_count
FROM my_namespace.zones z
INNER JOIN my_namespace.http_requests h ON z.zone_id = h.zone_id
WHERE z.plan = 'enterprise'
GROUP BY z.domain, z.plan
ORDER BY request_count DESC
LIMIT 20
```

Filter with an `EXISTS` subquery:

```sql
SELECT z.domain, z.plan
FROM my_namespace.zones z
WHERE EXISTS (
  SELECT 1 FROM my_namespace.firewall_events f
  WHERE f.zone_id = z.zone_id AND f.action = 'block'
)
ORDER BY z.domain
LIMIT 20
```

Combine multi-table CTEs:

```sql
WITH top_zones AS (
  SELECT zone_id, COUNT(*) AS req_count
  FROM my_namespace.http_requests
  GROUP BY zone_id
  ORDER BY req_count DESC
  LIMIT 50
),
zone_threats AS (
  SELECT zone_id, COUNT(*) AS threat_count
  FROM my_namespace.firewall_events
  WHERE risk_score > 0.5
  GROUP BY zone_id
)
SELECT tz.zone_id, tz.req_count, COALESCE(zt.threat_count, 0) AS threat_count
FROM top_zones tz
LEFT JOIN zone_threats zt ON tz.zone_id = zt.zone_id
ORDER BY tz.req_count DESC
LIMIT 20
```

For the full syntax reference, refer to the SQL reference. For performance guidance with joins, refer to Limitations and best practices.
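To see what the `LEFT JOIN` and `COALESCE` contribute in the CTE query over `top_zones` and `zone_threats`, here is a small in-memory TypeScript model of the same logic. It uses made-up rows and models only the query's semantics, not how R2 SQL executes joins: zones with no matching threat row are kept with a `threat_count` of 0, where an `INNER JOIN` would drop them.

```ts
interface TopZone { zone_id: string; req_count: number; }
interface ZoneThreat { zone_id: string; threat_count: number; }
interface Row { zone_id: string; req_count: number; threat_count: number; }

// LEFT JOIN top_zones tz WITH zone_threats zt ON tz.zone_id = zt.zone_id,
// then COALESCE(zt.threat_count, 0), ORDER BY req_count DESC, LIMIT n.
function leftJoinThreats(topZones: TopZone[], threats: ZoneThreat[], limit: number): Row[] {
  // zone_threats is grouped by zone_id, so each zone has at most one row
  // and a lookup map is equivalent to the join.
  const byZone = new Map<string, number>();
  for (const t of threats) byZone.set(t.zone_id, t.threat_count);

  return topZones
    .map((tz) => ({
      zone_id: tz.zone_id,
      req_count: tz.req_count,
      threat_count: byZone.get(tz.zone_id) ?? 0, // COALESCE(..., 0)
    }))
    .sort((a, b) => b.req_count - a.req_count)
    .slice(0, limit);
}
```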


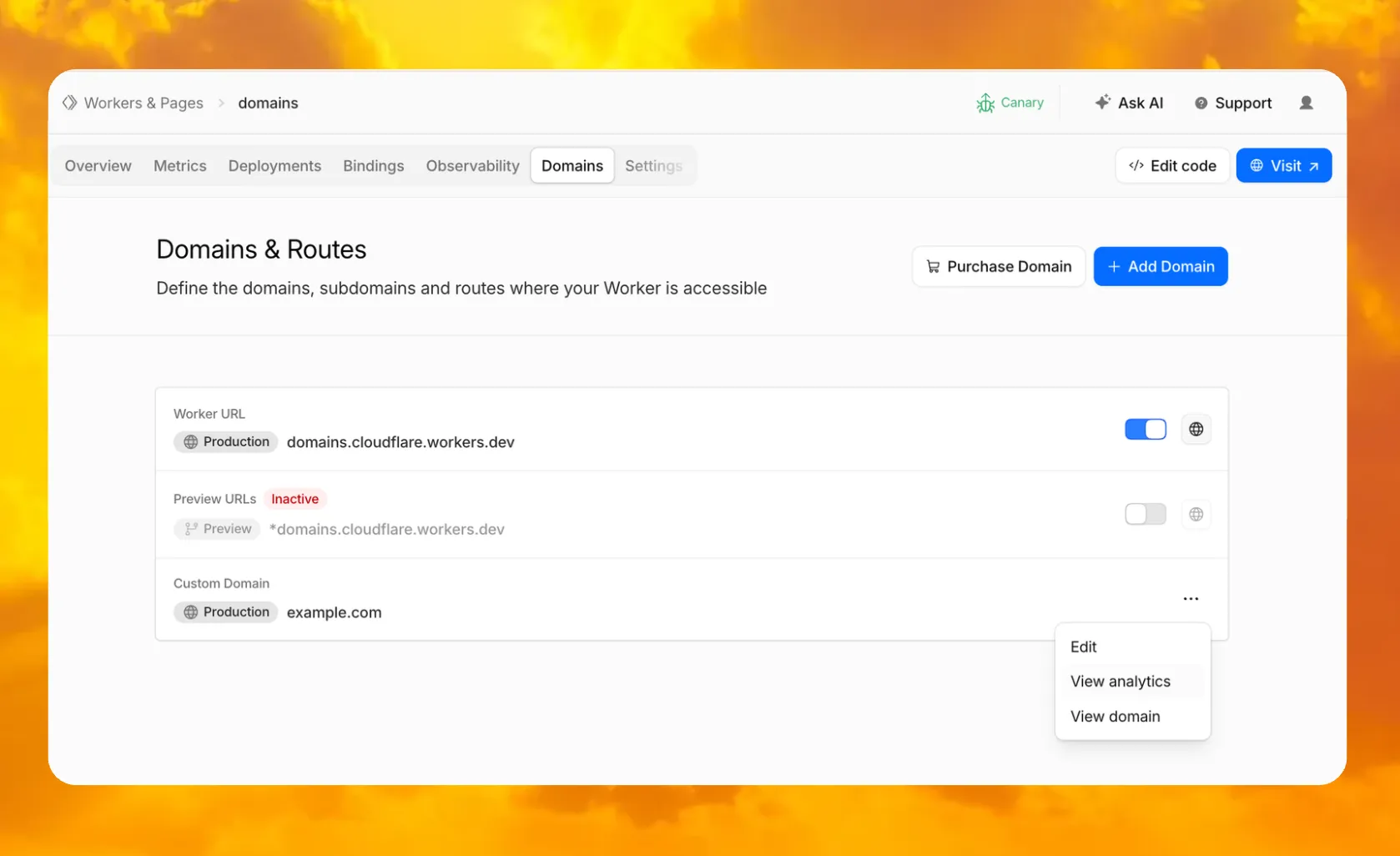

In your Worker's dashboard, there is now a dedicated Domains tab where you can purchase a new domain through Cloudflare Registrar and have it automatically connected, add an existing domain, and manage all of your Worker's routing in one place.

You can also enable or disable your `workers.dev` subdomain and Preview URLs, put them behind Cloudflare Access to require sign-in, and jump directly to analytics or domain overview for any connected domain.

To get started, go to Workers & Pages, select a Worker, and open the Domains tab.


The latest release of the Agents SDK brings more reliable chat recovery, fixes Agent state synchronization during reconnects, adds durable submissions for Think, exposes routing retry configuration, and adds connection control for Voice agents.

`@cloudflare/ai-chat` now keeps server turns running when a browser or client stream is interrupted. This is useful for long-running AI responses where users refresh the page, close a tab, or temporarily lose connection. Calling `stop()` still cancels the server turn.

Set `cancelOnClientAbort: true` if browser or client aborts should also cancel the server turn:

```ts
const chat = useAgentChat({
  agent: "assistant",
  name: "user-123",
  cancelOnClientAbort: true,
});
```

Notable bug fixes:

- Chat stream resume negotiation no longer throws when replay races with a closed WebSocket connection.
- Recovered chat continuations no longer leave `useAgentChat` stuck in a streaming state when the original socket disconnects before a terminal response.
- Approval auto-continuation preserves reasoning parts and persists continuation reasoning in the final message.
- `isServerStreaming` now resets correctly when a resumed stream moves from the fallback observer path to a transport-owned stream.

`agents@0.12.4` prevents duplicate initial state frames during WebSocket connection setup. This avoids stale initial state messages overwriting state updates already sent by the client.

Agent recovery is also more reliable when tool calls span a Durable Object restart. Recovery now defers user finish hooks until after agent startup and isolates hook failures, so one failed hook does not block other recovered runs from finalizing.

`getAgentByName()` now supports `routingRetry` for transient Durable Object routing failures:

```ts
import { getAgentByName } from "agents";

const agent = await getAgentByName(env.AssistantAgent, "user-123", {
  routingRetry: {
    maxAttempts: 3,
  },
});
```

`@cloudflare/think` now supports durable programmatic submissions. `submitMessages()` provides durable acceptance, idempotent retries, status inspection, cancellation, and cleanup for server-driven turns that should continue after the caller returns.

`Think.chat()` RPC turns now run inside chat recovery fibers and persist their stream chunks. Interrupted sub-agent turns can recover partial output instead of starting over.

`ChatOptions.tools` has been removed from the TypeScript API. Define durable tools on the child agent or use agent tools for orchestration. Runtime `options.tools` values passed by legacy callers are ignored with a warning.

`@cloudflare/think` no longer applies `pruneMessages({ toolCalls: "before-last-2-messages" })` to model context by default. The previous default could strip client-side tool results from longer multi-turn flows. `truncateOlderMessages` still runs as before, so context cost remains bounded. Subclasses that relied on the old aggressive pruning can opt back in from `beforeTurn`:

```ts
import { Think } from "@cloudflare/think";
import { pruneMessages } from "ai";

export class MyAgent extends Think<Env> {
  beforeTurn(ctx) {
    return {
      messages: pruneMessages({
        messages: ctx.messages,
        toolCalls: "before-last-2-messages",
      }),
    };
  }
}
```

`@cloudflare/voice` adds an `enabled` option to `useVoiceAgent`. React apps can now delay creating and connecting a `VoiceClient` until prerequisites such as capability tokens are ready:

```ts
const voice = useVoiceAgent({
  agent: "MyVoiceAgent",
  enabled: Boolean(token),
});
```

This release also fixes Workers AI speech-to-text session edge cases and `withVoice` text streaming from AI SDK `textStream` responses.

- Streamable HTTP routing: Server-to-client requests now route through the originating POST stream when no standalone SSE stream is available.
- Structured tool output: Tool output shapes are preserved when truncating older messages or oversized persisted rows.
- Non-chat Think tool steps: Think agent-tool children can complete without emitting assistant text and can return structured output through `getAgentToolOutput`.
- Sub-agent schedules: Stale sub-agent schedule rows are pruned when their owning facet registry entry no longer exists.

- `@cloudflare/codemode`: Adds a browser-safe export with an iframe sandbox executor and resolves OpenAPI specs inside the sandbox to avoid Worker Loader RPC size limits.

To update to the latest version:

```sh
npm i agents@latest @cloudflare/ai-chat@latest @cloudflare/think@latest @cloudflare/voice@latest
```

Refer to the Agents API reference and Chat agents documentation for more information.
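The durable-submission behavior described for `submitMessages()` rests on idempotency: retrying a submission with the same identifier must not start a second turn. The in-memory TypeScript sketch below illustrates only that idea. The `SubmissionStore` class, its methods, and its status values are hypothetical and are not the `@cloudflare/think` API; a real implementation would persist records durably (for example in Durable Object storage) rather than in a `Map`.

```ts
// Hypothetical status values; not the @cloudflare/think API.
type SubmissionStatus = "accepted" | "completed" | "cancelled";

interface Submission {
  id: string;
  messages: string[];
  status: SubmissionStatus;
}

// In-memory stand-in for durable storage.
class SubmissionStore {
  private records = new Map<string, Submission>();

  // Acceptance + idempotent retries: the first call with an id records the
  // submission; a retry with the same id returns the existing record instead
  // of starting a second turn.
  submit(id: string, messages: string[]): { submission: Submission; duplicate: boolean } {
    const existing = this.records.get(id);
    if (existing) return { submission: existing, duplicate: true };
    const submission: Submission = { id, messages: messages.slice(), status: "accepted" };
    this.records.set(id, submission);
    return { submission, duplicate: false };
  }

  // Status inspection.
  status(id: string): SubmissionStatus | undefined {
    return this.records.get(id)?.status;
  }

  // Called when the server-driven turn finishes.
  complete(id: string): void {
    const record = this.records.get(id);
    if (record && record.status === "accepted") record.status = "completed";
  }

  // Cancellation only applies to turns that have not finished.
  cancel(id: string): boolean {
    const record = this.records.get(id);
    if (!record || record.status !== "accepted") return false;
    record.status = "cancelled";
    return true;
  }

  // Cleanup drops finished records and returns how many were removed.
  cleanup(): number {
    let removed = 0;
    this.records.forEach((record, id) => {
      if (record.status !== "accepted") {
        this.records.delete(id);
        removed++;
      }
    });
    return removed;
  }
}
```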

The `/cdn-cgi/rum` beacon endpoint now returns `405 Method Not Allowed` for non-`POST` requests instead of `404 Not Found`. The response includes an `Allow: POST, OPTIONS` header per RFC 9110 §15.5.6.

Previously, sending a `GET` or other non-`POST` request to this endpoint returned a `404`, which was misleading because it suggested the endpoint did not exist. The new `405` response clearly indicates that the endpoint exists but only accepts `POST` requests.

The Web Analytics beacon (`beacon.min.js`) already uses `POST` for all metric submissions, so this change does not affect normal beacon operation. `OPTIONS` requests for CORS preflight continue to work as before.

For more information, refer to the Web Analytics FAQ.
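The new behavior follows the standard pattern for a `405` response, which RFC 9110 requires to carry an `Allow` header listing the supported methods. As a sketch of that pattern (not Cloudflare's implementation of the beacon endpoint), a handler written against the standard `Request`/`Response` APIs could look like this; the `204` returned for allowed methods is a placeholder assumption.

```ts
const ALLOWED_METHODS = ["POST", "OPTIONS"];

// Reject anything other than POST/OPTIONS with 405 and an Allow header,
// instead of a misleading 404.
function handleBeacon(request: Request): Response {
  if (!ALLOWED_METHODS.includes(request.method)) {
    return new Response("Method Not Allowed", {
      status: 405,
      headers: { Allow: ALLOWED_METHODS.join(", ") },
    });
  }
  // Placeholder: a real endpoint would answer the CORS preflight for OPTIONS
  // and record the metrics payload for POST.
  return new Response(null, { status: 204 });
}
```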

SSH through Wrangler is now enabled by default for Containers. Previously, you had to set `ssh.enabled` to `true` in your Container configuration before you could connect.

This change does not expose any publicly accessible ports on your Container. The SSH service is reachable only through `wrangler containers ssh`, which authenticates against your Cloudflare account. You also need to add an `ssh-ed25519` public key to `authorized_keys` before anyone can connect, so enabling SSH alone does not grant access.

To connect, add a public key to your Container configuration and run `wrangler containers ssh <INSTANCE_ID>`:

```jsonc
{
  "containers": [
    {
      "authorized_keys": [
        {
          "name": "<NAME>",
          "public_key": "<YOUR_PUBLIC_KEY_HERE>",
        },
      ],
    },
  ],
}
```

```toml
[[containers]]

[[containers.authorized_keys]]
name = "<NAME>"
public_key = "<YOUR_PUBLIC_KEY_HERE>"
```

To disable SSH, set `ssh.enabled` to `false` in your Container configuration:

```jsonc
{
  "containers": [
    {
      "ssh": {
        "enabled": false,
      },
    },
  ],
}
```

```toml
[[containers]]

[containers.ssh]
enabled = false
```

For more information, refer to the SSH documentation.
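Because connections require an `ssh-ed25519` key, it can help to sanity-check a key before adding it to `authorized_keys`. The helper below is a sketch, not part of Wrangler: it checks the OpenSSH public-key line format (`ssh-ed25519 <base64> [comment]`) and that the base64 blob decodes to the SSH wire encoding of an Ed25519 key from RFC 8709 (a length-prefixed `ssh-ed25519` string followed by a length-prefixed 32-byte key). It assumes a Node.js runtime for `Buffer`.

```ts
// Parse an OpenSSH public key line and confirm it is a well-formed Ed25519 key.
function isValidEd25519PublicKey(line: string): boolean {
  const parts = line.trim().split(/\s+/);
  if (parts.length < 2 || parts[0] !== "ssh-ed25519") return false;

  // Reject anything that is not strict base64 before decoding.
  if (!/^[A-Za-z0-9+/]+={0,2}$/.test(parts[1])) return false;
  const blob = Buffer.from(parts[1], "base64");

  // Wire format: uint32 length (11) + "ssh-ed25519" + uint32 length (32) + key.
  if (blob.length !== 4 + 11 + 4 + 32) return false;
  if (blob.readUInt32BE(0) !== 11) return false;
  if (blob.toString("ascii", 4, 15) !== "ssh-ed25519") return false;
  return blob.readUInt32BE(15) === 32;
}
```

The check is structural only: it confirms the key is shaped like an Ed25519 public key, not that it belongs to the person adding it.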

R2 Data Catalog is a managed Apache Iceberg data catalog built directly into your R2 bucket that allows you to connect query engines like R2 SQL, Spark, Snowflake, and DuckDB to your data in R2.

You can now query analytics for your R2 Data Catalog warehouses via Cloudflare's GraphQL Analytics API. Two new datasets are available:

- `r2CatalogDataOperationsAdaptiveGroups` tracks Iceberg REST API requests made to your catalog, including operation type, request duration, HTTP status, and request body bytes. Use this to monitor request volume and latency across warehouses, namespaces, and tables.
- `r2CatalogTableMaintenanceAdaptiveGroups` tracks table maintenance jobs such as compaction and snapshot expiration. Use this to monitor job success rates, files processed, bytes read and written, and job duration.

Both datasets support filtering by warehouse name, namespace, table name, and time range. They also include percentile aggregations for duration metrics.

For detailed schema information and example queries, refer to the R2 Data Catalog metrics and analytics documentation.

We are refreshing the Workers AI model catalog to make room for newer releases. Please update your apps to remove references to the models listed below before the deprecation date.

Newer models available in the catalog include:

- `@cf/zai-org/glm-4.7-flash`: fast multilingual model with multi-turn tool calling and coding capabilities.
- `@cf/google/gemma-4-26b-a4b-it`: efficient open model with vision and tool calling.
- `@cf/moonshotai/kimi-k2.6`: capable tool-calling and vision model for agentic workloads and coding.

For pricing, refer to the Workers AI pricing page.

We originally stated Kimi K2.5 would be deprecated on May 10, 2026; however, we have extended the deprecation date to May 30, 2026. On May 30, 2026, requests will be automatically aliased to Kimi K2.6, which has a higher price. Please review the `@cf/moonshotai/kimi-k2.6` pricing and model capabilities prior to May 30, 2026 to ensure that the model suits your needs.

Models being deprecated:

- `@cf/moonshotai/kimi-k2.5` (aliased to `@cf/moonshotai/kimi-k2.6`)
- `@hf/meta-llama/meta-llama-3-8b-instruct`
- `@cf/meta/llama-3-8b-instruct`
- `@cf/meta/llama-3-8b-instruct-awq`
- `@cf/meta/llama-3.1-8b-instruct`
- `@cf/meta/llama-3.1-8b-instruct-awq`
- `@cf/meta/llama-3.1-70b-instruct`
- `@cf/meta/llama-2-7b-chat-int8`
- `@cf/meta/llama-2-7b-chat-fp16`
- `@cf/mistral/mistral-7b-instruct-v0.1`
- `@hf/mistral/mistral-7b-instruct-v0.2`
- `@hf/google/gemma-7b-it`
- `@cf/google/gemma-3-12b-it`
- `@hf/nousresearch/hermes-2-pro-mistral-7b`
- `@cf/microsoft/phi-2`
- `@cf/defog/sqlcoder-7b-2`
- `@cf/unum/uform-gen2-qwen-500m`
- `@cf/facebook/bart-large-cnn`

The `-fast` and `-lora` variants of models will remain active, including:

- `@cf/meta/llama-3.3-70b-instruct-fp8-fast`
- `@cf/meta/llama-3.1-8b-instruct-fast`
- `@cf/google/gemma-7b-it-lora`
- `@cf/google/gemma-2b-it-lora`
- `@cf/mistral/mistral-7b-instruct-v0.2-lora`
- `@cf/meta-llama/llama-2-7b-chat-hf-lora`

LoRA models may be deprecated in the future. We will be adding more LoRA capabilities to the catalog, and will communicate when new LoRA models come online to give users time to train new LoRAs before we deprecate old ones.

For the full list of available models, refer to the Workers AI model catalog.
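To find remaining references before the deprecation date, you could scan your source for the deprecated model IDs. A minimal TypeScript sketch follows; the function name is hypothetical. Matching must be exact: a plain substring search would report `@cf/meta/llama-3.1-8b-instruct-fast`, which remains active, as a use of the deprecated `@cf/meta/llama-3.1-8b-instruct`, so each ID must not be followed by another ID character.

```ts
// Deprecated model IDs from the list above. kimi-k2.5 is aliased to
// kimi-k2.6 rather than removed, but references are still worth updating.
const DEPRECATED_MODELS: readonly string[] = [
  "@cf/moonshotai/kimi-k2.5",
  "@hf/meta-llama/meta-llama-3-8b-instruct",
  "@cf/meta/llama-3-8b-instruct",
  "@cf/meta/llama-3-8b-instruct-awq",
  "@cf/meta/llama-3.1-8b-instruct",
  "@cf/meta/llama-3.1-8b-instruct-awq",
  "@cf/meta/llama-3.1-70b-instruct",
  "@cf/meta/llama-2-7b-chat-int8",
  "@cf/meta/llama-2-7b-chat-fp16",
  "@cf/mistral/mistral-7b-instruct-v0.1",
  "@hf/mistral/mistral-7b-instruct-v0.2",
  "@hf/google/gemma-7b-it",
  "@cf/google/gemma-3-12b-it",
  "@hf/nousresearch/hermes-2-pro-mistral-7b",
  "@cf/microsoft/phi-2",
  "@cf/defog/sqlcoder-7b-2",
  "@cf/unum/uform-gen2-qwen-500m",
  "@cf/facebook/bart-large-cnn",
];

function escapeRegExp(text: string): string {
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Return each deprecated ID that appears in `source` as a whole ID.
function findDeprecatedModels(source: string): string[] {
  const found: string[] = [];
  for (const id of DEPRECATED_MODELS) {
    // The negative lookahead stops "@cf/meta/llama-3.1-8b-instruct" from
    // matching inside "@cf/meta/llama-3.1-8b-instruct-fast", which stays active.
    const pattern = new RegExp(escapeRegExp(id) + "(?![\\w.-])");
    if (pattern.test(source)) found.push(id);
  }
  return found;
}
```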

Multiple security vulnerabilities were disclosed by the React team and Vercel affecting React Server Components and Next.js. These include denial of service, middleware and proxy bypass, server-side request forgery, cross-site scripting, and cache poisoning issues across a range of severity levels.

We strongly recommend updating your application and its dependencies immediately. Patched versions are available for React (`react-server-dom-webpack`, `react-server-dom-parcel`, and `react-server-dom-turbopack` `19.0.6`, `19.1.7`, and `19.2.6`) and Next.js (`15.5.16` and `16.2.5`).

Cloudflare WAF rules deployed in response to prior React Server Component CVEs (CVE-2025-55184 and CVE-2026-23864) already provide coverage for the newly disclosed denial-of-service vulnerabilities. These rules are enabled by default with a Block action for all customers using the Cloudflare Managed Ruleset, including Free plan customers using the Free Managed Ruleset.

| Ruleset | Rule description | Rule ID | Default action |
| --- | --- | --- | --- |
| Cloudflare Managed Ruleset | React - DoS - CVE-2025-55184 | `2694f1610c0b471393b21aef102ec699` | Block |
| Cloudflare Managed Ruleset | React - DoS - CVE-2026-23864 | `aaede80b4d414dc89c443cea61680354` | Block |

The existing rules detect the underlying attack patterns generically. As a result, they apply to the new CVE-2026-23870 denial-of-service vulnerability in Server Components and the corresponding Next.js advisory GHSA-8h8q-6873-q5fj.

Cloudflare is investigating whether WAF rules can be safely and effectively deployed for three of the high-severity advisories: CVE-2026-23870 / GHSA-8h8q-6873-q5fj, GHSA-267c-6grr-h53f, and GHSA-mg66-mrh9-m8jx. If it is possible to create a managed WAF rule that mitigates these CVEs and does not potentially break application behavior, Cloudflare will add additional managed WAF rules. These rules will be announced through the WAF changelog. Because these vulnerabilities were shared with Cloudflare with minimal advance notice, we are still investigating what WAF mitigations are possible.

Several of the disclosed vulnerabilities are not possible to block in WAF. We strongly recommend updating your applications so they are not purely reliant on WAF mitigations.

Customers on Pro, Business, or Enterprise plans should ensure that Managed Rules are enabled.
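To check a version from your lockfile against the patched releases listed above, a minimal TypeScript sketch follows. It assumes each listed version is the minimum fixed release on its own `major.minor` line (for example, `19.1.7` covers `19.1.x`) and reports versions on any other line, as well as ranges and pre-releases, as `unknown` rather than guessing. Confirm the exact affected ranges in the React and Next.js advisories.

```ts
type PatchStatus = "patched" | "unpatched" | "unknown";

// Minimum fixed version on each release line, as listed in this advisory.
const REACT_SERVER_DOM_FLOORS = ["19.0.6", "19.1.7", "19.2.6"];
const NEXT_FLOORS = ["15.5.16", "16.2.5"];

// Accept only plain MAJOR.MINOR.PATCH; ranges, tags, and pre-releases return null.
function parseVersion(version: string): [number, number, number] | null {
  const m = /^(\d+)\.(\d+)\.(\d+)$/.exec(version.trim());
  return m ? [Number(m[1]), Number(m[2]), Number(m[3])] : null;
}

function patchStatus(version: string, floors: string[]): PatchStatus {
  const v = parseVersion(version);
  if (!v) return "unknown";
  for (const floor of floors) {
    const f = parseVersion(floor);
    // Compare only against the floor for the same major.minor line.
    if (f && v[0] === f[0] && v[1] === f[1]) {
      return v[2] >= f[2] ? "patched" : "unpatched";
    }
  }
  // Release line not covered by the list above: check the advisory manually.
  return "unknown";
}
```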

Vinext: Vinext is a Vite plugin that reimplements the Next.js API surface. Vinext's latest release is not vulnerable to any of the disclosed CVEs. Vinext's architecture differs from stock Next.js in ways that sidestep the affected code paths. For example, it does not implement the PPR resume protocol, does not expose Pages Router data-route endpoints, and strips internal headers such as `x-nextjs-data` at request boundaries. As an extra layer of defense, we added a React `19.2.6` or later requirement when running `vinext init` (PR #1118, PR #1112) to prevent accidentally running a vulnerable version of React with Vinext.

OpenNext on Cloudflare: OpenNext is an adapter that lets you deploy Next.js apps to the Cloudflare Workers platform. OpenNext itself is not directly vulnerable to the React denial-of-service CVE, but users must update the Next.js version in their application. The OpenNext team has updated the adapter to further harden against these vectors and released a new version of the Cloudflare adapter. Test fixtures and examples have been updated to use patched versions (PR #1255).

| Advisory | Severity | Issue | WAF status |
| --- | --- | --- | --- |
| CVE-2026-23870 / GHSA-8h8q-6873-q5fj | High | Denial of service in Server Components | WAF rules in place: `2694f1610c0b471393b21aef102ec699`, `aaede80b4d414dc89c443cea61680354`. Cloudflare is investigating additional managed WAF coverage |
| GHSA-267c-6grr-h53f | High | Middleware bypass via segment-prefetch routes | Cloudflare is investigating if this can be safely and effectively mitigated by a managed WAF rule |
| GHSA-mg66-mrh9-m8jx | High | Denial of service via connection exhaustion in Cache Components | Cloudflare is investigating if this can be safely and effectively mitigated by a managed WAF rule |
| GHSA-492v-c6pp-mqqv | High | Middleware bypass via dynamic route parameter injection | Not possible to safely enable a managed WAF rule without potentially breaking application behavior |
| GHSA-c4j6-fc7j-m34r | High | SSRF via WebSocket upgrades | Not possible to safely enable a managed WAF rule without potentially breaking application behavior |
| GHSA-36qx-fr4f-26g5 | High | Middleware bypass in Pages Router i18n | Custom WAF rule possible; global managed rule could potentially break application behavior |
| GHSA-ffhc-5mcf-pf4q | Moderate | XSS via CSP nonces | Custom WAF rule possible; global managed rule could potentially break application behavior |
| GHSA-gx5p-jg67-6x7h | Moderate | XSS in `beforeInteractive` scripts | Not possible to safely enable a managed WAF rule without potentially breaking application behavior |
| GHSA-h64f-5h5j-jqjh | Moderate | Denial of service in Image Optimization API | Custom WAF rule possible; global managed rule could potentially break application behavior |
| GHSA-wfc6-r584-vfw7 | Moderate | Cache poisoning in RSC responses | Custom WAF rule possible; global managed rule could potentially break application behavior |
| GHSA-vfv6-92ff-j949 | Low | Cache poisoning via RSC cache-busting collisions | Not possible to safely enable a managed WAF rule without potentially breaking application behavior |
| GHSA-3g8h-86w9-wvmq | Low | Middleware redirect cache poisoning | Custom WAF rule possible; global managed rule could potentially break application behavior |

You can now interact with your Stream video library using new bindings for Workers! This allows customers to upload content to Stream, provision direct uploads, manage videos, and generate signed URLs from a Worker without making authenticated API calls. We're excited to bring Stream and Workers closer together to empower more programmatic pipelines, tighter integrations, and support generative AI and inference workloads.

Use the Stream binding when you want to:

- Upload videos from URLs or create basic direct upload links for end users

- Generate signed playback tokens without managing signing keys

- Manage video metadata, captions, downloads, and watermarks

- Build video pipelines entirely within Workers

To get started, add the Stream binding to your Wrangler configuration:

```jsonc
{
  "$schema": "./node_modules/wrangler/config-schema.json",
  "stream": {
    "binding": "STREAM"
  }
}
```

```toml
[stream]
binding = "STREAM"
```

Generate a video with AI and upload it directly to Stream, or send the URL of a file you already have:

```ts
const aiResponse = await env.AI.run(
  "google/veo-3.1",
  {
    prompt: "A dog walking next to a river",
    duration: "10s",
    aspect_ratio: "16:9",
    resolution: "1080p",
    generate_audio: true,
  },
  {
    gateway: { id: "experiments" },
  },
);

// Veo will return a URL of the generated asset.
const videoUrl = aiResponse.result.video;

// Alternative option: a video of the Austin Office mobile
// const videoUrl = "https://pub-d9fcbc1abcd244c1821f38b99017347f.r2.dev/aus-mobile.mp4";

// Upload to Stream by providing a URL
const streamVideo = await env.STREAM.upload(videoUrl);

// The streamVideo response will include the video ID, playback and manifest
// URLs, and other information, just like the REST API.
```

Generate a signed URL without using a signing key or an API call:

```ts
const video_id = "ce800be43a9772f4bb02f35b860fb516";
const token = await env.STREAM.video(video_id).generateToken();

// Use the "token" in an iframe embed code, manifest URL, or thumbnail:
const embedUrl = `https://customer-igynxd2rwhmuoxw8.cloudflarestream.com/${token}/iframe`;
```

Get and set video properties easily:

```ts
const video_id = "46c8b7f480d410840758c1cb14a72e47";
const result = await env.STREAM.video(video_id).details();

await env.STREAM.video(video_id).update({
  meta: { name: "sample video" },
});
```

For setup instructions and the full API reference, refer to Bind to Workers API.

Add a binding for Cloudflare Stream (env.STREAM). On the watch page, use the Stream binding to get info based on the ID, and leverage video.meta.name as the page title.

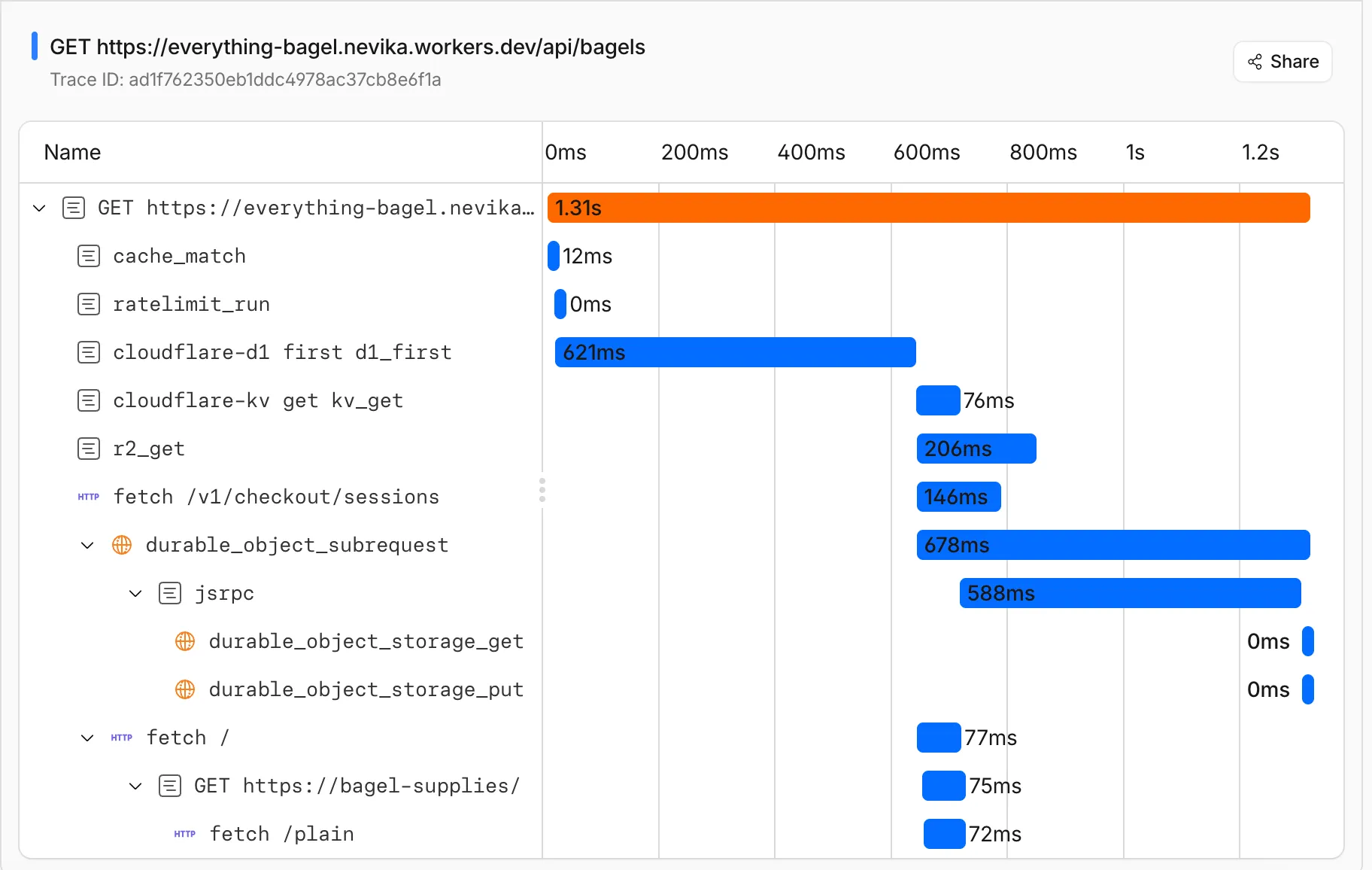
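The prompt above describes a watch page that titles itself from `video.meta.name`. A minimal TypeScript sketch of that page follows. The `StreamBinding` and `VideoDetails` interfaces declare only the pieces used here and are assumptions based on the calls shown earlier (`env.STREAM.video(id).details()` and a `meta.name` field); refer to the Stream binding API reference for the real types. Rendering is kept separate and synchronous so it can be tested without a binding.

```ts
// Assumed minimal shape of the details() result; the real object has more fields.
interface VideoDetails {
  meta?: { name?: string };
}

// Assumed minimal slice of the Stream binding used by this page.
interface StreamBinding {
  video(id: string): { details(): Promise<VideoDetails> };
}

// Escape text before interpolating it into HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Pure rendering step: fall back to the video ID when no name is set.
function renderWatchPage(videoId: string, details: VideoDetails): string {
  const title = escapeHtml(details.meta?.name ?? videoId);
  return `<!doctype html><title>${title}</title><h1>${title}</h1>`;
}

// Worker-side handler: look up the video through the binding, then render.
async function watchPage(stream: StreamBinding, videoId: string): Promise<Response> {
  const details = await stream.video(videoId).details();
  return new Response(renderWatchPage(videoId, details), {
    headers: { "content-type": "text/html; charset=utf-8" },
  });
}
```

Escaping matters here because `meta.name` is user-editable metadata, so it should never be inserted into HTML verbatim.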

You can now get a single unified trace across Worker-to-Worker subrequests, with trace context propagating automatically. Previously, automatic tracing produced disconnected traces when a Worker called another Worker through a service binding or Durable Object.

This means you can:

- Follow a request through your entire Worker architecture in one trace view

- See service binding and Durable Object calls as nested child spans instead of separate traces

- Debug cross-Worker request flows in the Cloudflare dashboard or in an external observability platform via OpenTelemetry

Tracing must be enabled in your Wrangler configuration for traces to be recorded. Check out Workers tracing to get started.

Up next, we are working on external trace context propagation using the W3C Trace Context standard, which will allow traces from your Workers to link with traces from services outside of Cloudflare.
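For reference, W3C Trace Context carries a trace in a `traceparent` header of the form `version-trace_id-parent_id-trace_flags`, for example `00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01`. The TypeScript sketch below parses and validates that header according to the specification; it only illustrates the format and implies nothing about how Workers will implement propagation. It accepts version `00` only.

```ts
interface TraceParent {
  traceId: string;
  parentId: string;
  sampled: boolean;
}

// Version 00 layout: 2 + 1 + 32 + 1 + 16 + 1 + 2 = 55 characters, lowercase hex.
const TRACEPARENT_V00 = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function parseTraceParent(header: string): TraceParent | null {
  const m = TRACEPARENT_V00.exec(header.trim());
  if (!m) return null;
  const [, traceId, parentId, flags] = m;
  // All-zero trace and parent IDs are invalid per the specification.
  if (/^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  // Bit 0 of trace-flags is the "sampled" flag.
  return { traceId, parentId, sampled: (parseInt(flags, 16) & 0x01) === 1 };
}
```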

Cloudflare Pipelines ingests streaming data via Workers or HTTP endpoints, transforms it with SQL, and writes it to R2 as Apache Iceberg tables. R2 Data Catalog manages those Iceberg tables, compaction, and compatibility with query engines like R2 SQL, Spark, and DuckDB.

You can now create and manage both products using Terraform, supported in the Cloudflare Terraform provider v5.19.0.

This adds four new resources that let you define your entire data pipeline as infrastructure-as-code: a data catalog, a stream for ingestion, a sink that writes to R2 Data Catalog or R2, and a pipeline that connects them with SQL.

The new Terraform resources are:

- `cloudflare_r2_data_catalog`: enable the data catalog on an R2 bucket
- `cloudflare_pipeline_stream`: create a stream that receives events via HTTP or Worker bindings
- `cloudflare_pipeline_sink`: create a sink that writes to R2 Data Catalog or R2
- `cloudflare_pipeline`: create a pipeline with SQL connecting a stream to a sink

Here is a minimal example that creates a stream, an R2 Data Catalog sink, and a pipeline:

```hcl
resource "cloudflare_pipeline_stream" "my_stream" {
  account_id = var.cloudflare_account_id
  name       = "my_stream"
  format     = { type = "json" }
  schema = {
    fields = [{
      name     = "value"
      type     = "json"
      required = true
    }]
  }
  http           = { enabled = true, authentication = false, cors = {} }
  worker_binding = { enabled = false }
}

resource "cloudflare_pipeline_sink" "my_sink" {
  account_id = var.cloudflare_account_id
  name       = "my_sink"
  type       = "r2_data_catalog"
  format     = { type = "parquet" }
  schema     = { fields = [] }
  config = {
    account_id = var.cloudflare_account_id
    bucket     = "my-pipeline-bucket"
    table_name = "my_table"
    token      = var.catalog_token
  }
}

resource "cloudflare_pipeline" "my_pipeline" {
  account_id = var.cloudflare_account_id
  name       = "my_pipeline"
  sql        = "INSERT INTO ${cloudflare_pipeline_sink.my_sink.name} SELECT * FROM ${cloudflare_pipeline_stream.my_stream.name}"
}
```

For a full end-to-end example that includes R2 bucket creation, data catalog setup, and scoped API token provisioning, refer to the Pipelines Terraform documentation.

You can now use `@cloudflare/dynamic-workflows` to run a Workflow inside a Dynamic Worker, ensuring durable execution for code that is loaded at runtime.

The Worker Loader loads Dynamic Workers on demand, which previously made durability challenging. Even within a Dynamic Worker, a Workflow might sleep for hours or days between steps, and by the time it resumes, the original Dynamic Worker code would no longer be in memory.

The library solves this by tagging each Workflow instance with metadata that identifies which Dynamic Worker to load — for example, a tenant ID — then reloading the matching Dynamic Worker through the Worker Loader whenever a Workflow awakens.

Because Dynamic Workers are created on-demand, you do not have to register each Workflow up front or manage them individually. Load the Workflow code in the Dynamic Worker when it is needed, and the Workflows engine handles persistence and retries behind the scenes. Your Workflow code itself is unaffected by the routing and behaves as normal.

This unlocks patterns where the Workflow code itself is dynamic. For example, this is useful with:

- SaaS platforms where each tenant defines their own automation, such as onboarding sequences, approval chains, or billing retry logic.

- AI agent frameworks where agents generate and execute multi-step plans at runtime, surviving restarts and waiting for human approval between tool calls.

- Multi-tenant job systems where each customer submits their own processing logic and every step persists progress and retries on failure.

```ts
import {
  createDynamicWorkflowEntrypoint,
  DynamicWorkflowBinding,
  wrapWorkflowBinding,
  type WorkflowRunner,
} from "@cloudflare/dynamic-workflows";

export { DynamicWorkflowBinding };

interface Env {
  WORKFLOWS: Workflow;
  LOADER: WorkerLoader;
}

function loadTenant(env: Env, tenantId: string) {
  return env.LOADER.get(tenantId, async () => ({
    compatibilityDate: "2026-01-01",
    mainModule: "index.js",
    // fetchTenantCode() is an application-specific helper (not shown) that
    // returns the tenant's Workflow module source.
    modules: { "index.js": await fetchTenantCode(tenantId) },
    // The Dynamic Worker uses this exactly like a real Workflow binding;
    // every create() is tagged with { tenantId } automatically.
    env: { WORKFLOWS: wrapWorkflowBinding({ tenantId }) },
  }));
}

// The entrypoint name must match `class_name` in the workflows binding of your Wrangler config file.
export const DynamicWorkflow = createDynamicWorkflowEntrypoint<Env>(
  async ({ env, metadata }) => {
    const stub = loadTenant(env, metadata.tenantId as string);
    return stub.getEntrypoint("TenantWorkflow") as unknown as WorkflowRunner;
  },
);

export default {
  fetch(request: Request, env: Env) {
    const tenantId = request.headers.get("x-tenant-id")!;
    return loadTenant(env, tenantId).getEntrypoint().fetch(request);
  },
};
```

For a full walkthrough, refer to the Dynamic Workflows guide.
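The tag-and-reload idea behind the library can be modeled in a few lines. The TypeScript sketch below is a hypothetical in-memory model, not the `@cloudflare/dynamic-workflows` implementation: `TaggingRegistry`, `wrap()`, and `resume()` are illustrative names. Each instance created through a wrapped binding is stamped with metadata (here a `tenantId`), and when the instance wakes up, that metadata is all that is needed to reload the right code.

```ts
type Metadata = Record<string, string>;
type Step = (payload: unknown) => string;

interface InstanceRecord {
  id: string;
  metadata: Metadata;
  payload: unknown;
}

class TaggingRegistry {
  private instances = new Map<string, InstanceRecord>();
  private nextId = 1;

  // Mirrors the idea behind wrapWorkflowBinding({ tenantId }): every create()
  // made through the wrapped binding is stamped with the same metadata.
  wrap(metadata: Metadata) {
    return {
      create: (payload: unknown): string => {
        const id = `instance-${this.nextId++}`;
        this.instances.set(id, { id, metadata: { ...metadata }, payload });
        return id;
      },
    };
  }

  // When an instance wakes up, the code that created it may be gone from
  // memory, so the stored metadata is used to load it again before running.
  resume(id: string, loadCode: (metadata: Metadata) => Step): string {
    const record = this.instances.get(id);
    if (!record) throw new Error(`unknown instance ${id}`);
    const step = loadCode(record.metadata);
    return step(record.payload);
  }
}
```

Storing only metadata (not code) with each instance is what lets the engine stay generic: the loader decides at wake-up time which Dynamic Worker to materialize.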

Full Changelog: v6.10.0...v7.0.0

This is a major version release that includes breaking changes to three packages: `ai_search`, `email_security`, and `workers`. These changes reflect upstream API specification updates that improve type correctness and consistency.

Please read through the list of changes below before moving to this version. This will help you understand any downstream or upstream issues it may cause in your environments.

See the v7.0.0 Migration Guide for before/after code examples and actions needed for each change.

The `SearchForAgents` nested type has been removed from all instance metadata structs. This field is no longer part of the API specification.

Removed types:

- `InstanceNewResponseMetadataSearchForAgents`
- `InstanceUpdateResponseMetadataSearchForAgents`
- `InstanceListResponseMetadataSearchForAgents`
- `InstanceDeleteResponseMetadataSearchForAgents`
- `InstanceReadResponseMetadataSearchForAgents`
- `InstanceNewParamsMetadataSearchForAgents`
- `InstanceUpdateParamsMetadataSearchForAgents`
- `NamespaceInstanceNewResponseMetadataSearchForAgents`
- `NamespaceInstanceUpdateResponseMetadataSearchForAgents`
- `NamespaceInstanceListResponseMetadataSearchForAgents`
- `NamespaceInstanceDeleteResponseMetadataSearchForAgents`
- `NamespaceInstanceReadResponseMetadataSearchForAgents`
- `NamespaceInstanceNewParamsMetadataSearchForAgents`
- `NamespaceInstanceUpdateParamsMetadataSearchForAgents`

Multiple Email Security settings sub-resources have changed their path parameter types from `int64` to `string`:

- `AllowPolicies` (`policyID int64` -> `policyID string`)
- `BlockSenders` (`patternID int64` -> `patternID string`)
- `Domains` (`domainID int64` -> `domainID string`)
- `ImpersonationRegistry` (`displayNameID int64` -> `impersonationRegistryID string`)
- `TrustedDomains` (`trustedDomainID int64` -> `trustedDomainID string`)

The `Investigate.Get`, `Investigate.Move.New`, and `Investigate.Reclassify.New` methods now use `investigateID` instead of `postfixID` as the path parameter name.

The `SettingDomainService.BulkDelete` method and its associated types have been removed:

- `SettingDomainBulkDeleteResponse`
- `SettingDomainBulkDeleteParams`

`SettingTrustedDomainService.New` now returns `*SettingTrustedDomainNewResponse` instead of `*SettingTrustedDomainNewResponseUnion`.

`InvestigateMoveService.New` now returns `*pagination.SinglePage[InvestigateMoveNewResponse]` instead of `*[]InvestigateMoveNewResponse`.

The observability telemetry filter parameter types have been restructured to support nested filter groups. New discriminated union types replace the previous flat filter arrays:

- `ObservabilityTelemetryKeysParams.Filters` now accepts `FiltersObjectFilterUnion` (was `[]interface{}`)
- `ObservabilityTelemetryQueryParams.Parameters.Filters` now accepts `FiltersObjectFilterUnion`
- `ObservabilityTelemetryValuesParams.Filters` now accepts `FiltersObjectFilterUnion`

New types include `FiltersObjectFiltersObject` (for group filters with `FilterCombination`) and `FiltersWorkersObservabilityFilterLeaf` (for leaf filters with typed `Operation`, `Type`, and `Value` fields).

NEW SERVICE: Query organization audit logs with cursor-based pagination.

`List()` - Retrieve audit logs

`client.BrowserRendering.Devtools.Browser.Targets.Close()` - Close a specific browser target (tab, page) by ID

`client.Queues.GetMetrics()` - Retrieve queue metrics for a specific queue

- Added `WaitForCompletion` parameter to `NamespaceInstanceItemNewOrUpdateParams` and `NamespaceInstanceItemSyncParams` for synchronous indexing confirmation

- Magic Transit: `ConnectorService.List` parameter name corrected from `query` to `params` (non-functional, affects generated documentation only)

None in this release.

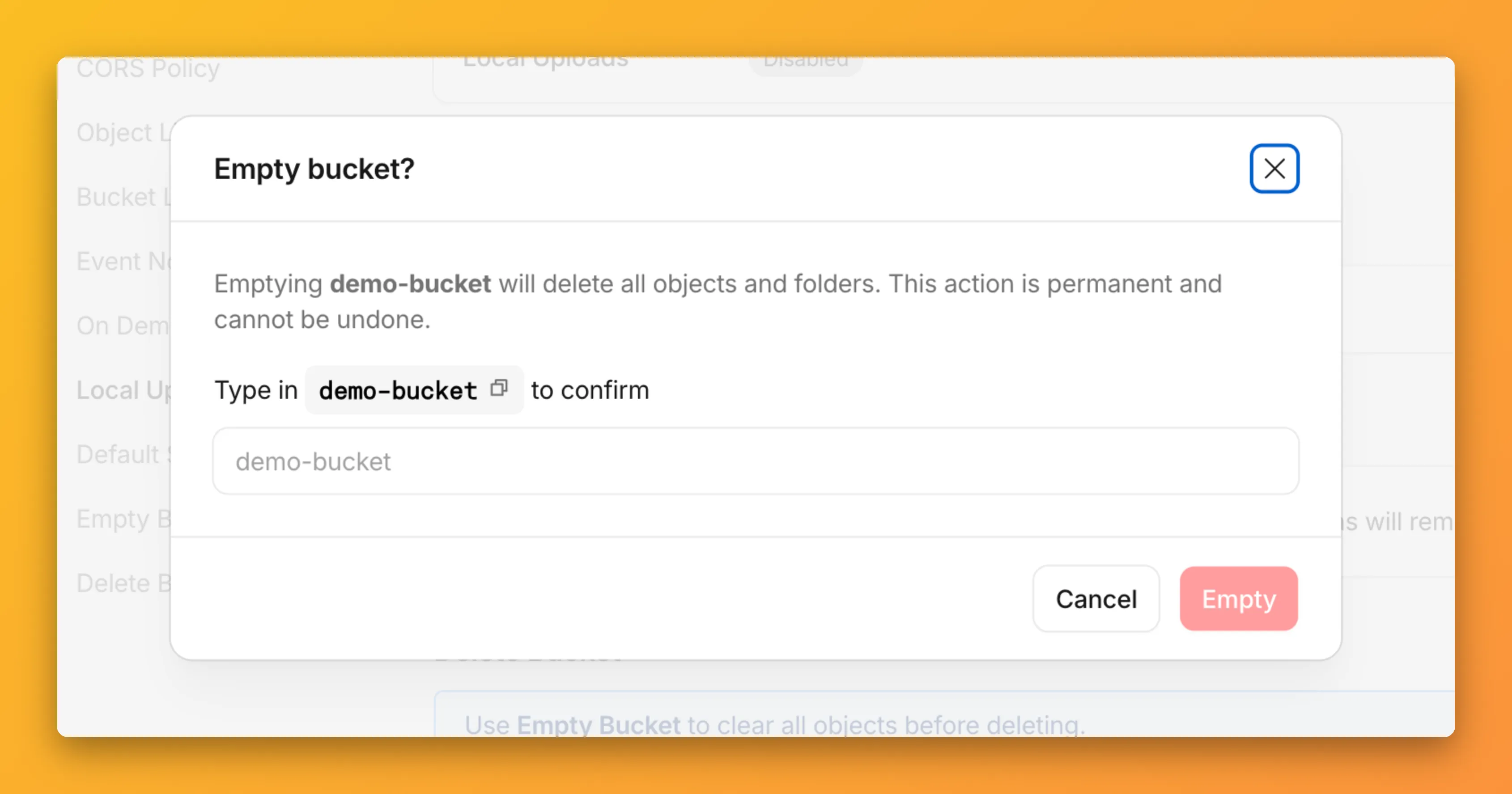

You can now empty an entire R2 bucket or delete folders directly from the dashboard. Previously, emptying a bucket required scripting or configuring lifecycle rules; now the dashboard handles it in a single action.

Go to your bucket's Settings tab and select Empty under the Empty Bucket section. This deletes all objects in the bucket while preserving the bucket and its configuration. For large buckets, the operation runs in the background and the dashboard displays progress.

Emptying a bucket is also a prerequisite for deleting it. The dashboard now guides you through both steps in one place.

R2 uses a flat object structure. The dashboard groups objects that share a common prefix into folders when the View prefixes as directories checkbox is selected. Deleting a folder removes every object under that prefix.

From the Objects tab, you can select one or more folders and delete them alongside individual objects.

For step-by-step instructions, refer to Delete buckets and Delete objects.
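Because R2 has no real directories, deleting a "folder" means deleting every key that starts with its prefix. As a minimal sketch of what that involves outside the dashboard, the TypeScript function below pages through matching keys with `list()` and removes each page with a single multi-key `delete()`. It assumes a Worker R2 binding. `PrefixBucket` is a hypothetical interface covering only the subset of `R2Bucket` used here, and `deletePrefix` is an illustrative helper, not a Cloudflare API.

```typescript
// Minimal subset of the Workers R2Bucket binding used by this sketch.
interface PrefixBucket {
  list(options: { prefix: string; cursor?: string; limit?: number }): Promise<{
    objects: { key: string }[];
    truncated: boolean;
    cursor?: string;
  }>;
  delete(keys: string[]): Promise<void>;
}

// Delete every object whose key starts with `prefix`; returns the count.
// R2 caps both list pages and multi-key deletes at 1,000 keys.
async function deletePrefix(
  bucket: PrefixBucket,
  prefix: string,
  pageSize = 1000,
): Promise<number> {
  let cursor: string | undefined;
  let deleted = 0;
  do {
    const page = await bucket.list({ prefix, cursor, limit: pageSize });
    const keys = page.objects.map((o) => o.key);
    if (keys.length > 0) {
      await bucket.delete(keys); // one request per page of keys
      deleted += keys.length;
    }
    // Keep paging only while R2 reports more results.
    cursor = page.truncated ? page.cursor : undefined;
  } while (cursor !== undefined);
  return deleted;
}
```

Pass a prefix that ends in `/` (for example, `logs/2024/`) so that it does not also match a sibling such as `logs/2024-archive/`. An empty prefix matches every key, which is equivalent to emptying the bucket.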

Full Changelog: v4.3.1...v5.0.0 ↗

This is a major release of the Cloudflare Python SDK. It drops support for Python 3.8, adds 11 new API services, introduces optional aiohttp backend support for improved async concurrency, and includes hundreds of type and method updates across the entire API surface.

Please review the breaking changes below before upgrading. A migration guide is available at v5.0.0 Migration Guide ↗.

- Python 3.8 is no longer supported. The minimum required version is now Python 3.9.

- `typing-extensions` minimum version bumped from `>=4.10` to `>=4.14`.

The following resources have breaking changes. See the v5.0.0 Migration Guide ↗ for detailed migration instructions.

abusereports, acm.totaltls, apigateway.configurations, cloudforceone.threatevents, d1.database, intel.indicatorfeeds, logpush.edge, origintlsclientauth.hostnames, queues.consumers, radar.bgp, rulesets.rules, schemavalidation.schemas, snippets, zerotrust.dlp, zerotrust.networks

The async client now supports an optional `aiohttp` HTTP backend for improved concurrency performance. Install with `pip install cloudflare[aiohttp]` and use `DefaultAioHttpClient()` as the `http_client` parameter.

Python 3.13 and 3.14 are now tested and supported.

The following top-level resources are new in this release:

| Resource | Client Path | Description |
| --- | --- | --- |
| AI Search | `aisearch` | AI-powered search capabilities |
| Connectivity | `connectivity` | Connectivity testing and diagnostics |
| Email Sending | `email_sending` | Email send and send_raw endpoints |
| Fraud | `fraud` | Fraud detection and prevention |
| Google Tag Gateway | `google_tag_gateway` | Google Tag Gateway management |
| Organizations | `organizations` | Organization audit logs and management |
| R2 Data Catalog | `r2_data_catalog` | R2 Data Catalog operations |
| Realtime Kit | `realtime_kit` | Realtime communication (Calls/TURN) |
| Resource Tagging | `resource_tagging` | Resource tagging and labeling |
| Token Validation | `token_validation` | Token validation configuration and rules |
| Vulnerability Scanner | `vulnerability_scanner` | Vulnerability scanning, credential sets, and target environments |

- api_gateway: Labels endpoints

- billing: Billable usage PayGo endpoint

- brand_protection: v2 endpoints

- browser_rendering: DevTools methods

- cache: Origin cloud regions resource

- custom_origin_trust_store: Custom origin trust store

- dns: `dns_records/usage` endpoints

- email_security: Phishguard reports endpoint

- iam: User groups and user group members resources

- radar: Botnet Threat Feed and Post-Quantum endpoints

- workers: Observability Destinations resources

- zero_trust: Access Users, DEX rules, Device IP Profile, Device Subnet, WARP Connector connections and failover, WARP Subnet, Gateway PAC files

- zones: Zone environments endpoints

- Fixed `polymorphic_serialization` parameter in `model_dump` overrides

- Added `BaseModel` base to response `SchemaFieldStruct`/`SchemaFieldList` stubs in Pipelines

- Added missing `model_rebuild`/`update_forward_refs` for `SharedEntryCustomEntry` classes in DLP

- Made `RunQueryParametersNeedleValue` a `BaseModel` with `arbitrary_types_allowed` in Workers

- Removed duplicate `notification_url` field in webhook response types for Stream

- Resolved pre-existing codegen type errors

- Fixed `type: ignore[call-arg]` placement for mypy compatibility in Radar

Resources with `@deprecated` annotations on some methods include: accounts, addressing, ai-gateway, aisearch, api-gateway, billing, cloudforce-one, dns, email-routing, email-security, filters, firewall, images, intel, kv, logpush, origin-tls-client-auth, pages, pipelines, radar, rate-limits, registrar, rulesets, ssl, user, workers, workers-for-platforms, zero-trust, zones

Full Changelog: v6.0.0-beta.2...v6.0.0 ↗

This is a major version release of the Cloudflare TypeScript SDK. It includes 11 entirely new top-level API resources, new sub-resources and methods across 50+ existing resources, SDK infrastructure improvements, and breaking changes to the generated API surface from the v5.x line.

Please read through the list of changes below before moving to this version; it will help you anticipate any downstream or upstream issues the upgrade may cause in your environments.

- Retry-After handling changed: The SDK now respects any server-specified `Retry-After` value for rate-limited requests. Previously, values over 60 seconds were ignored and a default backoff was used instead.
- Empty response handling: Responses with `content-length: 0` now return `undefined` instead of attempting to parse the body.
- Environment variable reading: Empty string env vars (for example, `CLOUDFLARE_API_TOKEN=""`) are now treated as unset.
- Path query parameter merging: URL search params embedded in endpoint paths are now extracted and merged into the query object.
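The `Retry-After` and path query changes both come down to standard HTTP and URL handling. The TypeScript sketch below illustrates them; it is not the SDK's internal code, and both function names are hypothetical. `retryAfterMs` accepts either form allowed for the `Retry-After` header (a number of seconds or an HTTP-date) and, like the new SDK behavior, applies no 60-second cap. `splitPathQuery` pulls search params out of a path and merges them into a query object; giving explicitly passed params precedence over embedded ones is an assumption of this sketch.

```typescript
// Convert a Retry-After header value to a delay in milliseconds.
// Returns undefined when the header is absent or unparseable.
function retryAfterMs(header: string | null, now: number = Date.now()): number | undefined {
  if (header === null) return undefined;
  const value = header.trim();
  // delay-seconds form, e.g. "120"
  if (/^\d+$/.test(value)) return Number(value) * 1000;
  // HTTP-date form, e.g. "Wed, 21 Oct 2015 07:28:00 GMT"
  const at = Date.parse(value);
  if (Number.isNaN(at)) return undefined;
  return Math.max(0, at - now); // a date in the past means "retry now"
}

// Hypothetical helper: move "?a=1&b=2" from a path into a query object.
function splitPathQuery(
  path: string,
  query: Record<string, string> = {},
): { path: string; query: Record<string, string> } {
  // The base URL only satisfies the URL parser; its host is discarded.
  const url = new URL(path, "https://example.invalid");
  const embedded: Record<string, string> = {};
  url.searchParams.forEach((value, key) => {
    embedded[key] = value;
  });
  // Assumption: explicitly passed params win over ones embedded in the path.
  return { path: url.pathname, query: { ...embedded, ...query } };
}
```

For example, `splitPathQuery("/accounts/abc/logs?per_page=5", { page: "2" })` yields the path `/accounts/abc/logs` with both `per_page` and `page` in the query object.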

17 HTTP endpoints were removed from the SDK, affecting `abuse-reports`, `cloudforce-one`, `dlp/profiles/predefined`, `email-security/investigate`, `email-security/settings`, and `intel/ip-list`.

- `client.ai.toMarkdown.transform(file, { ...params })` -> `client.ai.toMarkdown.transform({ ...params })`: `file` moved from a positional arg into the params body
- `client.radar.ai.toMarkdown.create(body, { ...params })` -> `client.radar.ai.toMarkdown.create({ ...params })`: `body` moved from a positional arg into params
- `client.abuseReports.create(reportType, { ...params })` -> `client.abuseReports.create(reportParam, { ...params })`: positional arg renamed
- `client.iam.userGroups.members.create(userGroupId, [ ...body ])` -> `client.iam.userGroups.members.create(userGroupId, [ ...members ])`: body array param renamed

- `client.originTLSClientAuth.hostnames.certificates` -> `client.originTLSClientAuth.zoneCertificates`
- `client.radar.netflows` -> `client.radar.netFlows` (casing change)

- 133 methods now return `null` instead of a typed response object. This primarily affects delete operations across `accounts`, `cache`, `d1`, `filters`, `firewall`, `hyperdrive`, `iam`, `kv`, `logpush`, `logs`, `r2`, `stream`, `workers`, `zero-trust`, `zones`, and others.
- 17 methods changed pagination type (for example, `KeysCursorPaginationAfter` -> `KeysCursorLimitPagination`).
- 29 methods changed to a different named type (for example, `CloudflaredCreateResponse` -> `CloudflareTunnel`).

24 shared types removed from the root namespace (`ASN`, `AuditLog`, `Member`, `Permission`, `Role`, `Subscription`, `Token`, etc.). 19 response types consolidated or renamed.

19 resources were restructured from single files to directories. Public API client paths are unchanged, but deep imports may break.

11 entirely new resources added to the client:

| Resource | Client Path | Methods | Description |
| --- | --- | --- | --- |
| AI Search | `client.aiSearch` | 46 | Instances, namespaces, tokens, and items |
| Connectivity | `client.connectivity` | 5 | Directory service APIs |
| Email Sending | `client.emailSending` | 7 | Send and send_raw endpoints |
| Fraud | `client.fraud` | 2 | Fraud detection API |
| Google Tag Gateway | `client.googleTagGateway` | 2 | Google Tag Gateway management |
| Organizations | `client.organizations` | 8 | Organization profiles and audit logs |
| R2 Data Catalog | `client.r2DataCatalog` | 11 | R2 Data Catalog routes |
| Realtime Kit | `client.realtimeKit` | 54 | Realtime Kit APIs |
| Resource Tagging | `client.resourceTagging` | 9 | Resource tagging routes |
| Token Validation | `client.tokenValidation` | 13 | Token validation rules |
| Vulnerability Scanner | `client.vulnerabilityScanner` | 21 | Vulnerability scanning |

- browser-rendering: `crawl`, `devtools` - Crawl endpoints and DevTools methods

- cache: `origin-cloud-regions` - Origin cloud regions resource

- dns: `usage` - DNS records usage endpoints

- d1: `time-travel` - Time travel `get_bookmark` and `restore`

- email-security: `phishguard` - Phishguard reports endpoint

- pipelines: `sinks`, `streams` - Pipelines restructure

- radar: `agent-readiness`, `geolocations`, `post-quantum` - New analytics endpoints

- workers: `observability` - Observability destinations

- zones: `environments` - Zone environments endpoints

- api-gateway: `labels` - Labels endpoints

- brand-protection: `v2` - V2 endpoints

- alerting: `silences` - Alert silencing API

- billing: `usage` - Billable usage PayGo endpoint

- iam: `sso` - SSO Connectors resource

- queues: `getMetrics` method - Queues metrics endpoint

- registrar: `registration-status`, `update-status` - Registrar API convergence

- zero-trust: DLP settings, DEX rules, Access Users, WARP Connector, WARP Subnets, Gateway PAC files, Gateway tenants

- Resolved type errors from codegen overwriting manual fixes

- Fixed `post()` usage for to-markdown endpoints to resolve async type error

- Added least-privilege permissions to all workflow jobs

- Reverted erroneous removal of rulesets resource methods and types

- Resolved prettier formatting errors in codegen output

The following resources now include `@deprecated` annotations on some methods: accounts, addressing, ai-gateway, aisearch, api-gateway, billing, cloudforce-one, custom-nameservers, dns, email-routing, email-security, filters, firewall, images, intel, keyless-certificates, kv, logpush, origin-tls-client-auth, page-shield, pages, pipelines, radar, rate-limits, registrar, rulesets, ssl, user, workers, workers-for-platforms, zero-trust, zones

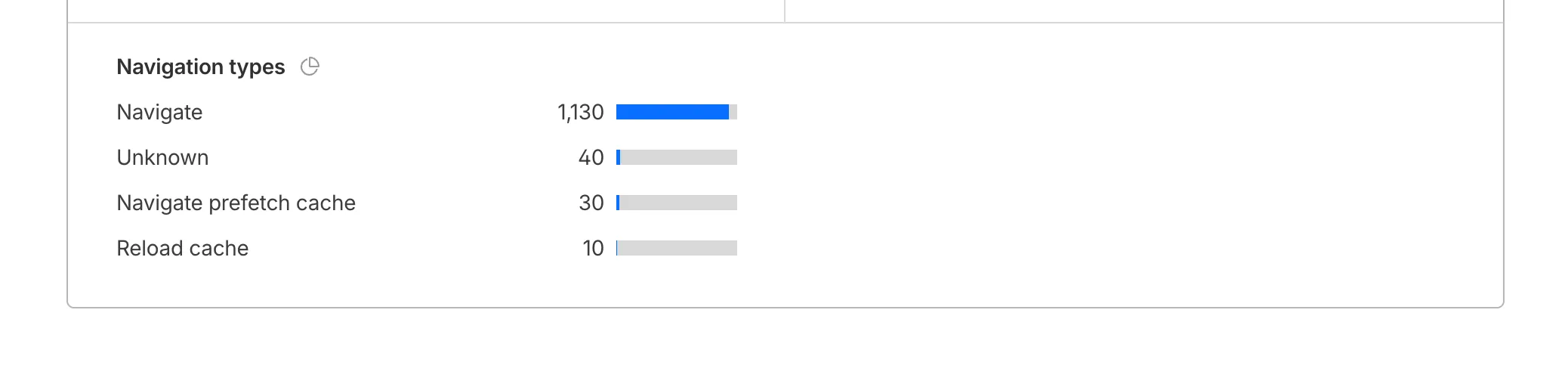

Cloudflare Web Analytics now supports Navigation Type reporting and filtering.

This update lets developers and performance analysts see how users navigate between pages (through a link click or form submission, a page reload, or the browser's back/forward buttons) and whether a browser cache hit occurred for each of these navigations.

Understanding navigation types is critical for optimizing user experience. For example, if a large share of your traffic consists of "Back-forward" navigations rather than "Back-forward Cache" navigations, those visitors are not benefiting from the Back/Forward Cache (bfcache) and are likely seeing longer load times due to potentially unnecessary network requests.

The same applies to regular "Navigate" entries, where "Navigate Cache", "Navigate Prefetch Cache", and "Prerender" would provide instant document retrieval, and to "Reload" entries, where "Reload Cache" would be preferable.

A high volume of "Reload" entries can also indicate a potential stability problem with your website.

By identifying these patterns, you can tune your browser caching strategies to ensure HTML documents are served instantaneously from local caches rather than requiring a roundtrip to the network.

For more information, refer to Navigation Types.
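To relate these categories to what a browser exposes, here is a rough TypeScript sketch. The inputs correspond to real browser signals: `PerformanceNavigationTiming.type` (one of `navigate`, `reload`, `back_forward`, or `prerender`) and the `persisted` flag of a `pageshow` event, which is `true` on a bfcache restore. The `navigationLabel` mapping itself, including treating `transferSize === 0` with a non-empty decoded body as an HTTP cache hit, is an assumed heuristic and not how Cloudflare Web Analytics classifies navigations; it also does not attempt to detect "Navigate Prefetch Cache".

```typescript
// Browser signals, passed in as plain values so the mapping is testable.
type NavigationTimingType = "navigate" | "reload" | "back_forward" | "prerender";

interface NavigationSignals {
  type: NavigationTimingType; // PerformanceNavigationTiming.type
  restoredFromBfcache: boolean; // PageTransitionEvent.persisted on "pageshow"
  transferSize: number; // PerformanceNavigationTiming.transferSize
  decodedBodySize: number; // PerformanceNavigationTiming.decodedBodySize
}

// Hypothetical mapping onto labels similar to those in the dashboard.
function navigationLabel(s: NavigationSignals): string {
  if (s.restoredFromBfcache) return "Back-forward Cache";
  if (s.type === "prerender") return "Prerender";
  if (s.type === "back_forward") return "Back-forward"; // bfcache was missed
  // Heuristic: nothing transferred but a body was decoded => HTTP cache hit.
  const fromHttpCache = s.transferSize === 0 && s.decodedBodySize > 0;
  const base = s.type === "navigate" ? "Navigate" : "Reload";
  return fromHttpCache ? `${base} Cache` : base;
}
```

In a page, the timing fields would come from `performance.getEntriesByType("navigation")[0]` and the bfcache flag from a `pageshow` listener.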

- Monitor Cache Effectiveness: See how often your site is served from the HTTP cache or bfcache.

- Identify Performance Bottlenecks: Filter by navigation type to gauge how much performance you could gain by improving your browser cache hit ratio.

You can now find the Navigation Type dimension in the Web Analytics dashboard. You can filter to include/exclude one or more specific types using "equals", "does not equal", "in", or "not in" matchers.

To check the list of popular navigation types, select Page views on the Web Analytics sidebar and scroll to the bottom of the page.

You can now connect Hyperdrive to a private database through a Workers VPC service. This is the recommended way to connect Hyperdrive to a private database that is not exposed to the public Internet.

When creating a Hyperdrive configuration in the Cloudflare dashboard, choose Connect to private database and then Workers VPC. From there, you can select an existing VPC service or create a new one inline by picking a Cloudflare Tunnel and entering your origin host and TCP port.

You can also create a Hyperdrive configuration backed by a Workers VPC service from the command line:

```sh
npx wrangler hyperdrive create my-vpc-database \
  --service-id <YOUR_VPC_SERVICE_ID> \
  --database <DATABASE_NAME> \
  --user <DATABASE_USER> \
  --password <DATABASE_PASSWORD> \
  --scheme postgresql
```

Workers VPC services are reusable across Hyperdrive configurations and can also be bound directly to Workers, so you can share the same private connection across multiple products.

To get started, refer to Connect Hyperdrive to a private database using Workers VPC.

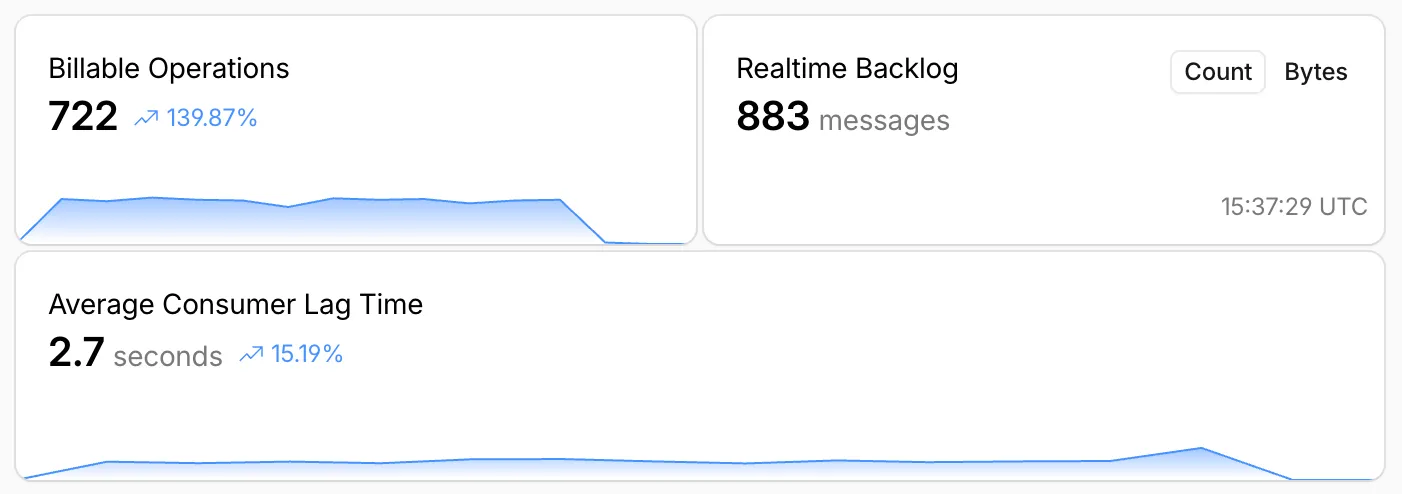

Queues, Cloudflare's managed message queue, now exposes realtime backlog metrics via the dashboard, REST API, and JavaScript API. Three new fields are available:

- `backlog_count`: the number of unacknowledged messages in the queue
- `backlog_bytes`: the total size of those messages in bytes
- `oldest_message_timestamp_ms`: the timestamp of the oldest unacknowledged message

The following endpoints also now include a `metadata.metrics` object on the `result` field after successful message consumption:

- `/accounts/{account_id}/queues/{queue_id}/messages/pull`
- `/accounts/{account_id}/queues/{queue_id}/messages`
- `/accounts/{account_id}/queues/{queue_id}/messages/batch`

Call `env.QUEUE.metrics()` to get realtime backlog metrics:

```typescript
const {
  backlogCount, // number
  backlogBytes, // number
  oldestMessageTimestamp, // Date | undefined
} = await env.QUEUE.metrics();
```

`env.QUEUE.send()` and `env.QUEUE.sendBatch()` also now return a metrics object on the response. You can also query these fields via the GraphQL Analytics API or view realtime backlog on the dashboard ↗.

For more information, refer to Queues metrics.
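One way to act on these metrics is to flag a queue whose backlog has grown too large or too old. The sketch below assumes the object returned by `env.QUEUE.metrics()` has the fields listed above (`backlogCount`, `backlogBytes`, and `oldestMessageTimestamp` as `Date | undefined`). The `BacklogThresholds` type, the `backlogAgeMs` and `backlogExceeds` helpers, and any threshold values are hypothetical.

```typescript
// Shape of the metrics object, as described for env.QUEUE.metrics().
interface QueueBacklogMetrics {
  backlogCount: number;
  backlogBytes: number;
  oldestMessageTimestamp?: Date;
}

// Hypothetical alerting thresholds.
interface BacklogThresholds {
  maxCount: number; // most unacknowledged messages tolerated
  maxAgeMs: number; // oldest message age tolerated, in milliseconds
}

// Age of the oldest unacknowledged message; 0 when the queue is empty.
function backlogAgeMs(m: QueueBacklogMetrics, now: Date = new Date()): number {
  if (m.oldestMessageTimestamp === undefined) return 0;
  return Math.max(0, now.getTime() - m.oldestMessageTimestamp.getTime());
}

// True when either the backlog size or its age crosses a threshold.
function backlogExceeds(
  m: QueueBacklogMetrics,
  t: BacklogThresholds,
  now: Date = new Date(),
): boolean {
  return m.backlogCount > t.maxCount || backlogAgeMs(m, now) > t.maxAgeMs;
}
```

In a Worker, you might call `backlogExceeds(await env.QUEUE.metrics(), { maxCount: 10_000, maxAgeMs: 5 * 60_000 })` from a scheduled handler and alert when it returns `true`. Checking age as well as count catches a stalled consumer even when the backlog is small.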

Terraform Provider v5.19.0 introduces 14 new resources spanning AI Gateway, Pipelines, R2 Data Catalog, User Groups, Vulnerability Scanner, Workers Observability, and Zero Trust capabilities. This release significantly improves the v4 to v5 migration experience with automatic state upgraders for 26 resources, working seamlessly with the new tf-migrate CLI tool ↗ to automate resource renames, attribute updates, and `moved` block generation. Together, these enhancements reduce manual migration effort and minimize risk when upgrading from v4 to v5.

Note: `cmd/migrate` is deprecated in favor of `tf-migrate` and will be removed in a future release (#7062 ↗).

- cloudflare_ai_gateway: Manage AI Gateway instances

- cloudflare_certificate_authorities_hostname_associations: Manage mTLS certificate hostname associations

- cloudflare_custom_page_asset: Manage custom page assets

- cloudflare_pipeline: Manage Cloudflare Pipelines

- cloudflare_r2_data_catalog: Manage R2 Data Catalog

- cloudflare_user_group: Manage user groups

- cloudflare_user_group_members: Manage user group memberships

- cloudflare_vulnerability_scanner_credential: Manage vulnerability scanner credentials

- cloudflare_vulnerability_scanner_credential_set: Manage vulnerability scanner credential sets

- cloudflare_vulnerability_scanner_target_environment: Manage vulnerability scanner target environments

- cloudflare_workers_observability_destination: Manage Workers Observability destinations

- cloudflare_zero_trust_device_ip_profile: Manage Zero Trust device IP profiles

- cloudflare_zero_trust_device_subnet: Manage Zero Trust device subnets

- cloudflare_zero_trust_dlp_settings: Manage Zero Trust DLP settings

State upgraders added for seamless migration from v4 to v5 for the following resources:

- account

- account_member

- account_token

- authenticated_origin_pulls

- authenticated_origin_pulls_hostname_certificate

- byo_ip_prefix

- custom_hostname

- custom_ssl

- leaked_credential_check

- leaked_credential_check_rule

- logpush_ownership_challenge

- mtls_certificate

- observatory_scheduled_test

- pages_domain

- regional_tiered_cache

- turnstile_widget

- workers_custom_domain

- zero_trust_device_custom_profile

- zero_trust_device_default_profile

- zero_trust_device_posture_integration

- zero_trust_gateway_certificate

- zero_trust_gateway_settings

- zero_trust_organization

- zero_trust_tunnel_cloudflared_virtual_network

- zone_setting

- ruleset: Add `content_converter` and `redirects_for_ai_training` support to configuration rules

- zero_trust_gateway_logging: Make importable

- account_member: Add UseStateForUnknown to status field to prevent drift

- authenticated_origin_pulls_settings: Fix no prior schema and no-op upgrade

- certificate_pack: Initialize empty lists instead of null in state upgrader to prevent drift

- migrations: Handle ambiguous schema_version state for v4/v5 coexistence

- zero_trust_access_policy: Fix nil pointer panic in state upgrader; set PriorSchema nil for v4 state upgrade

- ai_search_instance: Restore original defaults for cache and cache_threshold; conflict resolution

- apijson: Return empty object from MarshalForPatch when no fields are serializable

- dlp_predefined_profile: Eliminate perpetual entries and enabled_entries drift

- dns_record: Avoid unnecessary drift for ipv4_only and ipv6_only attributes; remove private_routing default value

- drift: Preserve prior state values for optional fields not returned by API

- healthcheck: Use buildHealthcheckPlanChecks helper for correct plan checks per migration source; update assertions

- leaked_credential_check_rule: Handle empty ID from v4 provider state migration

- list_item: Remove context

- logpush_job: Update model for migration

- ruleset: Fix migration; add redirects_for_ai_training to SourceV4ActionParametersModel; fix duplicate model attribute

- worker: Add UseStateForUnknown() plan modifiers and update tests for observability.traces

- workers_custom_domain: Handle HTTP 200 no content header; update assertions

- workers_script: Fix model drift

- zero_trust_access_identity_provider: Fix boolean drifts

- zero_trust_device_managed_networks: Upgrade resource state

- zero_trust_gateway_policy: Make filters Computed+Optional to prevent drift

- zero_trust_gateway_settings: Fix breaking changes; implement sweeper to reset account to clean defaults

- zone_setting: Migration test improvements and fixes

- healthcheck: Update port description to clarify defaults

- Add application-scoped access policy migration guidance

- Update zone_settings_override migration guide for tf-migrate v2 workflow

We're excited to announce tf-migrate, a purpose-built CLI tool that simplifies migrating from Cloudflare Terraform Provider v4 to v5.

Terraform Provider v5 is stable and actively receiving updates. We encourage all users to migrate to v5 to take advantage of ongoing enhancements and new capabilities.

Cloudflare uses tf-migrate, the same tool we're providing to the community, to migrate our own infrastructure, which helps ensure the best possible migration experience.

tf-migrate automates the tedious and error-prone parts of the v4 to v5 migration process:

- Resource type renames – Automatically updates `cloudflare_record` → `cloudflare_dns_record`, `cloudflare_access_application` → `cloudflare_zero_trust_access_application`, and 40+ other renamed resources

- Attribute transformations – Updates field names (for example, `value` → `content` for DNS records) and restructures nested blocks

- Moved block generation – Creates Terraform 1.8+ `moved` blocks to prevent resource replacements and ensure zero-downtime migrations

- Cross-file reference updates – Automatically finds and updates all references to renamed resources across your entire configuration

- Dry-run mode – Preview all changes before applying them to ensure safety

Combined with the automatic state upgraders introduced in v5.19+, tf-migrate eliminates the manual work and risk that previously made v5 migrations challenging: tf-migrate rewrites your configuration files, and the built-in state upgraders migrate the corresponding Terraform state.

Tf-migrate currently supports the most common Terraform resources our customers use. We are actively working to expand coverage, with the most commonly used resources prioritized first.

For the complete list of supported resources and their migration status, refer to the v5 Stabilization Tracker ↗. This list is updated regularly as additional resources are stabilized and migration support is added.

Resources not yet supported by tf-migrate will need to be migrated manually using the version 5 upgrade guide ↗. The upgrade guide provides step-by-step instructions for handling resource renames, attribute changes, and state migrations.

- Download tf-migrate ↗

- Version 5 Migration Guide ↗

- Terraform Provider documentation ↗

- v5 Stabilization Tracker ↗

We have been releasing Betas over the past month and a half while testing this tool. See the full changelog of those Betas here: tf-migrate releases ↗.


In this release, you'll see a number of breaking changes. These are primarily due to changes in the OpenAPI definitions our libraries are based on, and to updates in the code generation tooling that reads those definitions to produce our SDK libraries.

Please read through the list of changes below before moving to this version; it will help you anticipate any downstream or upstream issues the upgrade may cause in your environments.

See the v6.10.0 Migration Guide ↗ for before/after code examples and actions needed for each change.

Several fields have been removed from `AbuseReportNewParamsBodyAbuseReportsRegistrarWhoisReportRegWhoRequest`:

- `RegWhoGoodFaithAffirmation`
- `RegWhoLawfulProcessingAgreement`
- `RegWhoLegalBasis`
- `RegWhoRequestType`
- `RegWhoRequestedDataElements`

The `InstanceNewParams` and `InstanceUpdateParams` types have been significantly restructured. Many fields have been moved or removed:

- `InstanceNewParams.TokenID`, `Type`, `CreatedFromAISearchWizard`, `WorkerDomain` removed
- `InstanceUpdateParams`: most configuration fields removed (including `IndexMethod`, `IndexingOptions`, `MaxNumResults`, `Metadata`, `Paused`, `PublicEndpointParams`, `Reranking`, `RerankingModel`, `RetrievalOptions`, `RewriteModel`, `RewriteQuery`, `ScoreThreshold`, `SourceParams`, `Summarization`, `SummarizationModel`, `SystemPromptAISearch`, `SystemPromptIndexSummarization`, `SystemPromptRewriteQuery`, `TokenID`, `CreatedFromAISearchWizard`, `WorkerDomain`)
- `InstanceSearchParams.Messages` field removed along with `InstanceSearchParamsMessage` and `InstanceSearchParamsMessagesRole` types

The `InstanceItemService` type has been removed. The items sub-resource at `client.AISearch.Instances.Items` no longer exists in the non-namespace path. Use `client.AISearch.Namespaces.Instances.Items` instead.

The following types have been removed from the `ai_search` package:

- `TokenDeleteResponse`
- `TokenListParams` (and associated `TokenListParamsOrderBy`, `TokenListParamsOrderByDirection`)

The `Investigate.Move.New()` method now returns a raw slice instead of a paginated wrapper:

- `New()` returns `*[]InvestigateMoveNewResponse` instead of `*pagination.SinglePage[InvestigateMoveNewResponse]`
- `NewAutoPaging()` method removed

The `ConfigEditParams` type lost its `MTLS` and `Name` fields. The `HyperdriveMTLSParam` type lost `MTLS` and `Host` fields. The `Host` field on origin config changed from `param.Field[string]` to a plain `string`.

The `UserGroupMemberNewParams` struct has been restructured and the `New()` method now returns a paginated response:

- `UserGroupMemberNewParams.Body` renamed to `UserGroupMemberNewParams.Members`
- `UserGroupMemberNewParamsBody` renamed to `UserGroupMemberNewParamsMember`
- `UserGroupMemberUpdateParams.Body` renamed to `UserGroupMemberUpdateParams.Members`
- `UserGroupMemberUpdateParamsBody` renamed to `UserGroupMemberUpdateParamsMember`
- `UserGroups.Members.New()` returns `*pagination.SinglePage[UserGroupMemberNewResponse]` instead of `*UserGroupMemberNewResponse`

The `UserGroupListParams.Direction` field changed from `param.Field[string]` to `param.Field[UserGroupListParamsDirection]` (typed enum with `asc`/`desc` values).

Several delete methods across Pipelines now return typed responses instead of a bare `error`:

- `Pipelines.DeleteV1()` returns `(*PipelineDeleteV1Response, error)` instead of `error`
- `Pipelines.Sinks.Delete()` returns `(*SinkDeleteResponse, error)` instead of `error`
- `Pipelines.Streams.Delete()` returns `(*StreamDeleteResponse, error)` instead of `error`

The following response envelope types have been removed:

- `MessageBulkPushResponseSuccess`
- `MessagePushResponseSuccess`
- `MessageAckResponse` fields `RetryCount` and `Warnings` removed

Methods now return direct types instead of `SinglePage` wrappers, and several internal types have been removed. Associated `AutoPaging` methods have also been removed:

- `Stores.New()` returns `*StoreNewResponse` instead of `*pagination.SinglePage[StoreNewResponse]`
- `Stores.NewAutoPaging()` method removed
- `Stores.Secrets.BulkDelete()` returns `*StoreSecretBulkDeleteResponse` instead of `*pagination.SinglePage[StoreSecretBulkDeleteResponse]`
- `Stores.Secrets.BulkDeleteAutoPaging()` method removed
- Removed types: `StoreDeleteResponse`, `StoreDeleteResponseEnvelopeResultInfo`, `StoreSecretDeleteResponse`, `StoreSecretDeleteResponseStatus`, `StoreSecretBulkDeleteResponse` (old shape), `StoreSecretBulkDeleteResponseStatus`, `StoreSecretDeleteResponseEnvelopeResultInfo`
- `StoreNewParams` restructured (old `StoreNewParamsBody` removed)
- `StoreSecretBulkDeleteParams` restructured

The `AudioTracks.Get()` method now returns a dedicated response type instead of a paginated list. The `GetAutoPaging()` method has been removed:

- `Get()` returns `*AudioTrackGetResponse` instead of `*pagination.SinglePage[Audio]`
- `GetAutoPaging()` method removed

The `Clip.New()` method now returns the shared `Video` type. The following types have been entirely removed: `Clip`, `ClipPlayback`, `ClipStatus`, `ClipWatermark`.

- `ClipNewParams.MaxDurationSeconds`, `ThumbnailTimestampPct`, `Watermark` removed
- `CopyNewParams.ThumbnailTimestampPct`, `Watermark` removed

- `DownloadNewResponseStatus` type removed
- `WebhookUpdateResponse` and `WebhookGetResponse` changed from `interface{}` type aliases to full struct types

The following union interface types have been removed:

- `AccessAIControlMcpPortalListResponseServersUpdatedPromptsUnion`
- `AccessAIControlMcpPortalListResponseServersUpdatedToolsUnion`
- `AccessAIControlMcpPortalReadResponseServersUpdatedPromptsUnion`
- `AccessAIControlMcpPortalReadResponseServersUpdatedToolsUnion`

NEW SERVICE: Full vulnerability scanning management

- CredentialSets - CRUD for credential sets (`New`, `Update`, `List`, `Delete`, `Edit`, `Get`)

- Credentials - Manage credentials within sets (`New`, `Update`, `List`, `Delete`, `Edit`, `Get`)

- Scans - Create and manage vulnerability scans (`New`, `List`, `Get`)

- TargetEnvironments - Manage scan target environments (`New`, `Update`, `List`, `Delete`, `Edit`, `Get`)

NEW SERVICE: Namespace-scoped AI Search management

`New()`, `Update()`, `List()`, `Delete()`, `ChatCompletions()`, `Read()`, `Search()`

- Instances - Namespace-scoped instances (`New`, `Update`, `List`, `Delete`, `ChatCompletions`, `Read`, `Search`, `Stats`)

- Jobs - Instance job management (`New`, `Update`, `List`, `Get`, `Logs`)

- Items - Instance item management (`List`, `Delete`, `Chunks`, `NewOrUpdate`, `Download`, `Get`, `Logs`, `Sync`, `Upload`)

NEW SERVICE: DevTools protocol browser control

- Session - List and get devtools sessions

- Browser - Browser lifecycle management (`New`, `Delete`, `Connect`, `Launch`, `Protocol`, `Version`)

- Page - Get page by target ID

- Targets - Manage browser targets (`New`, `List`, `Activate`, `Get`)

NEW: Domain check and search endpoints

- `Check()` - `POST /accounts/{account_id}/registrar/domain-check`
- `Search()` - `GET /accounts/{account_id}/registrar/domain-search`

NEW: Registration management (`client.Registrar.Registrations`)

- `New()`, `List()`, `Edit()`, `Get()`
- `RegistrationStatus.Get()` - Get registration workflow status
- `UpdateStatus.Get()` - Get update workflow status

NEW SERVICE: Manage origin cloud region configurations

`New()`, `List()`, `Delete()`, `BulkDelete()`, `BulkEdit()`, `Edit()`, `Get()`, `SupportedRegions()`

NEW SERVICE: DLP settings management

`Update()`, `Delete()`, `Edit()`, `Get()`

- `AgentReadiness.Summary()` - Agent readiness summary by dimension
- `AI.MarkdownForAgents.Summary()` - Markdown-for-agents summary
- `AI.MarkdownForAgents.Timeseries()` - Markdown-for-agents timeseries

- `UserGroups.Members.Get()` - Get details of a specific member in a user group
- `UserGroups.Members.NewAutoPaging()` - Auto-paging variant for adding members
- `UserGroups.NewParams.Policies` changed from required to optional

`ContentBotsProtection` field added to `BotFightModeConfiguration` and `SubscriptionConfiguration` (`block`/`disabled`)

None in this release.