Changelog

New updates and improvements at Cloudflare.

When using a Worker with the `nodejs_compat` compatibility flag enabled, you can now use the following Node.js APIs:

You can use `node:net` to create a direct connection to servers via TCP sockets with `net.Socket`.

index.js

```js
import net from "node:net";

const exampleIP = "127.0.0.1";

export default {
  async fetch(req) {
    const socket = new net.Socket();
    socket.connect(4000, exampleIP, function () {
      console.log("Connected");
    });
    socket.write("Hello, Server!");
    socket.end();
    return new Response("Wrote to server", { status: 200 });
  },
};
```

index.ts

```ts
import net from "node:net";

const exampleIP = "127.0.0.1";

export default {
  async fetch(req): Promise<Response> {
    const socket = new net.Socket();
    socket.connect(4000, exampleIP, function () {
      console.log("Connected");
    });
    socket.write("Hello, Server!");
    socket.end();
    return new Response("Wrote to server", { status: 200 });
  },
} satisfies ExportedHandler;
```

Additionally, you can now use other APIs including `net.BlockList` and `net.SocketAddress`.

Note that `net.Server` is not supported.

You can use `node:dns` for name resolution via DNS over HTTPS using Cloudflare DNS at 1.1.1.1.

index.js

```js
import dns from "node:dns";

// resolve4 looks up A (IPv4) records, so it takes no record-type argument.
let response = await dns.promises.resolve4("cloudflare.com");
```

index.ts

```ts
import dns from "node:dns";

// resolve4 looks up A (IPv4) records, so it takes no record-type argument.
let response = await dns.promises.resolve4("cloudflare.com");
```

All `node:dns` functions are available, except `lookup`, `lookupService`, and `resolve`, which throw "Not implemented" errors when called.

You can use `node:timers` to schedule functions to be called at some future period of time.

This includes `setTimeout` for calling a function after a delay, `setInterval` for calling a function repeatedly, and `setImmediate` for calling a function in the next iteration of the event loop.

index.js

```js
import timers from "node:timers";

console.log("first");
timers.setTimeout(() => {
  console.log("last");
}, 10);
timers.setTimeout(() => {
  console.log("next");
});
```

index.ts

```ts
import timers from "node:timers";

console.log("first");
timers.setTimeout(() => {
  console.log("last");
}, 10);
timers.setTimeout(() => {
  console.log("next");
});
```
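The entry mentions `net.BlockList` without showing it in use. The following is a minimal sketch using the standard Node.js `BlockList` methods (`addSubnet`, `addAddress`, `check`); the addresses and the `isAllowed` helper are illustrative assumptions, not values or functions from this changelog.

```typescript
import { BlockList } from "node:net";

// Build a block list from one subnet and one single address.
// These addresses are arbitrary examples chosen for illustration.
const blockList = new BlockList();
blockList.addSubnet("10.0.0.0", 8); // blocks every address in 10.0.0.0/8
blockList.addAddress("192.168.1.1"); // blocks exactly this IPv4 address

// Hypothetical helper: report whether a target address is not on the list.
function isAllowed(ip: string): boolean {
  return !blockList.check(ip);
}

console.log(isAllowed("10.0.0.5"), isAllowed("8.8.8.8"));
```

A check like this could run before `socket.connect()` to refuse connections to unwanted ranges, assuming the Workers implementation matches Node.js behavior for these calls.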

Workflows (beta) now allows you to define up to 1024 steps. `sleep` steps do not count against this limit.

We've also added:

- `instanceId` as a property on the `WorkflowEvent` type, allowing you to retrieve the current instance ID from within a running Workflow instance.

- Improved queueing logic for Workflow instances beyond the current maximum concurrent instances, reducing the cases where instances are stuck in the queued state.

- Support for `pause` and `resume` for Workflow instances in a queued state.

We're continuing to work on increases to the number of concurrent Workflow instances, steps, and support for a new `waitForEvent` API over the coming weeks.
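As a rough illustration of the new `instanceId` property, the sketch below tags log lines with the current instance ID. `WorkflowEventLike` is a local stand-in that declares only the fields used here, and `tagWithInstance` is a hypothetical helper; in a real Worker the event type would come from the Workers runtime types.

```typescript
// Local stand-in for the fields of WorkflowEvent used below (an assumption
// made so the example is self-contained).
interface WorkflowEventLike<T> {
  payload: T;
  instanceId: string;
}

// Hypothetical helper: prefix a log message with the current instance ID.
function tagWithInstance<T>(event: WorkflowEventLike<T>, message: string): string {
  return `[instance ${event.instanceId}] ${message}`;
}

// Example event with made-up values.
const exampleEvent: WorkflowEventLike<{ orderId: number }> = {
  payload: { orderId: 42 },
  instanceId: "example-instance-id",
};

console.log(tagWithInstance(exampleEvent, "starting order step"));
```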

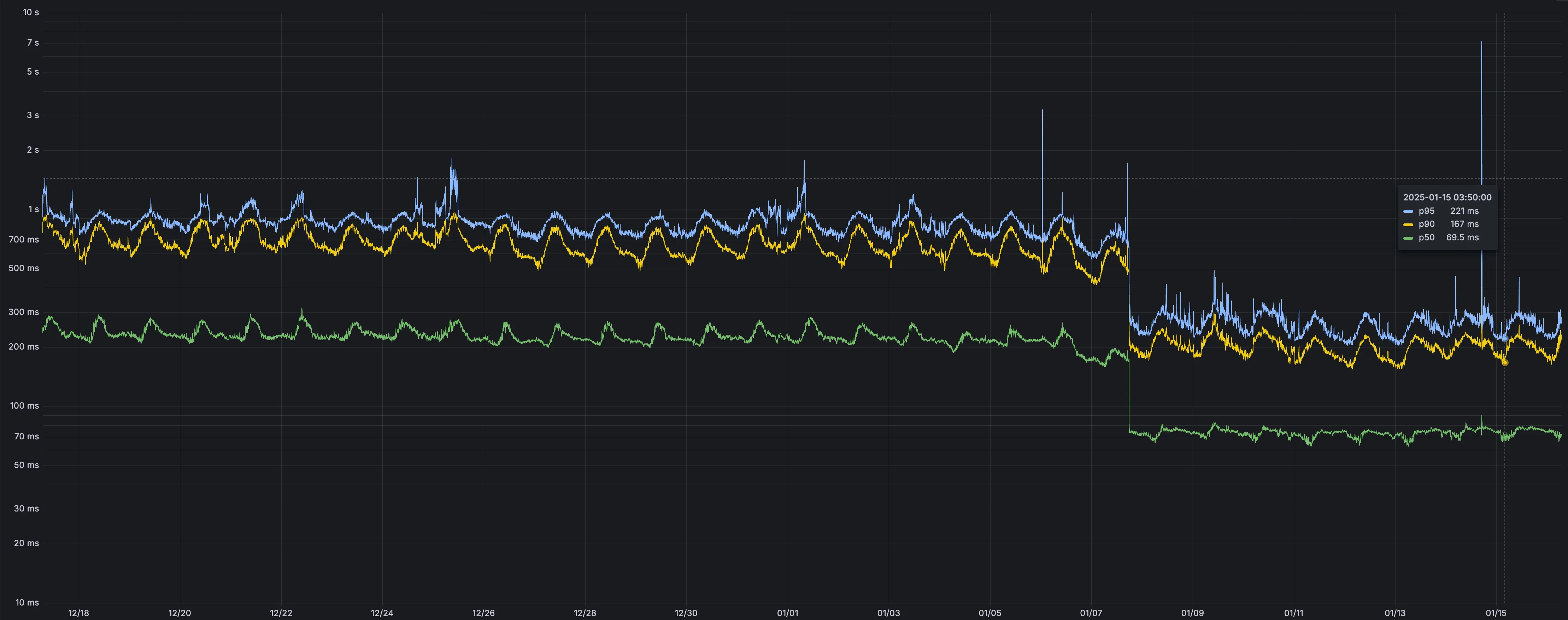

Users making D1 requests via the Workers API can see up to a 60% end-to-end latency improvement due to the removal of redundant network round trips needed for each request to a D1 database.

p50, p90, and p95 request latency aggregated across entire D1 service. These latencies are a reference point and should not be viewed as your exact workload improvement.

This performance improvement benefits all D1 Worker API traffic, especially cross-region requests where network latency is an outsized latency factor, such as a user in Europe talking to a database in North America. D1 location hints can be used to influence the geographic location of a database.

For more details on how D1 removed redundant round trips, see the D1-specific release note entry.
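To see why removing round trips helps cross-region requests most, consider a deliberately simplified model in which end-to-end latency is the number of round trips times the round-trip time, plus a fixed processing cost. Every number below is hypothetical and chosen only to show the trend; none of them are D1 measurements, and the function names are illustrative.

```typescript
// Simplified model: total latency = round trips * RTT + fixed processing time.
function endToEndMs(roundTrips: number, rttMs: number, processingMs: number): number {
  return roundTrips * rttMs + processingMs;
}

// Fractional improvement when going from `before` to `after` round trips.
function improvement(before: number, after: number, rttMs: number, processingMs: number): number {
  const oldMs = endToEndMs(before, rttMs, processingMs);
  const newMs = endToEndMs(after, rttMs, processingMs);
  return (oldMs - newMs) / oldMs;
}

// Hypothetical inputs: 2 round trips reduced to 1, 10 ms of processing.
const crossRegion = improvement(2, 1, 100, 10); // (210 - 110) / 210
const sameRegion = improvement(2, 1, 5, 10); // (20 - 15) / 20

console.log(crossRegion.toFixed(2), sameRegion.toFixed(2));
```

Under these assumptions the cross-region request improves by roughly 48% while the same-region request improves by 25%, because the round-trip time dominates the total when the database is far away.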

AI Gateway now supports DeepSeek, including their cutting-edge DeepSeek-V3 model. With this addition, you have even more flexibility to manage and optimize your AI workloads using AI Gateway. Whether you're leveraging DeepSeek or other providers, like OpenAI, Anthropic, or Workers AI, AI Gateway empowers you to:

- Monitor: Gain actionable insights with analytics and logs.

- Control: Implement caching, rate limiting, and fallbacks.

- Optimize: Improve performance with feedback and evaluations.

To get started, simply update the base URL of your DeepSeek API calls to route through AI Gateway. Here's how you can send a request using cURL:

Example fetch request

```bash
curl https://gateway.ai.cloudflare.com/v1/{account_id}/{gateway_id}/deepseek/chat/completions \
  --header 'content-type: application/json' \
  --header 'Authorization: Bearer DEEPSEEK_TOKEN' \
  --data '{
    "model": "deepseek-chat",
    "messages": [
      {
        "role": "user",
        "content": "What is Cloudflare?"
      }
    ]
  }'
```

For detailed setup instructions, see our DeepSeek provider documentation.
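The same request can be assembled in TypeScript. This is a sketch that mirrors the cURL call above (same URL shape, headers, and body); `buildDeepSeekRequest` and the `GatewayRequest` type are hypothetical names, and the account ID, gateway ID, and token are placeholders.

```typescript
interface GatewayRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Hypothetical helper: build the URL and fetch options for a chat completion
// routed through AI Gateway to DeepSeek.
function buildDeepSeekRequest(
  accountId: string,
  gatewayId: string,
  token: string,
  prompt: string,
): GatewayRequest {
  return {
    url: `https://gateway.ai.cloudflare.com/v1/${accountId}/${gatewayId}/deepseek/chat/completions`,
    init: {
      method: "POST", // cURL sends POST when --data is supplied
      headers: {
        "content-type": "application/json",
        Authorization: `Bearer ${token}`,
      },
      body: JSON.stringify({
        model: "deepseek-chat",
        messages: [{ role: "user", content: prompt }],
      }),
    },
  };
}

// Placeholder values, not real credentials.
const exampleRequest = buildDeepSeekRequest(
  "ACCOUNT_ID",
  "GATEWAY_ID",
  "DEEPSEEK_TOKEN",
  "What is Cloudflare?",
);
console.log(exampleRequest.url);
```

The result can be passed to `fetch(exampleRequest.url, exampleRequest.init)` from a Worker or any runtime that provides `fetch`.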

Workers Builds, the integrated CI/CD system for Workers (currently in beta), now lets you cache artifacts across builds, speeding up build jobs by eliminating repeated work, such as downloading dependencies at the start of each build.

- Build Caching: Cache dependencies and build outputs between builds with a shared project-wide cache, ensuring faster builds for the entire team.

- Build Watch Paths: Define paths to include or exclude from the build process, ideal for monorepos to target only the files that need to be rebuilt per Workers project.

To get started, select your Worker on the Cloudflare dashboard, then go to Settings > Builds, and connect a GitHub or GitLab repository. Once connected, you'll see options to configure Build Caching and Build Watch Paths.
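The idea behind Build Watch Paths can be sketched as a filter over the files changed in a commit. The matching rules below are assumptions made for illustration (a `*` wildcard that matches any run of characters, and a build that runs when at least one changed file is included and not excluded); they are not taken from the Workers Builds implementation, and all function names are hypothetical.

```typescript
// Convert a pattern where `*` matches any run of characters into a RegExp.
function patternToRegExp(pattern: string): RegExp {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&")) // escape regex metacharacters
    .join(".*");
  return new RegExp(`^${escaped}$`);
}

// True if the file path matches at least one of the patterns.
function matchesAny(file: string, patterns: string[]): boolean {
  return patterns.some((p) => patternToRegExp(p).test(file));
}

// Build when at least one changed file is included and not excluded.
function shouldBuild(changed: string[], include: string[], exclude: string[]): boolean {
  return changed.some((f) => matchesAny(f, include) && !matchesAny(f, exclude));
}

// Monorepo example: only changes under apps/api should rebuild this Worker,
// and Markdown-only changes should not.
console.log(shouldBuild(["apps/api/src/index.ts"], ["apps/api/*"], ["*.md"]));
console.log(shouldBuild(["apps/web/README.md"], ["apps/api/*"], ["*.md"]));
```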

The latest `cloudflared` build 2024.12.2 introduces the ability to collect all the diagnostic logs needed to troubleshoot a `cloudflared` instance.

A diagnostic report collects data from a single instance of `cloudflared` running on the local machine and outputs it to a `cloudflared-diag` file.

For more information, refer to Diagnostic logs.

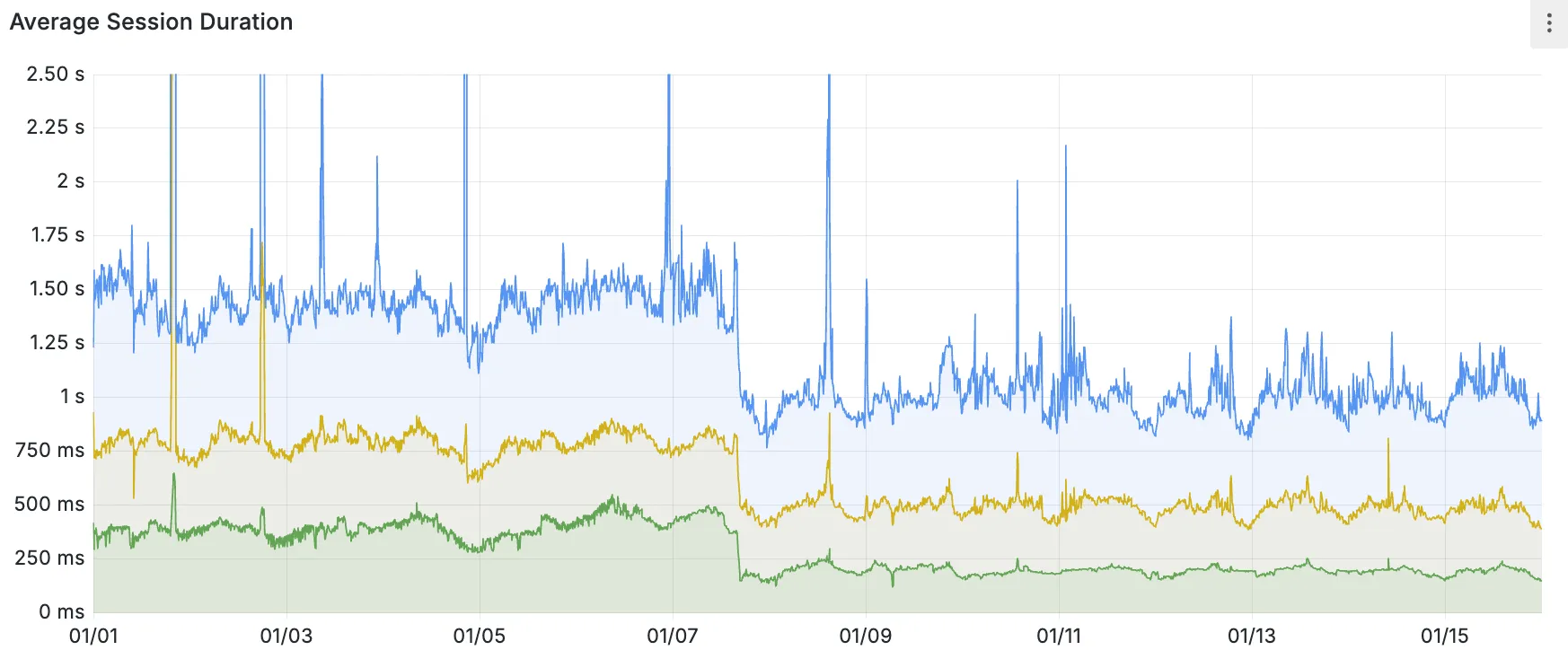

Hyperdrive now caches queries in all Cloudflare locations, decreasing cache hit latency by up to 90%.

When you make a query to your database and Hyperdrive has cached the query results, Hyperdrive will now return the results from the nearest cache. By caching data closer to your users, the latency for cache hits reduces by up to 90%.

This reduction in cache hit latency is reflected in a reduction of the session duration for all queries (cached and uncached) from Cloudflare Workers to Hyperdrive, as illustrated below.

P50, P75, and P90 Hyperdrive session latency for all client connection sessions (both cached and uncached queries) for Hyperdrive configurations with caching enabled during the rollout period.

This performance improvement is applied to all new and existing Hyperdrive configurations that have caching enabled.

For more details on how Hyperdrive performs query caching, refer to the Hyperdrive documentation.

You can now use the `cache` property of the `Request` interface to bypass Cloudflare's cache when making subrequests from Cloudflare Workers, by setting its value to `no-store`.

index.js

```js
export default {
  async fetch(req, env, ctx) {
    const request = new Request("https://cloudflare.com", {
      cache: "no-store",
    });
    const response = await fetch(request);
    return response;
  },
};
```

index.ts

```ts
export default {
  async fetch(req, env, ctx): Promise<Response> {
    const request = new Request("https://cloudflare.com", {
      cache: "no-store",
    });
    const response = await fetch(request);
    return response;
  },
} satisfies ExportedHandler<Environment>;
```

When you set the value to `no-store` on a subrequest made from a Worker, the Cloudflare Workers runtime will not check whether a match exists in the cache, and will not add the response to the cache, even if the response includes directives in the `Cache-Control` HTTP header that otherwise indicate that the response is cacheable.

This increases compatibility with NPM packages and JavaScript frameworks that rely on setting the `cache` property, which is a cross-platform standard part of the `Request` interface. Previously, if you set the `cache` property on `Request`, the Workers runtime threw an exception.

If you've tried to use `@planetscale/database`, `redis-js`, `stytch-node`, `supabase`, or `axiom-js`, or have seen the error message `The cache field on RequestInitializerDict is not implemented in fetch`, try again after making sure that the Compatibility Date of your Worker is set to `2024-11-11` or later, or that the `cache_option_enabled` compatibility flag is enabled for your Worker.

- Learn how the Cache works with Cloudflare Workers

- Enable Node.js compatibility for your Cloudflare Worker

- Explore Runtime APIs and Bindings available in Cloudflare Workers
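For library code that may run on Workers with an older compatibility date, one possible pattern is to try `cache: "no-store"` first and retry without it if request construction throws. The sketch below is an assumption-laden illustration, not a recommended or official approach: `createNoStore` and the injected `factory` parameter are hypothetical, and the factory is passed in so the example does not depend on a global `Request`.

```typescript
type Init = Record<string, unknown>;

// Build a request with cache: "no-store"; if the factory throws (as older
// runtimes did when `cache` was set), retry without the option.
function createNoStore<T>(
  factory: (url: string, init: Init) => T,
  url: string,
  init: Init = {},
): T {
  try {
    return factory(url, { ...init, cache: "no-store" });
  } catch {
    return factory(url, init);
  }
}

// Stand-in factories used to exercise both paths.
const lenientFactory = (url: string, init: Init) => ({ url, init });
const strictFactory = (url: string, init: Init) => {
  if ("cache" in init) throw new Error("cache field is not implemented");
  return { url, init };
};

console.log(createNoStore(lenientFactory, "https://cloudflare.com").init);
console.log(createNoStore(strictFactory, "https://cloudflare.com").init);
```

In a Worker, the factory would typically wrap `new Request(url, init)`. Note that the fallback silently gives up the cache bypass, which may not be acceptable for every application.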

Workflows is now in open beta, and available to any developer on a free or paid Workers plan.

Workflows allows you to build multi-step applications that can automatically retry, persist state, and run for minutes, hours, days, or weeks. Workflows introduces a programming model that makes it easier to build reliable, long-running tasks, observe them as they progress, and programmatically trigger instances based on events across your services.

You can get started with Workflows by following our get started guide or by using `npm create cloudflare` to pull down the starter project:

```sh
npm create cloudflare@latest workflows-starter -- --template "cloudflare/workflows-starter"
```

You can open the `src/index.ts` file, extend it, and use `wrangler deploy` to deploy your first Workflow. From there, you can:

- Learn the Workflows API

- Trigger Workflows via your Workers apps.

- Understand the Rules of Workflows and how to adopt best practices
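The "automatically retry, persist state" model described above can be illustrated with a small in-memory sketch. This is not the Workflows API or how Workflows is implemented: `runStep` and `StepState` are hypothetical names, the code is synchronous for brevity, and state lives in a `Map` rather than durable storage.

```typescript
// Stored results keyed by step name; a real engine would persist these durably.
type StepState = Map<string, unknown>;

// Run a named step: reuse a stored result if one exists, otherwise try up to
// `maxAttempts` times and store the first successful result.
function runStep<T>(state: StepState, name: string, maxAttempts: number, fn: () => T): T {
  if (state.has(name)) return state.get(name) as T;
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      const result = fn();
      state.set(name, result);
      return result;
    } catch (err) {
      lastError = err; // remember the failure and try again
    }
  }
  throw lastError;
}

// A step that fails twice before succeeding.
const state: StepState = new Map();
let calls = 0;
const flaky = () => {
  calls++;
  if (calls < 3) throw new Error("transient failure");
  return "charged";
};

const first = runStep(state, "charge card", 5, flaky); // succeeds on the third call
const replay = runStep(state, "charge card", 5, flaky); // served from state, no new call
console.log(first, replay, calls);
```

Because completed steps are looked up by name before running, re-executing the same sequence after a crash skips work that already succeeded, which is the property that lets long-running tasks resume rather than restart.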

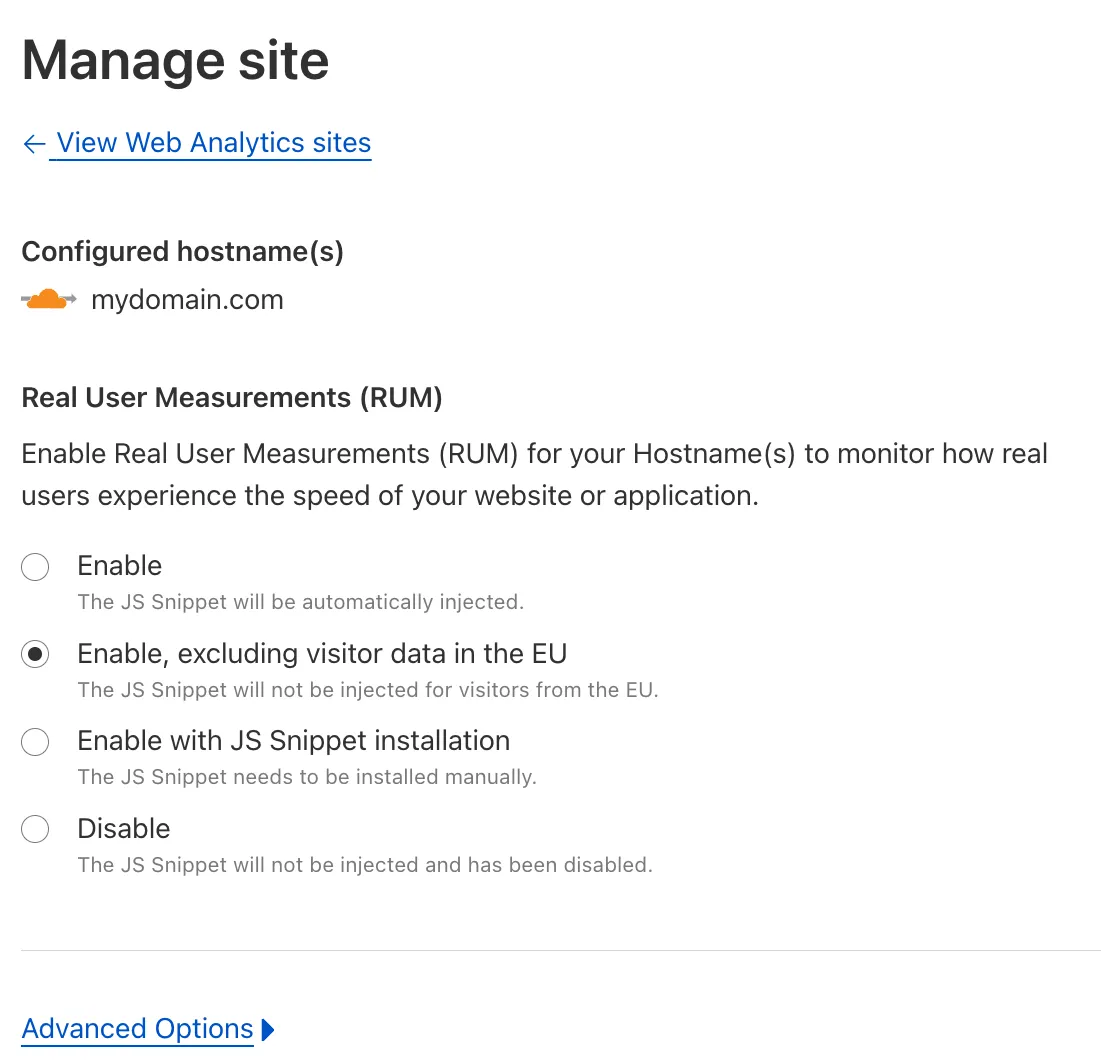

You can now easily enable Real User Monitoring (RUM) for your hostnames, while safely dropping requests from visitors in the European Union to comply with GDPR and CCPA.

Our Web Analytics product has always been centered on giving you insights into your users' experience that you need to provide the best quality experience, without sacrificing user privacy in the process.

To help with that aim, you can now selectively enable RUM for your hostname and exclude EU visitor data in a single click. If you choose this option, we will automatically drop all metrics collected by our EU data centers.

You can learn more about what metrics are reported by Web Analytics and how they are collected in the Web Analytics documentation. You can enable Web Analytics on any hostname by going to the Web Analytics section of the dashboard, selecting "Manage Site" for the hostname you want to monitor, and choosing the appropriate enablement option.