Changelog

New updates and improvements at Cloudflare.

AI Search now supports custom HTTP headers for website crawling, solving a common problem where valuable content behind authentication or access controls could not be indexed.

Previously, AI Search could only crawl publicly accessible pages, leaving knowledge bases, documentation, and other protected content out of your search results. With custom headers support, you can now include authentication credentials that allow the crawler to access this protected content.

This is particularly useful for indexing content like:

- Internal documentation behind corporate login systems

- Premium content that requires authenticated access to unlock

- Sites protected by Cloudflare Access using service tokens

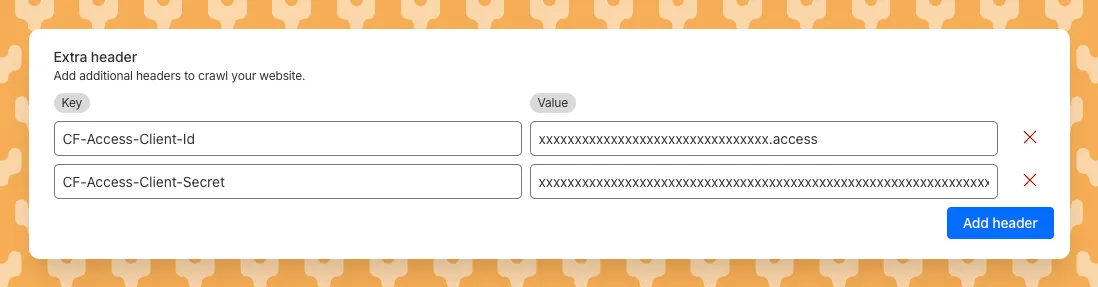

To add custom headers when creating an AI Search instance, select Parse options. In the Extra headers section, you can add up to five custom headers per Website data source.

For example, to crawl a site protected by Cloudflare Access, you can add service token credentials as custom headers:

```
CF-Access-Client-Id: your-token-id.access
CF-Access-Client-Secret: your-token-secret
```

The crawler will automatically include these headers in all requests, allowing it to access protected pages that would otherwise be blocked.
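As a rough illustration of the header setup described above, the sketch below assembles the two Cloudflare Access service-token headers and checks the five-header limit before the values are entered in the dashboard. The helper name `buildCrawlHeaders` and the placeholder credentials are hypothetical. AI Search itself is configured through the dashboard, not through this function.

```javascript
// Hypothetical helper: not part of any Cloudflare SDK.
// Limit stated in the changelog: five custom headers per Website data source.
const MAX_EXTRA_HEADERS = 5;

function buildCrawlHeaders(clientId, clientSecret, extra = {}) {
  const headers = {
    "CF-Access-Client-Id": clientId,
    "CF-Access-Client-Secret": clientSecret,
    ...extra, // any additional custom headers the protected site needs
  };
  const count = Object.keys(headers).length;
  if (count > MAX_EXTRA_HEADERS) {
    throw new Error(`Too many headers: ${count} (limit is ${MAX_EXTRA_HEADERS})`);
  }
  return headers;
}

// Placeholder values copied from the example above.
const headers = buildCrawlHeaders("your-token-id.access", "your-token-secret");
console.log(headers);
```

Checking the count up front is only a convenience for catching a configuration that the dashboard would not accept.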

Learn more about configuring custom headers for website crawling in AI Search.

You can now perform more powerful queries directly in Workers Analytics Engine ↗ with a major expansion of our SQL function library.

Workers Analytics Engine allows you to ingest and store high-cardinality data at scale (such as custom analytics) and query your data through a simple SQL API.

Today, we've expanded Workers Analytics Engine's SQL capabilities with several new functions:

- `countIf()` - count the number of rows which satisfy a provided condition
- `sumIf()` - calculate a sum from rows which satisfy a provided condition
- `avgIf()` - calculate an average from rows which satisfy a provided condition

New date and time functions: ↗

- `toYear()`
- `toMonth()`
- `toDayOfMonth()`
- `toDayOfWeek()`
- `toHour()`
- `toMinute()`
- `toSecond()`
- `toStartOfYear()`
- `toStartOfMonth()`
- `toStartOfWeek()`
- `toStartOfDay()`
- `toStartOfHour()`
- `toStartOfFifteenMinutes()`
- `toStartOfTenMinutes()`
- `toStartOfFiveMinutes()`
- `toStartOfMinute()`
- `today()`
- `toYYYYMM()`

Whether you're building usage-based billing systems, customer analytics dashboards, or other custom analytics, these functions let you get the most out of your data. Get started with Workers Analytics Engine and explore all available functions in our SQL reference documentation.
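To make the new functions more concrete, here is a minimal sketch that builds an hourly error report using `toStartOfHour()`, `countIf()`, and `avgIf()` and sends it to the Analytics Engine SQL API. The dataset name `my_dataset`, the meaning assigned to `double1` and `double2`, and the `expression, condition` argument order for `avgIf()` are assumptions made for illustration. Check the SQL reference before relying on them.

```javascript
// Hypothetical dataset layout: double1 = HTTP status code, double2 = latency in ms.
function buildHourlyErrorQuery(dataset) {
  return [
    "SELECT",
    "  toStartOfHour(timestamp) AS hour,",
    "  countIf(double1 >= 500) AS errors,",
    "  avgIf(double2, double1 < 500) AS avg_ok_latency_ms",
    `FROM ${dataset}`,
    "WHERE timestamp > NOW() - INTERVAL '1' DAY",
    "GROUP BY hour",
    "ORDER BY hour",
  ].join("\n");
}

// Sends the query to the SQL API. accountId and apiToken are placeholders
// that you would supply from your own account.
async function runQuery(accountId, apiToken, sql) {
  const url = `https://api.cloudflare.com/client/v4/accounts/${accountId}/analytics_engine/sql`;
  const res = await fetch(url, {
    method: "POST",
    headers: { Authorization: `Bearer ${apiToken}` },
    body: sql,
  });
  if (!res.ok) throw new Error(`Query failed with status ${res.status}`);
  return res.json();
}

const sql = buildHourlyErrorQuery("my_dataset");
console.log(sql);
```

Keeping query construction separate from the network call makes the SQL easy to inspect or log before it is sent.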

Starting February 2, 2026, the `cloudflared proxy-dns` command will be removed from all new `cloudflared` releases.

This change is being made to enhance security and address a potential vulnerability in an underlying DNS library. This vulnerability is specific to the `proxy-dns` command and does not affect any other `cloudflared` features, such as the core Cloudflare Tunnel service.

The `proxy-dns` command, which runs a client-side DNS-over-HTTPS (DoH) proxy, has been an officially undocumented feature for several years. This functionality is fully and securely supported by our actively developed products.

Versions of `cloudflared` released before this date will not be affected and will continue to operate. However, note that our official support policy for any `cloudflared` release is one year from its release date.

We strongly advise users of this undocumented feature to migrate to one of the following officially supported solutions before February 2, 2026, to continue benefiting from secure DNS-over-HTTPS.

The preferred method for enabling DNS-over-HTTPS on user devices is the Cloudflare WARP client. The WARP client automatically secures and proxies all DNS traffic from your device, integrating it with your organization's Zero Trust policies and posture checks.

For scenarios where installing a client on every device is not possible (such as servers, routers, or IoT devices), we recommend using the WARP Connector.

Instead of running `cloudflared proxy-dns` on a machine, you can install the WARP Connector on a single Linux host within your private network. This connector will act as a gateway, securely routing all DNS and network traffic from your entire subnet to Cloudflare for filtering and logging.

Wrangler now supports using the `CLOUDFLARE_ENV` environment variable to select the active environment for your Worker commands. This provides a more flexible way to manage environments, especially when working with build tools and CI/CD pipelines.

Environment selection via environment variable:

- Set `CLOUDFLARE_ENV` to specify which environment to use for Wrangler commands

- Works with all Wrangler commands that support the `--env` flag

- The `--env` command line argument takes precedence over the `CLOUDFLARE_ENV` environment variable

```sh
# Deploy to the production environment using CLOUDFLARE_ENV
CLOUDFLARE_ENV=production wrangler deploy

# Upload a version to the staging environment
CLOUDFLARE_ENV=staging wrangler versions upload

# The --env flag takes precedence over CLOUDFLARE_ENV
CLOUDFLARE_ENV=dev wrangler deploy --env production
# This will deploy to production, not dev
```

The `CLOUDFLARE_ENV` environment variable is particularly useful when working with build tools like Vite. You can set the environment once during the build process, and it will be used for both building and deploying your Worker:

```sh
# Set the environment for both build and deploy
CLOUDFLARE_ENV=production npm run build && wrangler deploy
```

When using `@cloudflare/vite-plugin`, the build process generates a "redirected deploy config" that is flattened to only contain the active environment. Wrangler will validate that the environment specified matches the environment used during the build to prevent accidentally deploying a Worker built for one environment to a different environment.
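The precedence rule described in this entry can be modeled in a few lines. This is only a sketch of the documented behavior, not Wrangler's actual implementation. The function name `resolveEnvironment` is hypothetical, and for brevity it only recognizes the `--env <name>` form of the flag.

```javascript
// Hypothetical model of the documented precedence: --env beats CLOUDFLARE_ENV.
// Simplification: only the "--env <name>" form is handled here.
function resolveEnvironment(argv, env) {
  const flagIndex = argv.indexOf("--env");
  if (flagIndex !== -1 && argv[flagIndex + 1] !== undefined) {
    return argv[flagIndex + 1]; // an explicit flag always wins
  }
  if (env.CLOUDFLARE_ENV) {
    return env.CLOUDFLARE_ENV; // otherwise fall back to the environment variable
  }
  return undefined; // neither is set: no named environment is selected
}

// Mirrors the third shell example: the flag overrides the variable.
console.log(resolveEnvironment(["deploy", "--env", "production"], { CLOUDFLARE_ENV: "dev" }));
// prints "production"
```

In a CI pipeline this means a job-level `CLOUDFLARE_ENV` can act as the default while individual steps still override it with `--env`.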

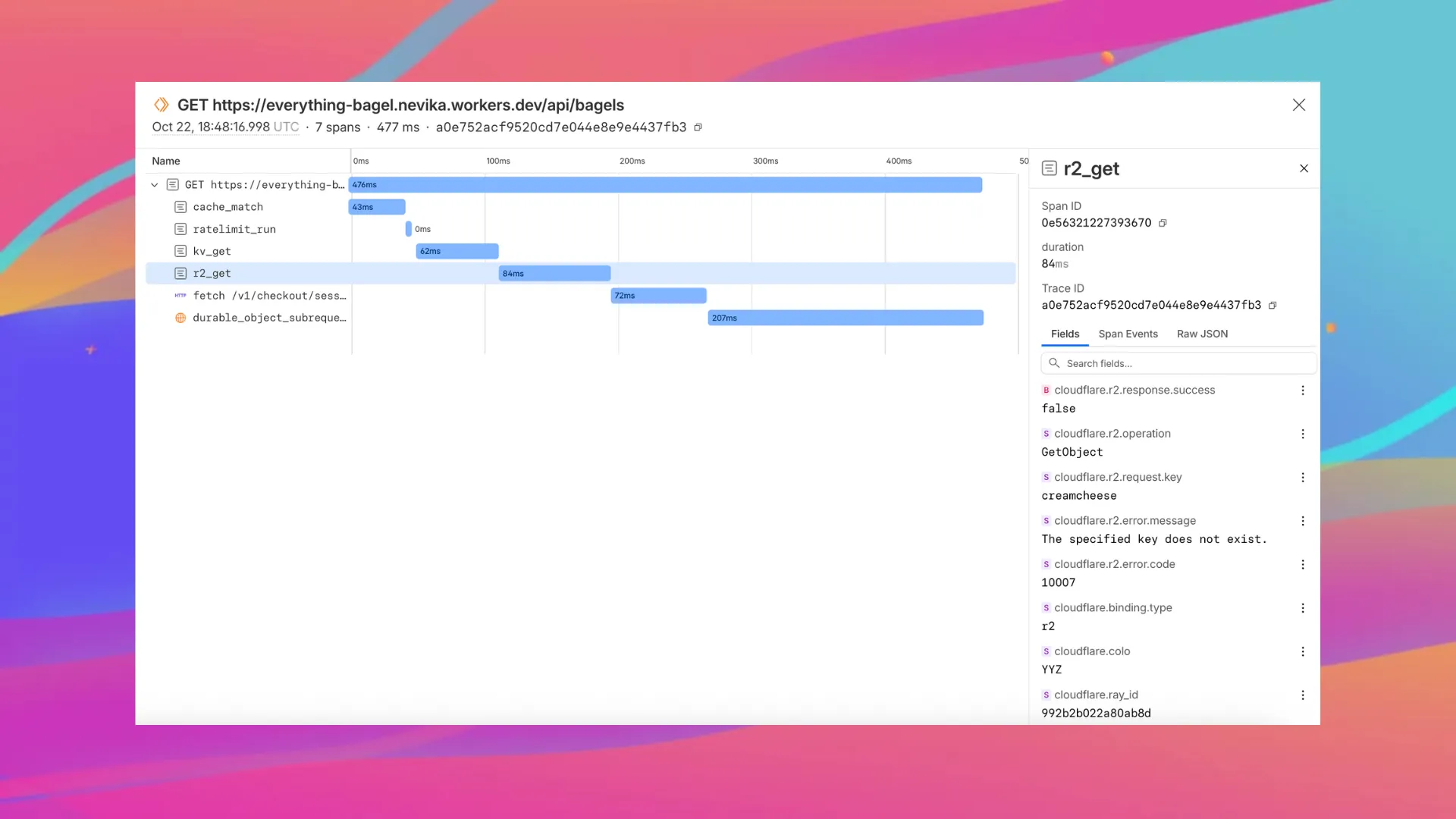

Enable automatic tracing on your Workers, giving you detailed metadata and timing information for every operation your Worker performs.

Tracing helps you identify performance bottlenecks, resolve errors, and understand how your Worker interacts with other services on the Workers platform. You can now answer questions like:

- Which calls are slowing down my application?

- Which queries to my database take the longest?

- What happened within a request that resulted in an error?

You can now:

- View traces alongside your logs in the Workers Observability dashboard

- Export traces (and correlated logs) to any OTLP-compatible destination ↗, such as Honeycomb, Sentry or Grafana, by configuring a tracing destination in the Cloudflare dashboard ↗

- Analyze and query across span attributes (operation type, status, duration, errors)

```jsonc
{
  "observability": {
    "tracing": {
      "enabled": true,
    },
  },
}
```

You can now set a jurisdiction when creating a D1 database to guarantee where your database runs and stores data. Jurisdictions can help you comply with data localization regulations such as GDPR. Supported jurisdictions include `eu` and `fedramp`.

A jurisdiction can only be set at database creation time via Wrangler, the REST API, or the UI, and cannot be added or updated after the database already exists.

```sh
npx wrangler@latest d1 create db-with-jurisdiction --jurisdiction eu
```

```sh
curl -X POST "https://api.cloudflare.com/client/v4/accounts/<account_id>/d1/database" \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  --data '{"name": "db-with-jurisdiction", "jurisdiction": "eu" }'
```

To learn more, visit D1's data location documentation.

Workers VPC Services is now available, enabling your Workers to securely access resources in your private networks, without having to expose them on the public Internet.

- VPC Services: Create secure connections to internal APIs, databases, and services using familiar Worker binding syntax

- Multi-cloud Support: Connect to resources in private networks in any external cloud (AWS, Azure, GCP, etc.) or on-premises using Cloudflare Tunnels

```js
export default {
  async fetch(request, env, ctx) {
    // Perform application logic in Workers here

    // Sample call to an internal API running on ECS in AWS using the binding
    const response = await env.AWS_VPC_ECS_API.fetch("https://internal-host.example.com");

    // Additional application logic in Workers
    return new Response();
  },
};
```

Set up a Cloudflare Tunnel, create a VPC Service, add service bindings to your Worker, and access private resources securely. Refer to the documentation to get started.

You can now capture Wrangler command output in a structured ND-JSON ↗ format by setting the `WRANGLER_OUTPUT_FILE_PATH` or `WRANGLER_OUTPUT_FILE_DIRECTORY` environment variables. This feature is particularly useful for CI/CD pipelines and automation tools that need programmatic access to deployment information such as worker names, version IDs, deployment URLs, and error details. Commands that support this feature include `wrangler deploy`, `wrangler versions upload`, `wrangler versions deploy`, and `wrangler pages deploy`.
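Because ND-JSON is simply one JSON object per line, an automation script can consume the output file with very little code. The sketch below shows a generic parser. The sample entries and their field names are invented for illustration, since the exact fields Wrangler writes are not listed in this entry.

```javascript
// Parses newline-delimited JSON: one JSON object per non-empty line.
function parseNdjson(text) {
  return text
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0) // tolerate blank lines and a trailing newline
    .map((line) => JSON.parse(line));
}

// Made-up sample. Real Wrangler output entries may use different fields.
const sample = '{"type":"example-a","id":1}\n{"type":"example-b","id":2}\n';
const entries = parseNdjson(sample);
console.log(entries.map((entry) => entry.type));
```

In a real pipeline the `sample` string would be replaced by the contents of the file named in `WRANGLER_OUTPUT_FILE_PATH`.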

Workers, including those using Durable Objects and Browser Rendering, may now process WebSocket messages up to 32 MiB in size. Previously, this limit was 1 MiB.

This change allows Workers to handle use cases requiring large message sizes, such as processing Chrome DevTools Protocol messages.
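If your application produces messages of unpredictable size, a simple guard can tell you whether a payload fits under the new limit before you send it. This is a minimal sketch: the helper names are hypothetical, and it assumes text messages are measured by their UTF-8 encoded length.

```javascript
const MAX_WS_MESSAGE_BYTES = 32 * 1024 * 1024; // 32 MiB, the new limit stated above

// Returns the size in bytes of a string (UTF-8 encoded) or a binary payload.
function byteLength(payload) {
  if (typeof payload === "string") {
    return new TextEncoder().encode(payload).byteLength;
  }
  return payload.byteLength; // ArrayBuffer and typed arrays expose byteLength
}

function fitsInOneMessage(payload) {
  return byteLength(payload) <= MAX_WS_MESSAGE_BYTES;
}

console.log(fitsInOneMessage("hello")); // prints true
```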

For more information, please see the Durable Objects startup limits.

We've raised the Cloudflare Workflows account-level limits for all accounts on the Workers paid plan:

- Instance creation rate increased from 100 workflow instances per 10 seconds to 100 instances per second

- Concurrency limit increased from 4,500 to 10,000 workflow instances per account

These increases mean you can create new instances up to 10x faster, and have more workflow instances concurrently executing. To learn more and get started with Workflows, refer to the getting started guide.
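The 10x figure follows from putting both creation rates on a per-second basis. The short calculation below makes that explicit, using only the numbers quoted in the list above.

```javascript
const oldRate = 100 / 10; // 100 instances per 10 seconds = 10 per second
const newRate = 100 / 1; // 100 instances per second
const speedup = newRate / oldRate;

const concurrencyRatio = 10000 / 4500; // new concurrency limit relative to the old one

console.log(`creation rate: ${speedup}x, concurrency: ${concurrencyRatio.toFixed(2)}x`);
// prints "creation rate: 10x, concurrency: 2.22x"
```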

If your application requires a higher limit, fill out the Limit Increase Request Form or contact your account team. Please refer to Workflows pricing for more information.

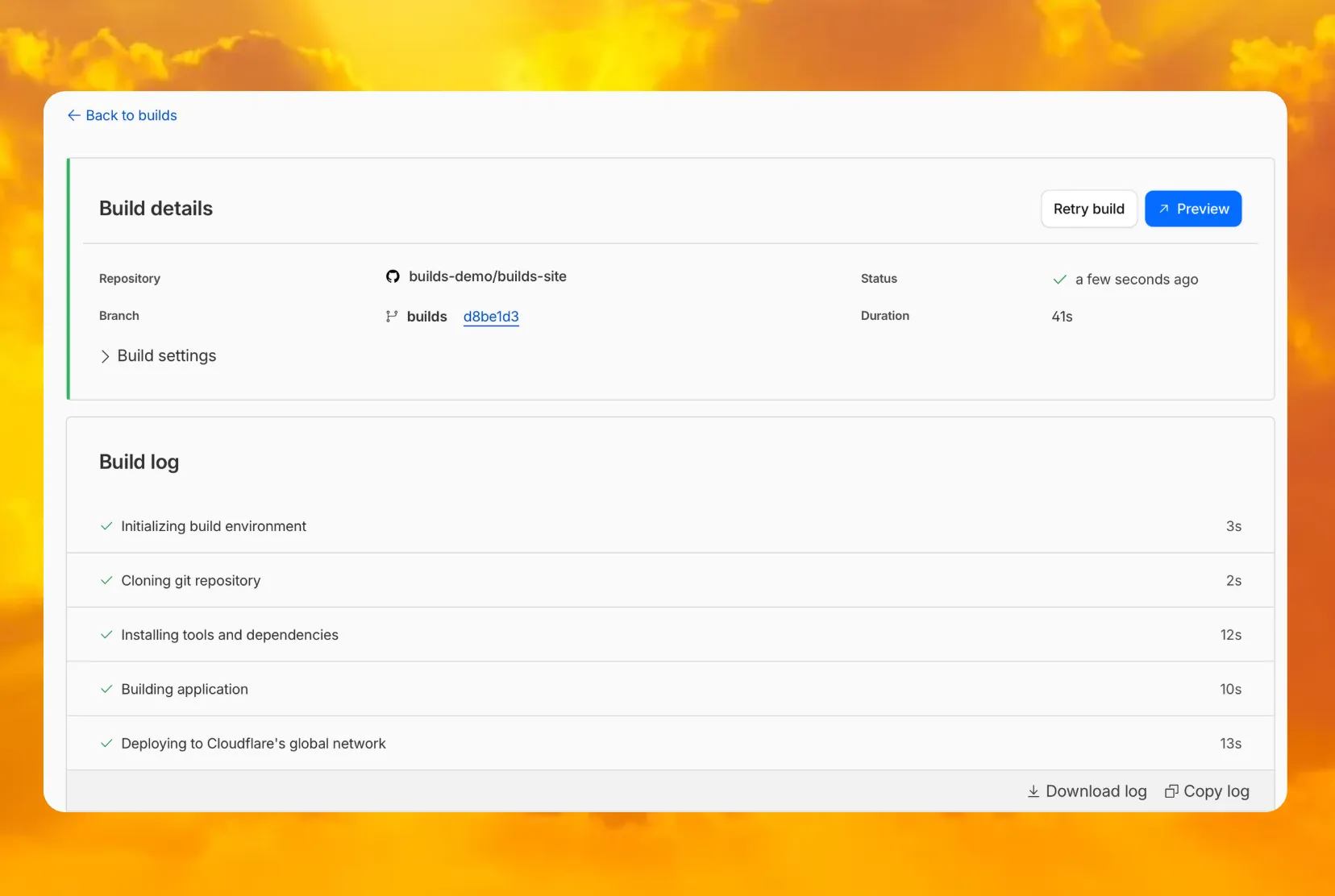

You can now access preview URLs directly from the build details page, making it easier to test your changes when reviewing builds in the dashboard.

What's new

- A Preview button now appears in the top-right corner of the build details page for successful builds

- Click it to instantly open the latest preview URL

- Matches the same experience you're familiar with from Pages

AI Search now supports reranking for improved retrieval quality and allows you to set the system prompt directly in your API requests.

You can now enable reranking to reorder retrieved documents based on their semantic relevance to the user’s query. Reranking helps improve accuracy, especially for large or noisy datasets where vector similarity alone may not produce the optimal ordering.

You can enable and configure reranking in the dashboard or directly in your API requests:

```js
const answer = await env.AI.autorag("my-autorag").aiSearch({
  query: "How do I train a llama to deliver coffee?",
  model: "@cf/meta/llama-3.3-70b-instruct-fp8-fast",
  reranking: {
    enabled: true,
    model: "@cf/baai/bge-reranker-base",
  },
});
```

Previously, system prompts could only be configured in the dashboard. You can now define them directly in your API requests, giving you per-query control over behavior. For example:

```js
// Dynamically set query and system prompt in AI Search
async function getAnswer(query, tone) {
  const systemPrompt = `You are a ${tone} assistant.`;

  const response = await env.AI.autorag("my-autorag").aiSearch({
    query: query,
    system_prompt: systemPrompt,
  });

  return response;
}

// Example usage
const query = "What is Cloudflare?";
const tone = "friendly";

const answer = await getAnswer(query, tone);
console.log(answer);
```

Learn more about Reranking and System Prompt in AI Search.

Previously, if you wanted to develop or deploy a worker with attached resources, you'd have to first manually create the desired resources. Now, if your Wrangler configuration file includes a KV namespace, D1 database, or R2 bucket that does not yet exist on your account, you can develop locally and deploy your application seamlessly, without having to run additional commands.

Automatic provisioning is launching as an open beta, and we'd love to hear your feedback to help us make improvements! It currently works for KV, R2, and D1 bindings. You can disable the feature using the `--no-x-provision` flag.

To use this feature, update to wrangler@4.45.0 and add bindings to your config file without resource IDs, for example:

```jsonc
{
  "kv_namespaces": [{ "binding": "MY_KV" }],
  "d1_databases": [{ "binding": "MY_DB" }],
  "r2_buckets": [{ "binding": "MY_R2" }],
}
```

`wrangler dev` will then automatically create these resources for you locally, and on your next run of `wrangler deploy`, Wrangler will call the Cloudflare API to create the requested resources and link them to your Worker.

Though resource IDs will be automatically written back to your Wrangler config file after resource creation, resources will stay linked across future deploys even without adding the resource IDs to the config file. This is especially useful for shared templates, which now no longer need to include account-specific resource IDs when adding a binding.

The Cloudflare Vite plugin now supports TanStack Start ↗ apps. Get started with new or existing projects.

Create a new TanStack Start project that uses the Cloudflare Vite plugin via the `create-cloudflare` CLI:

```sh
# npm
npm create cloudflare@latest -- my-tanstack-start-app --framework=tanstack-start

# yarn
yarn create cloudflare my-tanstack-start-app --framework=tanstack-start

# pnpm
pnpm create cloudflare@latest my-tanstack-start-app --framework=tanstack-start
```

Migrate an existing TanStack Start project to use the Cloudflare Vite plugin:

- Install `@cloudflare/vite-plugin` and `wrangler`

```sh
# npm
npm i -D @cloudflare/vite-plugin wrangler

# yarn
yarn add -D @cloudflare/vite-plugin wrangler

# pnpm
pnpm add -D @cloudflare/vite-plugin wrangler

# bun
bun add -d @cloudflare/vite-plugin wrangler
```

- Add the Cloudflare plugin to your Vite config (`vite.config.ts`)

```ts
import { defineConfig } from "vite";
import { tanstackStart } from "@tanstack/react-start/plugin/vite";
import viteReact from "@vitejs/plugin-react";
import { cloudflare } from "@cloudflare/vite-plugin";

export default defineConfig({
  plugins: [
    cloudflare({ viteEnvironment: { name: "ssr" } }),
    tanstackStart(),
    viteReact(),
  ],
});
```

- Add your Worker config file

```jsonc
{
  "$schema": "./node_modules/wrangler/config-schema.json",
  "name": "my-tanstack-start-app",
  // Set this to today's date
  "compatibility_date": "2026-05-23",
  "compatibility_flags": ["nodejs_compat"],
  "main": "@tanstack/react-start/server-entry"
}
```

```toml
"$schema" = "./node_modules/wrangler/config-schema.json"
name = "my-tanstack-start-app"
# Set this to today's date
compatibility_date = "2026-05-23"
compatibility_flags = [ "nodejs_compat" ]
main = "@tanstack/react-start/server-entry"
```

- Modify the scripts in your `package.json`

```json
{
  "scripts": {
    "dev": "vite dev",
    "build": "vite build && tsc --noEmit",
    "start": "node .output/server/index.mjs",
    "preview": "vite preview",
    "deploy": "npm run build && wrangler deploy",
    "cf-typegen": "wrangler types"
  }
}
```

See the TanStack Start framework guide for more info.

Developers can now programmatically retrieve a list of all file formats supported by the Markdown Conversion utility in Workers AI.

You can use the `env.AI` binding:

```ts
await env.AI.toMarkdown().supported()
```

Or call the REST API:

```sh
curl https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai/tomarkdown/supported \
  -H 'Authorization: Bearer {API_TOKEN}'
```

Both return a list of file formats that users can convert into Markdown:

```
[
  {
    "extension": ".pdf",
    "mimeType": "application/pdf",
  },
  {
    "extension": ".jpeg",
    "mimeType": "image/jpeg",
  },
  ...
]
```

Learn more about our Markdown Conversion utility.
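One use for this list is to reject unsupported uploads before attempting a conversion. The sketch below assumes the response shape shown in this entry (objects with `extension` and `mimeType`). The hard-coded `supported` array is a shortened stand-in for the real response, and `isConvertible` is a hypothetical helper.

```javascript
// Shortened stand-in for the real response. In a Worker you would obtain it
// from the env.AI binding call shown in this entry.
const supported = [
  { extension: ".pdf", mimeType: "application/pdf" },
  { extension: ".jpeg", mimeType: "image/jpeg" },
];

// Returns true when the file name's extension appears in the supported list.
function isConvertible(fileName, formats) {
  const dot = fileName.lastIndexOf(".");
  if (dot === -1) return false; // no extension at all
  const ext = fileName.slice(dot).toLowerCase();
  return formats.some((format) => format.extension === ext);
}

console.log(isConvertible("Report.PDF", supported)); // prints true
console.log(isConvertible("notes.txt", supported)); // prints false
```

Matching on the extension is a convenience check only. Matching on `mimeType` may be more reliable when the file name is not trustworthy.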

We have updated the default behavior for Cloudflare Workers Preview URLs. Going forward, if a preview URL setting is not explicitly configured during deployment, its default behavior will automatically match the setting of your `workers.dev` subdomain.

This change is intended to provide a more intuitive and secure experience by aligning your preview URL's default state with your `workers.dev` configuration to prevent cases where a preview URL might remain public even after you disabled your `workers.dev` route.

What this means for you:

- If neither setting is configured: both the workers.dev route and the preview URL will default to enabled

- If your workers.dev route is enabled and you do not explicitly set Preview URLs to enabled or disabled: Preview URLs will default to enabled

- If your workers.dev route is disabled and you do not explicitly set Preview URLs to enabled or disabled: Preview URLs will default to disabled

You can override the default setting by explicitly enabling or disabling the preview URL in your Worker's configuration through the API, Dashboard, or Wrangler.

Wrangler Version Behavior

The default behavior depends on the version of Wrangler you are using. This new logic applies to the latest version. Here is a summary of the behavior across different versions:

- Before v4.34.0: Preview URLs defaulted to enabled, regardless of the workers.dev setting.

- v4.34.0 up to (but not including) v4.44.0: Preview URLs defaulted to disabled, regardless of the workers.dev setting.

- v4.44.0 or later: Preview URLs now default to matching your workers.dev setting.
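The version-dependent defaults listed above can be summarized as a small decision function. This is a sketch of the rules as stated in this entry, not Wrangler's source code. The function names are hypothetical, and versions are assumed to be plain `major.minor.patch` strings.

```javascript
// Compares dotted versions such as "4.34.0": negative, zero, or positive result.
function compareVersions(a, b) {
  const pa = a.split(".").map(Number);
  const pb = b.split(".").map(Number);
  for (let i = 0; i < 3; i++) {
    if (pa[i] !== pb[i]) return pa[i] - pb[i];
  }
  return 0;
}

// Default preview URL state when the setting is not explicitly configured.
// workersDevEnabled defaults to true, matching the "neither setting is
// configured" case described above.
function defaultPreviewUrls(wranglerVersion, workersDevEnabled = true) {
  if (compareVersions(wranglerVersion, "4.34.0") < 0) return true; // always enabled
  if (compareVersions(wranglerVersion, "4.44.0") < 0) return false; // always disabled
  return workersDevEnabled; // follows the workers.dev setting
}

console.log(defaultPreviewUrls("4.44.0", false)); // prints false
```

An explicit preview URL setting always overrides these defaults, so the function only applies when nothing has been configured.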

Why we’re making this change

In July, we introduced preview URLs to Workers, which let you preview code changes before deploying to production. This made disabling your Worker’s `workers.dev` URL an ambiguous action: the preview URL, served as a subdomain of `workers.dev` (for example, `preview-id-worker-name.account-name.workers.dev`), would still be live even if you had disabled your Worker’s `workers.dev` route. If you misinterpreted what it meant to disable your `workers.dev` route, you might unintentionally leave preview URLs enabled when you didn’t mean to, and expose them to the public Internet.

To address this, we made a one-time update to disable preview URLs on existing Workers that had their `workers.dev` route disabled and changed the default behavior to be disabled for all new deployments where a preview URL setting was not explicitly configured.

While this change helped secure many customers, it was disruptive for customers who keep their `workers.dev` route enabled and actively use the preview functionality, as it now required them to explicitly enable preview URLs on every redeployment.

This new, more intuitive behavior ensures that your preview URL settings align with your `workers.dev` configuration by default, providing a more secure and predictable experience.

Securing access to `workers.dev` and preview URL endpoints

To further secure your `workers.dev` subdomain and preview URL, you can enable Cloudflare Access with a single click in your Worker's settings to limit access to specific users or groups.

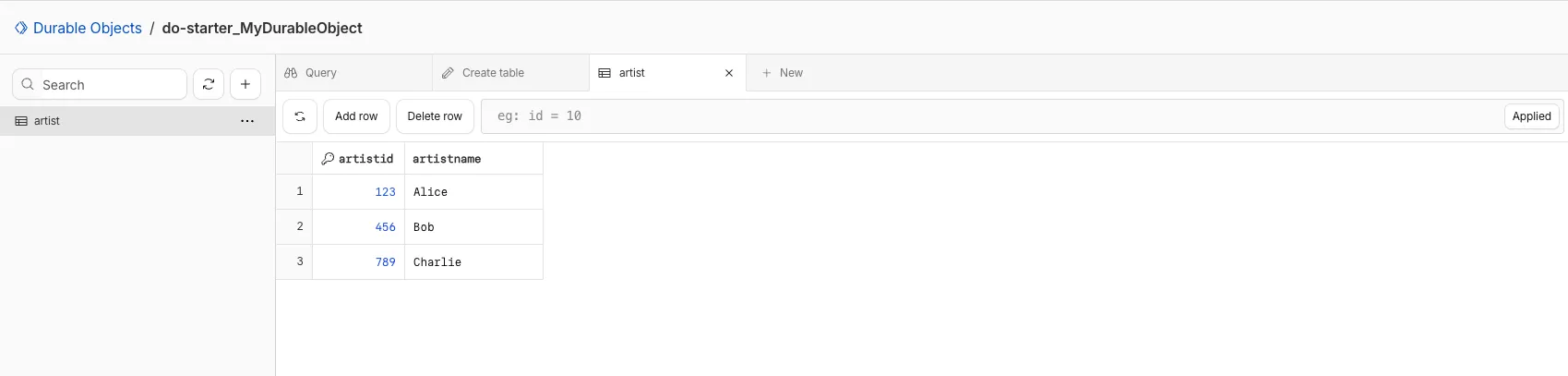

You can now view and write to each Durable Object's storage using a UI editor on the Cloudflare dashboard. Only Durable Objects using SQLite storage can use Data Studio.

Data Studio makes it easier to access Durable Objects data, from prototyping application data models to debugging production storage usage. Before, querying your Durable Objects data required deploying a Worker.

To access a Durable Object, you can provide an object's unique name or ID generated by Cloudflare. Data Studio requires you to have at least the `Workers Platform Admin` role, and all queries are captured with audit logging for your security and compliance needs. Queries executed by Data Studio send requests to your remote, deployed objects and incur normal usage billing.

To learn more, visit the Data Studio documentation. If you have feedback or suggestions for the new Data Studio, please share your experience on Discord ↗.

You can now upload a Worker that takes up to 1 second to parse and execute its global scope. Previously, startup time was limited to 400 ms.

This allows you to run Workers that import more complex packages and execute more code prior to requests being handled.

For more information, see the documentation on Workers startup limits.

You can now upload Workers with static assets (like HTML, CSS, JavaScript, images) with the Cloudflare Terraform provider v5.11.0 ↗, making it even easier to deploy and manage full-stack apps with IaC.

Previously, you couldn't use Terraform to upload static assets without writing custom scripts to handle generating an asset manifest, calling the Cloudflare API to upload assets in chunks, and handling change detection.

Now, you simply define the directory where your assets are built, and we handle the rest. Check out the examples for what this looks like in Terraform configuration.

You can get started today with the Cloudflare Terraform provider (v5.11.0) ↗, using either the existing `cloudflare_workers_script` resource ↗, or the beta `cloudflare_worker_version` resource ↗.

Here's how you can use the existing `cloudflare_workers_script` ↗ resource to upload your Worker code and assets in one shot.

```hcl
resource "cloudflare_workers_script" "my_app" {
  account_id     = var.account_id
  script_name    = "my-app"
  content_file   = "./dist/worker/index.js"
  content_sha256 = filesha256("./dist/worker/index.js")
  main_module    = "index.js"

  # Just point to your assets directory - that's it!
  assets = {
    directory = "./dist/static"
  }
}
```

And here's an example using the beta `cloudflare_worker_version` ↗ resource, alongside the `cloudflare_worker` ↗ and `cloudflare_workers_deployment` ↗ resources:

```hcl
# This tracks the existence of your Worker, so that you
# can upload code and assets separately from tracking Worker state.
resource "cloudflare_worker" "my_app" {
  account_id = var.account_id
  name       = "my-app"
}

resource "cloudflare_worker_version" "my_app_version" {
  account_id = var.account_id
  worker_id  = cloudflare_worker.my_app.id

  # Just point to your assets directory - that's it!
  assets = {
    directory = "./dist/static"
  }

  modules = [{
    name         = "index.js"
    content_file = "./dist/worker/index.js"
    content_type = "application/javascript+module"
  }]
}

resource "cloudflare_workers_deployment" "my_app_deployment" {
  account_id  = var.account_id
  script_name = cloudflare_worker.my_app.name
  strategy    = "percentage"

  versions = [{
    version_id = cloudflare_worker_version.my_app_version.id
    percentage = 100
  }]
}
```

Under the hood, the Cloudflare Terraform provider now handles the same logic that Wrangler uses for static asset uploads. This includes scanning your assets directory, computing hashes for each file, generating a manifest with file metadata, and calling the Cloudflare API to upload any missing files in chunks. We support large directories with parallel uploads and chunking, and when the asset manifest hash changes, we detect what's changed and trigger an upload for only those changed files.
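The change-detection idea described above can be illustrated with a toy manifest comparison. This is a conceptual sketch only: the manifest shape (a map from path to content hash), the hash values, and the helper names are all hypothetical and do not reflect the provider's real data format or API calls.

```javascript
// Hypothetical manifest shape: { "/path": "content-hash" }.
// Returns the paths that are new or whose hash changed since the last upload.
function filesToUpload(previousManifest, currentManifest) {
  return Object.entries(currentManifest)
    .filter(([path, hash]) => previousManifest[path] !== hash)
    .map(([path]) => path);
}

// Splits a list into fixed-size chunks, as an uploader might do per request.
function chunk(items, size) {
  const chunks = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

const previous = { "/index.html": "a1", "/app.js": "b2" };
const current = { "/index.html": "a1", "/app.js": "c3", "/logo.png": "d4" };

const changed = filesToUpload(previous, current);
console.log(chunk(changed, 2)); // "/index.html" is unchanged, so it is skipped
```

Comparing hashes rather than timestamps means a rebuild that produces identical files triggers no upload at all.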

- Get started with the Cloudflare Terraform provider (v5.11.0) ↗

- You can use either the existing `cloudflare_workers_script` resource ↗ to upload your Worker code and assets in one resource.

- Or you can use the new beta `cloudflare_worker_version` resource ↗ (along with the `cloudflare_worker` ↗ and `cloudflare_workers_deployment` ↗ resources) to more granularly control the lifecycle of each Worker resource.

You can now create and manage Workflows using Terraform with the Cloudflare Terraform provider v5.11.0 ↗. Workflows allow you to build durable, multi-step applications without needing to worry about retrying failed tasks or managing infrastructure.

Now, you can deploy and manage Workflows through Terraform using the new `cloudflare_workflow` resource ↗:

```hcl
resource "cloudflare_workflow" "my_workflow" {
  account_id    = var.account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = "my-worker"
}
```

Here are full examples of how to configure `cloudflare_workflow` in Terraform, using the existing `cloudflare_workers_script` resource ↗, and the beta `cloudflare_worker_version` resource ↗.

```hcl
resource "cloudflare_workers_script" "workflow_worker" {
  account_id     = var.cloudflare_account_id
  script_name    = "my-workflow-worker"
  content_file   = "${path.module}/../dist/worker/index.js"
  content_sha256 = filesha256("${path.module}/../dist/worker/index.js")
  main_module    = "index.js"
}

resource "cloudflare_workflow" "workflow" {
  account_id    = var.cloudflare_account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = cloudflare_workers_script.workflow_worker.script_name
}
```

You can more granularly control the lifecycle of each Worker resource using the beta `cloudflare_worker_version` ↗ resource, alongside the `cloudflare_worker` ↗ and `cloudflare_workers_deployment` ↗ resources.

```hcl
resource "cloudflare_worker" "workflow_worker" {
  account_id = var.cloudflare_account_id
  name       = "my-workflow-worker"
}

resource "cloudflare_worker_version" "workflow_worker_version" {
  account_id  = var.cloudflare_account_id
  worker_id   = cloudflare_worker.workflow_worker.id
  main_module = "index.js"

  modules = [{
    name         = "index.js"
    content_file = "${path.module}/../dist/worker/index.js"
    content_type = "application/javascript+module"
  }]
}

resource "cloudflare_workers_deployment" "workflow_deployment" {
  account_id  = var.cloudflare_account_id
  script_name = cloudflare_worker.workflow_worker.name
  strategy    = "percentage"

  versions = [{
    version_id = cloudflare_worker_version.workflow_worker_version.id
    percentage = 100
  }]
}

resource "cloudflare_workflow" "my_workflow" {
  account_id    = var.cloudflare_account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = cloudflare_worker.workflow_worker.name
}
```

- Get started with the Cloudflare Terraform provider (v5.11.0) ↗ and the new `cloudflare_workflow` resource ↗.

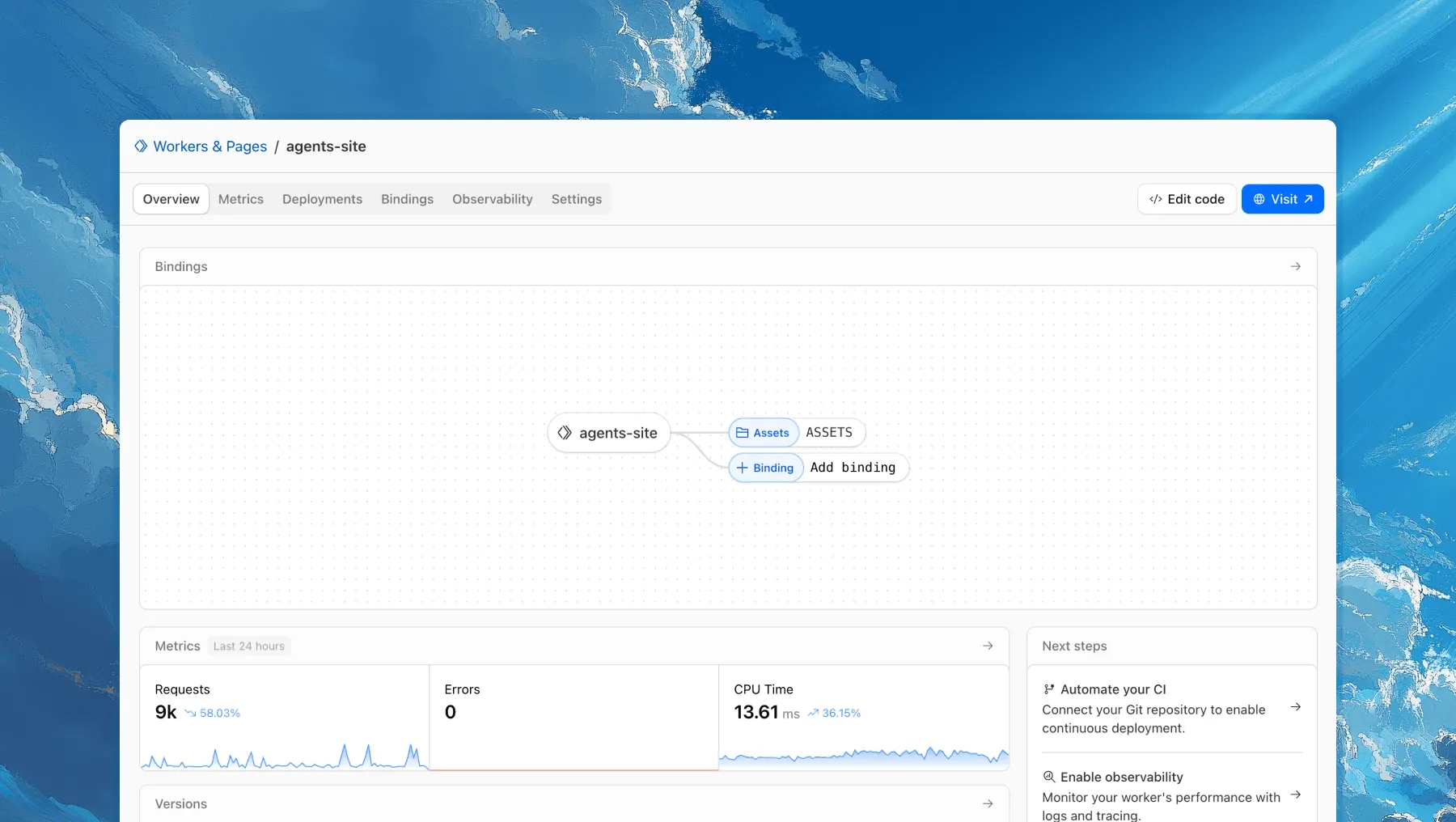

Each of your Workers now has a new overview page in the Cloudflare dashboard.

The goal is to make it easier to understand your Worker without digging through multiple tabs. Think of it as a new home base, a place to get a high-level overview on what's going on.

It's the first place you land when you open a Worker in the dashboard, and it gives you an immediate view of what’s going on. You can see requests, errors, and CPU time at a glance. You can view and add bindings, and see recent versions of your app, including who published them.

Navigation is also simpler, with visually distinct tabs at the top of the page. At the bottom right you'll find guided steps for what to do next that are based on the state of your Worker, such as adding a binding or connecting a custom domain.

We plan to add more here over time, including better insights, more controls, and ways to manage your Worker from one page.

If you have feedback or suggestions for the new Overview page or your Cloudflare Workers experience in general, we'd love to hear from you. Join the Cloudflare developer community on Discord ↗.

You can now enable compaction for individual Apache Iceberg ↗ tables in R2 Data Catalog, giving you fine-grained control over different workloads.

```sh
# Enable compaction for a specific table (no token required)
npx wrangler r2 bucket catalog compaction enable <BUCKET> <NAMESPACE> <TABLE> --target-size 256
```

This allows you to:

- Apply different target file sizes per table

- Disable compaction for specific tables

- Optimize based on table-specific access patterns

Learn more at Manage catalogs.

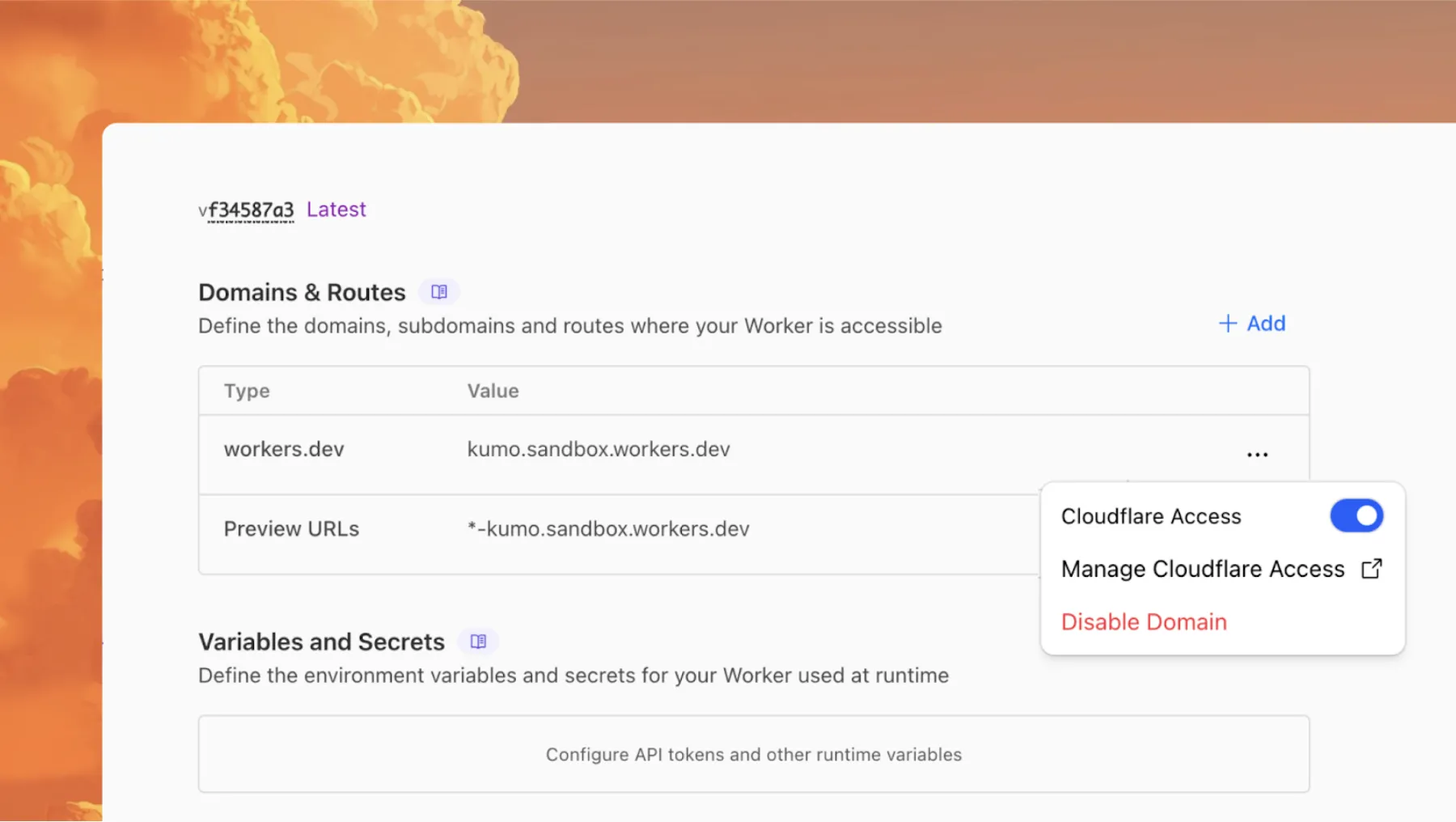

You can now enable Cloudflare Access for your `workers.dev` and Preview URLs in a single click.

Access allows you to limit access to your Workers to specific users or groups. You can limit access to yourself, your teammates, your organization, or anyone else you specify in your Access policy.

To enable Cloudflare Access:

- In the Cloudflare dashboard, go to the Workers & Pages page.

- In Overview, select your Worker.

- Go to Settings > Domains & Routes.

- For `workers.dev` or Preview URLs, click Enable Cloudflare Access.

- Optionally, to configure the Access application, click Manage Cloudflare Access. There, you can change the email addresses you want to authorize. View Access policies to learn about configuring alternate rules.

To fully secure your application, it is important that you validate the JWT that Cloudflare Access adds to the `Cf-Access-Jwt-Assertion` header on the incoming request.

The following code will validate the JWT using the jose NPM package ↗:

```js
import { jwtVerify, createRemoteJWKSet } from "jose";

export default {
  async fetch(request, env, ctx) {
    // Verify the POLICY_AUD environment variable is set
    if (!env.POLICY_AUD) {
      return new Response("Missing required audience", {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }

    // Get the JWT from the request headers
    const token = request.headers.get("cf-access-jwt-assertion");

    // Check if token exists
    if (!token) {
      return new Response("Missing required CF Access JWT", {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }

    try {
      // Create JWKS from your team domain
      const JWKS = createRemoteJWKSet(
        new URL(`${env.TEAM_DOMAIN}/cdn-cgi/access/certs`),
      );

      // Verify the JWT
      const { payload } = await jwtVerify(token, JWKS, {
        issuer: env.TEAM_DOMAIN,
        audience: env.POLICY_AUD,
      });

      // Token is valid, proceed with your application logic
      return new Response(`Hello ${payload.email || "authenticated user"}!`, {
        headers: { "Content-Type": "text/plain" },
      });
    } catch (error) {
      // Token verification failed
      return new Response(`Invalid token: ${error.message}`, {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }
  },
};
```

Add these environment variables to your Worker:

- `POLICY_AUD`: Your application's AUD tag

- `TEAM_DOMAIN`: `https://<your-team-name>.cloudflareaccess.com`

Both values are shown in the modal that appears when you enable Cloudflare Access.

You can set these variables by adding them to your Worker's Wrangler configuration file, or via the Cloudflare dashboard under Workers & Pages > your-worker > Settings > Environment Variables.


Deepgram's newest Flux model `@cf/deepgram/flux` is now available on Workers AI, hosted directly on Cloudflare's infrastructure. We're excited to be a launch partner with Deepgram and offer their new Speech Recognition model built specifically for enabling voice agents. Check out Deepgram's blog ↗ for more details on the release.

The Flux model can be used in conjunction with Deepgram's speech-to-text model `@cf/deepgram/nova-3` and text-to-speech model `@cf/deepgram/aura-1` to build end-to-end voice agents. Having Deepgram on Workers AI takes advantage of our edge GPU infrastructure, for ultra low latency voice AI applications.

For the month of October 2025, Deepgram's Flux model will be free to use on Workers AI. Official pricing will be announced soon and charged after the promotional pricing period ends on October 31, 2025. Check out the model page for pricing details in the future.

The new Flux model is WebSocket only as it requires live bi-directional streaming in order to recognize speech activity.

- Create a worker that establishes a websocket connection with `@cf/deepgram/flux`

```ts
export default {
  async fetch(request, env, ctx): Promise<Response> {
    const resp = await env.AI.run(
      "@cf/deepgram/flux",
      {
        encoding: "linear16",
        sample_rate: "16000",
      },
      {
        websocket: true,
      },
    );
    return resp;
  },
} satisfies ExportedHandler<Env>;
```

- Deploy your worker

```sh
npx wrangler deploy
```

- Write a client script to connect to your worker and start sending random audio bytes to it

```js
const ws = new WebSocket('wss://<your-worker-url.com>');

ws.onopen = () => {
  console.log('Connected to WebSocket');

  // Generate and send random audio bytes
  // You can replace this part with a function
  // that reads from your mic or other audio source
  const audioData = generateRandomAudio();
  ws.send(audioData);
  console.log('Audio data sent');
};

ws.onmessage = (event) => {
  // Transcription will be received here
  // Add your custom logic to parse the data
  console.log('Received:', event.data);
};

ws.onerror = (error) => {
  console.error('WebSocket error:', error);
};

ws.onclose = () => {
  console.log('WebSocket closed');
};

// Generate random audio data (1 second of 16-bit noise at 16 kHz, mono,
// matching the sample_rate the worker above passes to the model)
function generateRandomAudio() {
  const sampleRate = 16000;
  const duration = 1;
  const numSamples = sampleRate * duration;
  const buffer = new ArrayBuffer(numSamples * 2);
  const view = new Int16Array(buffer);

  for (let i = 0; i < numSamples; i++) {
    view[i] = Math.floor(Math.random() * 65536 - 32768);
  }

  return buffer;
}
```

You can now perform more powerful queries directly in Workers Analytics Engine ↗ with a major expansion of our SQL function library.

Workers Analytics Engine allows you to ingest and store high-cardinality data at scale (such as custom analytics) and query your data through a simple SQL API.

Today, we've expanded Workers Analytics Engine's SQL capabilities with several new functions:

- `argMin()` - Returns the value associated with the minimum in a group
- `argMax()` - Returns the value associated with the maximum in a group
- `topK()` - Returns an array of the most frequent values in a group
- `topKWeighted()` - Returns an array of the most frequent values in a group using weights
- `first_value()` - Returns the first value in an ordered set of values within a partition
- `last_value()` - Returns the last value in an ordered set of values within a partition

- `bitAnd()` - Returns the bitwise AND of two expressions
- `bitCount()` - Returns the number of bits set to one in the binary representation of a number
- `bitHammingDistance()` - Returns the number of bits that differ between two numbers
- `bitNot()` - Returns a number with all bits flipped
- `bitOr()` - Returns the inclusive bitwise OR of two expressions
- `bitRotateLeft()` - Rotates all bits in a number left by specified positions
- `bitRotateRight()` - Rotates all bits in a number right by specified positions
- `bitShiftLeft()` - Shifts all bits in a number left by specified positions
- `bitShiftRight()` - Shifts all bits in a number right by specified positions
- `bitTest()` - Returns the value of a specific bit in a number
- `bitXor()` - Returns the bitwise exclusive-or of two expressions

- `abs()` - Returns the absolute value of a number
- `log()` - Computes the natural logarithm of a number
- `round()` - Rounds a number to a specified number of decimal places
- `ceil()` - Rounds a number up to the nearest integer
- `floor()` - Rounds a number down to the nearest integer
- `pow()` - Returns a number raised to the power of another number

- `lowerUTF8()` - Converts a string to lowercase using UTF-8 encoding
- `upperUTF8()` - Converts a string to uppercase using UTF-8 encoding

- `hex()` - Converts a number to its hexadecimal representation
- `bin()` - Converts a string to its binary representation

New type conversion functions: ↗

- `toUInt8()` - Converts any numeric expression, or expression resulting in a string representation of a decimal, into an unsigned 8 bit integer

Whether you're building usage-based billing systems, customer analytics dashboards, or other custom analytics, these functions let you get the most out of your data. Get started with Workers Analytics Engine and explore all available functions in our SQL reference documentation.