Changelog

New updates and improvements at Cloudflare.

Local Explorer is a browser-based interface and REST API for viewing and editing local resource data during development. It removes the need to write throwaway scripts or dig through `.wrangler/state` to understand what data your Worker has stored locally.

Local Explorer is available in Wrangler 4.82.1+ and the Cloudflare Vite plugin 1.32.0+. Start a local development session and press `e` in your terminal, or navigate to `/cdn-cgi/explorer` on your local dev server.

Local Explorer supports five resource types and works across multiple Workers running locally:

- KV — Browse keys, view values and metadata, create, update, and delete key-value pairs.

- R2 — List objects, view metadata, upload files, and delete objects. Supports directory views and multi-select.

- D1 — Browse tables and rows, run arbitrary SQL queries, and edit schemas in a full data studio.

- Durable Objects (SQLite storage) — Browse individual object SQLite tables, run SQL queries, and edit schemas.

- Workflows — List instances, view status and step history, trigger new runs, and pause, resume, restart, or terminate instances.

Local Explorer exposes a REST API at `/cdn-cgi/explorer/api` that provides programmatic access to the same operations available in the browser. The root endpoint returns an OpenAPI specification describing all available endpoints, parameters, and response formats.

```sh
curl http://localhost:8787/cdn-cgi/explorer/api
```

Point an AI coding agent at `/cdn-cgi/explorer/api` and it can discover and interact with your local resources without manual setup. This enables iterative development loops where an agent can populate test data in KV or D1, inspect Durable Object state, trigger Workflow runs, or upload files to R2.

For more details, refer to the Local Explorer documentation.
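As a rough sketch of how a script or agent might discover operations from that specification, the helper below walks the `paths` object of a parsed OpenAPI document and lists each method and path. The name `listOperations` is hypothetical, and the commented usage assumes the root endpoint returns JSON on the default port `8787`; the real endpoints are whatever your local session reports.

```javascript
// HTTP methods that can appear as keys under an OpenAPI path item.
const HTTP_METHODS = ["get", "put", "post", "delete", "patch", "head", "options"];

// Hypothetical helper: list "METHOD /path" for every operation in a parsed OpenAPI document.
function listOperations(spec) {
  const operations = [];
  for (const [path, item] of Object.entries(spec.paths ?? {})) {
    for (const method of HTTP_METHODS) {
      if (item[method]) operations.push(`${method.toUpperCase()} ${path}`);
    }
  }
  return operations;
}

// Possible usage against a local dev session (assumes a JSON response):
// const spec = await (await fetch("http://localhost:8787/cdn-cgi/explorer/api")).json();
// console.log(listOperations(spec));
```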

Browser Rendering now exposes the Chrome DevTools Protocol (CDP), the low-level protocol that powers browser automation. The growing ecosystem of CDP-based agent tools, along with existing CDP automation scripts, can now use Browser Rendering directly.

Any CDP-compatible client, including Puppeteer and Playwright, can connect from any environment, whether that is Cloudflare Workers, your local machine, or a cloud environment. All you need is your Cloudflare API key.

For any existing CDP script, switching to Browser Rendering is a one-line change:

```js
const puppeteer = require("puppeteer-core");

const browser = await puppeteer.connect({
  browserWSEndpoint: `wss://api.cloudflare.com/client/v4/accounts/${ACCOUNT_ID}/browser-rendering/devtools/browser?keep_alive=600000`,
  headers: { Authorization: `Bearer ${API_TOKEN}` },
});

const page = await browser.newPage();
await page.goto("https://example.com");
console.log(await page.title());
await browser.close();
```

Additionally, MCP clients like Claude Desktop, Claude Code, Cursor, and OpenCode can now use Browser Rendering as their remote browser via the chrome-devtools-mcp package.

Here is an example of how to configure Browser Rendering for Claude Desktop:

```json
{
  "mcpServers": {
    "browser-rendering": {
      "command": "npx",
      "args": [
        "-y",
        "chrome-devtools-mcp@latest",
        "--wsEndpoint=wss://api.cloudflare.com/client/v4/accounts/<ACCOUNT_ID>/browser-rendering/devtools/browser?keep_alive=600000",
        "--wsHeaders={\"Authorization\":\"Bearer <API_TOKEN>\"}"
      ]
    }
  }
}
```

To get started, refer to the CDP documentation.

The simultaneous open connections limit has been relaxed. Previously, each Worker invocation was limited to six open connections at a time for the entire lifetime of each connection, including while reading the response body. Now, a connection is freed as soon as response headers arrive, so the six-connection limit only constrains how many connections can be in the initial "waiting for headers" phase simultaneously.

This means Workers can now have many more connections open at the same time without queueing, as long as no more than six are waiting for their initial response. This eliminates the `Response closed due to connection limit` exception that could previously occur when the runtime canceled stalled connections to prevent deadlocks.

Previously, the runtime used a deadlock avoidance algorithm that watched each open connection for I/O activity. If all six connections appeared idle, even momentarily, the runtime would cancel the least-recently-used connection to make room for new requests. In practice, this heuristic was fragile. For example, when a response used `Content-Encoding: gzip`, the runtime's internal decompression created brief gaps between read and write operations. During these gaps, the connection appeared stalled despite being actively read by the Worker. If multiple connections hit these gaps at the same time, the runtime could spuriously cancel a connection that was working correctly. By only counting connections during the waiting-for-headers phase, where the runtime is fully in control and there is no ambiguity about whether the connection is active, this class of bug is eliminated entirely.
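One way to picture the new accounting is a toy model in which a connection only occupies one of the six slots until its headers arrive. The class below is an illustrative sketch, not the runtime's implementation; `HeaderPhaseLimiter` and its method names are hypothetical.

```javascript
// Toy model only: connections count against the limit while waiting for headers.
class HeaderPhaseLimiter {
  constructor(limit = 6) {
    this.limit = limit;
    this.waitingForHeaders = 0; // counts against the limit
    this.streamingBody = 0; // open, but no longer counted
    this.queued = [];
  }

  // A request starts immediately if a slot is free; otherwise it queues.
  start(id) {
    if (this.waitingForHeaders < this.limit) {
      this.waitingForHeaders++;
      return "started";
    }
    this.queued.push(id);
    return "queued";
  }

  // Headers arrived: the slot is freed even though the body is still being read.
  headersReceived() {
    this.waitingForHeaders--;
    this.streamingBody++;
    if (this.queued.length > 0) {
      this.queued.shift();
      this.waitingForHeaders++;
    }
  }
}
```

In this model, eight simultaneous requests leave two queued; as soon as one response's headers arrive, a queued request starts while the first body is still streaming.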

AI Search now supports CSS content selectors for website data sources. You can now define which parts of a crawled page are extracted and indexed by specifying CSS selectors paired with URL glob patterns.

Content selectors solve the problem of indexing only relevant content while ignoring navigation, sidebars, footers, and other boilerplate. When a page URL matches a glob pattern, only elements matching the corresponding CSS selector are extracted and converted to Markdown for indexing.

Configure content selectors via the dashboard or API:

```sh
curl "https://api.cloudflare.com/client/v4/accounts/{account_id}/ai-search/instances" \
  -H "Authorization: Bearer {api_token}" \
  -H "Content-Type: application/json" \
  -d '{
    "id": "my-ai-search",
    "source": "https://example.com",
    "type": "web-crawler",
    "source_params": {
      "web_crawler": {
        "parse_options": {
          "content_selector": [
            {
              "path": "**/blog/**",
              "selector": "article .post-body"
            }
          ]
        }
      }
    }
  }'
```

Selectors are evaluated in order, and the first matching pattern wins. You can define up to 10 content selector entries per instance.

For configuration details and examples, refer to the content selectors documentation.
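To illustrate the "first matching pattern wins" rule, here is a minimal sketch of ordered glob matching. It assumes patterns are matched against the full URL string, that `**` matches any characters and `*` matches within one path segment; the actual glob semantics used by AI Search may differ, and `globToRegExp` and `selectorFor` are hypothetical helpers.

```javascript
// Convert a simple glob into an anchored regular expression (assumed semantics).
function globToRegExp(glob) {
  let out = "";
  for (let i = 0; i < glob.length; i++) {
    const ch = glob[i];
    if (ch === "*") {
      if (glob[i + 1] === "*") {
        out += ".*"; // "**" crosses path separators
        i++;
      } else {
        out += "[^/]*"; // "*" stays within one segment
      }
    } else {
      out += ch.replace(/[.+?^${}()|[\]\\]/g, "\\$&"); // escape regex metacharacters
    }
  }
  return new RegExp(`^${out}$`);
}

// Return the selector of the first entry whose pattern matches, or null.
function selectorFor(url, entries) {
  for (const { path, selector } of entries) {
    if (globToRegExp(path).test(url)) return selector;
  }
  return null;
}
```

With entries ordered as `**/blog/**` then `**`, a blog URL resolves to the blog selector and every other URL falls through to the catch-all, so more specific patterns belong earlier in the list.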

AI Search now supports four additional Workers AI models across text generation and embedding.

| Model | Context window (tokens) |
| --- | --- |
| `@cf/zai-org/glm-4.7-flash` | 131,072 |
| `@cf/qwen/qwen3-30b-a3b-fp8` | 32,000 |

GLM-4.7-Flash is a lightweight model from Zhipu AI with a 131,072 token context window, suitable for long-document summarization and retrieval tasks. Qwen3-30B-A3B is a mixture-of-experts model from Alibaba that activates only 3 billion parameters per forward pass, keeping inference fast while maintaining strong response quality.

| Model | Vector dims | Input tokens | Metric |
| --- | --- | --- | --- |
| `@cf/qwen/qwen3-embedding-0.6b` | 1,024 | 4,096 | cosine |
| `@cf/google/embeddinggemma-300m` | 768 | 512 | cosine |

Qwen3-Embedding-0.6B supports up to 4,096 input tokens, making it a good fit for indexing longer text chunks. EmbeddingGemma-300M from Google produces 768-dimension vectors and is optimized for low-latency embedding workloads.

All four models are available without additional provider keys since they run on Workers AI. Select them when creating or updating an AI Search instance in the dashboard or through the API.

For the full list of supported models, refer to Supported models.

The Workers runtime now automatically sends a reciprocal Close frame when it receives a Close frame from the peer. The `readyState` transitions to `CLOSED` before the `close` event fires. This matches the WebSocket specification and standard browser behavior.

This change is enabled by default for Workers using compatibility dates on or after `2026-04-07` (via the `web_socket_auto_reply_to_close` compatibility flag). Existing code that manually calls `close()` inside the `close` event handler will continue to work; the call is silently ignored when the WebSocket is already closed.

```js
const [client, server] = Object.values(new WebSocketPair());
server.accept();

server.addEventListener("close", (event) => {
  // readyState is already CLOSED, so there is no need to call server.close().
  console.log(server.readyState); // WebSocket.CLOSED
  console.log(event.code); // 1000
  console.log(event.wasClean); // true
});
```

The automatic close behavior can interfere with WebSocket proxying, where a Worker sits between a client and a backend and needs to coordinate the close on both sides independently. To support this use case, pass `{ allowHalfOpen: true }` to `accept()`:

```js
const [client, server] = Object.values(new WebSocketPair());
server.accept({ allowHalfOpen: true });

server.addEventListener("close", (event) => {
  // readyState is still CLOSING here, giving you time
  // to coordinate the close on the other side.
  console.log(server.readyState); // WebSocket.CLOSING

  // Manually close when ready.
  server.close(event.code, "done");
});
```

For more information, refer to WebSockets Close behavior.

You can now specify placement constraints to control where your Containers run.

| Constraint | Values | Use case |
| --- | --- | --- |
| `regions` | `ENAM`, `WNAM`, `EEUR`, `WEUR` | Geographic placement |
| `jurisdiction` | `eu`, `fedramp` | Compliance boundaries |

Use `regions` to limit placement to specific geographic areas. Use `jurisdiction` to restrict containers to compliance boundaries: `eu` maps to European regions (EEUR, WEUR) and `fedramp` maps to North American regions (ENAM, WNAM).

Refer to Containers placement for more details.

We are partnering with Google to bring `@cf/google/gemma-4-26b-a4b-it` to Workers AI. Gemma 4 26B A4B is a Mixture-of-Experts (MoE) model built from Gemini 3 research, with 26B total parameters and only 4B active per forward pass. By activating a small subset of parameters during inference, the model runs almost as fast as a 4B-parameter model while delivering the quality of a much larger one.

Gemma 4 is Google's most capable family of open models, designed to maximize intelligence-per-parameter.

- Mixture-of-Experts architecture with 8 active experts out of 128 total (plus 1 shared expert), delivering frontier-level performance at a fraction of the compute cost of dense models

- 256,000 token context window for retaining full conversation history, tool definitions, and long documents across extended sessions

- Built-in thinking mode that lets the model reason step-by-step before answering, improving accuracy on complex tasks

- Vision understanding for object detection, document and PDF parsing, screen and UI understanding, chart comprehension, OCR (including multilingual), and handwriting recognition, with support for variable aspect ratios and resolutions

- Function calling with native support for structured tool use, enabling agentic workflows and multi-step planning

- Multilingual with out-of-the-box support for 35+ languages, pre-trained on 140+ languages

- Coding for code generation, completion, and correction

Use Gemma 4 26B A4B through the Workers AI binding (`env.AI.run()`), the REST API at `/run` or `/v1/chat/completions`, or the OpenAI-compatible endpoint.

For more information, refer to the Gemma 4 26B A4B model page.

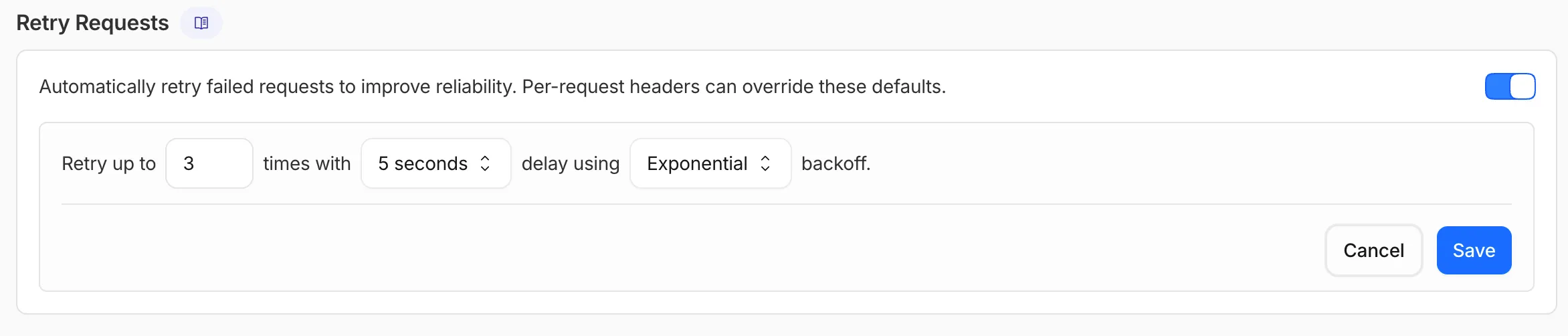

AI Gateway now supports automatic retries at the gateway level. When an upstream provider returns an error, your gateway retries the request based on the retry policy you configure, without requiring any client-side changes.

You can configure the retry count (up to 5 attempts), the delay between retries (from 100ms to 5 seconds), and the backoff strategy (Constant, Linear, or Exponential). These defaults apply to all requests through the gateway, and per-request headers can override them.

This is particularly useful when you do not control the client making the request and cannot implement retry logic on the caller side. For more complex failover scenarios — such as failing across different providers — use Dynamic Routing.

For more information, refer to Manage gateways.
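To get a feel for how the three backoff strategies differ, the sketch below computes a delay schedule for a given base delay. The formulas are assumptions for illustration (linear grows by the base delay per attempt, exponential doubles per attempt); AI Gateway's exact calculation is not stated here, and `retryDelays` is a hypothetical helper.

```javascript
// Compute the delay before each retry attempt, in milliseconds (assumed formulas).
function retryDelays(strategy, baseDelayMs, attempts) {
  const delays = [];
  for (let attempt = 1; attempt <= attempts; attempt++) {
    if (strategy === "constant") {
      delays.push(baseDelayMs);
    } else if (strategy === "linear") {
      delays.push(baseDelayMs * attempt);
    } else if (strategy === "exponential") {
      delays.push(baseDelayMs * 2 ** (attempt - 1));
    } else {
      throw new Error(`Unknown strategy: ${strategy}`);
    }
  }
  return delays;
}
```

Under these assumptions, five exponential retries from a 100 ms base wait 100, 200, 400, 800, and 1,600 ms, while the constant strategy waits 100 ms each time.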

All `wrangler workflows` commands now accept a `--local` flag to target a Workflow running in a local `wrangler dev` session instead of the production API.

You can now manage the full Workflow lifecycle locally, including triggering Workflows, listing instances, pausing, resuming, restarting, terminating, and sending events:

```sh
npx wrangler workflows list --local
npx wrangler workflows trigger my-workflow --local
npx wrangler workflows instances list my-workflow --local
npx wrangler workflows instances pause my-workflow <INSTANCE_ID> --local
npx wrangler workflows instances send-event my-workflow <INSTANCE_ID> --type my-event --local
```

All commands also accept `--port` to target a specific `wrangler dev` session (defaults to `8787`).

For more information, refer to Workflows local development.

AI Search supports a `wrangler ai-search` command namespace. Use it to manage instances from the command line.

The following commands are available:

| Command | Description |
| --- | --- |
| `wrangler ai-search create` | Create a new instance with an interactive wizard |
| `wrangler ai-search list` | List all instances in your account |
| `wrangler ai-search get` | Get details of a specific instance |
| `wrangler ai-search update` | Update the configuration of an instance |
| `wrangler ai-search delete` | Delete an instance |
| `wrangler ai-search search` | Run a search query against an instance |
| `wrangler ai-search stats` | Get usage statistics for an instance |

The `create` command guides you through setup, choosing a name, source type (`r2` or `web`), and data source. You can also pass all options as flags for non-interactive use:

```sh
wrangler ai-search create my-instance --type r2 --source my-bucket
```

Use `wrangler ai-search search` to query an instance directly from the CLI:

```sh
wrangler ai-search search my-instance --query "how do I configure caching?"
```

All commands support `--json` for structured output that scripts and AI agents can parse directly.

For full usage details, refer to the Wrangler commands documentation.

Workers Builds now supports Deploy Hooks — trigger builds from your headless CMS, a Cron Trigger, a Slack bot, or any system that can send an HTTP request.

Each Deploy Hook is a unique URL tied to a specific branch. Send it a `POST` and your Worker builds and deploys.

```sh
curl -X POST "https://api.cloudflare.com/client/v4/workers/builds/deploy_hooks/<DEPLOY_HOOK_ID>"
```

To create one, go to Workers & Pages > your Worker > Settings > Builds > Deploy Hooks.

Since a Deploy Hook is a URL, you can also call it from another Worker. For example, a Worker with a Cron Trigger can rebuild your project on a schedule:

```js
export default {
  async scheduled(event, env, ctx) {
    ctx.waitUntil(fetch(env.DEPLOY_HOOK_URL, { method: "POST" }));
  },
};
```

```ts
export default {
  async scheduled(event: ScheduledEvent, env: Env, ctx: ExecutionContext): Promise<void> {
    ctx.waitUntil(fetch(env.DEPLOY_HOOK_URL, { method: "POST" }));
  },
} satisfies ExportedHandler<Env>;
```

You can also use Deploy Hooks to rebuild when your CMS publishes new content or deploy from a Slack slash command.

- Automatic deduplication: If a Deploy Hook fires multiple times before the first build starts running, redundant builds are automatically skipped. This keeps your build queue clean when webhooks retry or CMS events arrive in bursts.

- Last triggered: The dashboard shows when each hook was last triggered.

- Build source: Your Worker's build history shows which Deploy Hook started each build by name.

Deploy Hooks are rate limited to 10 builds per minute per Worker and 100 builds per minute per account. For all limits, see Limits & pricing.

To get started, read the Deploy Hooks documentation.

Three new properties are now available on `request.cf` in Workers that expose Layer 4 transport telemetry from the client connection. These properties let your Worker make decisions based on real-time connection quality signals, such as round-trip time and data delivery rate, without requiring any client-side changes.

Previously, this telemetry was only available via the `Server-Timing: cfL4` response header. These new properties surface the same data directly in the Workers runtime, so you can use it for routing, logging, or response customization.

| Property | Type | Description |
| --- | --- | --- |
| `clientTcpRtt` | number \| undefined | The smoothed TCP round-trip time (RTT) between Cloudflare and the client in milliseconds. Only present for TCP connections (HTTP/1, HTTP/2). For example, `22`. |
| `clientQuicRtt` | number \| undefined | The smoothed QUIC round-trip time (RTT) between Cloudflare and the client in milliseconds. Only present for QUIC connections (HTTP/3). For example, `42`. |
| `edgeL4` | Object \| undefined | Layer 4 transport statistics. Contains `deliveryRate` (number): the most recent data delivery rate estimate for the connection, in bytes per second. For example, `123456`. |

```js
export default {
  async fetch(request) {
    const cf = request.cf;

    const rtt = cf.clientTcpRtt ?? cf.clientQuicRtt ?? 0;
    const deliveryRate = cf.edgeL4?.deliveryRate ?? 0;
    const transport = cf.clientTcpRtt ? "TCP" : "QUIC";

    console.log(`Transport: ${transport}, RTT: ${rtt}ms, Delivery rate: ${deliveryRate} B/s`);

    const headers = new Headers(request.headers);
    headers.set("X-Client-RTT", String(rtt));
    headers.set("X-Delivery-Rate", String(deliveryRate));

    return fetch(new Request(request, { headers }));
  },
};
```

For more information, refer to Workers Runtime APIs: Request.
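Because each RTT property is only present for its own transport, a Worker that branches on connection quality may want to distinguish "not present" from a measured value. The helper below is a minimal sketch of that idea; `describeConnection` and the 300 ms "slow" threshold are hypothetical choices for illustration, not part of the Workers API.

```javascript
// Summarize Layer 4 telemetry from a request.cf-like object.
function describeConnection(cf = {}) {
  let transport = "unknown";
  let rttMs;
  if (cf.clientTcpRtt !== undefined) {
    transport = "TCP";
    rttMs = cf.clientTcpRtt;
  } else if (cf.clientQuicRtt !== undefined) {
    transport = "QUIC";
    rttMs = cf.clientQuicRtt;
  }
  const deliveryRate = cf.edgeL4?.deliveryRate; // bytes per second, may be undefined
  const slow = rttMs !== undefined && rttMs > 300; // hypothetical threshold
  return { transport, rttMs, deliveryRate, slow };
}
```

A Worker could call `describeConnection(request.cf)` and, for example, serve a lighter response when `slow` is `true`.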

Four new fields are now available on `request.cf.tlsClientAuth` in Workers for requests that include a mutual TLS (mTLS) client certificate. These fields encode the client certificate and its intermediate chain in RFC 9440 format, the same standard format used by the `Client-Cert` and `Client-Cert-Chain` HTTP headers, so your Worker can forward them directly to your origin without any custom parsing or encoding logic.

| Field | Type | Description |
| --- | --- | --- |
| `certRFC9440` | String | The client leaf certificate in RFC 9440 format (`:base64-DER:`). Empty if no client certificate was presented. |
| `certRFC9440TooLarge` | Boolean | `true` if the leaf certificate exceeded 10 KB and was omitted from `certRFC9440`. |
| `certChainRFC9440` | String | The intermediate certificate chain in RFC 9440 format as a comma-separated list. Empty if no intermediates were sent or if the chain exceeded 16 KB. |
| `certChainRFC9440TooLarge` | Boolean | `true` if the intermediate chain exceeded 16 KB and was omitted from `certChainRFC9440`. |

```js
export default {
  async fetch(request) {
    const tls = request.cf.tlsClientAuth;

    // Only forward if the cert was verified, not revoked, and the chain is complete.
    // certVerified and certRevoked are strings ("SUCCESS", "0" or "1"), so compare explicitly.
    if (
      !tls ||
      tls.certVerified !== "SUCCESS" ||
      tls.certRevoked === "1" ||
      tls.certChainRFC9440TooLarge
    ) {
      return new Response("Unauthorized", { status: 401 });
    }

    const headers = new Headers(request.headers);
    headers.set("Client-Cert", tls.certRFC9440);
    headers.set("Client-Cert-Chain", tls.certChainRFC9440);

    return fetch(new Request(request, { headers }));
  },
};
```

For more information, refer to Client certificate variables and Mutual TLS authentication.
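The forwarding decision can also be factored into a small pure function that is easy to unit test. This is a sketch under stated assumptions: `clientCertHeaders` is a hypothetical helper, and it assumes `certVerified` is the string `"SUCCESS"` for a valid certificate and `certRevoked` is the string `"1"` for a revoked one, as with the existing `tlsClientAuth` fields. It also skips the chain header when no intermediates were sent.

```javascript
// Return the RFC 9440 headers to forward, or null if the certificate should not be trusted.
function clientCertHeaders(tls) {
  if (!tls) return null;
  // Assumed string values for the pre-existing verification fields.
  if (tls.certVerified !== "SUCCESS" || tls.certRevoked === "1") return null;
  // Omitted (too large) values mean the forwarded data would be incomplete.
  if (tls.certRFC9440TooLarge || tls.certChainRFC9440TooLarge) return null;
  if (!tls.certRFC9440) return null;

  const headers = { "Client-Cert": tls.certRFC9440 };
  if (tls.certChainRFC9440) headers["Client-Cert-Chain"] = tls.certChainRFC9440;
  return headers;
}
```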

Containers and Sandboxes now support connecting directly to Workers over HTTP. This allows you to call Workers functions and bindings, like KV or R2, from within the container at specific hostnames.

Define an `outbound` handler to capture any HTTP request or use `outboundByHost` to capture requests to individual hostnames and IPs.

```js
export class MyApp extends Sandbox {}

MyApp.outbound = async (request, env, ctx) => {
  // You can run arbitrary functions defined in your Worker on any HTTP request.
  return await someWorkersFunction(request.body);
};

MyApp.outboundByHost = {
  "my.worker": async (request, env, ctx) => {
    return await anotherFunction(request.body);
  },
};
```

In this example, requests from the container to `http://my.worker` will run the function defined within `outboundByHost`, and any other HTTP requests will run the `outbound` handler. These handlers run entirely inside the Workers runtime, outside of the container sandbox.

Each handler has access to `env`, so it can call any binding set in Wrangler config. Code inside the container makes a standard HTTP request to that hostname and the outbound Worker translates it into a binding call.

```js
export class MyApp extends Sandbox {}

MyApp.outboundByHost = {
  "my.kv": async (request, env, ctx) => {
    const key = new URL(request.url).pathname.slice(1);
    const value = await env.KV.get(key);
    return new Response(value ?? "", { status: value ? 200 : 404 });
  },
  "my.r2": async (request, env, ctx) => {
    const key = new URL(request.url).pathname.slice(1);
    const object = await env.BUCKET.get(key);
    return new Response(object?.body ?? "", { status: object ? 200 : 404 });
  },
};
```

Now, from inside the container sandbox, `curl http://my.kv/some-key` will access Workers KV and `curl http://my.r2/some-object` will access R2.

Use `ctx.containerId` to reference the container's automatically provisioned Durable Object.

```js
export class MyContainer extends Container {}

MyContainer.outboundByHost = {
  "get-state.do": async (request, env, ctx) => {
    const id = env.MY_CONTAINER.idFromString(ctx.containerId);
    const stub = env.MY_CONTAINER.get(id);
    return stub.getStateForKey(request.body);
  },
};
```

This provides an easy way to associate state with any container instance, and includes a built-in SQLite database.

Upgrade to `@cloudflare/containers` version 0.2.0 or later, or `@cloudflare/sandbox` version 0.8.0 or later to use outbound Workers.

Refer to Containers outbound traffic and Sandboxes outbound traffic for more details and examples.

The new `secrets` configuration property lets you declare the secret names your Worker requires in your Wrangler configuration file. Required secrets are validated during local development and deploy, and used as the source of truth for type generation.

```jsonc
{
  "secrets": {
    "required": ["API_KEY", "DB_PASSWORD"],
  },
}
```

```toml
[secrets]
required = [ "API_KEY", "DB_PASSWORD" ]
```

When `secrets` is defined, `wrangler dev` and `vite dev` load only the keys listed in `secrets.required` from `.dev.vars` or `.env`/`process.env`. Additional keys in those files are excluded. If any required secrets are missing, a warning is logged listing the missing names.

`wrangler types` generates typed bindings from `secrets.required` instead of inferring names from `.dev.vars` or `.env`. This lets you run type generation in CI or other environments where those files are not present. Per-environment secrets are supported: the aggregated `Env` type marks secrets that only appear in some environments as optional.

`wrangler deploy` and `wrangler versions upload` validate that all secrets in `secrets.required` are configured on the Worker before the operation succeeds. If any required secrets are missing, the command fails with an error listing which secrets need to be set.

For more information, refer to the `secrets` configuration property reference.
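The filtering and validation behavior described above can be pictured with a short sketch: only declared names are loaded, extra keys are dropped, and missing names are reported. This is an illustration of the described behavior, not Wrangler's implementation; `resolveSecrets` is a hypothetical helper.

```javascript
// Split declared secret names into those found in the local source and those missing.
function resolveSecrets(required, available) {
  const loaded = {};
  const missing = [];
  for (const name of required) {
    if (Object.prototype.hasOwnProperty.call(available, name)) {
      loaded[name] = available[name]; // only declared keys are kept
    } else {
      missing.push(name);
    }
  }
  return { loaded, missing };
}
```

For example, with `required` set to `["API_KEY", "DB_PASSWORD"]` and a local file that defines `API_KEY` and an undeclared `EXTRA`, the result loads only `API_KEY` and reports `DB_PASSWORD` as missing.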

Containers now support Docker Hub images. You can use a fully qualified Docker Hub image reference in your Wrangler configuration instead of first pushing the image to Cloudflare Registry.

```jsonc
{
  "containers": [
    {
      // Example: docker.io/cloudflare/sandbox:0.7.18
      "image": "docker.io/<NAMESPACE>/<REPOSITORY>:<TAG>",
    },
  ],
}
```

```toml
[[containers]]
image = "docker.io/<NAMESPACE>/<REPOSITORY>:<TAG>"
```

Containers also support private Docker Hub images. To configure credentials, refer to Use private Docker Hub images.

For more information, refer to Image management.

Dynamic Workers are now in open beta ↗ for all paid Workers users. You can now have a Worker spin up other Workers, called Dynamic Workers, at runtime to execute code on-demand in a secure, sandboxed environment. Dynamic Workers start in milliseconds, making them well suited for fast, secure code execution at scale.

- Code Mode: LLMs are trained to write code. Run tool-calling logic written in code instead of stepping through many tool calls, which can save up to 80% in inference tokens and cost.

- AI agents executing code: Run code for tasks like data analysis, file transformation, API calls, and chained actions.

- Running AI-generated code: Run generated code for prototypes, projects, and automations in a secure, isolated sandboxed environment.

- Fast development and previews: Load prototypes, previews, and playgrounds in milliseconds.

- Custom automations: Create custom tools on the fly that execute a task, call an integration, or automate a workflow.

Dynamic Workers support two loading modes:

- `load(code)`: for one-time code execution (equivalent to calling `get()` with a null ID).

- `get(id, callback)`: caches a Dynamic Worker by ID so it can stay warm across requests. Use this when the same code will receive subsequent requests.

```js
export default {
  async fetch(request, env) {
    const worker = env.LOADER.load({
      compatibilityDate: "2026-01-01",
      mainModule: "src/index.js",
      modules: {
        "src/index.js": `
          export default {
            fetch() {
              return new Response("Hello from a dynamic Worker");
            },
          };
        `,
      },
      // Block all outbound network access from the Dynamic Worker.
      globalOutbound: null,
    });
    return worker.getEntrypoint().fetch(request);
  },
};
```

```ts
export default {
  async fetch(request: Request, env: Env): Promise<Response> {
    const worker = env.LOADER.load({
      compatibilityDate: "2026-01-01",
      mainModule: "src/index.js",
      modules: {
        "src/index.js": `
          export default {
            fetch() {
              return new Response("Hello from a dynamic Worker");
            },
          };
        `,
      },
      // Block all outbound network access from the Dynamic Worker.
      globalOutbound: null,
    });
    return worker.getEntrypoint().fetch(request);
  },
};
```

Here are 3 new libraries to help you build with Dynamic Workers:

- `@cloudflare/codemode`: Replace individual tool calls with a single `code()` tool, so LLMs write and execute TypeScript that orchestrates multiple API calls in one pass.

- `@cloudflare/worker-bundler`: Resolve npm dependencies and bundle source files into ready-to-load modules for Dynamic Workers, all at runtime.

- `@cloudflare/shell`: Give your agent a virtual filesystem inside a Dynamic Worker with persistent storage backed by SQLite and R2.

Dynamic Workers Starter

Use this starter ↗ to deploy a Worker that can load and execute Dynamic Workers.

Dynamic Workers Playground

Deploy the Dynamic Workers Playground to write or import code, bundle it at runtime with `@cloudflare/worker-bundler`, execute it through a Dynamic Worker, and see real-time responses and execution logs.

For the full API reference and configuration options, refer to the Dynamic Workers documentation.

Dynamic Workers pricing is based on three dimensions: Dynamic Workers created daily, requests, and CPU time.

| | Included | Additional usage |
| --- | --- | --- |
| Dynamic Workers created daily | 1,000 unique Dynamic Workers per month | +$0.002 per Dynamic Worker per day |
| Requests ¹ | 10 million per month | +$0.30 per million requests |
| CPU time ¹ | 30 million CPU milliseconds per month | +$0.02 per million CPU milliseconds |

¹ Uses Workers Standard rates and will appear as part of your existing Workers bill, not as separate Dynamic Workers charges.

Note: Dynamic Workers requests and CPU time are already billed as part of your Workers plan and will count toward your Workers requests and CPU usage. The Dynamic Workers created daily charge is not yet active — you will not be billed for the number of Dynamic Workers created at this time. Pricing information is shared in advance so you can estimate future costs.
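As a worked example of the request and CPU rates above, the sketch below estimates monthly overage for a given usage level. It covers only requests and CPU time (the created-daily charge is not yet active), treats the inputs as your total Workers usage for the month since both count toward the same allowances, and uses a hypothetical helper name, `estimateOverageUsd`.

```javascript
// Included monthly usage and overage rates, taken from the pricing table.
const INCLUDED = { requests: 10_000_000, cpuMs: 30_000_000 };
const RATE_PER_MILLION = { requests: 0.3, cpuMs: 0.02 };

// Estimate the overage charge in USD, rounded to the nearest cent.
function estimateOverageUsd({ requests, cpuMs }) {
  const extraRequests = Math.max(0, requests - INCLUDED.requests);
  const extraCpuMs = Math.max(0, cpuMs - INCLUDED.cpuMs);
  const total =
    (extraRequests / 1_000_000) * RATE_PER_MILLION.requests +
    (extraCpuMs / 1_000_000) * RATE_PER_MILLION.cpuMs;
  return Math.round(total * 100) / 100;
}
```

For 25 million requests and 50 million CPU milliseconds in a month, the overage is 15 × $0.30 + 20 × $0.02 = $4.90.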

Workflow instance methods `pause()`, `resume()`, `restart()`, and `terminate()` are now available in local development when using `wrangler dev`.

You can now test the full Workflow instance lifecycle locally:

```ts
const instance = await env.MY_WORKFLOW.create({
  id: "my-instance-id",
});

await instance.pause(); // pauses a running workflow instance
await instance.resume(); // resumes a paused instance
await instance.restart(); // restarts the instance from the beginning
await instance.terminate(); // terminates the instance immediately
```

The latest release of the Agents SDK exposes agent state as a readable property, prevents duplicate schedule rows across Durable Object restarts, brings full TypeScript inference to `AgentClient`, and migrates to Zod 4.

Both `useAgent` (React) and `AgentClient` (vanilla JS) now expose a `state` property that reflects the current agent state. Previously, reading state required manually tracking it through the `onStateUpdate` callback.

React (`useAgent`)

```jsx
const agent = useAgent({
  agent: "game-agent",
  name: "room-123",
});

// Read state directly; no separate useState + onStateUpdate needed
return <div>Score: {agent.state?.score}</div>;

// Spread for partial updates
agent.setState({ ...agent.state, score: (agent.state?.score ?? 0) + 10 });
```

```tsx
const agent = useAgent<GameAgent, GameState>({
  agent: "game-agent",
  name: "room-123",
});

// Read state directly; no separate useState + onStateUpdate needed
return <div>Score: {agent.state?.score}</div>;

// Spread for partial updates
agent.setState({ ...agent.state, score: (agent.state?.score ?? 0) + 10 });
```

`agent.state` is reactive: the component re-renders when state changes from either the server or a client-side `setState()` call.

Vanilla JS (`AgentClient`)

```js
const client = new AgentClient({
  agent: "game-agent",
  name: "room-123",
  host: "your-worker.workers.dev",
});

client.setState({ score: 100 });
console.log(client.state); // { score: 100 }
```

```ts
const client = new AgentClient<GameAgent>({
  agent: "game-agent",
  name: "room-123",
  host: "your-worker.workers.dev",
});

client.setState({ score: 100 });
console.log(client.state); // { score: 100 }
```

State starts as `undefined` and is populated when the server sends the initial state on connect (from `initialState`) or when `setState()` is called. Use optional chaining (`agent.state?.field`) for safe access. The `onStateUpdate` callback continues to work as before; the new `state` property is additive.

`schedule()` now supports an `idempotent` option that deduplicates by `(type, callback, payload)`, preventing duplicate rows from accumulating when called in places that run on every Durable Object restart such as `onStart()`.

Cron schedules are idempotent by default. Calling `schedule("0 * * * *", "tick")` multiple times with the same callback, expression, and payload returns the existing schedule row instead of creating a new one. Pass `{ idempotent: false }` to override.

Delayed and date-scheduled types support opt-in idempotency:

```js
import { Agent } from "agents";

class MyAgent extends Agent {
  async onStart() {
    // Safe across restarts: only one row is created
    await this.schedule(60, "maintenance", undefined, { idempotent: true });
  }
}
```

```ts
import { Agent } from "agents";

class MyAgent extends Agent {
  async onStart() {
    // Safe across restarts: only one row is created
    await this.schedule(60, "maintenance", undefined, { idempotent: true });
  }
}
```

Two new warnings help catch common foot-guns:

- Calling `schedule()` inside `onStart()` without `{ idempotent: true }` emits a `console.warn` with actionable guidance (once per callback; skipped for cron and when `idempotent` is set explicitly).

- If an alarm cycle processes 10 or more stale one-shot rows for the same callback, the SDK emits a `console.warn` and a `schedule:duplicate_warning` diagnostics channel event.
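The deduplication rule for `schedule()` can be pictured with a small in-memory model. This is an illustrative sketch only, not the SDK's storage code: `ScheduleTable` is hypothetical, the explicit `type` argument is a simplification, and the key simply serializes the tuple described above (plus the expression for cron).

```javascript
// Toy model of schedule rows keyed by (type, callback, payload).
class ScheduleTable {
  constructor() {
    this.rows = [];
  }

  schedule(type, when, callback, payload, options = {}) {
    // Cron schedules are idempotent unless explicitly disabled; other types opt in.
    const idempotent = options.idempotent ?? (type === "cron");
    const parts = type === "cron" ? [type, when, callback, payload ?? null] : [type, callback, payload ?? null];
    const key = JSON.stringify(parts);
    if (idempotent) {
      const existing = this.rows.find((row) => row.key === key);
      if (existing) return existing; // reuse the existing row instead of adding one
    }
    const row = { id: this.rows.length + 1, key };
    this.rows.push(row);
    return row;
  }
}
```

In this model, calling `schedule("delayed", 60, "maintenance", undefined, { idempotent: true })` on every restart keeps a single row, whereas omitting the option adds one row per call.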

`AgentClient` now accepts an optional agent type parameter for full type inference on RPC calls, matching the typed experience already available with `useAgent`.

JavaScript

```js
const client = new AgentClient({
  agent: "my-agent",
  host: window.location.host,
});

// Typed call: method name autocompletes, args and return type inferred
const value = await client.call("getValue");

// Typed stub: direct RPC-style proxy
await client.stub.getValue();
await client.stub.add(1, 2);
```

TypeScript

```ts
const client = new AgentClient<MyAgent>({
  agent: "my-agent",
  host: window.location.host,
});

// Typed call: method name autocompletes, args and return type inferred
const value = await client.call("getValue");

// Typed stub: direct RPC-style proxy
await client.stub.getValue();
await client.stub.add(1, 2);
```

State is automatically inferred from the agent type, so `onStateUpdate` is also typed:

JavaScript

```js
const client = new AgentClient({
  agent: "my-agent",
  host: window.location.host,
  onStateUpdate: (state) => {
    // state is typed as MyAgent's state type
  },
});
```

TypeScript

```ts
const client = new AgentClient<MyAgent>({
  agent: "my-agent",
  host: window.location.host,
  onStateUpdate: (state) => {
    // state is typed as MyAgent's state type
  },
});
```

Existing untyped usage continues to work without changes. The RPC type utilities (`AgentMethods`, `AgentStub`, `RPCMethods`) are now exported from `agents/client` for advanced typing scenarios.

`agents`, `@cloudflare/ai-chat`, and `@cloudflare/codemode` now require `zod ^4.0.0`. Zod v3 is no longer supported.

- Turn serialization: `onChatMessage()` and `_reply()` work is now queued so user requests, tool continuations, and `saveMessages()` never stream concurrently.

- Duplicate messages on stop: Clicking stop during an active stream no longer splits the assistant message into two entries.

- Duplicate messages after tool calls: Orphaned client IDs no longer leak into persistent storage.

`keepAlive()` now uses a lightweight in-memory ref count instead of schedule rows. Multiple concurrent callers share a single alarm cycle. The `@experimental` tag has been removed from both `keepAlive()` and `keepAliveWhile()`.

A new entry point `@cloudflare/codemode/tanstack-ai` adds support for TanStack AI's ↗ `chat()` as an alternative to the Vercel AI SDK's `streamText()`:

JavaScript / TypeScript

```ts
import { createCodeTool, tanstackTools } from "@cloudflare/codemode/tanstack-ai";
import { chat } from "@tanstack/ai";

const codeTool = createCodeTool({
  tools: [tanstackTools(myServerTools)],
  executor,
});

const stream = chat({ adapter, tools: [codeTool], messages });
```

To update to the latest version:

```sh
npm i agents@latest @cloudflare/ai-chat@latest
```
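The ref-counted `keepAlive()` behavior mentioned in this release can be pictured with a small model. This is a sketch under assumptions, not the SDK's code: the `KeepAliveCounter` class, its counters, and the disposer-style return value are all hypothetical names chosen for illustration.

```typescript
// Hypothetical model of a ref-counted keep-alive (not the Agents SDK's code).
class KeepAliveCounter {
  private refs = 0;
  alarmStarts = 0; // times the shared alarm cycle was started
  alarmStops = 0; // times the shared alarm cycle was stopped

  // Returns a disposer. The alarm cycle starts only on the 0 -> 1 transition,
  // so concurrent callers share one cycle.
  acquire(): () => void {
    if (this.refs === 0) this.alarmStarts++;
    this.refs++;
    let released = false;
    return () => {
      if (released) return; // releasing the same handle twice is a no-op
      released = true;
      this.refs--;
      if (this.refs === 0) this.alarmStops++;
    };
  }

  isActive(): boolean {
    return this.refs > 0;
  }
}

// Two overlapping callers share a single alarm cycle.
const keepAliveModel = new KeepAliveCounter();
const releaseFirst = keepAliveModel.acquire();
const releaseSecond = keepAliveModel.acquire();
releaseFirst();
const activeAfterFirstRelease = keepAliveModel.isActive();
releaseSecond();
```

Because the count lives in memory, nothing is written per caller; in this model the cycle stops only when the last holder releases.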

AI Search now offers new REST API endpoints for search and chat that use an OpenAI compatible format. This means you can use the familiar `messages` array structure that works with existing OpenAI SDKs and tools. The messages array also lets you pass previous messages within a session, so the model can maintain context across multiple turns.

| Endpoint | Path |
| --- | --- |
| Chat Completions | `POST /accounts/{account_id}/ai-search/instances/{name}/chat/completions` |
| Search | `POST /accounts/{account_id}/ai-search/instances/{name}/search` |

Here is an example request to the Chat Completions endpoint using the new `messages` array format:

```sh
curl https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances/{NAME}/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "messages": [
      { "role": "system", "content": "You are a helpful documentation assistant." },
      { "role": "user", "content": "How do I get started?" }
    ]
  }'
```

For more details, refer to the AI Search REST API guide.

If you are using the previous AutoRAG API endpoints (`/autorag/rags/`), we recommend migrating to the new endpoints. The previous AutoRAG API endpoints will continue to be fully supported.

Refer to the migration guide for step-by-step instructions.
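For callers working in TypeScript rather than curl, the same Chat Completions request can be assembled as plain data before being handed to `fetch`. This is a minimal sketch: `buildChatCompletionsRequest` and its types are hypothetical helpers, the `assistant` role is assumed from the OpenAI message format, and the follow-up messages are placeholder text rather than real API output.

```typescript
// Hypothetical helper types; only the path, headers, and body shape come from
// the curl example above.
type ChatMessage = { role: "system" | "user" | "assistant"; content: string };

interface ChatCompletionsRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

function buildChatCompletionsRequest(
  accountId: string,
  instanceName: string,
  apiToken: string,
  messages: ChatMessage[]
): ChatCompletionsRequest {
  return {
    url: `https://api.cloudflare.com/client/v4/accounts/${accountId}/ai-search/instances/${instanceName}/chat/completions`,
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${apiToken}`,
    },
    body: JSON.stringify({ messages }),
  };
}

// Earlier turns are sent back in the same array so the model keeps context.
const chatHistory: ChatMessage[] = [
  { role: "system", content: "You are a helpful documentation assistant." },
  { role: "user", content: "How do I get started?" },
  { role: "assistant", content: "(previous answer, placeholder text)" },
  { role: "user", content: "Can you show an example?" },
];

const chatRequest = buildChatCompletionsRequest(
  "ACCOUNT_ID",
  "NAME",
  "API_TOKEN",
  chatHistory
);
// To send it: fetch(chatRequest.url, { method: chatRequest.method,
//   headers: chatRequest.headers, body: chatRequest.body })
```

Keeping the request as data makes it easy to log or unit test the payload before any network call is made.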

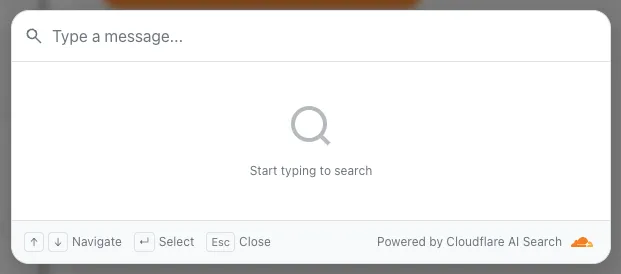

AI Search now supports public endpoints, UI snippets, and MCP, making it easy to add search to your website or connect AI agents.

Public endpoints allow you to expose AI Search capabilities without requiring API authentication. To enable public endpoints:

- Go to AI Search in the Cloudflare dashboard.

- Select your instance, and turn on Public Endpoint in Settings. For more details, refer to Public endpoint configuration.

UI snippets are pre-built search and chat components you can embed in your website. Visit search.ai.cloudflare.com ↗ to configure and preview components for your AI Search instance.

To add a search modal to your page:

```html
<script
  type="module"
  src="https://<INSTANCE_ID>.search.ai.cloudflare.com/assets/v0.0.25/search-snippet.es.js"
></script>

<search-modal-snippet
  api-url="https://<INSTANCE_ID>.search.ai.cloudflare.com/"
  placeholder="Search..."
></search-modal-snippet>
```

For more details, refer to the UI snippets documentation.

The MCP endpoint allows AI agents to search your content via the Model Context Protocol. Connect your MCP client to:

```txt
https://<INSTANCE_ID>.search.ai.cloudflare.com/mcp
```

For more details, refer to the MCP documentation.

AI Search now supports custom metadata filtering, allowing you to define your own metadata fields and filter search results based on attributes like category, version, or any custom field you define.

You can define up to 5 custom metadata fields per AI Search instance. Each field has a name and data type (`text`, `number`, or `boolean`):

```sh
curl -X POST https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "id": "my-instance",
    "type": "r2",
    "source": "my-bucket",
    "custom_metadata": [
      { "field_name": "category", "data_type": "text" },
      { "field_name": "version", "data_type": "number" },
      { "field_name": "is_public", "data_type": "boolean" }
    ]
  }'
```

How you attach metadata depends on your data source:

- R2 bucket: Set metadata using S3-compatible custom headers (`x-amz-meta-*`) when uploading objects. Refer to R2 custom metadata for examples.

- Website: Add `<meta>` tags to your HTML pages. Refer to Website custom metadata for details.

Use custom metadata fields in your search queries alongside built-in attributes like `folder` and `timestamp`:

```sh
curl https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances/{NAME}/search \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "messages": [
      { "content": "How do I configure authentication?", "role": "user" }
    ],
    "ai_search_options": {
      "retrieval": {
        "filters": {
          "category": "documentation",
          "version": { "$gte": 2.0 }
        }
      }
    }
  }'
```

Learn more in the metadata filtering documentation.
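If you build these payloads in application code, a couple of small typed helpers can keep the field definitions and filters consistent. The sketch below is illustrative only: `checkFieldCount`, `buildSearchBody`, and the `FilterValue` type are hypothetical names, and `$gte` is the only operator modelled because it is the only one shown above.

```typescript
// Hypothetical helpers; the field shape and body layout follow the curl
// examples above.
type MetadataField = {
  field_name: string;
  data_type: "text" | "number" | "boolean";
};

// Only the operator shown in the example above is modelled here.
type FilterValue = string | number | boolean | { $gte: number };

const MAX_CUSTOM_FIELDS = 5; // per-instance limit stated above

// Rejects a definition list that exceeds the stated limit.
function checkFieldCount(fields: MetadataField[]): MetadataField[] {
  if (fields.length > MAX_CUSTOM_FIELDS) {
    throw new Error(`At most ${MAX_CUSTOM_FIELDS} custom metadata fields are allowed`);
  }
  return fields;
}

// Builds the JSON body for the search endpoint with retrieval filters.
function buildSearchBody(query: string, filters: Record<string, FilterValue>): string {
  return JSON.stringify({
    messages: [{ role: "user", content: query }],
    ai_search_options: { retrieval: { filters } },
  });
}

const metadataFields = checkFieldCount([
  { field_name: "category", data_type: "text" },
  { field_name: "version", data_type: "number" },
  { field_name: "is_public", data_type: "boolean" },
]);

const searchBody = buildSearchBody("How do I configure authentication?", {
  category: "documentation",
  version: { $gte: 2.0 },
});
```

The resulting `searchBody` string can be sent as the body of the search request shown in the curl example.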


R2 SQL now supports an expanded SQL grammar so you can write richer analytical queries without exporting data. This release adds CASE expressions, column aliases, arithmetic in clauses, 163 scalar functions, 33 aggregate functions, EXPLAIN, Common Table Expressions (CTEs), and full struct/array/map access. R2 SQL is Cloudflare's serverless, distributed analytics query engine for querying Apache Iceberg ↗ tables stored in R2 Data Catalog. This page documents the supported SQL syntax.

- Column aliases: `SELECT col AS alias` now works in all clauses

- CASE expressions: conditional logic directly in SQL (searched and simple forms)

- Scalar functions: 163 new functions across math, string, datetime, regex, crypto, encoding, and type inspection categories

- Aggregate functions: statistical (variance, stddev, correlation, regression), bitwise, boolean, and positional aggregates join the existing basic and approximate functions

- Complex types: query struct fields with bracket notation, use 46 array functions, and extract map keys/values

- Common table expressions (CTEs): use `WITH ... AS` to define named temporary result sets. Chained CTEs are supported. All CTEs must reference the same single table.

- Full expression support: arithmetic, type casting (`CAST`, `TRY_CAST`, `::` shorthand), and `EXTRACT` in SELECT, WHERE, GROUP BY, HAVING, and ORDER BY

```sql
SELECT source,
  CASE
    WHEN AVG(price) > 30 THEN 'premium'
    WHEN AVG(price) > 10 THEN 'mid-tier'
    ELSE 'budget'
  END AS tier,
  round(stddev(price), 2) AS price_volatility,
  approx_percentile_cont(price, 0.95) AS p95_price
FROM my_namespace.sales_data
GROUP BY source
```

```sql
SELECT product_name,
  pricing['price'] AS price,
  array_to_string(tags, ', ') AS tag_list
FROM my_namespace.products
WHERE array_has(tags, 'Action')
ORDER BY pricing['price'] DESC
LIMIT 10
```

```sql
WITH monthly AS (
  SELECT date_trunc('month', sale_timestamp) AS month,
    department,
    COUNT(*) AS transactions,
    round(AVG(total_amount), 2) AS avg_amount
  FROM my_namespace.sales_data
  WHERE sale_timestamp BETWEEN '2025-01-01T00:00:00Z' AND '2025-12-31T23:59:59Z'
  GROUP BY date_trunc('month', sale_timestamp), department
),
ranked AS (
  SELECT month, department, transactions, avg_amount,
    CASE
      WHEN avg_amount > 1000 THEN 'high-value'
      WHEN avg_amount > 500 THEN 'mid-value'
      ELSE 'standard'
    END AS tier
  FROM monthly
  WHERE transactions > 100
)
SELECT * FROM ranked
ORDER BY month, avg_amount DESC
```

For the full function reference and syntax details, refer to the SQL reference. For limitations and best practices, refer to Limitations and best practices.
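If the searched CASE form is unfamiliar, its branch ordering in the first query can be read as an ordinary if/else chain. The TypeScript sketch below mirrors that logic for a single averaged value; `priceTier` is a hypothetical helper for illustration and is not part of R2 SQL.

```typescript
// Mirrors the searched CASE from the first query: branches are tested from
// top to bottom and the first matching branch wins.
function priceTier(avgPrice: number): "premium" | "mid-tier" | "budget" {
  if (avgPrice > 30) return "premium"; // WHEN AVG(price) > 30
  if (avgPrice > 10) return "mid-tier"; // WHEN AVG(price) > 10
  return "budget"; // ELSE
}

// Boundary values fall through to the next branch because the comparisons
// are strict.
const tiers = [45, 30, 10].map(priceTier);
```

Because the comparisons are strict, an average of exactly 30 is classified as `mid-tier` and exactly 10 as `budget`.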


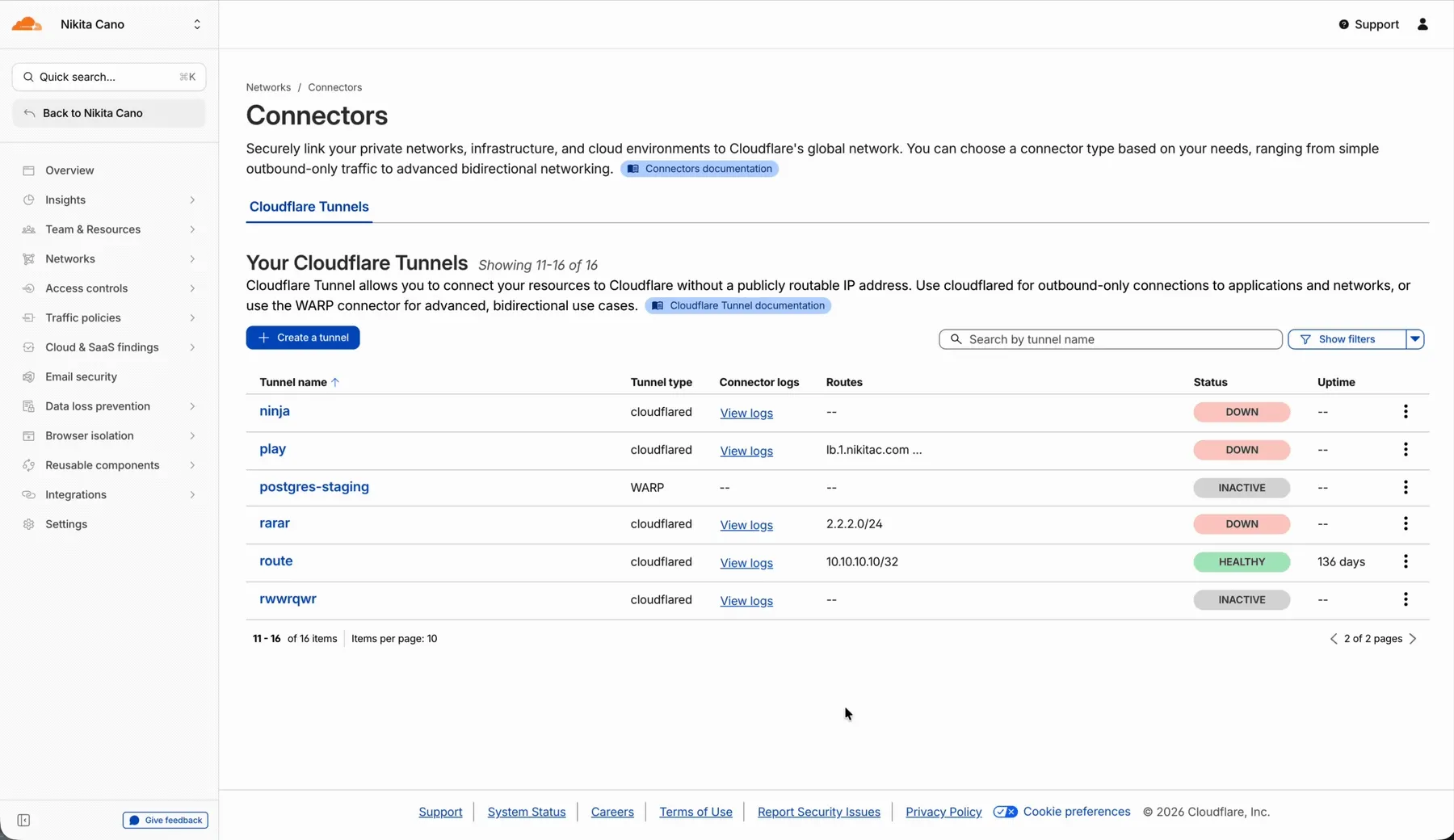

In the Cloudflare One dashboard, the overview page for a specific Cloudflare Tunnel now shows all replicas of that tunnel and supports streaming logs from multiple replicas at once.

Previously, you could only stream logs from one replica at a time. With this update:

- Replicas on the tunnel overview: All active replicas for the selected tunnel now appear on that tunnel's overview page under Connectors. Select any replica to stream its logs.

- Multi-connector log streaming: Stream logs from multiple replicas simultaneously, making it easier to correlate events across your infrastructure during debugging or incident response.

To try it out, log in to Cloudflare One ↗ and go to Networks > Connectors > Cloudflare Tunnels. Select View logs next to the tunnel you want to monitor.

For more information, refer to Tunnel log streams and Deploy replicas.