Previously, if you wanted to develop or deploy a worker with attached resources, you'd have to first manually create the desired resources. Now, if your Wrangler configuration file includes a KV namespace, D1 database, or R2 bucket that does not yet exist on your account, you can develop locally and deploy your application seamlessly, without having to run additional commands.

Automatic provisioning is launching as an open beta, and we'd love to hear your feedback to help us make improvements! It currently works for KV, R2, and D1 bindings. You can disable the feature using the `--no-x-provision` flag.

To use this feature, update to `wrangler@4.45.0` and add bindings to your config file without resource IDs, e.g.:

```jsonc
{
  "kv_namespaces": [{ "binding": "MY_KV" }],
  "d1_databases": [{ "binding": "MY_DB" }],
  "r2_buckets": [{ "binding": "MY_R2" }],
}
```

`wrangler dev` will then automatically create these resources for you locally, and on your next run of `wrangler deploy`, Wrangler will call the Cloudflare API to create the requested resources and link them to your Worker.

Though resource IDs will be automatically written back to your Wrangler config file after resource creation, resources will stay linked across future deploys even without adding the resource IDs to the config file. This is especially useful for shared templates, which now no longer need to include account-specific resource IDs when adding a binding.
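To make the "bindings without resource IDs" idea concrete, here is a minimal TypeScript sketch that lists which bindings in a config object have no identifier yet. It is an illustration only, not Wrangler's implementation: the `findUnprovisioned` helper is hypothetical, and it assumes the identifier fields are `id` for KV, `database_id` for D1, and `bucket_name` for R2.

```typescript
// Hypothetical helper, not part of Wrangler. It only illustrates the idea
// that a binding without a resource identifier is a provisioning candidate.
type Binding = { binding: string; [key: string]: unknown };

interface BindingConfig {
  kv_namespaces?: Binding[];
  d1_databases?: Binding[];
  r2_buckets?: Binding[];
}

// Assumed identifier field for each binding type.
const ID_FIELD: Record<keyof BindingConfig, string> = {
  kv_namespaces: "id",
  d1_databases: "database_id",
  r2_buckets: "bucket_name",
};

function findUnprovisioned(config: BindingConfig): string[] {
  const missing: string[] = [];
  for (const kind of Object.keys(ID_FIELD) as (keyof BindingConfig)[]) {
    for (const entry of config[kind] ?? []) {
      // No identifier present means no existing resource is referenced.
      if (typeof entry[ID_FIELD[kind]] !== "string") {
        missing.push(`${kind}:${entry.binding}`);
      }
    }
  }
  return missing;
}

const pending = findUnprovisioned({
  kv_namespaces: [{ binding: "MY_KV" }],
  d1_databases: [{ binding: "MY_DB", database_id: "existing-id" }],
  r2_buckets: [{ binding: "MY_R2" }],
});
console.log(pending);
```

In this sample only `MY_KV` and `MY_R2` are reported, because `MY_DB` already carries a `database_id`.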

The Cloudflare Vite plugin now supports TanStack Start apps. Get started with new or existing projects.

Create a new TanStack Start project that uses the Cloudflare Vite plugin via the `create-cloudflare` CLI:

```sh
# Run the command that matches your package manager
npm create cloudflare@latest -- my-tanstack-start-app --framework=tanstack-start
yarn create cloudflare my-tanstack-start-app --framework=tanstack-start
pnpm create cloudflare@latest my-tanstack-start-app --framework=tanstack-start
```

Migrate an existing TanStack Start project to use the Cloudflare Vite plugin:

- Install `@cloudflare/vite-plugin` and `wrangler`

  ```sh
  # Run the command that matches your package manager
  npm i -D @cloudflare/vite-plugin wrangler
  yarn add -D @cloudflare/vite-plugin wrangler
  pnpm add -D @cloudflare/vite-plugin wrangler
  bun add -d @cloudflare/vite-plugin wrangler
  ```

- Add the Cloudflare plugin to your Vite config

  ```typescript
  // vite.config.ts
  import { defineConfig } from "vite";
  import { tanstackStart } from "@tanstack/react-start/plugin/vite";
  import viteReact from "@vitejs/plugin-react";
  import { cloudflare } from "@cloudflare/vite-plugin";

  export default defineConfig({
    plugins: [
      cloudflare({ viteEnvironment: { name: "ssr" } }),
      tanstackStart(),
      viteReact(),
    ],
  });
  ```

- Add your Worker config file

  ```jsonc
  {
    "$schema": "./node_modules/wrangler/config-schema.json",
    "name": "my-tanstack-start-app",
    // Set this to today's date
    "compatibility_date": "2026-05-22",
    "compatibility_flags": ["nodejs_compat"],
    "main": "@tanstack/react-start/server-entry"
  }
  ```

  ```toml
  "$schema" = "./node_modules/wrangler/config-schema.json"
  name = "my-tanstack-start-app"
  # Set this to today's date
  compatibility_date = "2026-05-22"
  compatibility_flags = [ "nodejs_compat" ]
  main = "@tanstack/react-start/server-entry"
  ```

- Modify the scripts in your `package.json`

  ```json
  {
    "scripts": {
      "dev": "vite dev",
      "build": "vite build && tsc --noEmit",
      "start": "node .output/server/index.mjs",
      "preview": "vite preview",
      "deploy": "npm run build && wrangler deploy",
      "cf-typegen": "wrangler types"
    }
  }
  ```

See the TanStack Start framework guide for more info.

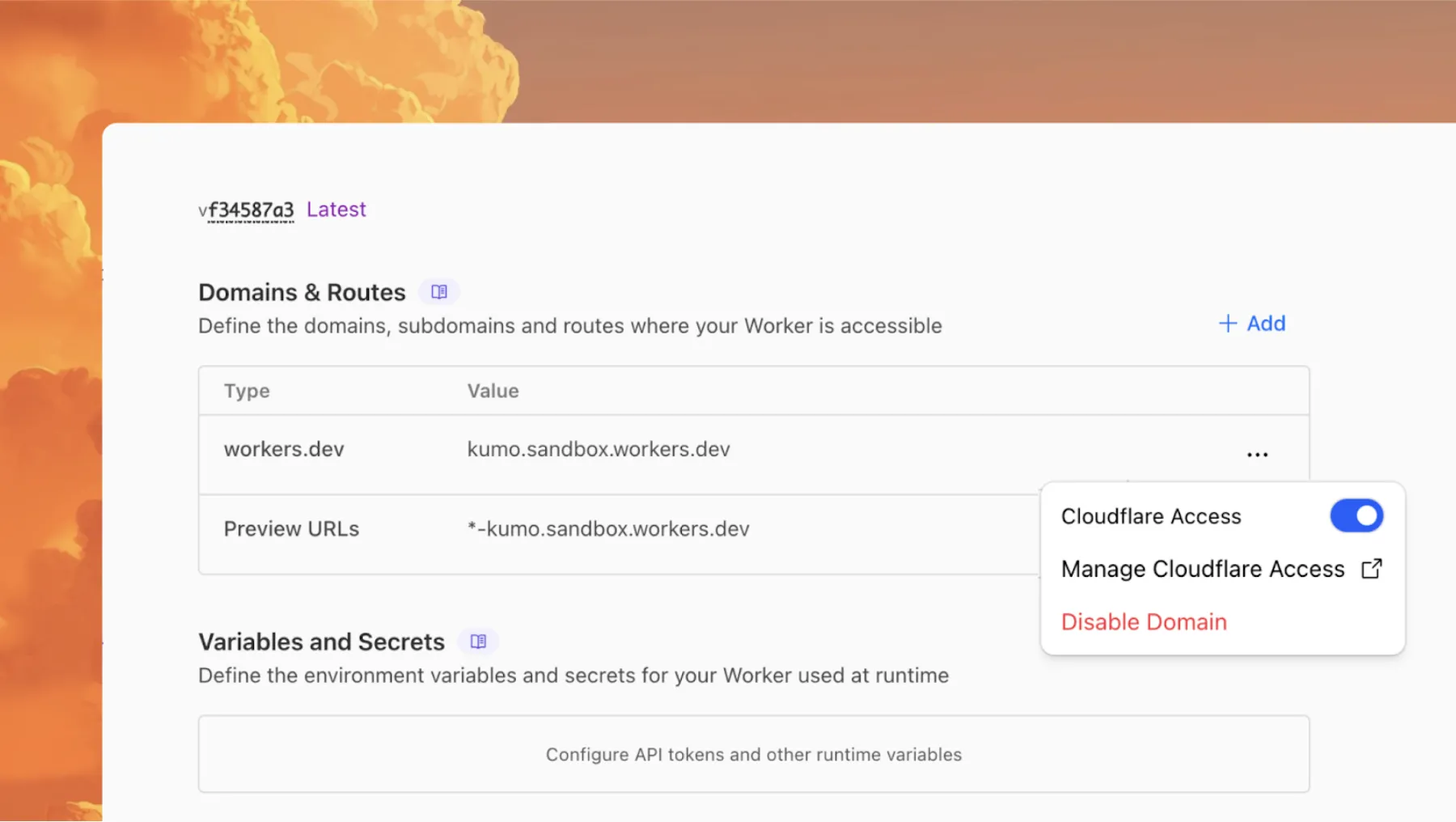

We have updated the default behavior for Cloudflare Workers Preview URLs. Going forward, if a preview URL setting is not explicitly configured during deployment, its default behavior will automatically match the setting of your `workers.dev` subdomain.

This change is intended to provide a more intuitive and secure experience by aligning your preview URL's default state with your `workers.dev` configuration to prevent cases where a preview URL might remain public even after you disabled your `workers.dev` route.

What this means for you:

- If neither setting is configured: both the workers.dev route and the preview URL will default to enabled

- If your workers.dev route is enabled and you do not explicitly set Preview URLs to enabled or disabled: Preview URLs will default to enabled

- If your workers.dev route is disabled and you do not explicitly set Preview URLs to enabled or disabled: Preview URLs will default to disabled

You can override the default setting by explicitly enabling or disabling the preview URL in your Worker's configuration through the API, Dashboard, or Wrangler.

Wrangler Version Behavior

The default behavior depends on the version of Wrangler you are using. This new logic applies to the latest version. Here is a summary of the behavior across different versions:

- Before v4.34.0: Preview URLs defaulted to enabled, regardless of the workers.dev setting.

- v4.34.0 up to (but not including) v4.44.0: Preview URLs defaulted to disabled, regardless of the workers.dev setting.

- v4.44.0 or later: Preview URLs now default to matching your workers.dev setting.
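The defaults listed above can be summarized as a small decision function. The TypeScript below is a sketch for reasoning about the rules, not Wrangler's code: `previewUrlsEnabled`, `parseVersion`, and `isBefore` are hypothetical names, and `undefined` stands for "not explicitly configured".

```typescript
// Illustrative only: these names are hypothetical and this is not
// Wrangler's implementation. It encodes the defaults listed above.
type Version = [number, number, number];

function parseVersion(v: string): Version {
  const [major = 0, minor = 0, patch = 0] = v
    .replace(/^v/, "")
    .split(".")
    .map(Number);
  return [major, minor, patch];
}

// True when version a is lower than version b.
function isBefore(a: Version, b: Version): boolean {
  for (let i = 0; i < 3; i++) {
    if (a[i] !== b[i]) return a[i] < b[i];
  }
  return false;
}

function previewUrlsEnabled(
  wranglerVersion: string,
  workersDev: boolean | undefined, // undefined = not configured
  previewUrls: boolean | undefined, // undefined = not configured
): boolean {
  // An explicit preview URL setting always wins.
  if (previewUrls !== undefined) return previewUrls;

  const version = parseVersion(wranglerVersion);
  if (isBefore(version, [4, 34, 0])) return true; // enabled regardless of workers.dev
  if (isBefore(version, [4, 44, 0])) return false; // disabled regardless of workers.dev
  // v4.44.0 or later: follow workers.dev, which itself defaults to enabled.
  return workersDev ?? true;
}

console.log(previewUrlsEnabled("4.44.0", false, undefined));
console.log(previewUrlsEnabled("4.44.0", true, undefined));
```

The two calls at the end show the new behavior: with the `workers.dev` route disabled the preview URL defaults to disabled, and with it enabled the preview URL defaults to enabled.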

Why we’re making this change

In July, we introduced preview URLs to Workers, which let you preview code changes before deploying to production. This made disabling your Worker’s workers.dev URL an ambiguous action: the preview URL, served as a subdomain of `workers.dev` (for example, `preview-id-worker-name.account-name.workers.dev`), would still be live even if you had disabled your Worker’s `workers.dev` route. If you misinterpreted what it meant to disable your `workers.dev` route, you might unintentionally leave preview URLs enabled when you didn’t mean to, and expose them to the public Internet.

To address this, we made a one-time update to disable preview URLs on existing Workers that had their workers.dev route disabled and changed the default behavior to be disabled for all new deployments where a preview URL setting was not explicitly configured.

While this change helped secure many customers, it was disruptive for customers who keep their `workers.dev` route enabled and actively use the preview functionality, as it now required them to explicitly enable preview URLs on every redeployment.

This new, more intuitive behavior ensures that your preview URL settings align with your `workers.dev` configuration by default, providing a more secure and predictable experience.

Securing access to `workers.dev` and preview URL endpoints

To further secure your `workers.dev` subdomain and preview URL, you can enable Cloudflare Access with a single click in your Worker's settings to limit access to specific users or groups.

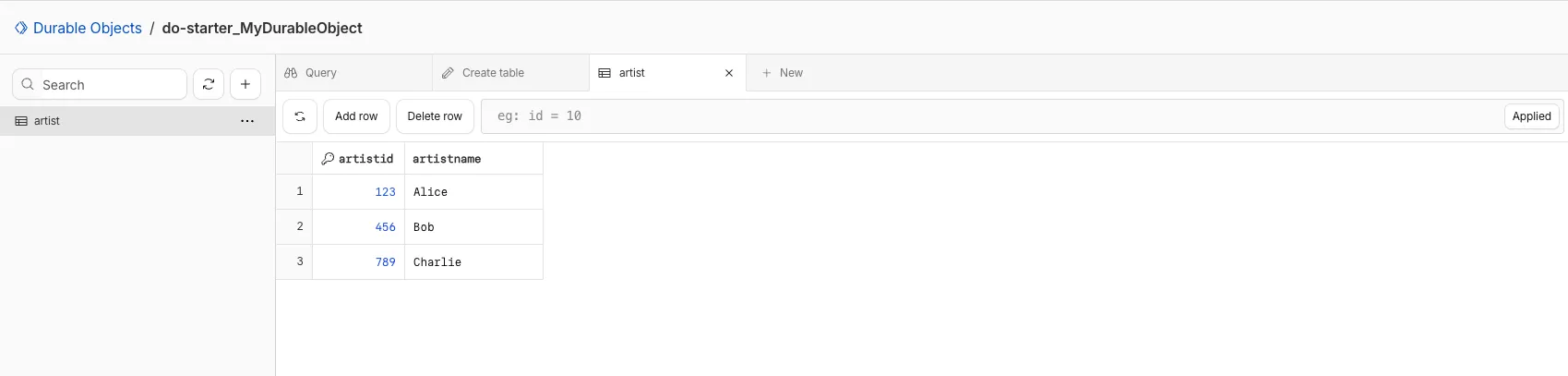

You can now view and write to each Durable Object's storage using a UI editor on the Cloudflare dashboard. Only Durable Objects using SQLite storage can use Data Studio.

Data Studio unlocks easier data access with Durable Objects, from prototyping application data models to debugging production storage usage. Before, querying your Durable Objects data required deploying a Worker.

To access a Durable Object, you can provide an object's unique name or ID generated by Cloudflare. Data Studio requires you to have at least the `Workers Platform Admin` role, and all queries are captured with audit logging for your security and compliance needs. Queries executed by Data Studio send requests to your remote, deployed objects and incur normal usage billing.

To learn more, visit the Data Studio documentation. If you have feedback or suggestions for the new Data Studio, please share your experience on Discord.

You can now upload a Worker that takes up to 1 second to parse and execute its global scope. Previously, startup time was limited to 400 ms.

This allows you to run Workers that import more complex packages and execute more code prior to requests being handled.

For more information, see the documentation on Workers startup limits.

You can now upload Workers with static assets (like HTML, CSS, JavaScript, images) with the Cloudflare Terraform provider v5.11.0, making it even easier to deploy and manage full-stack apps with IaC.

Previously, you couldn't use Terraform to upload static assets without writing custom scripts to handle generating an asset manifest, calling the Cloudflare API to upload assets in chunks, and handling change detection.

Now, you simply define the directory where your assets are built, and we handle the rest. Check out the examples for what this looks like in Terraform configuration.

You can get started today with the Cloudflare Terraform provider (v5.11.0), using either the existing `cloudflare_workers_script` resource, or the beta `cloudflare_worker_version` resource.

Here's how you can use the existing `cloudflare_workers_script` resource to upload your Worker code and assets in one shot.

```hcl
resource "cloudflare_workers_script" "my_app" {
  account_id     = var.account_id
  script_name    = "my-app"
  content_file   = "./dist/worker/index.js"
  content_sha256 = filesha256("./dist/worker/index.js")
  main_module    = "index.js"

  # Just point to your assets directory - that's it!
  assets = {
    directory = "./dist/static"
  }
}
```

And here's an example using the beta `cloudflare_worker_version` resource, alongside the `cloudflare_worker` and `cloudflare_workers_deployment` resources:

```hcl
# This tracks the existence of your Worker, so that you
# can upload code and assets separately from tracking Worker state.
resource "cloudflare_worker" "my_app" {
  account_id = var.account_id
  name       = "my-app"
}

resource "cloudflare_worker_version" "my_app_version" {
  account_id = var.account_id
  worker_id  = cloudflare_worker.my_app.id

  # Just point to your assets directory - that's it!
  assets = {
    directory = "./dist/static"
  }

  modules = [{
    name         = "index.js"
    content_file = "./dist/worker/index.js"
    content_type = "application/javascript+module"
  }]
}

resource "cloudflare_workers_deployment" "my_app_deployment" {
  account_id  = var.account_id
  script_name = cloudflare_worker.my_app.name
  strategy    = "percentage"

  versions = [{
    version_id = cloudflare_worker_version.my_app_version.id
    percentage = 100
  }]
}
```

Under the hood, the Cloudflare Terraform provider now handles the same logic that Wrangler uses for static asset uploads. This includes scanning your assets directory, computing hashes for each file, generating a manifest with file metadata, and calling the Cloudflare API to upload any missing files in chunks. We support large directories with parallel uploads and chunking, and when the asset manifest hash changes, we detect what's changed and trigger an upload for only those changed files.

- Get started with the Cloudflare Terraform provider (v5.11.0)

- You can use the existing `cloudflare_workers_script` resource to upload your Worker code and assets in one resource.

- Or you can use the new beta `cloudflare_worker_version` resource (along with the `cloudflare_worker` and `cloudflare_workers_deployment` resources) to more granularly control the lifecycle of each Worker resource.

You can now create and manage Workflows using Terraform, with support added in the Cloudflare Terraform provider v5.11.0. Workflows allow you to build durable, multi-step applications without needing to worry about retrying failed tasks or managing infrastructure.

Now, you can deploy and manage Workflows through Terraform using the new `cloudflare_workflow` resource:

```hcl
resource "cloudflare_workflow" "my_workflow" {
  account_id    = var.account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = "my-worker"
}
```

Here are full examples of how to configure `cloudflare_workflow` in Terraform, using the existing `cloudflare_workers_script` resource, and the beta `cloudflare_worker_version` resource.

```hcl
resource "cloudflare_workers_script" "workflow_worker" {
  account_id     = var.cloudflare_account_id
  script_name    = "my-workflow-worker"
  content_file   = "${path.module}/../dist/worker/index.js"
  content_sha256 = filesha256("${path.module}/../dist/worker/index.js")
  main_module    = "index.js"
}

resource "cloudflare_workflow" "workflow" {
  account_id    = var.cloudflare_account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = cloudflare_workers_script.workflow_worker.script_name
}
```

You can more granularly control the lifecycle of each Worker resource using the beta `cloudflare_worker_version` resource, alongside the `cloudflare_worker` and `cloudflare_workers_deployment` resources.

```hcl
resource "cloudflare_worker" "workflow_worker" {
  account_id = var.cloudflare_account_id
  name       = "my-workflow-worker"
}

resource "cloudflare_worker_version" "workflow_worker_version" {
  account_id  = var.cloudflare_account_id
  worker_id   = cloudflare_worker.workflow_worker.id
  main_module = "index.js"

  modules = [{
    name         = "index.js"
    content_file = "${path.module}/../dist/worker/index.js"
    content_type = "application/javascript+module"
  }]
}

resource "cloudflare_workers_deployment" "workflow_deployment" {
  account_id  = var.cloudflare_account_id
  script_name = cloudflare_worker.workflow_worker.name
  strategy    = "percentage"

  versions = [{
    version_id = cloudflare_worker_version.workflow_worker_version.id
    percentage = 100
  }]
}

resource "cloudflare_workflow" "my_workflow" {
  account_id    = var.cloudflare_account_id
  workflow_name = "my-workflow"
  class_name    = "MyWorkflow"
  script_name   = cloudflare_worker.workflow_worker.name
}
```

- Get started with the Cloudflare Terraform provider (v5.11.0) and the new `cloudflare_workflow` resource.

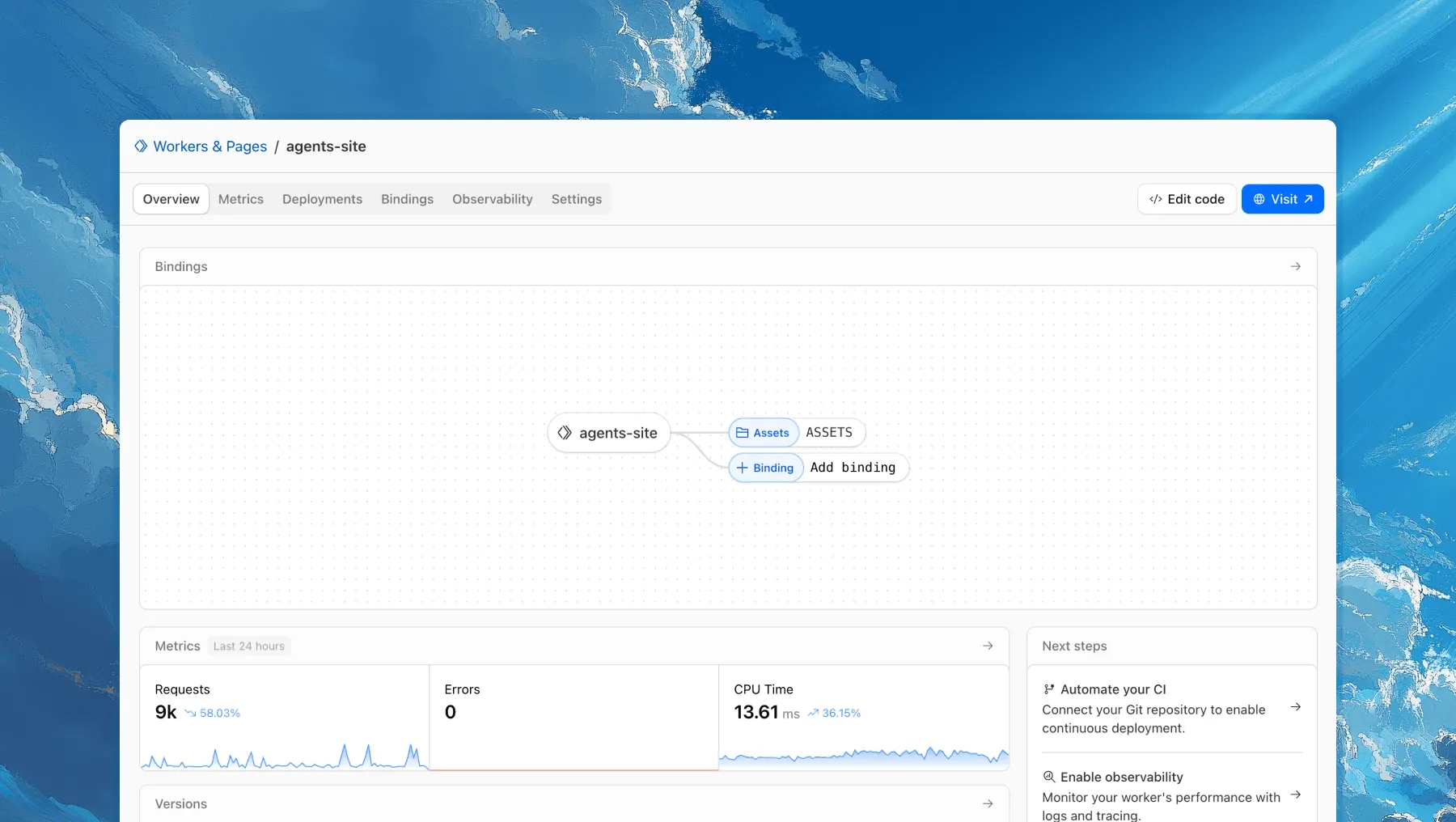

Each of your Workers now has a new overview page in the Cloudflare dashboard.

The goal is to make it easier to understand your Worker without digging through multiple tabs. Think of it as a new home base, a place to get a high-level overview of what's going on.

It's the first place you land when you open a Worker in the dashboard, and it gives you an immediate view of what’s going on. You can see requests, errors, and CPU time at a glance. You can view and add bindings, and see recent versions of your app, including who published them.

Navigation is also simpler, with visually distinct tabs at the top of the page. At the bottom right you'll find guided steps for what to do next that are based on the state of your Worker, such as adding a binding or connecting a custom domain.

We plan to add more here over time: better insights, more controls, and ways to manage your Worker from one page.

If you have feedback or suggestions for the new Overview page or your Cloudflare Workers experience in general, we'd love to hear from you. Join the Cloudflare developer community on Discord.

You can now enable Cloudflare Access for your `workers.dev` and Preview URLs in a single click.

Access allows you to limit access to your Workers to specific users or groups. You can limit access to yourself, your teammates, your organization, or anyone else you specify in your Access policy.

To enable Cloudflare Access:

- In the Cloudflare dashboard, go to the Workers & Pages page.

- In Overview, select your Worker.

- Go to Settings > Domains & Routes.

- For `workers.dev` or Preview URLs, click Enable Cloudflare Access.

- Optionally, to configure the Access application, click Manage Cloudflare Access. There, you can change the email addresses you want to authorize. View Access policies to learn about configuring alternate rules.

To fully secure your application, it is important that you validate the JWT that Cloudflare Access adds to the `Cf-Access-Jwt-Assertion` header on the incoming request.

The following code will validate the JWT using the jose NPM package:

```javascript
import { jwtVerify, createRemoteJWKSet } from "jose";

export default {
  async fetch(request, env, ctx) {
    // Verify the POLICY_AUD environment variable is set
    if (!env.POLICY_AUD) {
      return new Response("Missing required audience", {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }

    // Get the JWT from the request headers
    const token = request.headers.get("cf-access-jwt-assertion");

    // Check if token exists
    if (!token) {
      return new Response("Missing required CF Access JWT", {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }

    try {
      // Create JWKS from your team domain
      const JWKS = createRemoteJWKSet(
        new URL(`${env.TEAM_DOMAIN}/cdn-cgi/access/certs`),
      );

      // Verify the JWT
      const { payload } = await jwtVerify(token, JWKS, {
        issuer: env.TEAM_DOMAIN,
        audience: env.POLICY_AUD,
      });

      // Token is valid, proceed with your application logic
      return new Response(`Hello ${payload.email || "authenticated user"}!`, {
        headers: { "Content-Type": "text/plain" },
      });
    } catch (error) {
      // Token verification failed
      return new Response(`Invalid token: ${error.message}`, {
        status: 403,
        headers: { "Content-Type": "text/plain" },
      });
    }
  },
};
```

Add these environment variables to your Worker:

- `POLICY_AUD`: Your application's AUD tag
- `TEAM_DOMAIN`: `https://<your-team-name>.cloudflareaccess.com`

Both values are shown in the modal that appears when you enable Cloudflare Access.

You can set these variables by adding them to your Worker's Wrangler configuration file, or via the Cloudflare dashboard under Workers & Pages > your-worker > Settings > Environment Variables.


You can now perform more powerful queries directly in Workers Analytics Engine with a major expansion of our SQL function library.

Workers Analytics Engine allows you to ingest and store high-cardinality data at scale (such as custom analytics) and query your data through a simple SQL API.

Today, we've expanded Workers Analytics Engine's SQL capabilities with several new functions:

- `argMin()` - Returns the value associated with the minimum in a group
- `argMax()` - Returns the value associated with the maximum in a group
- `topK()` - Returns an array of the most frequent values in a group
- `topKWeighted()` - Returns an array of the most frequent values in a group using weights
- `first_value()` - Returns the first value in an ordered set of values within a partition
- `last_value()` - Returns the last value in an ordered set of values within a partition

- `bitAnd()` - Returns the bitwise AND of two expressions
- `bitCount()` - Returns the number of bits set to one in the binary representation of a number
- `bitHammingDistance()` - Returns the number of bits that differ between two numbers
- `bitNot()` - Returns a number with all bits flipped
- `bitOr()` - Returns the inclusive bitwise OR of two expressions
- `bitRotateLeft()` - Rotates all bits in a number left by specified positions
- `bitRotateRight()` - Rotates all bits in a number right by specified positions
- `bitShiftLeft()` - Shifts all bits in a number left by specified positions
- `bitShiftRight()` - Shifts all bits in a number right by specified positions
- `bitTest()` - Returns the value of a specific bit in a number
- `bitXor()` - Returns the bitwise exclusive-or of two expressions

- `abs()` - Returns the absolute value of a number
- `log()` - Computes the natural logarithm of a number
- `round()` - Rounds a number to a specified number of decimal places
- `ceil()` - Rounds a number up to the nearest integer
- `floor()` - Rounds a number down to the nearest integer
- `pow()` - Returns a number raised to the power of another number

- `lowerUTF8()` - Converts a string to lowercase using UTF-8 encoding
- `upperUTF8()` - Converts a string to uppercase using UTF-8 encoding

- `hex()` - Converts a number to its hexadecimal representation
- `bin()` - Converts a string to its binary representation

New type conversion functions:

- `toUInt8()` - Converts any numeric expression, or expression resulting in a string representation of a decimal, into an unsigned 8 bit integer

Whether you're building usage-based billing systems, customer analytics dashboards, or other custom analytics, these functions let you get the most out of your data. Get started with Workers Analytics Engine and explore all available functions in our SQL reference documentation.
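As a rough mental model of what a few of these functions compute, the TypeScript sketch below mirrors `argMax()`, `topK()`, `bitCount()`, and `bitHammingDistance()` over an in-memory array. The helper implementations, the sample rows, and the exact-counting approach are assumptions for illustration; the real functions run inside the SQL API and may differ in tie-breaking or approximation.

```typescript
interface Row {
  path: string;
  latencyMs: number;
}

// Hypothetical sample data standing in for rows in a dataset.
const rows: Row[] = [
  { path: "/home", latencyMs: 12 },
  { path: "/api", latencyMs: 48 },
  { path: "/home", latencyMs: 7 },
  { path: "/docs", latencyMs: 30 },
  { path: "/home", latencyMs: 15 },
  { path: "/api", latencyMs: 22 },
];

// argMax(arg, val): the `arg` taken from the row where `val` is largest.
function argMax<T, A>(items: T[], arg: (t: T) => A, val: (t: T) => number): A | undefined {
  let best: T | undefined;
  for (const item of items) {
    if (best === undefined || val(item) > val(best)) best = item;
  }
  return best === undefined ? undefined : arg(best);
}

// topK(k): the k most frequent values, most frequent first (exact counts here).
function topK(values: string[], k: number): string[] {
  const counts: Record<string, number> = {};
  for (const v of values) counts[v] = (counts[v] ?? 0) + 1;
  return Object.keys(counts)
    .sort((a, b) => counts[b] - counts[a])
    .slice(0, k);
}

// bitCount(n): number of bits set to one (32-bit unsigned view of n).
function bitCount(n: number): number {
  let count = 0;
  for (let x = n >>> 0; x !== 0; x >>>= 1) count += x & 1;
  return count;
}

// bitHammingDistance(a, b): number of bit positions where a and b differ.
function bitHammingDistance(a: number, b: number): number {
  return bitCount(a ^ b);
}

console.log(argMax(rows, (r) => r.path, (r) => r.latencyMs)); // "/api"
console.log(topK(rows.map((r) => r.path), 2)); // [ "/home", "/api" ]
console.log(bitHammingDistance(0b1010, 0b0110)); // 2
```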

The `ctx.exports` API contains automatically-configured bindings corresponding to your Worker's top-level exports. For each top-level export extending `WorkerEntrypoint`, `ctx.exports` will contain a Service Binding by the same name, and for each export extending `DurableObject` (and for which storage has been configured via a migration), `ctx.exports` will contain a Durable Object namespace binding. This means you no longer have to configure these bindings explicitly in `wrangler.jsonc`/`wrangler.toml`.

Example:

```javascript
import { WorkerEntrypoint } from "cloudflare:workers";

export class Greeter extends WorkerEntrypoint {
  greet(name) {
    return `Hello, ${name}!`;
  }
}

export default {
  async fetch(request, env, ctx) {
    let greeting = await ctx.exports.Greeter.greet("World");
    return new Response(greeting);
  },
};
```

At present, you must use the `enable_ctx_exports` compatibility flag to enable this API, though it will be on by default in the future.

You can run multiple Workers in a single dev command by passing multiple config files to `wrangler dev`:

```sh
wrangler dev --config ./web/wrangler.jsonc --config ./api/wrangler.jsonc
```

Previously, if you ran the command above and then also ran `wrangler dev` for a different Worker, the Workers running in separate `wrangler dev` sessions could not communicate with each other. This prevented you from being able to use Service Bindings and Tail Workers in local development when running separate `wrangler dev` sessions.

Now, the following works as expected:

```sh
# Terminal 1: Run your application that includes both Web and API workers
wrangler dev --config ./web/wrangler.jsonc --config ./api/wrangler.jsonc

# Terminal 2: Run your auth worker separately
wrangler dev --config ./auth/wrangler.jsonc
```

These Workers can now communicate with each other across separate dev commands, regardless of your development setup.

```typescript
// ./api/src/index.ts
export default {
  async fetch(request, env) {
    // This service binding call now works across dev commands
    const authorized = await env.AUTH.isAuthorized(request);

    if (!authorized) {
      return new Response('Unauthorized', { status: 401 });
    }

    return new Response('Hello from API Worker!', { status: 200 });
  },
};
```

Check out the Developing with multiple Workers guide to learn more about the different approaches and when to use each one.

Rate Limiting within Cloudflare Workers is now Generally Available (GA).

The `ratelimit` binding is now stable and recommended for all production workloads. Existing deployments using the unsafe binding will continue to function to allow for a smooth transition.

For more details, refer to Workers Rate Limiting documentation.
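As a minimal sketch of how a rate limiting binding is typically called from Worker code, the TypeScript below checks a key and maps the result to an HTTP status. The binding name `MY_RATE_LIMITER`, the `RateLimitBinding` interface, the `checkRequest` helper, and the in-memory `fakeLimiter` are assumptions added so the snippet runs outside the Workers runtime; refer to the Rate Limiting documentation for the exact configuration and types.

```typescript
// Minimal shape of the binding as used here: limit() resolves to { success }.
interface RateLimitBinding {
  limit(options: { key: string }): Promise<{ success: boolean }>;
}

interface Env {
  MY_RATE_LIMITER: RateLimitBinding; // hypothetical binding name
}

// Returns the HTTP status a Worker might respond with for this caller.
async function checkRequest(env: Env, key: string): Promise<number> {
  const { success } = await env.MY_RATE_LIMITER.limit({ key });
  return success ? 200 : 429;
}

// In-memory stand-in for local experimentation: allows `max` calls per key
// and never resets, unlike a real limiter with a time period.
function fakeLimiter(max: number): RateLimitBinding {
  const seen: Record<string, number> = {};
  return {
    async limit({ key }) {
      seen[key] = (seen[key] ?? 0) + 1;
      return { success: seen[key] <= max };
    },
  };
}
```

Choosing the key (for example a user ID or API token rather than an IP address) decides what is actually being limited, so it is worth picking deliberately.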

In workers-rs, Rust panics were previously non-recoverable. A panic would put the Worker into an invalid state, and further function calls could result in memory overflows or exceptions.

Now, when a panic occurs, in-flight requests will throw 500 errors, but the Worker will automatically and instantly recover for future requests.

This ensures more reliable deployments. Automatic panic recovery is enabled for all new workers-rs deployments as of version 0.6.5, with no configuration required.

Rust Workers are built with Wasm Bindgen, which treats panics as non-recoverable. After a panic, the entire Wasm application is considered to be in an invalid state.

We now attach a default panic handler in Rust:

```rust
std::panic::set_hook(Box::new(move |panic_info| {
    hook_impl(panic_info);
}));
```

Which is registered by default in the JS initialization:

```javascript
import { setPanicHook } from "./index.js";

setPanicHook(function (err) {
  console.error("Panic handler!", err);
});
```

When a panic occurs, we reset the Wasm state to revert the Wasm application to how it was when the application started.

We worked upstream on the Wasm Bindgen project to implement a new `--experimental-reset-state-function` compilation option which outputs a new `__wbg_reset_state` function.

This function clears all internal state related to the Wasm VM, and updates all function bindings in place to reference the new WebAssembly instance.

One other necessary change here was associating Wasm-created JS objects with an instance identity. If a JS object created by an earlier instance is then passed into a new instance later on, a new "stale object" error is specially thrown when using this feature.

Building on this new Wasm Bindgen feature, layered with our new default panic handler, we also added a proxy wrapper to ensure all top-level exported class instantiations (such as for Rust Durable Objects) are tracked and fully reinitialized when resetting the Wasm instance. This was necessary because the workerd runtime will instantiate exported classes, which would then be associated with the Wasm instance.

This approach now provides full panic recovery for Rust Workers on subsequent requests.

Of course, we never want panics, but when they do happen they are isolated and can be investigated further from the error logs - avoiding broader service disruption.

In the future, full support for recoverable panics could be implemented without needing reinitialization at all, utilizing the WebAssembly Exception Handling proposal, part of the newly announced WebAssembly 3.0 specification. This would allow unwinding panics as normal JS errors, and concurrent requests would no longer fail.

We're making significant improvements to the reliability of Rust Workers. Join us in `#rust-on-workers` on the Cloudflare Developers Discord to stay updated.

We recently increased the available disk space from 8 GB to 20 GB for all plans. Building on that improvement, we’re now doubling the CPU power available for paid plans — from 2 vCPU to 4 vCPU.

These changes continue our focus on making Workers Builds faster and more reliable.

| Metric | Free Plan | Paid Plans |
| ------ | --------- | ---------- |
| CPU    | 2 vCPU    | 4 vCPU     |

- Fast build times: Even single-threaded workloads benefit from having more vCPUs

- 2x faster multi-threaded builds: Tools like esbuild and webpack can now utilize additional cores, delivering near-linear performance scaling

All other build limits, including memory, build minutes, and timeout, remain unchanged.

To prevent the accidental exposure of applications, we've updated how Worker preview URLs (`<PREVIEW>-<WORKER_NAME>.<SUBDOMAIN>.workers.dev`) are handled. We made this change to ensure preview URLs are only active when intentionally configured, improving the default security posture of your Workers.

We performed a one-time update to disable preview URLs for existing Workers where the workers.dev subdomain was also disabled.

Because preview URLs were historically enabled by default, users who had intentionally disabled their workers.dev route may not have realized their Worker was still accessible at a separate preview URL. This update was performed to ensure that using a preview URL is always an intentional, opt-in choice.

If your Worker was affected, its preview URL (`<PREVIEW>-<WORKER_NAME>.<SUBDOMAIN>.workers.dev`) will now direct to an informational page explaining this change.

How to Re-enable Your Preview URL

If your preview URL was disabled, you can re-enable it via the Cloudflare dashboard by navigating to your Worker's Settings page and toggling on the Preview URL.

Alternatively, you can use Wrangler by adding the `preview_urls = true` setting to your Wrangler file and redeploying the Worker.

```jsonc
{
  "preview_urls": true
}
```

```toml
preview_urls = true
```

Note: You can set `preview_urls = true` with any Wrangler version that supports the preview URL flag (v3.91.0+). However, we recommend updating to v4.34.0 or newer, as this version defaults `preview_urls` to false, ensuring preview URLs are always enabled by explicit choice.

Three months ago we announced the public beta of remote bindings for local development. Now, we're excited to say that it's available for everyone in Wrangler, Vite, and Vitest without using an experimental flag!

With remote bindings, you can now connect to deployed resources like R2 buckets and D1 databases while running Worker code on your local machine. This means you can test your local code changes against real data and services, without the overhead of deploying for each iteration.

To enable remote bindings, add `"remote": true` to each binding that you want to rely on a remote resource running on Cloudflare:

```jsonc
{
  "name": "my-worker",
  // Set this to today's date
  "compatibility_date": "2026-05-22",
  "r2_buckets": [
    {
      "bucket_name": "screenshots-bucket",
      "binding": "screenshots_bucket",
      "remote": true,
    },
  ],
}
```

```toml
name = "my-worker"
# Set this to today's date
compatibility_date = "2026-05-22"

[[r2_buckets]]
bucket_name = "screenshots-bucket"
binding = "screenshots_bucket"
remote = true
```

When remote bindings are configured, your Worker still executes locally, but all binding calls are proxied to the deployed resource that runs on Cloudflare's network.

You can try out remote bindings for local development today with:

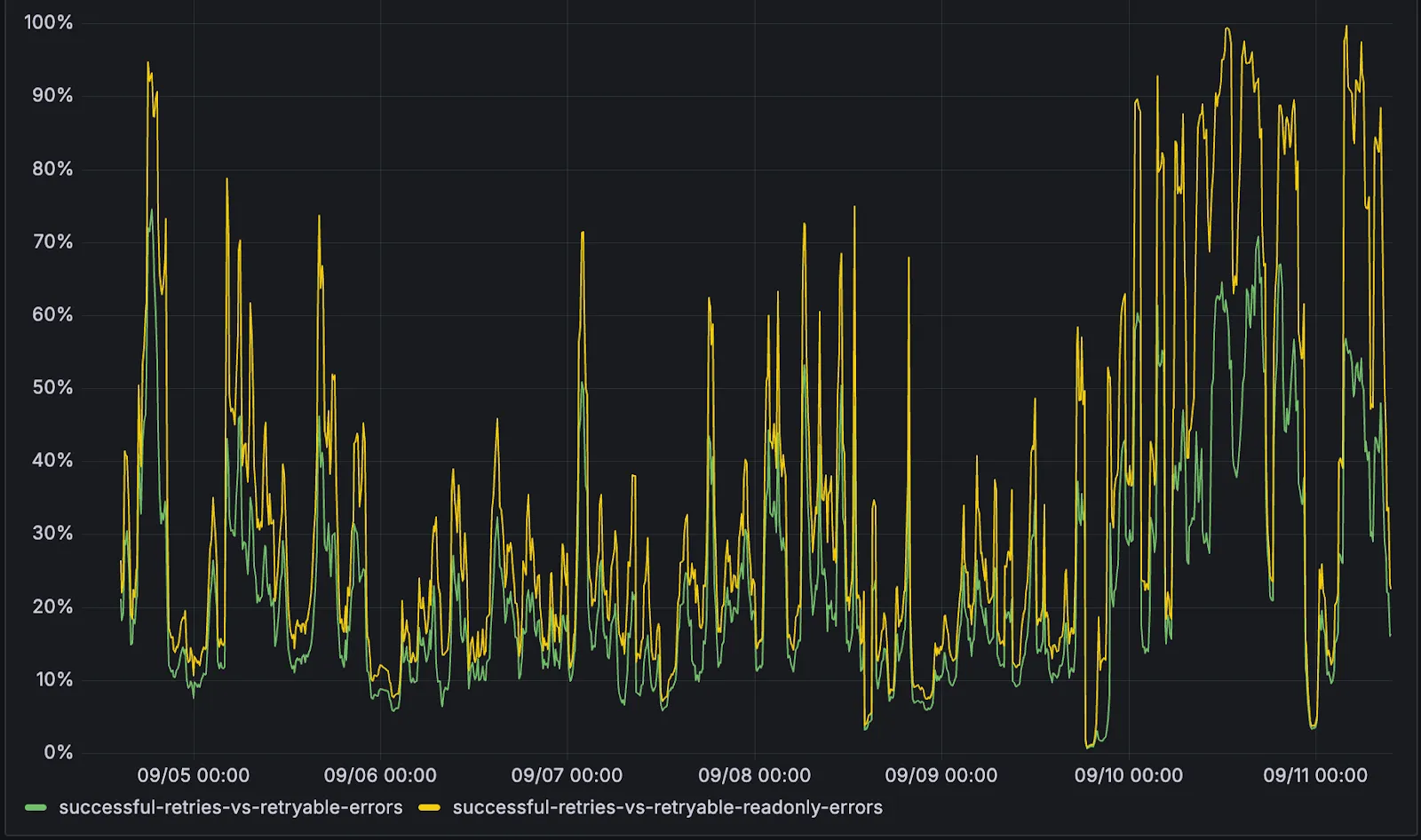

D1 now detects read-only queries and automatically attempts up to two retries to execute those queries in the event of failures with retryable errors. You can access the number of execution attempts in the returned response metadata property `total_attempts`.

At the moment, only read-only queries are retried, that is, queries containing only the following SQLite keywords: `SELECT`, `EXPLAIN`, `WITH`. Queries containing any SQLite keyword that leads to database writes are not retried.

The retry success ratio among read-only retryable errors varies from 5% all the way up to 95%, depending on the underlying error and its duration (like network errors or other internal errors).

The retry success ratio among all retryable errors is lower, indicating that there are write queries that could be retried. Therefore, we recommend that D1 users continue applying retries in their own code for queries that are not read-only but are idempotent according to the business logic of the application.

D1 ensures that any retry attempt does not cause database writes, making the automatic retries safe from side-effects, even if a query causing changes slips through the read-only detection. D1 achieves this by checking for modifications after every query execution, and if any write occurred due to a retry attempt, the query is rolled back.

The read-only query detection heuristics are simple for now, and there is room for improvement to capture more cases of queries that can be retried, so this is just the beginning.
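For the idempotent write queries that D1 does not retry automatically, a small wrapper along the following lines is one way to apply retries in application code. This is a generic sketch rather than a D1 API: `withRetries`, `RetryOptions`, and the `isRetryable` predicate are hypothetical names, and deciding which errors and queries are safe to retry is left to your application.

```typescript
interface RetryOptions {
  maxAttempts: number; // total attempts, including the first one
  baseDelayMs: number; // delay before the first retry, doubled each time
  isRetryable: (error: unknown) => boolean; // application-specific check
}

const sleep = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Runs `operation`, retrying with exponential backoff while the error is
// considered retryable. Only wrap queries that are idempotent for your app.
async function withRetries<T>(
  operation: () => Promise<T>,
  options: RetryOptions,
): Promise<T> {
  let attempt = 1;
  for (;;) {
    try {
      return await operation();
    } catch (error) {
      // Give up when attempts are exhausted or the error is not retryable.
      if (attempt >= options.maxAttempts || !options.isRetryable(error)) {
        throw error;
      }
      await sleep(options.baseDelayMs * 2 ** (attempt - 1));
      attempt++;
    }
  }
}
```

Assuming a D1 binding named `DB`, an idempotent statement could be wrapped as `withRetries(() => env.DB.prepare(sql).run(), options)`, where `sql` and `options` are supplied by your application.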

The number of recent versions available for a Worker rollback has been increased from 10 to 100.

This allows you to:

- Promote any of the 100 most recent versions to be the active deployment.

- Split traffic using gradual deployments between your latest code and any of the 100 most recent versions.

You can do this through the Cloudflare dashboard or with Wrangler's rollback command.

Learn more about versioned deployments and rollbacks.

We've shipped a new release for the Agents SDK bringing full compatibility with AI SDK v5 and introducing automatic message migration that handles all legacy formats transparently.

This release includes improved streaming and tool support, tool confirmation detection (for "human in the loop" systems), enhanced React hooks with automatic tool resolution, improved error handling for streaming responses, and seamless migration utilities that work behind the scenes.

This makes it ideal for building production AI chat interfaces with Cloudflare Workers AI models, agent workflows, human-in-the-loop systems, or any application requiring reliable message handling across SDK versions — all while maintaining backward compatibility.

Additionally, we've updated workers-ai-provider v2.0.0, the official provider for Cloudflare Workers AI models, to be compatible with AI SDK v5.

Creates a new chat interface with enhanced v5 capabilities.

```typescript
// Basic chat setup
const { messages, sendMessage, addToolResult } = useAgentChat({
  agent,
  experimental_automaticToolResolution: true,
  tools,
});

// With custom tool confirmation
const chat = useAgentChat({
  agent,
  experimental_automaticToolResolution: true,
  toolsRequiringConfirmation: ["dangerousOperation"],
});
```

Tools are automatically categorized based on their configuration:

```typescript
const tools = {
  // Auto-executes (has execute function)
  getLocalTime: {
    description: "Get current local time",
    inputSchema: z.object({}),
    execute: async () => new Date().toLocaleString(),
  },

  // Requires confirmation (no execute function)
  deleteFile: {
    description: "Delete a file from the system",
    inputSchema: z.object({
      filename: z.string(),
    }),
  },

  // Server-executed (no client confirmation)
  analyzeData: {
    description: "Analyze dataset on server",
    inputSchema: z.object({ data: z.array(z.number()) }),
    serverExecuted: true,
  },
} satisfies Record<string, AITool>;
```

Send messages using the new v5 format with parts array:

```ts
// Text message
sendMessage({
  role: "user",
  parts: [{ type: "text", text: "Hello, assistant!" }],
});

// Multi-part message with file
sendMessage({
  role: "user",
  parts: [
    { type: "text", text: "Analyze this image:" },
    { type: "image", image: imageData },
  ],
});
```

Simplified logic for detecting pending tool confirmations:

```ts
const pendingToolCallConfirmation = messages.some((m) =>
  m.parts?.some(
    (part) => isToolUIPart(part) && part.state === "input-available",
  ),
);

// Handle tool confirmation
// Note: `part` below stands for the pending tool part matched above;
// look it up from `messages` before calling addToolResult.
if (pendingToolCallConfirmation) {
  await addToolResult({
    toolCallId: part.toolCallId,
    tool: getToolName(part),
    output: "User approved the action",
  });
}
```

Seamlessly handle legacy message formats without code changes.

```ts
// All these formats are automatically converted:

// Legacy v4 string content
const legacyMessage = {
  role: "user",
  content: "Hello world",
};

// Legacy v4 with tool calls
const legacyWithTools = {
  role: "assistant",
  content: "",
  toolInvocations: [
    {
      toolCallId: "123",
      toolName: "weather",
      args: { city: "SF" },
      state: "result",
      result: "Sunny, 72°F",
    },
  ],
};

// Automatically becomes v5 format:
// {
//   role: "assistant",
//   parts: [{
//     type: "tool-call",
//     toolCallId: "123",
//     toolName: "weather",
//     args: { city: "SF" },
//     state: "result",
//     result: "Sunny, 72°F"
//   }]
// }
```

Migrate tool definitions to use the new `inputSchema` property.

```ts
// Before (AI SDK v4)
const tools = {
  weather: {
    description: "Get weather information",
    parameters: z.object({
      city: z.string(),
    }),
    execute: async (args) => {
      return await getWeather(args.city);
    },
  },
};

// After (AI SDK v5)
const tools = {
  weather: {
    description: "Get weather information",
    inputSchema: z.object({
      city: z.string(),
    }),
    execute: async (args) => {
      return await getWeather(args.city);
    },
  },
};
```

Seamless integration with Cloudflare Workers AI models through the updated workers-ai-provider v2.0.0.

Use Cloudflare Workers AI models directly in your agent workflows:

```ts
import { createWorkersAI } from "workers-ai-provider";
import { useAgentChat } from "agents/ai-react";

// Create Workers AI model (v2.0.0 - same API, enhanced v5 internals)
const model = createWorkersAI({
  binding: env.AI,
})("@cf/meta/llama-3.2-3b-instruct");
```

Workers AI models now support v5 file handling with automatic conversion:

```ts
// Send images and files to Workers AI models
sendMessage({
  role: "user",
  parts: [
    { type: "text", text: "Analyze this image:" },
    {
      type: "file",
      data: imageBuffer,
      mediaType: "image/jpeg",
    },
  ],
});
// Workers AI provider automatically converts to proper format
```

Enhanced streaming support with automatic warning detection:

```ts
// Streaming with Workers AI models
const result = await streamText({
  model: createWorkersAI({ binding: env.AI })("@cf/meta/llama-3.2-3b-instruct"),
  messages,
  onChunk: (chunk) => {
    // Enhanced streaming with warning handling
    console.log(chunk);
  },
});
```

Update your imports to use the new v5 types:

```ts
// Before (AI SDK v4)
import type { Message } from "ai";
import { useChat } from "ai/react";

// After (AI SDK v5)
import type { UIMessage } from "ai";
// or alias for compatibility
import type { UIMessage as Message } from "ai";
import { useChat } from "@ai-sdk/react";
```

- Migration Guide ↗ - Comprehensive migration documentation

- AI SDK v5 Documentation ↗ - Official AI SDK migration guide

- An Example PR showing the migration from AI SDK v4 to v5 ↗

- GitHub Issues ↗ - Report bugs or request features

We'd love your feedback! We're particularly interested in feedback on:

- Migration experience - How smooth was the upgrade process?

- Tool confirmation workflow - Does the new automatic detection work as expected?

- Message format handling - Any edge cases with legacy message conversion?

We've updated our "Built with Cloudflare" button to make it easier to share that you're building on Cloudflare with the world. Embed it in your project's README, blog post, or wherever you want to let people know.

Check out the documentation for usage information.

Deploying a static site to Workers is now easier. When you run `wrangler deploy [directory]` or `wrangler deploy --assets [directory]` without an existing configuration file, the Wrangler CLI now guides you through the deployment process with interactive prompts.

Before: Required remembering multiple flags and parameters

```sh
wrangler deploy --assets ./dist --compatibility-date 2025-09-09 --name my-project
```

After: Simple directory deployment with guided setup

```sh
wrangler deploy dist
# Interactive prompts handle the rest as shown in the example flow below
```

Interactive prompts for missing configuration:

- Wrangler detects when you're trying to deploy a directory of static assets

- Prompts you to confirm the deployment type

- Asks for a project name (with smart defaults)

- Automatically sets the compatibility date to today

Automatic configuration generation:

- Creates a `wrangler.jsonc` file with your deployment settings

- Stores your choices for future deployments

- Eliminates the need to remember complex command-line flags

```sh
# Deploy your built static site
wrangler deploy dist

# Wrangler will prompt:
✔ It looks like you are trying to deploy a directory of static assets only. Is this correct? … yes
✔ What do you want to name your project? … my-astro-site

# Automatically generates a wrangler.jsonc file and adds it to your project:
{
  "name": "my-astro-site",
  "compatibility_date": "2025-09-09",
  "assets": {
    "directory": "dist"
  }
}

# Next time you run wrangler deploy, this will use the configuration in your newly generated wrangler.jsonc file
wrangler deploy
```

- You must use Wrangler version 4.24.4 or later in order to use this feature
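To make the generated configuration concrete, here is a minimal TypeScript sketch of the object that the prompts above end up writing to `wrangler.jsonc`. The helper name `buildStaticSiteConfig` is hypothetical and is not part of Wrangler; the use of the UTC date for "today" is also an assumption made for the sake of a deterministic example.

```typescript
// Hypothetical helper, not part of Wrangler. It only mirrors the shape of the
// generated wrangler.jsonc shown in the example flow above.
interface StaticSiteConfig {
  name: string;
  compatibility_date: string; // YYYY-MM-DD
  assets: { directory: string };
}

function buildStaticSiteConfig(
  name: string,
  directory: string,
  now: Date = new Date(),
): StaticSiteConfig {
  return {
    name,
    // Assumption: use the UTC calendar date. toISOString() is always UTC,
    // and its first 10 characters are YYYY-MM-DD.
    compatibility_date: now.toISOString().slice(0, 10),
    assets: { directory },
  };
}

const config = buildStaticSiteConfig(
  "my-astro-site",
  "dist",
  new Date("2025-09-09T12:00:00Z"),
);
console.log(JSON.stringify(config, null, 2));
```

Passing the date in explicitly keeps the sketch testable; omit the third argument to default to the current date.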

You can now upload up to 100,000 static assets per Worker version

- Paid and Workers for Platforms users can now upload up to 100,000 static assets per Worker version, a 5x increase from the previous limit of 20,000.

- Customers on the free plan still have the same limit as before — 20,000 static assets per version of your Worker

- The individual file size limit of 25 MiB remains unchanged for all customers.

This increase allows you to build larger applications with more static assets without hitting limits.

To take advantage of the increased limits, you must use Wrangler version 4.34.0 or higher. Earlier versions of Wrangler will continue to enforce the previous 20,000 file limit.

For more information about Workers static assets, see the Static Assets documentation and Platform Limits.
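As a rough illustration of these limits, the sketch below checks a list of files against the per-version asset count and the per-file size limit before a deploy. The numbers come from this entry; the plan labels, the `AssetFile` shape, and the `checkAssets` helper are hypothetical and do not correspond to anything Wrangler exposes.

```typescript
// Limits as stated in this changelog entry; plan labels are illustrative only.
type Plan = "free" | "paid" | "workers-for-platforms";

const MAX_ASSETS_PER_VERSION: Record<Plan, number> = {
  free: 20_000,
  paid: 100_000,
  "workers-for-platforms": 100_000,
};

const MAX_ASSET_SIZE_BYTES = 25 * 1024 * 1024; // 25 MiB per file, all plans

interface AssetFile {
  path: string;
  sizeBytes: number;
}

// Returns a list of human-readable problems; an empty list means the
// asset set fits within the limits described above.
function checkAssets(plan: Plan, files: AssetFile[]): string[] {
  const problems: string[] = [];
  const limit = MAX_ASSETS_PER_VERSION[plan];
  if (files.length > limit) {
    problems.push(
      `${files.length} assets exceeds the ${limit} limit for the ${plan} plan`,
    );
  }
  for (const file of files) {
    if (file.sizeBytes > MAX_ASSET_SIZE_BYTES) {
      problems.push(`${file.path} is larger than 25 MiB`);
    }
  }
  return problems;
}
```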

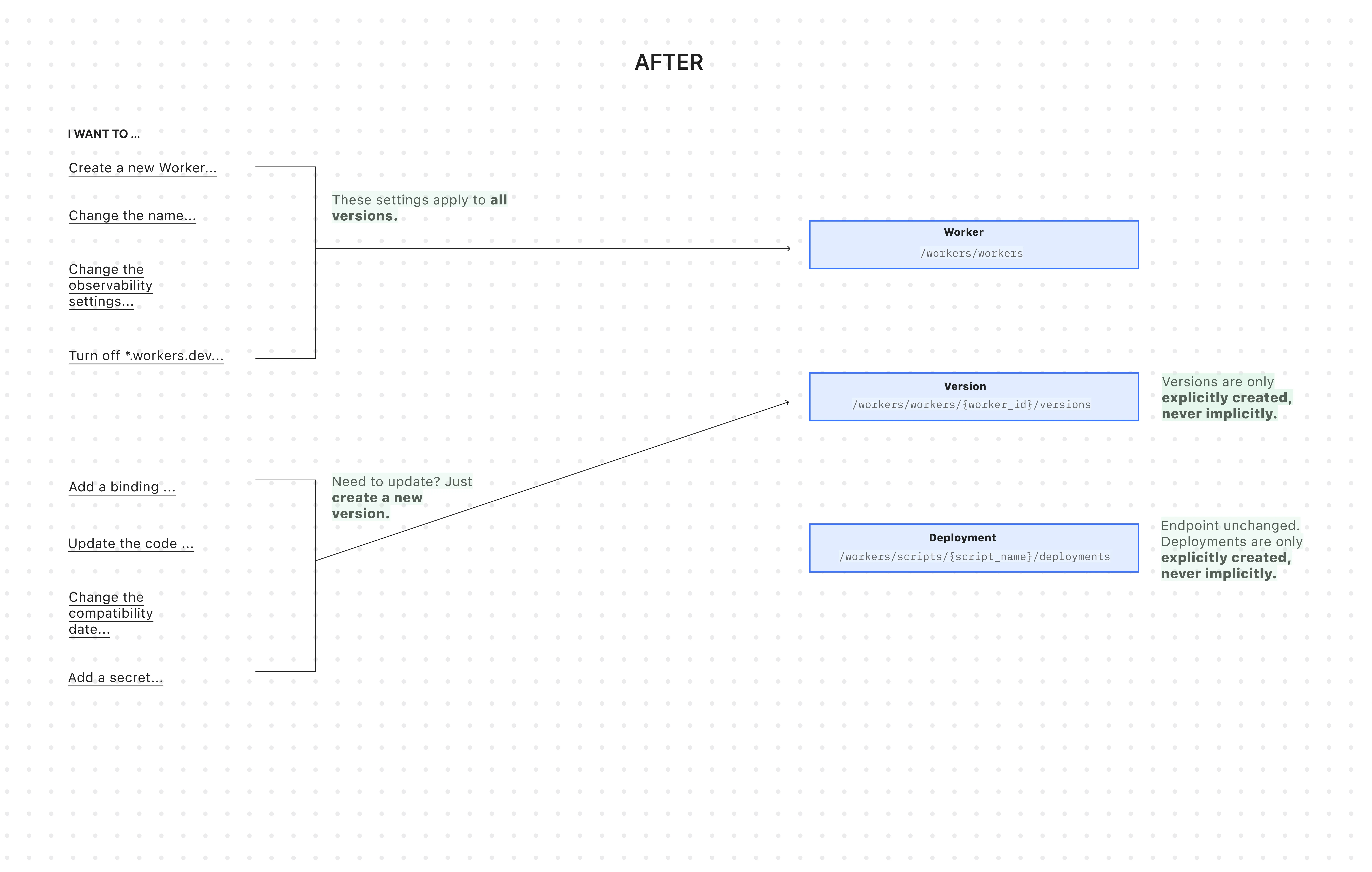

You can now manage Workers, Versions, and Deployments as separate resources with a new, resource-oriented API (Beta).

This new API is supported in the Cloudflare Terraform provider ↗ and the Cloudflare TypeScript SDK ↗, allowing platform teams to manage a Worker's infrastructure in Terraform, while development teams handle code deployments from a separate repository or workflow. We also designed this API with AI agents in mind, as a clear, predictable structure is essential for them to reliably build, test, and deploy applications.

- New beta API endpoints

- Cloudflare TypeScript SDK v5.0.0 ↗

- Cloudflare Go SDK v6.0.0 ↗

- Terraform provider v5.9.0 ↗: `cloudflare_worker` ↗, `cloudflare_worker_version` ↗, and `cloudflare_workers_deployments` ↗ resources.

- See full examples in our Infrastructure as Code (IaC) guide

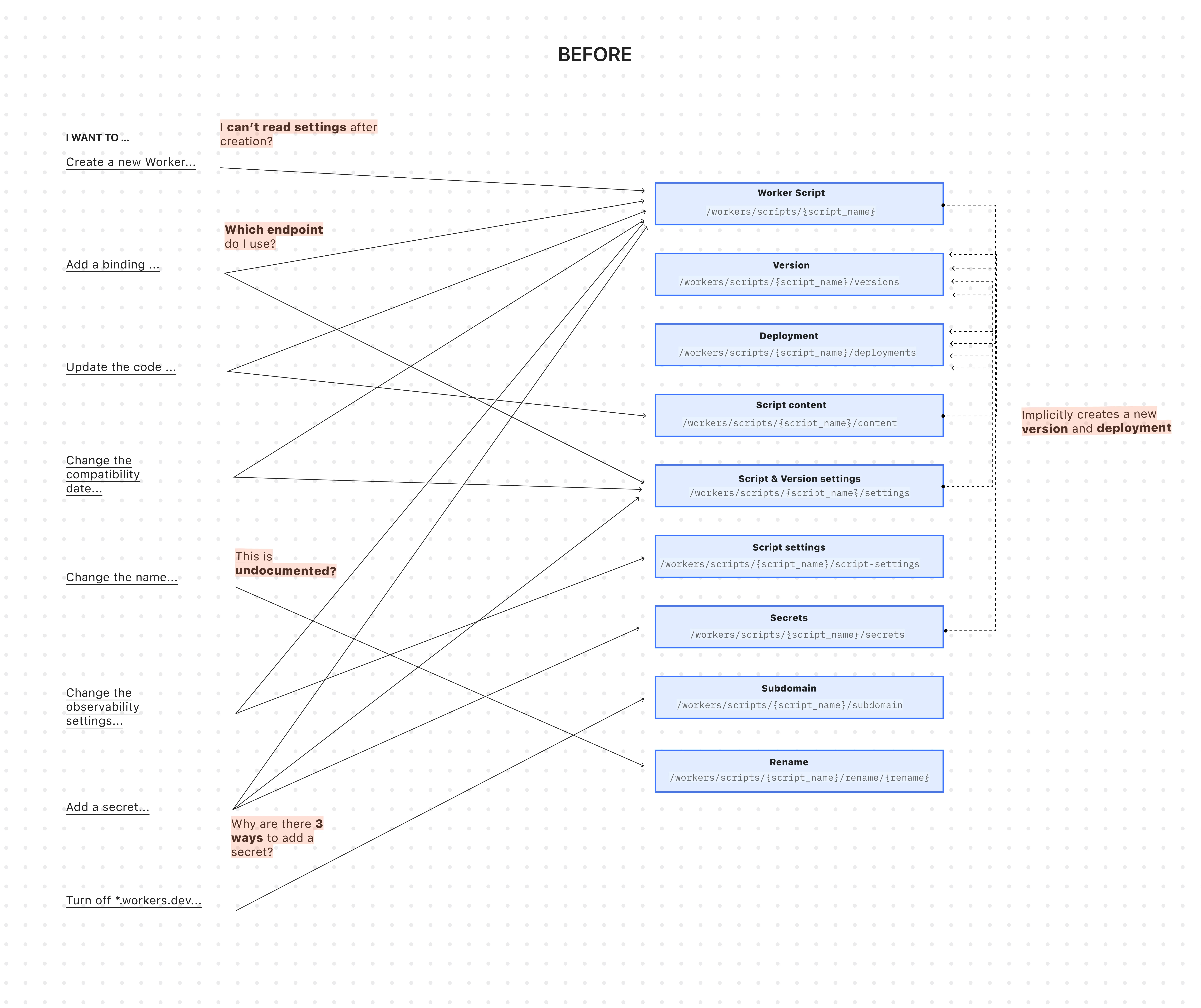

The existing API was originally designed for simple, one-shot script uploads:

```sh
curl -X PUT "https://api.cloudflare.com/client/v4/accounts/$ACCOUNT_ID/workers/scripts/$SCRIPT_NAME" \
  -H "X-Auth-Email: $CLOUDFLARE_EMAIL" \
  -H "X-Auth-Key: $CLOUDFLARE_API_KEY" \
  -H "Content-Type: multipart/form-data" \
  -F 'metadata={"main_module": "worker.js","compatibility_date": "$today$"}' \
  -F "worker.js=@worker.js;type=application/javascript+module"
```

This API worked for creating a basic Worker, uploading all of its code, and deploying it immediately, but came with challenges:

- A Worker couldn't exist without code: To create a Worker, you had to upload its code in the same API request. This meant platform teams couldn't provision Workers with the proper settings, and then hand them off to development teams to deploy the actual code.

- Several endpoints implicitly created deployments: Simple updates like adding a secret or changing a script's content would implicitly create a new version and immediately deploy it.

- Updating a setting was confusing: Configuration was scattered across eight endpoints with overlapping responsibilities. This ambiguity made it difficult for human developers (and even more so for AI agents) to reliably update a Worker via API.

- Scripts used names as primary identifiers: This meant simple renames could turn into a risky migration, requiring you to create a brand new Worker and update every reference. If you were using Terraform, this could inadvertently destroy your Worker altogether.

All endpoints now use simple JSON payloads, with script content embedded as `base64`-encoded strings, a more consistent and reliable approach than the previous `multipart/form-data` format.

- Worker: The parent resource representing your application. It has a stable UUID and holds persistent settings like `name`, `tags`, and `logpush`. You can now create a Worker to establish its identity and settings before any code is uploaded.

- Version: An immutable snapshot of your code and its specific configuration, like bindings and `compatibility_date`. Creating a new version is a safe action that doesn't affect live traffic.

- Deployment: An explicit action that directs traffic to a specific version.
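The three resources above, and the base64 module encoding, could be modeled roughly as follows. This is a sketch only: the interfaces are illustrative and are not the SDK's type definitions (field names are taken from the examples in this post), and `toModule` is a hypothetical helper.

```typescript
import { Buffer } from "node:buffer";

// Illustrative shapes only; not the official SDK types.
interface Worker {
  id: string; // stable UUID
  name: string; // mutable
  tags: string[];
  logpush: boolean;
}

interface VersionModule {
  name: string;
  content_type: string;
  content_base64: string;
}

interface Version {
  id: string;
  compatibility_date: string;
  bindings: unknown[];
  modules: VersionModule[];
}

interface Deployment {
  strategy: "percentage";
  versions: { version_id: string; percentage: number }[];
}

// Hypothetical helper: wraps script source as a JSON-friendly module entry,
// replacing the multipart/form-data upload of the older API.
function toModule(name: string, source: string): VersionModule {
  return {
    name,
    content_type: "application/javascript+module",
    content_base64: Buffer.from(source, "utf8").toString("base64"),
  };
}

const workerModule = toModule(
  "worker.js",
  'export default { fetch() { return new Response("ok"); } };',
);
console.log(workerModule.content_base64.length > 0);
```

Keeping `Worker`, `Version`, and `Deployment` as separate types mirrors the separation described above: settings live on the Worker, code and bindings on the Version, and traffic routing on the Deployment.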

Workers are now standalone resources that can be created and configured without any code. Platform teams can provision Workers with the right settings, then hand them off to development teams for implementation.

```ts
// Step 1: Platform team creates the Worker resource (no code needed)
const worker = await client.workers.beta.workers.create({
  name: "payment-service",
  account_id: "...",
  observability: {
    enabled: true,
  },
});

// Step 2: Development team adds code and creates a version later
const version = await client.workers.beta.workers.versions.create(worker.id, {
  account_id: "...",
  main_module: "worker.js",
  compatibility_date: "$today",
  bindings: [ /*...*/ ],
  modules: [
    {
      name: "worker.js",
      content_type: "application/javascript+module",
      content_base64: Buffer.from(scriptContent).toString("base64"),
    },
  ],
});

// Step 3: Deploy explicitly when ready
const deployment = await client.workers.scripts.deployments.create(worker.name, {
  account_id: "...",
  strategy: "percentage",
  versions: [
    {
      percentage: 100,
      version_id: version.id,
    },
  ],
});
```

If you use Terraform, you can now declare the Worker in your Terraform configuration and manage configuration outside of Terraform in your Worker's `wrangler.jsonc` file and deploy code changes using Wrangler.

```hcl
resource "cloudflare_worker" "my_worker" {
  account_id = "..."
  name       = "my-important-service"
}

# Manage Versions and Deployments here or outside of Terraform
# resource "cloudflare_worker_version" "my_worker_version" {}
# resource "cloudflare_workers_deployment" "my_worker_deployment" {}
```

Creating a version and deploying it are now always explicit, separate actions, never implicit side effects. To update version-specific settings (like bindings), you create a new version with those changes. The existing deployed version remains unchanged until you explicitly deploy the new one.

```sh
# Step 1: Create a new version with updated settings (doesn't affect live traffic)
POST /workers/workers/{id}/versions
{
  "compatibility_date": "$today",
  "bindings": [
    {
      "name": "MY_NEW_ENV_VAR",
      "text": "new_value",
      "type": "plain_text"
    }
  ],
  "modules": [...]
}

# Step 2: Explicitly deploy when ready (now affects live traffic)
POST /workers/scripts/{script_name}/deployments
{
  "strategy": "percentage",
  "versions": [
    {
      "percentage": 100,
      "version_id": "new_version_id"
    }
  ]
}
```

Configuration is now logically divided: Worker settings (like `name` and `tags`) persist across all versions, while Version settings (like `bindings` and `compatibility_date`) are specific to each code snapshot.

```sh
# Worker settings (the parent resource)
PUT /workers/workers/{id}
{
  "name": "payment-service",
  "tags": ["production"],
  "logpush": true
}
```

```sh
# Version settings (the "code")
POST /workers/workers/{id}/versions
{
  "compatibility_date": "$today",
  "bindings": [...],
  "modules": [...]
}
```

The `/workers/workers/` path now supports addressing a Worker by both its immutable UUID and its mutable name.

```sh
# Both work for the same Worker
GET /workers/workers/29494978e03748669e8effb243cf2515  # UUID (stable for automation)
GET /workers/workers/payment-service                   # Name (convenient for humans)
```

This dual approach means:

- Developers can use readable names for debugging.

- Automation can rely on stable UUIDs to prevent errors when Workers are renamed.

- Terraform can rename Workers without destroying and recreating them.
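For automation that accepts either form of identifier, a small helper can tell the two apart and build the request path. This is a sketch under stated assumptions: it assumes Worker UUIDs are 32 lowercase hexadecimal characters, as in the example above, and both function names are hypothetical. A Worker whose name happens to be 32 hex characters would be misclassified, so treat the check as a heuristic.

```typescript
// Assumption: UUIDs look like the example above (32 lowercase hex characters).
const WORKER_UUID_PATTERN = /^[0-9a-f]{32}$/;

// Hypothetical helper: classifies a reference as a UUID or a name.
function describeWorkerRef(ref: string): "uuid" | "name" {
  return WORKER_UUID_PATTERN.test(ref) ? "uuid" : "name";
}

// Hypothetical helper: builds the relative path used in the examples above.
function workerPath(ref: string): string {
  return `/workers/workers/${encodeURIComponent(ref)}`;
}

for (const ref of ["29494978e03748669e8effb243cf2515", "payment-service"]) {
  console.log(`${describeWorkerRef(ref)}: GET ${workerPath(ref)}`);
}
```

Long-lived automation would typically store the UUID once it is known, since it is the form that survives a rename.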

- The pre-existing Workers REST API remains fully supported. Once the new API exits beta, we'll provide a migration timeline with ample notice and comprehensive migration guides.

- Existing Terraform resources and SDK methods will continue to be fully supported through the current major version.

- While the Deployments API currently remains on the `/scripts/` endpoint, we plan to introduce a new Deployments endpoint under `/workers/` to match the new API structure.

JavaScript asset responses have been updated to use the `text/javascript` Content-Type header instead of `application/javascript`. While both MIME types are widely supported by browsers, the HTML Living Standard explicitly recommends `text/javascript` as the preferred type going forward.

This change improves:

- Standards alignment: Ensures consistency with the HTML spec and modern web platform guidance.

- Interoperability: Some developer tools, validators, and proxies expect text/javascript and may warn or behave inconsistently with application/javascript.

- Future-proofing: By following the spec-preferred MIME type, we reduce the risk of deprecation warnings or unexpected behavior in evolving browser environments.

- Consistency: Most frameworks, CDNs, and hosting providers now default to text/javascript, so this change matches common ecosystem practice.

Because all major browsers accept both MIME types, this update is backwards compatible and should not cause breakage.

Users will see this change on the next deployment of their assets.
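If you have tests or tooling that assert on the exact `Content-Type` of JavaScript assets, one way to stay robust across this change is to accept both MIME types and ignore parameters such as `charset`. The helper below is a hypothetical sketch, not part of any Cloudflare API.

```typescript
const JAVASCRIPT_MIME_TYPES = new Set([
  "text/javascript", // now served for JavaScript assets
  "application/javascript", // served before this change
]);

// Hypothetical helper: returns true when a Content-Type header value
// denotes JavaScript under either the old or the new MIME type.
function isJavaScriptContentType(header: string | null): boolean {
  if (!header) return false;
  // Drop parameters such as "; charset=utf-8" and normalize case.
  const [essence = ""] = header.split(";");
  return JAVASCRIPT_MIME_TYPES.has(essence.trim().toLowerCase());
}

console.log(isJavaScriptContentType("text/javascript; charset=utf-8"));
console.log(isJavaScriptContentType("application/javascript"));
console.log(isJavaScriptContentType("text/html"));
```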