AI Gateway now uses the AI REST API on `api.cloudflare.com`. You can call any model, whether from OpenAI, Anthropic, Google, or hosted on Workers AI, through one unified API, using the same endpoints and authentication regardless of provider. Four endpoints are available:

- `POST /ai/run`: universal endpoint for all models and modalities
- `POST /ai/v1/chat/completions`: OpenAI SDK compatible
- `POST /ai/v1/responses`: OpenAI Responses API compatible
- `POST /ai/v1/messages`: Anthropic SDK compatible

```sh
curl -X POST "https://api.cloudflare.com/client/v4/accounts/$CLOUDFLARE_ACCOUNT_ID/ai/v1/chat/completions" \
  --header "Authorization: Bearer $CLOUDFLARE_API_TOKEN" \
  --header "Content-Type: application/json" \
  --data '{
    "model": "openai/gpt-5.5",
    "messages": [{"role": "user", "content": "What is Cloudflare?"}]
  }'
```

All AI Gateway features, including logging, caching, rate limiting, and guardrails, are applied automatically. Third-party models are billed through Unified Billing, so you do not need to manage separate provider API keys.

Third-party model requests are routed through your account's default gateway, which is created automatically on first use. To route requests through a specific gateway, add the `cf-aig-gateway-id` header.

If you are already calling Workers AI models through the existing REST API, that path (`/ai/run/@cf/{model}`) continues to work. To call Workers AI models through AI Gateway, use the `@cf/` model prefix (for example, `@cf/moonshotai/kimi-k2.6`) and include the `cf-aig-gateway-id` header to specify which gateway to route through.

For more details and examples, refer to the REST API documentation.
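As a rough illustration of the routing rules above, the following TypeScript sketch assembles (but does not send) a request for the OpenAI-compatible endpoint. The helper names `buildChatRequest` and `isWorkersAiModel` and the gateway name `my-gateway` are hypothetical; only the URL, the `Authorization` header, the `cf-aig-gateway-id` header, and the `@cf/` prefix come from the text.

```typescript
// Minimal sketch (hypothetical helper names): assemble a request for the unified
// AI REST API's OpenAI-compatible endpoint. Nothing is sent over the network.

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface PreparedRequest {
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Workers AI models use the "@cf/" prefix; IDs such as "openai/gpt-5.5" are
// third-party models billed through Unified Billing.
function isWorkersAiModel(model: string): boolean {
  return model.startsWith("@cf/");
}

function buildChatRequest(
  accountId: string,
  apiToken: string,
  model: string,
  messages: ChatMessage[],
  gatewayId?: string,
): PreparedRequest {
  const headers: Record<string, string> = {
    Authorization: `Bearer ${apiToken}`,
    "Content-Type": "application/json",
  };
  // Without this header, third-party requests use the account's default gateway.
  // For "@cf/" models, it names the gateway to route through.
  if (gatewayId !== undefined) {
    headers["cf-aig-gateway-id"] = gatewayId;
  }
  return {
    url: `https://api.cloudflare.com/client/v4/accounts/${encodeURIComponent(accountId)}/ai/v1/chat/completions`,
    headers,
    body: JSON.stringify({ model, messages }),
  };
}

// Example: route a Workers AI model through a specific (hypothetical) gateway.
const kimiRequest = buildChatRequest(
  "ACCOUNT_ID",
  "API_TOKEN",
  "@cf/moonshotai/kimi-k2.6",
  [{ role: "user", content: "What is Cloudflare?" }],
  "my-gateway",
);
```

To send it, pass `kimiRequest.url` to `fetch()` with `method: "POST"` and the prepared headers and body.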

The latest release of the Agents SDK brings more reliable chat recovery, fixes Agent state synchronization during reconnects, adds durable submissions for Think, exposes routing retry configuration, and adds connection control for Voice agents.

`@cloudflare/ai-chat` now keeps server turns running when a browser or client stream is interrupted. This is useful for long-running AI responses where users refresh the page, close a tab, or temporarily lose connection. Calling `stop()` still cancels the server turn.

Set `cancelOnClientAbort: true` if browser or client aborts should also cancel the server turn:

```typescript
const chat = useAgentChat({
  agent: "assistant",
  name: "user-123",
  cancelOnClientAbort: true,
});
```

Notable bug fixes:

- Chat stream resume negotiation no longer throws when replay races with a closed WebSocket connection.

- Recovered chat continuations no longer leave `useAgentChat` stuck in a streaming state when the original socket disconnects before a terminal response.

- Approval auto-continuation preserves reasoning parts and persists continuation reasoning in the final message.

- `isServerStreaming` now resets correctly when a resumed stream moves from the fallback observer path to a transport-owned stream.

`agents@0.12.4` prevents duplicate initial state frames during WebSocket connection setup. This avoids stale initial state messages overwriting state updates already sent by the client.

Agent recovery is also more reliable when tool calls span a Durable Object restart. Recovery now defers user finish hooks until after agent startup and isolates hook failures, so one failed hook does not block other recovered runs from finalizing.
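The failure isolation described above follows a common pattern: catch each hook's rejection individually instead of letting the first failure short-circuit the batch. The sketch below shows that generic pattern in TypeScript; it is not the Agents SDK's internal code, and `runFinishHooksIsolated`, `FinishHook`, and `HookOutcome` are hypothetical names.

```typescript
// Generic pattern, not the Agents SDK's internal code: run a finish hook for each
// recovered run and record failures instead of letting one rejection stop the rest.

type FinishHook = (runId: string) => Promise<void>;

interface HookOutcome {
  runId: string;
  ok: boolean;
  error?: string;
}

function runFinishHooksIsolated(runIds: string[], hook: FinishHook): Promise<HookOutcome[]> {
  return Promise.all(
    runIds.map((runId) =>
      // Promise.resolve().then(...) also turns a synchronous throw into a rejection.
      Promise.resolve()
        .then(() => hook(runId))
        .then(
          (): HookOutcome => ({ runId, ok: true }),
          (err: unknown): HookOutcome => ({
            runId,
            ok: false,
            error: err instanceof Error ? err.message : String(err),
          }),
        ),
    ),
  );
}
```

Because every per-run promise resolves (never rejects), `Promise.all` always waits for all hooks, and the caller can inspect which runs failed.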

`getAgentByName()` now supports `routingRetry` for transient Durable Object routing failures:

```typescript
import { getAgentByName } from "agents";

const agent = await getAgentByName(env.AssistantAgent, "user-123", {
  routingRetry: {
    maxAttempts: 3,
  },
});
```

`@cloudflare/think` now supports durable programmatic submissions. `submitMessages()` provides durable acceptance, idempotent retries, status inspection, cancellation, and cleanup for server-driven turns that should continue after the caller returns.

`Think.chat()` RPC turns now run inside chat recovery fibers and persist their stream chunks. Interrupted sub-agent turns can recover partial output instead of starting over.

`ChatOptions.tools` has been removed from the TypeScript API. Define durable tools on the child agent or use agent tools for orchestration. Runtime `options.tools` values passed by legacy callers are ignored with a warning.

`@cloudflare/think` no longer applies `pruneMessages({ toolCalls: "before-last-2-messages" })` to model context by default. The previous default could strip client-side tool results from longer multi-turn flows. `truncateOlderMessages` still runs as before, so context cost remains bounded. Subclasses that relied on the old aggressive pruning can opt back in from `beforeTurn`:

```typescript
import { Think } from "@cloudflare/think";
import { pruneMessages } from "ai";

export class MyAgent extends Think<Env> {
  beforeTurn(ctx) {
    return {
      messages: pruneMessages({
        messages: ctx.messages,
        toolCalls: "before-last-2-messages",
      }),
    };
  }
}
```

`@cloudflare/voice` adds an `enabled` option to `useVoiceAgent`. React apps can now delay creating and connecting a `VoiceClient` until prerequisites such as capability tokens are ready:

```typescript
const voice = useVoiceAgent({
  agent: "MyVoiceAgent",
  enabled: Boolean(token),
});
```

This release also fixes Workers AI speech-to-text session edge cases and `withVoice` text streaming from AI SDK `textStream` responses.

Other improvements:

- Streamable HTTP routing: Server-to-client requests now route through the originating POST stream when no standalone SSE stream is available.

- Structured tool output: Tool output shapes are preserved when truncating older messages or oversized persisted rows.

- Non-chat Think tool steps: Think agent-tool children can complete without emitting assistant text and can return structured output through `getAgentToolOutput`.

- Sub-agent schedules: Stale sub-agent schedule rows are pruned when their owning facet registry entry no longer exists.

- `@cloudflare/codemode`: Adds a browser-safe export with an iframe sandbox executor and resolves OpenAPI specs inside the sandbox to avoid Worker Loader RPC size limits.

To update to the latest version:

```sh
npm i agents@latest @cloudflare/ai-chat@latest @cloudflare/think@latest @cloudflare/voice@latest
```

Refer to the Agents API reference and Chat agents documentation for more information.

We are refreshing the Workers AI model catalog to make room for newer releases. Please update your apps to remove references to the models listed below before the deprecation date.

Newer models in the catalog include:

- `@cf/zai-org/glm-4.7-flash`: fast multilingual model with multi-turn tool calling and coding capabilities.
- `@cf/google/gemma-4-26b-a4b-it`: efficient open model with vision and tool calling.
- `@cf/moonshotai/kimi-k2.6`: capable tool-calling and vision model for agentic workloads and coding.

For pricing, refer to the Workers AI pricing page.

We originally stated that Kimi K2.5 would be deprecated on May 10, 2026; however, we have extended the deprecation date to May 30, 2026. On May 30, 2026, requests will be automatically aliased to Kimi K2.6, which has a higher price. Review the `@cf/moonshotai/kimi-k2.6` pricing and model capabilities before May 30, 2026 to ensure that the model suits your needs.

The following models are being deprecated:

- `@cf/moonshotai/kimi-k2.5` → `@cf/moonshotai/kimi-k2.6` (automatic alias)
- `@hf/meta-llama/meta-llama-3-8b-instruct`
- `@cf/meta/llama-3-8b-instruct`
- `@cf/meta/llama-3-8b-instruct-awq`
- `@cf/meta/llama-3.1-8b-instruct`
- `@cf/meta/llama-3.1-8b-instruct-awq`
- `@cf/meta/llama-3.1-70b-instruct`
- `@cf/meta/llama-2-7b-chat-int8`
- `@cf/meta/llama-2-7b-chat-fp16`
- `@cf/mistral/mistral-7b-instruct-v0.1`
- `@hf/mistral/mistral-7b-instruct-v0.2`
- `@hf/google/gemma-7b-it`
- `@cf/google/gemma-3-12b-it`
- `@hf/nousresearch/hermes-2-pro-mistral-7b`
- `@cf/microsoft/phi-2`
- `@cf/defog/sqlcoder-7b-2`
- `@cf/unum/uform-gen2-qwen-500m`
- `@cf/facebook/bart-large-cnn`

The `-fast` and `-lora` variants of models will remain active, including:

- `@cf/meta/llama-3.3-70b-instruct-fp8-fast`
- `@cf/meta/llama-3.1-8b-instruct-fast`
- `@cf/google/gemma-7b-it-lora`
- `@cf/google/gemma-2b-it-lora`
- `@cf/mistral/mistral-7b-instruct-v0.2-lora`
- `@cf/meta-llama/llama-2-7b-chat-hf-lora`
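To find references you still need to remove, you could check the model IDs your app uses against the deprecation list. The TypeScript sketch below is one hypothetical way to do that; `DEPRECATED_MODELS` and `findDeprecatedModels` are illustrative names, and the IDs are copied from the list in this entry. It uses exact matching, so the `-fast` and `-lora` variants that remain active are not flagged.

```typescript
// Hypothetical helper: flag Workers AI model IDs from the deprecation list.
// Only Kimi K2.5 has a stated automatic alias; the others map to null.
const DEPRECATED_MODELS: Record<string, string | null> = {
  "@cf/moonshotai/kimi-k2.5": "@cf/moonshotai/kimi-k2.6",
  "@hf/meta-llama/meta-llama-3-8b-instruct": null,
  "@cf/meta/llama-3-8b-instruct": null,
  "@cf/meta/llama-3-8b-instruct-awq": null,
  "@cf/meta/llama-3.1-8b-instruct": null,
  "@cf/meta/llama-3.1-8b-instruct-awq": null,
  "@cf/meta/llama-3.1-70b-instruct": null,
  "@cf/meta/llama-2-7b-chat-int8": null,
  "@cf/meta/llama-2-7b-chat-fp16": null,
  "@cf/mistral/mistral-7b-instruct-v0.1": null,
  "@hf/mistral/mistral-7b-instruct-v0.2": null,
  "@hf/google/gemma-7b-it": null,
  "@cf/google/gemma-3-12b-it": null,
  "@hf/nousresearch/hermes-2-pro-mistral-7b": null,
  "@cf/microsoft/phi-2": null,
  "@cf/defog/sqlcoder-7b-2": null,
  "@cf/unum/uform-gen2-qwen-500m": null,
  "@cf/facebook/bart-large-cnn": null,
};

interface DeprecatedUse {
  model: string;
  aliasTo: string | null; // automatic replacement, if one was announced
}

// Exact matching, so "-fast" and "-lora" variants are never flagged.
function findDeprecatedModels(modelIds: string[]): DeprecatedUse[] {
  const unique = modelIds.filter((id, index) => modelIds.indexOf(id) === index);
  const found: DeprecatedUse[] = [];
  for (const model of unique) {
    if (Object.prototype.hasOwnProperty.call(DEPRECATED_MODELS, model)) {
      found.push({ model, aliasTo: DEPRECATED_MODELS[model] });
    }
  }
  return found;
}
```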

LoRA models may be deprecated in the future. We will be adding more LoRA capabilities to the catalog, and will communicate when new LoRA models come online to give users time to train new LoRAs before we deprecate old ones.

For the full list of available models, refer to the Workers AI model catalog.

`@cf/moonshotai/kimi-k2.6` is now available on Workers AI, in partnership with Moonshot AI for Day 0 support. Kimi K2.6 is a native multimodal agentic model from Moonshot AI that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration.

Built on a Mixture-of-Experts architecture with 1T total parameters and 32B active per token, Kimi K2.6 delivers frontier-scale intelligence with efficient inference. It scores competitively against GPT-5.4 and Claude Opus 4.6 on agentic and coding benchmarks, including BrowseComp (83.2), SWE-Bench Verified (80.2), and Terminal-Bench 2.0 (66.7).

- 262.1k token context window for retaining full conversation history, tool definitions, and codebases across long-running agent sessions

- Long-horizon coding with significant improvements on complex, end-to-end coding tasks across languages including Rust, Go, and Python

- Coding-driven design that transforms simple prompts and visual inputs into production-ready interfaces and full-stack workflows

- Agent swarm orchestration scaling horizontally to 300 sub-agents executing 4,000 coordinated steps for complex autonomous tasks

- Vision inputs for processing images alongside text

- Thinking mode with configurable reasoning depth

- Multi-turn tool calling for building agents that invoke tools across multiple conversation turns

If you are migrating from Kimi K2.5, note the following API changes:

- K2.6 uses `chat_template_kwargs.thinking` to control reasoning, replacing `chat_template_kwargs.enable_thinking`.

- K2.6 returns reasoning content in the `reasoning` field, replacing `reasoning_content`.
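The two API changes above can be handled with small adapters. The TypeScript sketch below is hypothetical (`migrateToKimiK26` and `readReasoning` are illustrative names), and it assumes both thinking flags are booleans and that the reasoning field sits on a chat completion's response message; check the Kimi K2.6 model page for the exact schema.

```typescript
// Hypothetical migration helpers for moving requests from Kimi K2.5 to Kimi K2.6.
// Assumption: both thinking flags are booleans.

interface ChatTemplateKwargs {
  enable_thinking?: boolean; // read by Kimi K2.5
  thinking?: boolean; // read by Kimi K2.6
}

interface KimiChatInput {
  model: string;
  messages: { role: string; content: string }[];
  chat_template_kwargs?: ChatTemplateKwargs;
}

function migrateToKimiK26(input: KimiChatInput): KimiChatInput {
  const kwargs: ChatTemplateKwargs = { ...input.chat_template_kwargs };
  if (kwargs.enable_thinking !== undefined) {
    // Keep an explicit K2.6 setting if one is already present.
    if (kwargs.thinking === undefined) {
      kwargs.thinking = kwargs.enable_thinking;
    }
    delete kwargs.enable_thinking;
  }
  return { ...input, model: "@cf/moonshotai/kimi-k2.6", chat_template_kwargs: kwargs };
}

// Read reasoning text from a response message, whichever field name it uses.
function readReasoning(message: { reasoning?: string; reasoning_content?: string }): string | undefined {
  return message.reasoning ?? message.reasoning_content;
}
```

Reading both field names lets the same response-handling code work while some traffic still reaches K2.5.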

Use Kimi K2.6 through the Workers AI binding (`env.AI.run()`), the REST API at `/ai/run`, or the OpenAI-compatible endpoint at `/v1/chat/completions`. You can also use AI Gateway with any of these endpoints.

For more information, refer to the Kimi K2.6 model page and pricing.

Cloudflare's network now supports redirecting verified AI training crawlers to canonical URLs when they request deprecated or duplicate pages. When enabled via AI Crawl Control > Quick Actions, AI training crawlers that request a page with a canonical tag pointing elsewhere receive a 301 redirect to the canonical version. Humans, search engine crawlers, and AI Search agents continue to see the original page normally.

This feature uses your existing `<link rel="canonical">` tags. No additional configuration is required beyond enabling the toggle. It is available on Pro, Business, and Enterprise plans at no additional cost.

Refer to the Redirects for AI Training documentation for details.
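To make the decision rule concrete, here is a TypeScript sketch of who gets redirected and when. It is a conceptual model of the documented behavior, not Cloudflare's implementation; the visitor categories and the `canonicalRedirectOutcome` function are hypothetical simplifications.

```typescript
// Conceptual model of the documented behavior, not Cloudflare's implementation.
// The visitor categories are simplified labels chosen for this sketch.

type VisitorKind = "human" | "search-crawler" | "ai-search-agent" | "ai-training-crawler";

interface PageInfo {
  url: string;
  canonicalUrl?: string; // href of the page's <link rel="canonical"> tag, if any
}

type Outcome =
  | { action: "serve" }
  | { action: "redirect"; status: 301; location: string };

function canonicalRedirectOutcome(visitor: VisitorKind, page: PageInfo, enabled: boolean): Outcome {
  const canonical = page.canonicalUrl;
  // Only verified AI training crawlers are redirected, and only when the canonical
  // tag points somewhere other than the requested page.
  if (enabled && visitor === "ai-training-crawler" && canonical !== undefined && canonical !== page.url) {
    return { action: "redirect", status: 301, location: canonical };
  }
  return { action: "serve" };
}
```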

AI Crawl Control now includes new tools to help you prepare your site for the agentic Internet: a web where AI agents are first-class citizens that discover and interact with content differently than human visitors.

The Metrics tab now includes a Content Format chart showing what content types AI systems request versus what your origin serves. Understanding these patterns helps you optimize content delivery for both human and agent consumption.

The Robots.txt tab has been renamed to Directives and now includes a link to check your site's Agent Readiness score.

Refer to our blog post on preparing for the agentic Internet for more on why these capabilities matter.

New AI Search instances created after today will work differently. New instances come with built-in storage and a vector index, so you can upload a file, have it indexed immediately, and search it right away.

Additionally, new Workers bindings are now available for AI Search. The new namespace binding lets you create and manage instances at runtime, and the cross-instance search API lets you query across multiple instances in one call.

All new instances now come with built-in storage, which allows you to upload files directly using the Items API or the dashboard. No R2 buckets to set up, no external data sources to connect first.

```typescript
const instance = env.AI_SEARCH.get("my-instance");

// Upload and wait for indexing to complete
const item = await instance.items.uploadAndPoll("faq.md", content);

// Search immediately after indexing
const results = await instance.search({
  messages: [{ role: "user", content: "onboarding guide" }],
});
```

The new `ai_search_namespaces` binding replaces the previous `env.AI.autorag()` API provided through the `AI` binding. It gives your Worker access to all instances within a namespace and lets you create, update, and delete instances at runtime without redeploying.

```jsonc
// wrangler.jsonc
{
  "ai_search_namespaces": [
    {
      "binding": "AI_SEARCH",
      "namespace": "default",
    },
  ],
}
```

```typescript
// Create an instance at runtime
const instance = await env.AI_SEARCH.create({
  id: "my-instance",
});
```

For migration details, refer to Workers binding migration. For more on namespaces, refer to Namespaces.

Within the new AI Search binding, you now have access to a Search and Chat API on the namespace level. Pass an array of instance IDs and get one ranked list of results back.

```typescript
const results = await env.AI_SEARCH.search({
  messages: [{ role: "user", content: "What is Cloudflare?" }],
  ai_search_options: {
    instance_ids: ["product-docs", "customer-abc123"],
  },
});
```

Refer to Namespace-level search for details.

AI Search now supports hybrid search and relevance boosting, giving you more control over how results are found and ranked.

Hybrid search combines vector (semantic) search with BM25 keyword search in a single query. Vector search finds chunks with similar meaning, even when the exact words differ. Keyword search matches chunks that contain your query terms exactly. When you enable hybrid search, both run in parallel and the results are fused into a single ranked list.

You can configure the tokenizer (`porter` for natural language, `trigram` for code), keyword match mode (`and` for precision, `or` for recall), and fusion method (`rrf` or `max`) per instance:

```typescript
const instance = await env.AI_SEARCH.create({
  id: "my-instance",
  index_method: { vector: true, keyword: true },
  fusion_method: "rrf",
  indexing_options: { keyword_tokenizer: "porter" },
  retrieval_options: { keyword_match_mode: "and" },
});
```

Refer to Search modes for an overview and Hybrid search for configuration details.
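Reciprocal rank fusion (the `rrf` option) is a standard way to merge the vector and keyword result lists. The sketch below shows the textbook formula, score(d) = Σ 1 / (k + rank(d)), on chunk IDs. It is not AI Search's internal code: the constant k = 60 is an assumption taken from common practice, and the `max` fusion method is not shown.

```typescript
// Textbook Reciprocal Rank Fusion: each list adds 1 / (k + rank) for every item it
// contains, with ranks starting at 1. k = 60 is a commonly used default; the
// constant AI Search uses is an assumption here.
function reciprocalRankFusion(rankedLists: string[][], k = 60): { id: string; score: number }[] {
  const scores = new Map<string, number>();
  for (const list of rankedLists) {
    list.forEach((id, index) => {
      const rank = index + 1;
      scores.set(id, (scores.get(id) ?? 0) + 1 / (k + rank));
    });
  }
  // Highest fused score first.
  return Array.from(scores, ([id, score]) => ({ id, score })).sort((a, b) => b.score - a.score);
}

// Example: chunk IDs ranked by vector search and by BM25 keyword search.
const fused = reciprocalRankFusion([
  ["chunk-a", "chunk-b", "chunk-c"], // vector (semantic) ranking
  ["chunk-c", "chunk-a", "chunk-d"], // keyword (BM25) ranking
]);
```

In this example `chunk-a` ranks first because it places highly in both lists, and `chunk-c` overtakes `chunk-b` because the keyword list ranks it first. RRF only uses ranks, so it needs no normalization between cosine similarities and BM25 scores.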

Relevance boosting lets you nudge search rankings based on document metadata. For example, you can prioritize recent documents by boosting on `timestamp`, or surface high-priority content by boosting on a custom metadata field like `priority`.

Configure up to 3 boost fields per instance or override them per request:

```typescript
const results = await env.AI_SEARCH.get("my-instance").search({
  messages: [{ role: "user", content: "deployment guide" }],
  ai_search_options: {
    retrieval: {
      boost_by: [
        { field: "timestamp", direction: "desc" },
        { field: "priority", direction: "desc" },
      ],
    },
  },
});
```

Refer to Relevance boosting for configuration details.

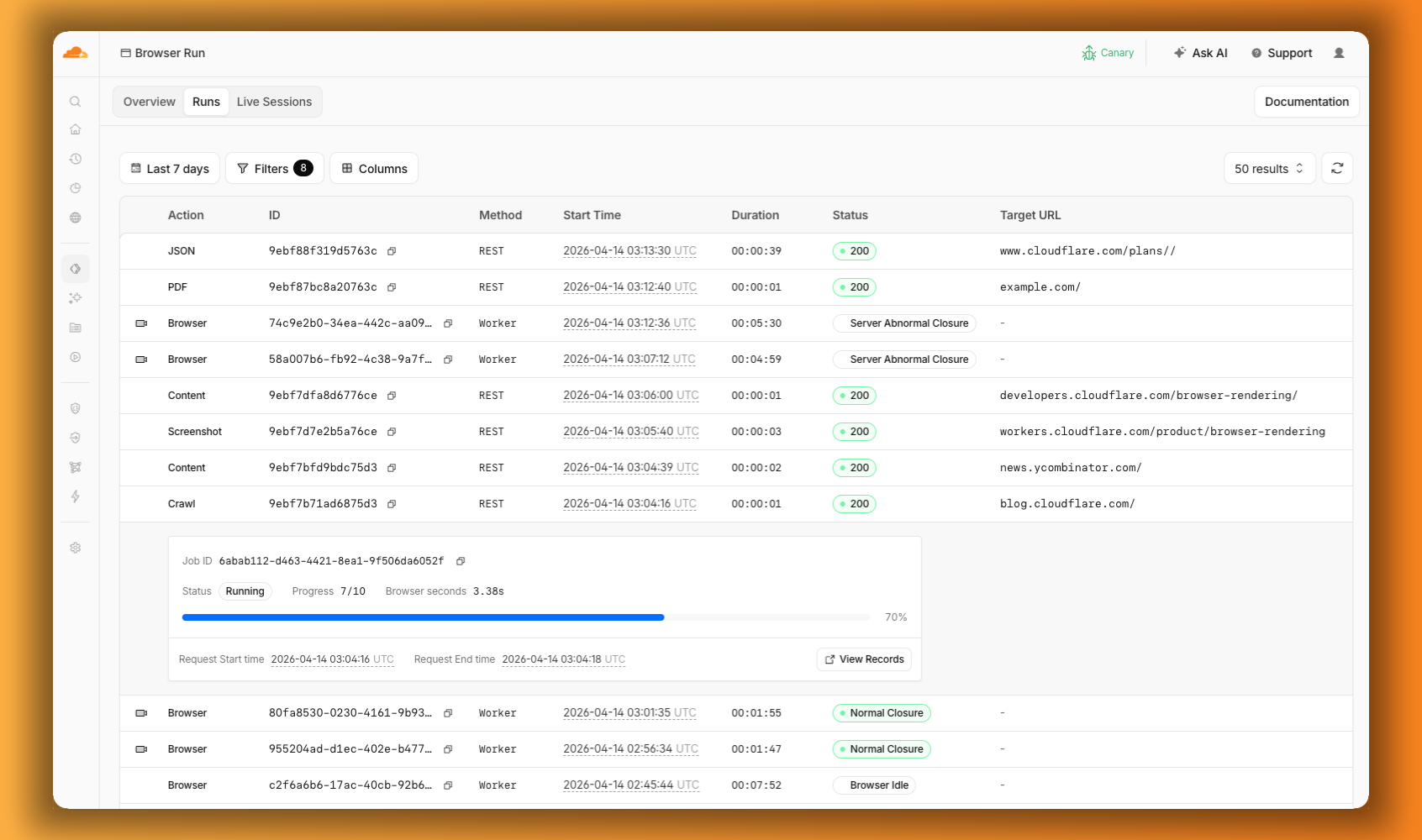

We are renaming Browser Rendering to Browser Run. The name Browser Rendering never fully captured what the product does. Browser Run lets you run full browser sessions on Cloudflare's global network, drive them with code or AI, record and replay sessions, crawl pages for content, debug in real time, and let humans intervene when your agent needs help.

Along with the rename, we have increased limits for Workers Paid plans and redesigned the Browser Run dashboard.

We have raised limits for Workers Paid plan users, including 4x higher concurrency:

- Concurrent browsers per account: 30 → 120

- New browser instances: 30 per minute → 1 per second

- REST API rate limits: recently increased from 3 to 10 requests per second

Rate limits across the limits page are now expressed in per-second terms, matching how they are enforced. No action is needed to benefit from the higher limits.
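If you pace requests on the client side, per-second limits map naturally onto a token bucket. The sketch below is a hypothetical helper (`PerSecondLimiter` is not part of any Cloudflare SDK) that takes the current time as a parameter so it stays deterministic; it assumes a burst of up to one second's worth of calls is acceptable, which may not match exactly how Browser Run enforces its limits.

```typescript
// Hypothetical client-side helper: a token bucket that paces calls to stay under a
// per-second limit (for example, 1 new browser instance per second or 10 REST API
// requests per second). It does not call any Cloudflare API.
class PerSecondLimiter {
  private readonly ratePerSecond: number;
  private tokens: number;
  private lastRefillMs: number;

  constructor(ratePerSecond: number, nowMs: number) {
    this.ratePerSecond = ratePerSecond;
    this.tokens = ratePerSecond; // start full: allow up to one second's worth at once
    this.lastRefillMs = nowMs;
  }

  // Returns 0 and consumes a token if a call may go now; otherwise returns the
  // number of milliseconds until a token will be available.
  reserve(nowMs: number): number {
    const elapsedSeconds = Math.max(0, nowMs - this.lastRefillMs) / 1000;
    this.tokens = Math.min(this.ratePerSecond, this.tokens + elapsedSeconds * this.ratePerSecond);
    this.lastRefillMs = nowMs;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return 0;
    }
    return Math.ceil(((1 - this.tokens) / this.ratePerSecond) * 1000);
  }
}
```

A caller would pass `Date.now()` to `reserve()` and wait the returned number of milliseconds before retrying.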

The redesigned dashboard now shows every request in a single Runs tab, not just browser sessions but also quick actions like screenshots, PDFs, markdown, and crawls. Filter by endpoint, view target URLs, status, and duration, and expand any row for more detail.

We are also shipping several new features:

- Live View, Human in the Loop, and Session Recordings - See what your agent is doing in real time, let humans step in when automation hits a wall, and replay any session after it ends.

- WebMCP - Websites can expose structured tools for AI agents to discover and call directly, replacing slow screenshot-analyze-click loops.

For the full story, read our Agents Week blog post, Browser Run: Give your agents a browser.

When browser automation fails or behaves unexpectedly, it can be hard to understand what happened. We are shipping three new features in Browser Run (formerly Browser Rendering) to help:

- Live View for real-time visibility

- Human in the Loop for human intervention

- Session Recordings for replaying sessions after they end

Live View lets you see what your agent is doing in real time. The page, DOM, console, and network requests are all visible for any active browser session. Access Live View from the Cloudflare dashboard, via the hosted UI at `live.browser.run`, or using native Chrome DevTools.

When your agent hits a snag like a login page or unexpected edge case, it can hand off to a human instead of failing. With Human in the Loop, a human steps into the live browser session through Live View, resolves the issue, and hands control back to the script.

Today, you can step in by opening the Live View URL for any active session. Next, we are adding a handoff flow where the agent can signal that it needs help, notify a human to step in, then hand control back to the agent once the issue is resolved.

Session Recordings records DOM state so you can replay any session after it ends. Enable recordings by passing `recording: true` when launching a browser. After the session closes, view the recording in the Cloudflare dashboard under Browser Run > Runs, or retrieve it via the API using the session ID. Next, we are adding the ability to inspect DOM state and console output at any point during the recording.

To get started, refer to the documentation for Live View, Human in the Loop, and Session Recording.

Browser Run (formerly Browser Rendering) now supports WebMCP (Web Model Context Protocol), a new browser API from the Google Chrome team.

The Internet was built for humans, so navigating as an AI agent today is unreliable. WebMCP lets websites expose structured tools for AI agents to discover and call directly. Instead of slow screenshot-analyze-click loops, agents can call website functions like `searchFlights()` or `bookTicket()` with typed parameters, making browser automation faster, more reliable, and less fragile.

With WebMCP, you can:

- Discover website tools: Use `navigator.modelContextTesting.listTools()` to see available actions on any WebMCP-enabled site.

- Execute tools directly: Call `navigator.modelContextTesting.executeTool()` with typed parameters.

- Handle human-in-the-loop interactions: Some tools pause for user confirmation before completing sensitive actions.

WebMCP requires Chrome beta features. We have an experimental pool with browser instances running Chrome beta so you can test emerging browser features before they reach stable Chrome. To start a WebMCP session, add `lab=true` to your `/devtools/browser` request:

```sh
curl -X POST "https://api.cloudflare.com/client/v4/accounts/{account_id}/browser-rendering/devtools/browser?lab=true&keep_alive=300000" \
  -H "Authorization: Bearer {api_token}"
```

Combined with the recently launched CDP endpoint, AI agents can also use WebMCP. Connect an MCP client to Browser Run via CDP, and your agent can discover and call website tools directly.

For a step-by-step guide, refer to the WebMCP documentation.


We are excited to announce two major capability upgrades for Agent Lee, the AI co-pilot built directly into the Cloudflare dashboard. Agent Lee is designed to understand your specific account configuration, and with this release, it moves from a passive advisor to an active assistant that can help you manage your infrastructure and visualize your data through natural language.

Agent Lee can now perform changes on your behalf across your Cloudflare account. Whether you need to update DNS records, modify SSL/TLS settings, or configure Workers routes, you can simply ask.

To ensure security and accuracy, every write operation requires explicit user approval. Before any change is committed, Agent Lee will present a summary of the proposed action in plain language. No action is taken until you select Confirm, and this approval requirement is enforced at the infrastructure level to prevent unauthorized changes.

Example requests:

- "Add an A record for blog.example.com pointing to 192.0.2.10."

- "Enable Always Use HTTPS on my zone."

- "Set the SSL mode for example.com to Full (strict)."

Understanding your traffic and security trends is now as easy as asking a question. Agent Lee now features Generative UI, allowing it to render inline charts and structured data visualizations directly within the chat interface using your actual account telemetry.

Example requests:

- "Show me a chart of my traffic over the last 7 days."

- "What does my error rate look like for the past 24 hours?"

- "Graph my cache hit rate for example.com this week."

These features are currently available in Beta for all users on the Free plan. To get started, log in to the Cloudflare dashboard and select Ask AI in the upper right corner.

To learn more about how to interact with your account using AI, refer to the Agent Lee documentation.

Browser Rendering now supports `wrangler browser` commands, letting you create, manage, and view browser sessions directly from your terminal, streamlining your workflow. Since Wrangler handles authentication, you do not need to pass API tokens in your commands.

The following commands are available:

| Command | Description |
| --- | --- |
| `wrangler browser create` | Create a new browser session |
| `wrangler browser close` | Close a session |
| `wrangler browser list` | List active sessions |
| `wrangler browser view` | View a live browser session |

The `create` command spins up a browser instance on Cloudflare's network and returns a session URL. Once created, you can connect to the session using any CDP-compatible client like Puppeteer, Playwright, or MCP clients to automate browsing, scrape content, or debug remotely.

```sh
wrangler browser create
```

Use `--keepAlive` to set the session keep-alive duration (60-600 seconds):

```sh
wrangler browser create --keepAlive 300
```

The `view` command auto-selects when only one session exists, or prompts for selection when multiple sessions are available.

All commands support `--json` for structured output, and because these are CLI commands, you can incorporate them into scripts to automate session management.

For full usage details, refer to the Wrangler commands documentation.

Outbound Workers for Sandboxes and Containers now support zero-trust credential injection, TLS interception, allow/deny lists, and dynamic per-instance egress policies. These features give platforms running agentic workloads full control over what leaves the sandbox, without exposing secrets to untrusted workloads, like user-generated code or coding agents.

Because outbound handlers run in the Workers runtime, outside the sandbox, they can hold secrets the sandbox never sees. A sandboxed workload can make a plain request, and credentials are transparently attached before a request is forwarded upstream.

For instance, you could run an agent in a sandbox and ensure that any requests it makes to GitHub are authenticated, while the agent itself never has access to the credentials:

```typescript
export class MySandbox extends Sandbox {}

MySandbox.outboundByHost = {
  "github.com": (request: Request, env: Env, ctx: OutboundHandlerContext) => {
    // Attach the secret outside the sandbox, then forward the request upstream
    const requestWithAuth = new Request(request);
    requestWithAuth.headers.set("x-auth-token", env.SECRET);
    return fetch(requestWithAuth);
  },
};
```

You can easily inject unique credentials for different instances by using `ctx.containerId`:

```typescript
MySandbox.outboundByHost = {
  "my-internal-vcs.dev": async (
    request: Request,
    env: Env,
    ctx: OutboundHandlerContext,
  ) => {
    // Look up the credential that belongs to this specific sandbox instance
    const authKey = await env.KEYS.get(ctx.containerId);
    if (authKey === null) {
      return new Response(null, { status: 403 });
    }
    const requestWithAuth = new Request(request);
    requestWithAuth.headers.set("x-auth-token", authKey);
    return fetch(requestWithAuth);
  },
};
```

No token is ever passed into the sandbox. You can rotate secrets in the Worker environment and every request will pick them up immediately.

Outbound Workers now intercept HTTPS traffic. A unique ephemeral certificate authority (CA) and private key are created for each sandbox instance. The CA is placed into the sandbox and trusted by default. The ephemeral private key never leaves the container runtime sidecar process and is never shared across instances.

With TLS interception active, outbound Workers can act as a transparent proxy for both HTTP and HTTPS traffic.

Easily filter outbound traffic with `allowedHosts` and `deniedHosts`. When `allowedHosts` is set, it becomes a deny-by-default allowlist. Both properties support glob patterns.

```typescript
export class MySandbox extends Sandbox {
  allowedHosts = ["github.com", "npmjs.org"];
}
```

Define named outbound handlers, then apply or remove them at runtime using `setOutboundHandler()` or `setOutboundByHost()`. This lets you change egress policy for a running sandbox without restarting it.

```typescript
export class MySandbox extends Sandbox {}

MySandbox.outboundHandlers = {
  allowHosts: async (req: Request, env: Env, ctx: OutboundHandlerContext) => {
    const url = new URL(req.url);
    if (ctx.params.allowedHostnames.includes(url.hostname)) {
      return fetch(req);
    }
    return new Response(null, { status: 403 });
  },
  noHttp: async () => {
    return new Response(null, { status: 403 });
  },
};
```

Apply handlers programmatically from your Worker:

```typescript
const sandbox = getSandbox(env.Sandbox, userId);

// Open network for setup
await sandbox.setOutboundHandler("allowHosts", {
  allowedHostnames: ["github.com", "npmjs.org"],
});
await sandbox.exec("npm install");

// Lock down after setup
await sandbox.setOutboundHandler("noHttp");
```

Handlers accept `params`, so you can customize behavior per instance without defining separate handler functions.

Upgrade to `@cloudflare/containers@0.3.0` or `@cloudflare/sandbox@0.8.9` to use these features. For more details, refer to Sandbox outbound traffic and Container outbound traffic.
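To make the `allowedHosts` and `deniedHosts` semantics concrete, here is a conceptual TypeScript model of how a hostname might be evaluated. It is not the Sandbox SDK's code, and several details are assumptions labeled in the comments: `*` matches any run of characters, matching is case-insensitive, and a `deniedHosts` match takes precedence over an `allowedHosts` match. `isEgressAllowed` and `hostGlobToRegExp` are hypothetical names.

```typescript
// Conceptual model of allowedHosts / deniedHosts filtering, not the Sandbox SDK's code.
// Assumptions: "*" matches any run of characters, matching is case-insensitive,
// and a deniedHosts match takes precedence over an allowedHosts match.

function hostGlobToRegExp(pattern: string): RegExp {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp(`^${escaped}$`, "i");
}

function isEgressAllowed(hostname: string, allowedHosts?: string[], deniedHosts?: string[]): boolean {
  const matches = (patterns: string[]) => patterns.some((p) => hostGlobToRegExp(p).test(hostname));
  if (deniedHosts && matches(deniedHosts)) return false;
  // When allowedHosts is set, anything not listed is denied by default.
  if (allowedHosts) return matches(allowedHosts);
  return true;
}
```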

Browser Rendering now exposes the Chrome DevTools Protocol (CDP), the low-level protocol that powers browser automation. The growing ecosystem of CDP-based agent tools, along with existing CDP automation scripts, can now use Browser Rendering directly.

Any CDP-compatible client, including Puppeteer and Playwright, can connect from any environment, whether that is Cloudflare Workers, your local machine, or a cloud environment. All you need is a Cloudflare API token.

For any existing CDP script, switching to Browser Rendering is a one-line change:

```javascript
// ES module, so top-level await is allowed
import puppeteer from "puppeteer-core";

const ACCOUNT_ID = process.env.CLOUDFLARE_ACCOUNT_ID;
const API_TOKEN = process.env.CLOUDFLARE_API_TOKEN;

const browser = await puppeteer.connect({
  browserWSEndpoint: `wss://api.cloudflare.com/client/v4/accounts/${ACCOUNT_ID}/browser-rendering/devtools/browser?keep_alive=600000`,
  headers: { Authorization: `Bearer ${API_TOKEN}` },
});
const page = await browser.newPage();
await page.goto("https://example.com");
console.log(await page.title());
await browser.close();
```

Additionally, MCP clients like Claude Desktop, Claude Code, Cursor, and OpenCode can now use Browser Rendering as their remote browser via the `chrome-devtools-mcp` package.

Here is an example of how to configure Browser Rendering for Claude Desktop:

```json
{
  "mcpServers": {
    "browser-rendering": {
      "command": "npx",
      "args": [
        "-y",
        "chrome-devtools-mcp@latest",
        "--wsEndpoint=wss://api.cloudflare.com/client/v4/accounts/<ACCOUNT_ID>/browser-rendering/devtools/browser?keep_alive=600000",
        "--wsHeaders={\"Authorization\":\"Bearer <API_TOKEN>\"}"
      ]
    }
  }
}
```

To get started, refer to the CDP documentation.
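Because the same endpoint appears with different query parameters across these examples, a small builder can keep them consistent. The TypeScript sketch below is a hypothetical helper (`devtoolsBrowserEndpoint` is not an official API); the path and the `keep_alive` and `lab` parameters come from the examples in this document, and `keep_alive` is assumed to be in milliseconds (600000 = 10 minutes).

```typescript
// Hypothetical helper that builds the Browser Run devtools endpoint used in the
// examples. keep_alive is assumed to be in milliseconds.

interface DevtoolsEndpointOptions {
  keepAliveMs?: number;
  lab?: boolean; // experimental Chrome beta pool, used for WebMCP
  scheme?: "wss" | "https"; // wss for CDP clients, https for the REST request
}

function devtoolsBrowserEndpoint(accountId: string, options: DevtoolsEndpointOptions = {}): string {
  const params: string[] = [];
  if (options.lab) params.push("lab=true");
  if (options.keepAliveMs !== undefined) params.push(`keep_alive=${options.keepAliveMs}`);
  const query = params.length > 0 ? `?${params.join("&")}` : "";
  const scheme = options.scheme ?? "wss";
  return `${scheme}://api.cloudflare.com/client/v4/accounts/${encodeURIComponent(accountId)}/browser-rendering/devtools/browser${query}`;
}
```

The `wss` result can be passed as `browserWSEndpoint` to `puppeteer.connect()`, and the `https` variant with `lab: true` reproduces the WebMCP request URL.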

AI Search now supports CSS content selectors for website data sources. You can now define which parts of a crawled page are extracted and indexed by specifying CSS selectors paired with URL glob patterns.

Content selectors solve the problem of indexing only relevant content while ignoring navigation, sidebars, footers, and other boilerplate. When a page URL matches a glob pattern, only elements matching the corresponding CSS selector are extracted and converted to Markdown for indexing.

Configure content selectors via the dashboard or API:

```sh
curl "https://api.cloudflare.com/client/v4/accounts/{account_id}/ai-search/instances" \
  -H "Authorization: Bearer {api_token}" \
  -H "Content-Type: application/json" \
  -d '{
    "id": "my-ai-search",
    "source": "https://example.com",
    "type": "web-crawler",
    "source_params": {
      "web_crawler": {
        "parse_options": {
          "content_selector": [
            {
              "path": "**/blog/**",
              "selector": "article .post-body"
            }
          ]
        }
      }
    }
  }'
```

Selectors are evaluated in order, and the first matching pattern wins. You can define up to 10 content selector entries per instance.

For configuration details and examples, refer to the content selectors documentation.
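The first-match-wins rule can be sketched as a loop over the entries in order. The TypeScript below is a conceptual model, not AI Search's code, and its glob semantics are assumptions: patterns are matched against the URL path, `**` may span `/` separators, and `*` stays within one path segment. `selectorForPath` and `pathGlobToRegExp` are hypothetical names.

```typescript
// Conceptual sketch of first-match-wins content selector resolution; not AI Search's code.
// Assumptions: patterns match the URL path, "**" may span "/" separators, "*" stays
// within one path segment, and the 10-entry limit is checked client-side.

interface ContentSelectorEntry {
  path: string; // glob pattern, e.g. "**/blog/**"
  selector: string; // CSS selector, e.g. "article .post-body"
}

const MAX_CONTENT_SELECTORS = 10;

function pathGlobToRegExp(glob: string): RegExp {
  let source = "";
  for (let i = 0; i < glob.length; i++) {
    const ch = glob.charAt(i);
    if (ch === "*" && glob.charAt(i + 1) === "*") {
      source += ".*"; // "**": any characters, including "/"
      i++;
    } else if (ch === "*") {
      source += "[^/]*"; // "*": any characters except "/"
    } else {
      source += ch.replace(/[.+?^${}()|[\]\\]/g, "\\$&");
    }
  }
  return new RegExp(`^${source}$`);
}

// Returns the selector of the first entry whose pattern matches, or undefined when
// no entry matches (the page is then presumably extracted without a selector).
function selectorForPath(urlPath: string, entries: ContentSelectorEntry[]): string | undefined {
  if (entries.length > MAX_CONTENT_SELECTORS) {
    throw new RangeError(`At most ${MAX_CONTENT_SELECTORS} content selector entries are allowed`);
  }
  for (const entry of entries) {
    if (pathGlobToRegExp(entry.path).test(urlPath)) {
      return entry.selector;
    }
  }
  return undefined;
}
```

Because evaluation stops at the first match, a broad catch-all pattern such as `**` belongs at the end of the list.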

AI Search now supports four additional Workers AI models across text generation and embedding.

Text generation models:

| Model | Context window (tokens) |
| --- | --- |
| `@cf/zai-org/glm-4.7-flash` | 131,072 |
| `@cf/qwen/qwen3-30b-a3b-fp8` | 32,000 |

GLM-4.7-Flash is a lightweight model from Zhipu AI with a 131,072-token context window, suitable for long-document summarization and retrieval tasks. Qwen3-30B-A3B is a mixture-of-experts model from Alibaba that activates only 3 billion parameters per forward pass, keeping inference fast while maintaining strong response quality.

Embedding models:

| Model | Vector dimensions | Max input tokens | Similarity metric |
| --- | --- | --- | --- |
| `@cf/qwen/qwen3-embedding-0.6b` | 1,024 | 4,096 | cosine |
| `@cf/google/embeddinggemma-300m` | 768 | 512 | cosine |

Qwen3-Embedding-0.6B supports up to 4,096 input tokens, making it a good fit for indexing longer text chunks. EmbeddingGemma-300M from Google produces 768-dimension vectors and is optimized for low-latency embedding workloads.

All four models are available without additional provider keys since they run on Workers AI. Select them when creating or updating an AI Search instance in the dashboard or through the API.

For the full list of supported models, refer to Supported models.

We are partnering with Google to bring `@cf/google/gemma-4-26b-a4b-it` to Workers AI. Gemma 4 26B A4B is a Mixture-of-Experts (MoE) model built from Gemini 3 research, with 26B total parameters and only 4B active per forward pass. By activating a small subset of parameters during inference, the model runs almost as fast as a 4B-parameter model while delivering the quality of a much larger one.

Gemma 4 is Google's most capable family of open models, designed to maximize intelligence-per-parameter.

- Mixture-of-Experts architecture with 8 active experts out of 128 total (plus 1 shared expert), delivering frontier-level performance at a fraction of the compute cost of dense models

- 256,000 token context window for retaining full conversation history, tool definitions, and long documents across extended sessions

- Built-in thinking mode that lets the model reason step-by-step before answering, improving accuracy on complex tasks

- Vision understanding for object detection, document and PDF parsing, screen and UI understanding, chart comprehension, OCR (including multilingual), and handwriting recognition, with support for variable aspect ratios and resolutions

- Function calling with native support for structured tool use, enabling agentic workflows and multi-step planning

- Multilingual with out-of-the-box support for 35+ languages, pre-trained on 140+ languages

- Coding for code generation, completion, and correction

Use Gemma 4 26B A4B through the Workers AI binding (env.AI.run()), the REST API at /run or /v1/chat/completions, or the OpenAI-compatible endpoint.

For more information, refer to the Gemma 4 26B A4B model page.
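As a minimal sketch of the binding route, the Worker below sends a chat-style prompt to Gemma 4 with env.AI.run(). It assumes a Workers AI binding named AI in your Wrangler configuration and a messages input like other Workers AI text-generation models. The AiBinding interface is a simplified stand-in for the Ai type from @cloudflare/workers-types, and askGemma is a hypothetical helper name.

```typescript
// Simplified stand-in for the `Ai` type from @cloudflare/workers-types.
interface AiBinding {
  run(model: string, inputs: Record<string, unknown>): Promise<unknown>;
}

// Assumes a Workers AI binding named AI in the Wrangler configuration.
interface Env {
  AI: AiBinding;
}

const GEMMA_4_MODEL = "@cf/google/gemma-4-26b-a4b-it";

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Hypothetical helper: sends one question to Gemma 4 and returns the raw model output.
export async function askGemma(env: Env, question: string): Promise<unknown> {
  const messages: ChatMessage[] = [
    { role: "system", content: "You are a concise technical assistant." },
    { role: "user", content: question },
  ];
  return env.AI.run(GEMMA_4_MODEL, { messages });
}

export default {
  // Reads the question from ?q= and returns the model output as JSON.
  async fetch(request: Request, env: Env): Promise<Response> {
    const question = new URL(request.url).searchParams.get("q") ?? "What is Cloudflare?";
    const result = await askGemma(env, question);
    return new Response(JSON.stringify(result), {
      headers: { "content-type": "application/json" },
    });
  },
};
```

The result is typed as unknown rather than assuming an output shape; check the model page for the exact response schema before reading fields from it.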

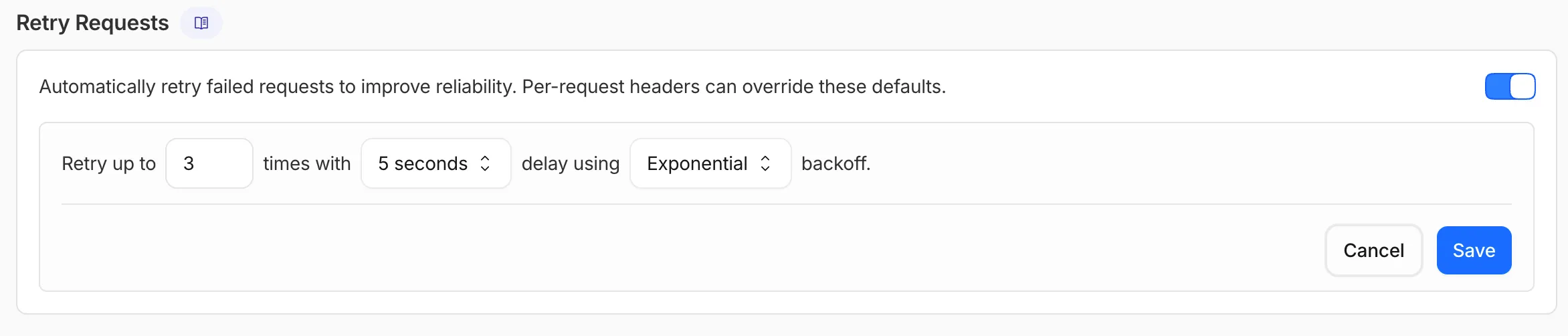

AI Gateway now supports automatic retries at the gateway level. When an upstream provider returns an error, your gateway retries the request based on the retry policy you configure, without requiring any client-side changes.

You can configure the retry count (up to 5 attempts), the delay between retries (from 100ms to 5 seconds), and the backoff strategy (Constant, Linear, or Exponential). These defaults apply to all requests through the gateway, and per-request headers can override them.
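The exact delay formula the gateway applies is not described here, so the function below is only an illustrative sketch of the three backoff strategies under assumed formulas: Constant waits the base delay every time, Linear multiplies it by the retry number, and Exponential doubles it on each retry. The RetryPolicy shape and the retryDelays name are hypothetical, not the gateway's configuration schema.

```typescript
type Backoff = "constant" | "linear" | "exponential";

// Hypothetical policy shape mirroring the three settings described above.
interface RetryPolicy {
  retries: number; // up to 5
  delayMs: number; // 100 ms to 5,000 ms
  backoff: Backoff;
}

// Returns the assumed wait before each retry; index 0 is the wait before the first retry.
export function retryDelays(policy: RetryPolicy): number[] {
  const { retries, delayMs, backoff } = policy;
  if (!Number.isInteger(retries) || retries < 0 || retries > 5) {
    throw new RangeError("retries must be an integer from 0 to 5");
  }
  if (delayMs < 100 || delayMs > 5000) {
    throw new RangeError("delayMs must be between 100 and 5000");
  }

  const delays: number[] = [];
  for (let n = 1; n <= retries; n++) {
    if (backoff === "constant") {
      delays.push(delayMs); // same wait every time
    } else if (backoff === "linear") {
      delays.push(delayMs * n); // grows by delayMs per retry
    } else {
      delays.push(delayMs * 2 ** (n - 1)); // doubles per retry
    }
  }
  return delays;
}
```

Under these assumptions, retryDelays({ retries: 4, delayMs: 200, backoff: "exponential" }) gives [200, 400, 800, 1600], while the linear strategy with the same settings gives [200, 400, 600, 800].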

This is particularly useful when you do not control the client making the request and cannot implement retry logic on the caller side. For more complex failover scenarios, such as failing over to a different provider, use Dynamic Routing.

For more information, refer to Manage gateways.

AI Search supports a wrangler ai-search command namespace. Use it to manage instances from the command line. The following commands are available:

| Command | Description |
| --- | --- |
| wrangler ai-search create | Create a new instance with an interactive wizard |
| wrangler ai-search list | List all instances in your account |
| wrangler ai-search get | Get details of a specific instance |
| wrangler ai-search update | Update the configuration of an instance |
| wrangler ai-search delete | Delete an instance |
| wrangler ai-search search | Run a search query against an instance |
| wrangler ai-search stats | Get usage statistics for an instance |

The create command guides you through setup: choosing a name, source type (r2 or web), and data source. You can also pass all options as flags for non-interactive use:

```sh
wrangler ai-search create my-instance --type r2 --source my-bucket
```

Use wrangler ai-search search to query an instance directly from the CLI:

```sh
wrangler ai-search search my-instance --query "how do I configure caching?"
```

All commands support --json for structured output that scripts and AI agents can parse directly. For full usage details, refer to the Wrangler commands documentation.
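Because --json output is meant to be machine-readable, a Node.js script can shell out to Wrangler and parse the result. The sketch below assumes Wrangler is runnable through npx and that stdout contains only the JSON document when --json is set. The helper names are hypothetical, and the parsed value is left as unknown because its schema is not described here.

```typescript
import { execFileSync } from "node:child_process";

// Builds the argument list for `wrangler ai-search search`, using only the
// flags shown above (--query and --json).
export function searchArgs(instance: string, query: string): string[] {
  return ["wrangler", "ai-search", "search", instance, "--query", query, "--json"];
}

// Runs the search through npx and parses stdout. Passing an argument array
// (rather than a shell string) avoids quoting problems in the query text.
export function runSearch(instance: string, query: string): unknown {
  const stdout = execFileSync("npx", searchArgs(instance, query), {
    encoding: "utf8",
  });
  return JSON.parse(stdout);
}
```

For example, runSearch("my-instance", "how do I configure caching?") runs the same query as the CLI example and hands back the parsed JSON for further processing.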

AI Crawl Control now supports extending the underlying WAF rule with custom modifications. Any changes you make directly in the WAF custom rules editor — such as adding path-based exceptions, extra user agents, or additional expression clauses — are preserved when you update crawler actions in AI Crawl Control.

If the WAF rule expression has been modified in a way AI Crawl Control cannot parse, a warning banner appears on the Crawlers page with a link to view the rule directly in WAF.

For more information, refer to WAF rule management.

The latest release of the Agents SDK exposes agent state as a readable property, prevents duplicate schedule rows across Durable Object restarts, brings full TypeScript inference to AgentClient, and migrates to Zod 4.

Both useAgent (React) and AgentClient (vanilla JS) now expose a state property that reflects the current agent state. Previously, reading state required manually tracking it through the onStateUpdate callback.

React (useAgent), JavaScript:

```jsx
import { useAgent } from "agents/react";

function Scoreboard() {
  const agent = useAgent({ agent: "game-agent", name: "room-123" });

  // Spread for partial updates
  const addPoints = () =>
    agent.setState({ ...agent.state, score: (agent.state?.score ?? 0) + 10 });

  // Read state directly: no separate useState + onStateUpdate needed
  return <button onClick={addPoints}>Score: {agent.state?.score}</button>;
}
```

React (useAgent), TypeScript:

```tsx
import { useAgent } from "agents/react";
// GameAgent and GameState are your own types; this import path is hypothetical
import type { GameAgent, GameState } from "./server";

function Scoreboard() {
  const agent = useAgent<GameAgent, GameState>({ agent: "game-agent", name: "room-123" });

  // Spread for partial updates
  const addPoints = () =>
    agent.setState({ ...agent.state, score: (agent.state?.score ?? 0) + 10 });

  // Read state directly: no separate useState + onStateUpdate needed
  return <button onClick={addPoints}>Score: {agent.state?.score}</button>;
}
```

agent.state is reactive: the component re-renders when state changes from either the server or a client-side setState() call.

Vanilla JS (AgentClient), JavaScript:

```js
import { AgentClient } from "agents/client";

const client = new AgentClient({
  agent: "game-agent",
  name: "room-123",
  host: "your-worker.workers.dev",
});

client.setState({ score: 100 });
console.log(client.state); // { score: 100 }
```

Vanilla JS (AgentClient), TypeScript:

```ts
import { AgentClient } from "agents/client";
// GameAgent is your Agent subclass; this import path is hypothetical
import type { GameAgent } from "./server";

const client = new AgentClient<GameAgent>({
  agent: "game-agent",
  name: "room-123",
  host: "your-worker.workers.dev",
});

client.setState({ score: 100 });
console.log(client.state); // { score: 100 }
```

State starts as undefined and is populated when the server sends the initial state on connect (from initialState) or when setState() is called. Use optional chaining (agent.state?.field) for safe access. The onStateUpdate callback continues to work as before; the new state property is additive.

schedule() now supports an idempotent option that deduplicates by (type, callback, payload), preventing duplicate rows from accumulating when called in places that run on every Durable Object restart, such as onStart().

Cron schedules are idempotent by default. Calling schedule("0 * * * *", "tick") multiple times with the same callback, expression, and payload returns the existing schedule row instead of creating a new one. Pass { idempotent: false } to override.

Delayed and date-scheduled types support opt-in idempotency:

```ts
import { Agent } from "agents";

class MyAgent extends Agent {
  async onStart() {
    // Safe across restarts: only one row is created
    await this.schedule(60, "maintenance", undefined, { idempotent: true });
  }

  // Callback invoked by the schedule above (hypothetical body)
  async maintenance() {}
}
```

Two new warnings help catch common foot-guns:

- Calling schedule() inside onStart() without { idempotent: true } emits a console.warn with actionable guidance (once per callback; skipped for cron and when idempotent is set explicitly).
- If an alarm cycle processes 10 or more stale one-shot rows for the same callback, the SDK emits a console.warn and a schedule:duplicate_warning diagnostics channel event.
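To make the deduplication rule concrete, here is a small in-memory model of the behavior described above. It is an illustrative sketch, not the SDK's implementation: ScheduleTable, the kind labels, and the row shape are hypothetical. Delayed and date-scheduled rows are keyed by (type, callback, payload) and deduplicate only when idempotent is true; cron rows also include the expression in their key and deduplicate unless idempotent is false.

```typescript
// Hypothetical labels for the three schedule kinds.
type Kind = "delayed" | "date" | "cron";

interface Row {
  id: number;
  kind: Kind;
  when: string | number; // cron expression, delay in seconds, or timestamp
  callback: string;
  payload: unknown;
}

// Illustrative model only; the real SDK persists rows in Durable Object storage.
export class ScheduleTable {
  private rows: Row[] = [];
  private nextId = 1;

  private key(kind: Kind, when: string | number, callback: string, payload: unknown): string {
    // Cron identity includes the expression; other kinds use (type, callback, payload).
    return JSON.stringify(kind === "cron" ? [kind, when, callback, payload] : [kind, callback, payload]);
  }

  schedule(
    kind: Kind,
    when: string | number,
    callback: string,
    payload?: unknown,
    opts: { idempotent?: boolean } = {},
  ): Row {
    // Cron is idempotent unless explicitly disabled; other kinds must opt in.
    const idempotent = opts.idempotent ?? kind === "cron";
    if (idempotent) {
      const k = this.key(kind, when, callback, payload);
      const existing = this.rows.find((r) => this.key(r.kind, r.when, r.callback, r.payload) === k);
      if (existing) return existing;
    }
    const row: Row = { id: this.nextId++, kind, when, callback, payload };
    this.rows.push(row);
    return row;
  }

  get count(): number {
    return this.rows.length;
  }
}
```

In this model, repeating the same idempotent schedule() call on every restart leaves a single row, while the non-idempotent default for delayed rows keeps adding rows, which is the situation the new warnings are meant to surface.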

AgentClient now accepts an optional agent type parameter for full type inference on RPC calls, matching the typed experience already available with useAgent.

JavaScript:

```js
import { AgentClient } from "agents/client";

const client = new AgentClient({
  agent: "my-agent",
  host: window.location.host,
});

// Typed call: method name autocompletes, args and return type inferred
const value = await client.call("getValue");

// Typed stub: direct RPC-style proxy
await client.stub.getValue();
await client.stub.add(1, 2);
```

TypeScript:

```ts
import { AgentClient } from "agents/client";
// MyAgent is your Agent subclass; this import path is hypothetical
import type { MyAgent } from "./server";

const client = new AgentClient<MyAgent>({
  agent: "my-agent",
  host: window.location.host,
});

// Typed call: method name autocompletes, args and return type inferred
const value = await client.call("getValue");

// Typed stub: direct RPC-style proxy
await client.stub.getValue();
await client.stub.add(1, 2);
```

State is automatically inferred from the agent type, so onStateUpdate is also typed:

JavaScript:

```js
import { AgentClient } from "agents/client";

const client = new AgentClient({
  agent: "my-agent",
  host: window.location.host,
  onStateUpdate: (state) => {
    // state is typed as MyAgent's state type
  },
});
```

TypeScript:

```ts
import { AgentClient } from "agents/client";
// MyAgent is your Agent subclass; this import path is hypothetical
import type { MyAgent } from "./server";

const client = new AgentClient<MyAgent>({
  agent: "my-agent",
  host: window.location.host,
  onStateUpdate: (state) => {
    // state is typed as MyAgent's state type
  },
});
```

Existing untyped usage continues to work without changes. The RPC type utilities (AgentMethods, AgentStub, RPCMethods) are now exported from agents/client for advanced typing scenarios.

agents, @cloudflare/ai-chat, and @cloudflare/codemode now require zod ^4.0.0. Zod v3 is no longer supported.

This release also includes the following fixes:

- Turn serialization: onChatMessage() and _reply() work is now queued so user requests, tool continuations, and saveMessages() never stream concurrently.
- Duplicate messages on stop: Clicking stop during an active stream no longer splits the assistant message into two entries.
- Duplicate messages after tool calls: Orphaned client IDs no longer leak into persistent storage.

keepAlive() now uses a lightweight in-memory ref count instead of schedule rows. Multiple concurrent callers share a single alarm cycle. The @experimental tag has been removed from both keepAlive() and keepAliveWhile().

A new entry point, @cloudflare/codemode/tanstack-ai, adds support for TanStack AI's chat() as an alternative to the Vercel AI SDK's streamText():

```ts
import { createCodeTool, tanstackTools } from "@cloudflare/codemode/tanstack-ai";
import { chat } from "@tanstack/ai";

// myServerTools, executor, adapter, and messages are defined elsewhere in your app
const codeTool = createCodeTool({
  tools: [tanstackTools(myServerTools)],
  executor,
});

const stream = chat({ adapter, tools: [codeTool], messages });
```

To update to the latest version:

```sh
npm i agents@latest @cloudflare/ai-chat@latest
```

AI Search now offers new REST API endpoints for search and chat that use an OpenAI-compatible format. This means you can use the familiar messages array structure that works with existing OpenAI SDKs and tools. The messages array also lets you pass previous messages within a session, so the model can maintain context across multiple turns.

| Endpoint | Path |
| --- | --- |
| Chat Completions | POST /accounts/{account_id}/ai-search/instances/{name}/chat/completions |
| Search | POST /accounts/{account_id}/ai-search/instances/{name}/search |

Here is an example request to the Chat Completions endpoint using the new messages array format:

```sh
curl https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances/{NAME}/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "messages": [
      { "role": "system", "content": "You are a helpful documentation assistant." },
      { "role": "user", "content": "How do I get started?" }
    ]
  }'
```

For more details, refer to the AI Search REST API guide.
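To carry context across turns, resend the earlier messages with each request. The TypeScript sketch below builds a Chat Completions request with the standard Fetch API. buildChatRequest, sendChat, and the sample history are hypothetical, and the response is returned untyped rather than assuming a particular response schema.

```typescript
interface Message {
  role: "system" | "user" | "assistant";
  content: string;
}

// Builds a POST to the AI Search Chat Completions endpoint.
export function buildChatRequest(
  accountId: string,
  instanceName: string,
  apiToken: string,
  messages: Message[],
): Request {
  const url =
    `https://api.cloudflare.com/client/v4/accounts/${accountId}` +
    `/ai-search/instances/${encodeURIComponent(instanceName)}/chat/completions`;
  return new Request(url, {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${apiToken}`,
    },
    body: JSON.stringify({ messages }),
  });
}

// Sends the request and returns the parsed JSON body.
export async function sendChat(request: Request): Promise<unknown> {
  const response = await fetch(request);
  if (!response.ok) {
    throw new Error(`AI Search request failed with status ${response.status}`);
  }
  return response.json();
}

// A follow-up turn: earlier messages are resent so the model keeps context.
export const history: Message[] = [
  { role: "system", content: "You are a helpful documentation assistant." },
  { role: "user", content: "How do I get started?" },
  { role: "assistant", content: "<the previous answer from the model>" },
  { role: "user", content: "Which data sources can I connect?" },
];
```

Calling sendChat(buildChatRequest(accountId, "my-instance", apiToken, history)) sends all four messages, so the final question is answered in the context of the earlier exchange.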

If you are using the previous AutoRAG API endpoints (/autorag/rags/), we recommend migrating to the new endpoints. The previous AutoRAG API endpoints will continue to be fully supported. Refer to the migration guide for step-by-step instructions.

AI Search now supports public endpoints, UI snippets, and MCP, making it easy to add search to your website or connect AI agents.

Public endpoints allow you to expose AI Search capabilities without requiring API authentication. To enable public endpoints:

- Go to AI Search in the Cloudflare dashboard.
- Select your instance, and turn on Public Endpoint in Settings.

For more details, refer to Public endpoint configuration.

UI snippets are pre-built search and chat components you can embed in your website. Visit search.ai.cloudflare.com to configure and preview components for your AI Search instance.

To add a search modal to your page:

```html
<script
  type="module"
  src="https://<INSTANCE_ID>.search.ai.cloudflare.com/assets/v0.0.25/search-snippet.es.js"
></script>
<search-modal-snippet
  api-url="https://<INSTANCE_ID>.search.ai.cloudflare.com/"
  placeholder="Search..."
></search-modal-snippet>
```

For more details, refer to the UI snippets documentation.

The MCP endpoint allows AI agents to search your content via the Model Context Protocol. Connect your MCP client to:

https://<INSTANCE_ID>.search.ai.cloudflare.com/mcp

For more details, refer to the MCP documentation.
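To show what "connecting" involves at the protocol level, the sketch below builds the first message an MCP client sends, a JSON-RPC initialize request. It assumes the endpoint speaks the Streamable HTTP transport from MCP specification revision 2025-03-26 and accepts requests without an authentication header; both are assumptions, and buildMcpInitialize and the client name are hypothetical.

```typescript
// Builds the first JSON-RPC message an MCP client sends (`initialize`), as a
// POST over the Streamable HTTP transport defined in MCP spec revision 2025-03-26.
export function buildMcpInitialize(instanceId: string): Request {
  const url = `https://${instanceId}.search.ai.cloudflare.com/mcp`;
  const body = {
    jsonrpc: "2.0",
    id: 1,
    method: "initialize",
    params: {
      protocolVersion: "2025-03-26",
      capabilities: {},
      // Hypothetical client name and version; use your own.
      clientInfo: { name: "example-client", version: "0.1.0" },
    },
  };
  return new Request(url, {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      // Streamable HTTP servers may answer with plain JSON or an SSE stream.
      Accept: "application/json, text/event-stream",
    },
    body: JSON.stringify(body),
  });
}
```

After a successful initialize, the client sends a notifications/initialized notification and can then call tools/list to discover the available tools, echoing any Mcp-Session-Id header the server returned. In practice, an MCP client library such as the official TypeScript SDK handles this handshake for you.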

AI Search now supports custom metadata filtering, allowing you to define your own metadata fields and filter search results based on attributes like category, version, or any custom field you define.

You can define up to 5 custom metadata fields per AI Search instance. Each field has a name and data type (text, number, or boolean):

```sh
curl -X POST https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "id": "my-instance",
    "type": "r2",
    "source": "my-bucket",
    "custom_metadata": [
      { "field_name": "category", "data_type": "text" },
      { "field_name": "version", "data_type": "number" },
      { "field_name": "is_public", "data_type": "boolean" }
    ]
  }'
```

How you attach metadata depends on your data source:

- R2 bucket: Set metadata using S3-compatible custom headers (x-amz-meta-*) when uploading objects. Refer to R2 custom metadata for examples.
- Website: Add <meta> tags to your HTML pages. Refer to Website custom metadata for details.
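For an R2-backed instance, the metadata you upload should line up with the fields declared on the instance. The helper below is a hypothetical client-side check, not part of any Cloudflare API: it enforces the five-field limit, rejects keys that were not declared or values of the wrong type (a strictness choice of this sketch), and turns the rest into x-amz-meta-* headers for an S3-compatible upload. Serializing values with String(value) is an assumption.

```typescript
type DataType = "text" | "number" | "boolean";

// Mirrors one entry of the custom_metadata array in the instance config.
interface FieldDef {
  field_name: string;
  data_type: DataType;
}

type MetaValue = string | number | boolean;

// Maps a JavaScript value to the data_type it should match.
function dataTypeOf(value: MetaValue): DataType {
  return typeof value === "string" ? "text" : typeof value === "number" ? "number" : "boolean";
}

// Hypothetical helper: validates metadata against the instance fields and
// returns x-amz-meta-* headers for an S3-compatible PUT.
export function toAmzMetaHeaders(
  fields: FieldDef[],
  metadata: Record<string, MetaValue>,
): Record<string, string> {
  if (fields.length > 5) {
    throw new RangeError("an instance supports at most 5 custom metadata fields");
  }
  const declared = new Map<string, DataType>();
  for (const f of fields) declared.set(f.field_name, f.data_type);

  const headers: Record<string, string> = {};
  for (const [key, value] of Object.entries(metadata)) {
    const expected = declared.get(key);
    if (expected === undefined) throw new Error(`undeclared metadata field: ${key}`);
    if (dataTypeOf(value) !== expected) {
      throw new TypeError(`field ${key} expects ${expected}`);
    }
    headers[`x-amz-meta-${key}`] = String(value);
  }
  return headers;
}
```

With the AWS SDK for JavaScript, the equivalent is passing the same keys (without the prefix) in the Metadata field of PutObjectCommand, which the SDK sends as x-amz-meta-* headers.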

Use custom metadata fields in your search queries alongside built-in attributes like folder and timestamp:

```sh
curl https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai-search/instances/{NAME}/search \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer {API_TOKEN}" \
  -d '{
    "messages": [
      { "content": "How do I configure authentication?", "role": "user" }
    ],
    "ai_search_options": {
      "retrieval": {
        "filters": {
          "category": "documentation",
          "version": { "$gte": 2.0 }
        }
      }
    }
  }'
```

Learn more in the metadata filtering documentation.

- R2 bucket: Set metadata using S3-compatible custom headers (