Changelog

New updates and improvements at Cloudflare.

Static Assets: Fixed a bug in how redirect rules ↗ defined in your Worker's `_redirects` file are processed.

If you're serving Static Assets with a `_redirects` file containing a rule like `/ja/* /:splat`, paths with double slashes were previously misinterpreted as external URLs. For example, visiting `/ja//example.com` would incorrectly redirect to `https://example.com` instead of `/example.com` on your domain. This has been fixed and double slashes now correctly resolve as local paths. Note: Cloudflare Pages was not affected by this issue.
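To make the failure mode concrete, here is a minimal sketch of why a splat that begins with a slash is risky, and one way to keep it local. The helper name `resolveSplatRedirect` and the host `my-site.example` are hypothetical, and this is not Cloudflare's actual implementation.

```typescript
// Hypothetical helper: maps a request path onto the target of a rule like
// `/ja/* /:splat`, keeping the result a local path.
function resolveSplatRedirect(pathname: string, prefix: string): string | null {
  if (!pathname.startsWith(prefix)) return null; // the rule does not apply
  const splat = pathname.slice(prefix.length);
  // Collapse leading slashes so the target can never start with "//",
  // which URL parsers treat as a protocol-relative (external) URL.
  return "/" + splat.replace(/^\/+/, "");
}

// Why "//example.com" is dangerous: resolved against a base URL,
// it replaces the host instead of the path.
const naiveTarget = new URL("//example.com", "https://my-site.example");
console.log(naiveTarget.hostname); // "example.com"

console.log(resolveSplatRedirect("/ja//example.com", "/ja/")); // "/example.com"
```

The sketch normalizes the splat before building the destination, so the redirect stays on the current domain regardless of how many slashes the visitor typed.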

We've updated preview URLs for Cloudflare Workers to support long branch names.

Previously, branch and Worker names exceeding the 63-character DNS limit would cause alias generation to fail, leaving pull requests without aliased preview URLs. This particularly impacted teams relying on descriptive branch naming.

Now, Cloudflare automatically truncates long branch names and appends a unique hash, ensuring every pull request gets a working preview link.

- 63 characters or less: `<branch-name>-<worker-name>` → Uses actual branch name as is

- 64 characters or more: `<truncated-branch-name>--<hash>-<worker-name>` → Uses truncated name with 4-character hash

- Hash generation: The hash is derived from the full branch name to ensure uniqueness

- Stable URLs: The same branch always generates the same hash across all commits

- Wrangler 4.30.0 or later: This feature requires updating to wrangler@4.30.0+

- No configuration needed: Works automatically with existing preview URL setups
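The naming rule above can be sketched as follows. This is an illustration only: the source does not say which hash function Cloudflare uses or how the branch name is trimmed, so the FNV-1a hash and the `previewAlias` helper are assumptions.

```typescript
// DNS labels are limited to 63 characters.
const DNS_LABEL_LIMIT = 63;

// Stand-in deterministic hash (FNV-1a, 32-bit). The real algorithm is not
// documented in the changelog; this only demonstrates "same input, same hash".
function shortHash(input: string): string {
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h ^= input.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h.toString(16).padStart(8, "0").slice(0, 4);
}

// Hypothetical helper: builds the alias label for a branch and Worker name.
function previewAlias(branch: string, worker: string): string {
  const full = `${branch}-${worker}`;
  if (full.length <= DNS_LABEL_LIMIT) return full; // short names are used as is

  // Hash the full branch name so that truncated names stay distinguishable.
  const suffix = `--${shortHash(branch)}-${worker}`;
  const room = DNS_LABEL_LIMIT - suffix.length;
  if (room < 1) throw new Error("Worker name leaves no room for a branch prefix");
  return `${branch.slice(0, room)}${suffix}`;
}

console.log(previewAlias("fix-login", "my-worker"));
console.log(previewAlias("feature/" + "very-descriptive-".repeat(5), "my-worker").length);
```

Because the hash depends only on the branch name, the alias is stable across commits, which matches the "Stable URLs" behavior described above.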


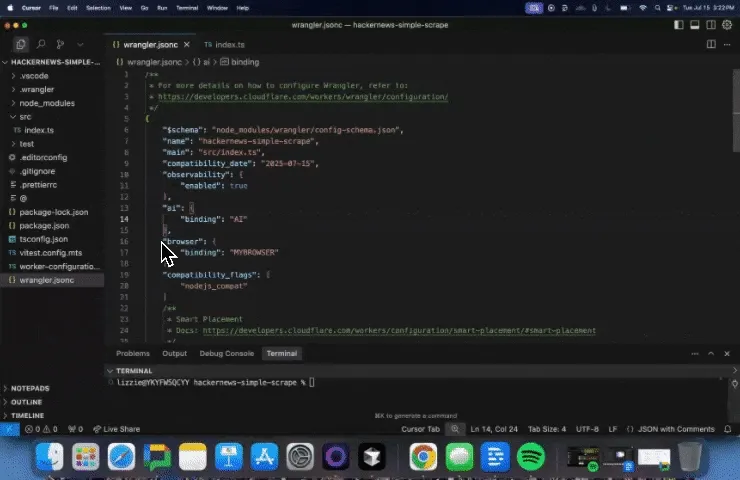

We are changing how Python Workers are structured by default. Previously, handlers were defined at the top-level of a module as `on_fetch`, `on_scheduled`, etc. methods, but now they live in an entrypoint class.

Here's an example of how to now define a Worker with a fetch handler:

```python
from workers import Response, WorkerEntrypoint

class Default(WorkerEntrypoint):
    async def fetch(self, request):
        return Response("Hello World!")
```

To keep using the old-style handlers, you can specify the `disable_python_no_global_handlers` compatibility flag in your wrangler file:

```jsonc
{
  "compatibility_flags": ["disable_python_no_global_handlers"]
}
```

```toml
compatibility_flags = [ "disable_python_no_global_handlers" ]
```

Consult the Python Workers documentation for more details.

The recent Cloudflare Terraform Provider ↗ and SDK releases (such as cloudflare-typescript ↗) bring significant improvements to the Workers developer experience. These updates focus on reliability, performance, and adding Python Workers support.

Resolved several issues with the `cloudflare_workers_script` resource that resulted in unwarranted plan diffs, including:

- Using Durable Objects migrations

- Using some bindings such as `secret_text`

- Using smart placement

A resource should never show a plan diff if there isn't an actual change. This fix reduces unnecessary noise in your Terraform plan and is available in Cloudflare Terraform Provider 5.8.0.

You can now specify `content_file` and `content_sha256` instead of `content`. This prevents the Workers script content from being stored in the state file which greatly reduces plan diff size and noise. If your workflow synced plans remotely, this should now happen much faster since there is less data to sync. This is available in Cloudflare Terraform Provider 5.7.0.

```hcl
resource "cloudflare_workers_script" "my_worker" {
  account_id     = "123456789"
  script_name    = "my_worker"
  main_module    = "worker.mjs"
  content_file   = "worker.mjs"
  content_sha256 = filesha256("worker.mjs")
}
```

Fixed the `cloudflare_workers_script` resource to properly support headers and redirects for Assets:

```hcl
resource "cloudflare_workers_script" "my_worker" {
  account_id     = "123456789"
  script_name    = "my_worker"
  main_module    = "worker.mjs"
  content_file   = "worker.mjs"
  content_sha256 = filesha256("worker.mjs")

  assets = {
    config = {
      headers   = file("_headers")
      redirects = file("_redirects")
    }
    # Completion jwt from:
    # https://developers.cloudflare.com/api/resources/workers/subresources/assets/subresources/upload/
    jwt = "jwt"
  }
}
```

Available in Cloudflare Terraform Provider 5.8.0.

Added support for uploading Python Workers (beta) in Terraform. You can now deploy Python Workers with:

```hcl
resource "cloudflare_workers_script" "my_worker" {
  account_id     = "123456789"
  script_name    = "my_worker"
  content_file   = "worker.py"
  content_sha256 = filesha256("worker.py")
  content_type   = "text/x-python"
}
```

Available in Cloudflare Terraform Provider 5.8.0.

Fixed an issue where Workers script versions in the SDK did not allow uploading files. This now works, and also has an improved files upload interface:

```js
const scriptContent = `
  export default {
    async fetch(request, env, ctx) {
      return new Response('Hello World!', { status: 200 });
    }
  };
`;

client.workers.scripts.versions.create('my-worker', {
  account_id: '123456789',
  metadata: {
    main_module: 'my-worker.mjs',
  },
  files: [
    await toFile(
      Buffer.from(scriptContent),
      'my-worker.mjs',
      {
        type: "application/javascript+module",
      }
    )
  ]
});
```

Will be available in cloudflare-typescript 4.6.0. A similar change will be available in cloudflare-python 4.4.0.

Previously when creating a KV value like this:

```js
await cf.kv.namespaces.values.update("my-kv-namespace", "key1", {
  account_id: "123456789",
  metadata: "my metadata",
  value: JSON.stringify({
    hello: "world"
  })
});
```

...and recalling it in your Worker like this:

```ts
const value = await c.env.KV.get<{hello: string}>("key1", "json");
```

You'd get back this: `{metadata:'my metadata', value:"{'hello':'world'}"}` instead of the correct value of `{hello: 'world'}`.

This is fixed in cloudflare-typescript 4.5.0 and will be fixed in cloudflare-python 4.4.0.

A minimal implementation of the MessageChannel API ↗ is now available in Workers. This means that you can use `MessageChannel` to send messages between different parts of your Worker, but not across different Workers.

The `MessageChannel` and `MessagePort` APIs will be available by default at the global scope with any worker using a compatibility date of `2025-08-15` or later. It is also available using the `expose_global_message_channel` compatibility flag, or can be explicitly disabled using the `no_expose_global_message_channel` compatibility flag.

```js
const { port1, port2 } = new MessageChannel();

port2.onmessage = (event) => {
  console.log('Received message:', event.data);
};

// A message posted on one port is delivered to the other port
port1.postMessage('Hello from port1!');
```

Any value that can be used with the `structuredClone(...)` API can be sent over the port.

There are a number of key limitations to the `MessageChannel` API in Workers:

- Transfer lists are currently not supported. This means that you will not be able to transfer ownership of objects like `ArrayBuffer` or `MessagePort` between ports.

- The `MessagePort` is not yet serializable. This means that you cannot send a `MessagePort` object through the `postMessage` method or via JSRPC calls.

- The `'messageerror'` event is only partially supported. If the `'onmessage'` handler throws an error, the `'messageerror'` event will be triggered. However, it will not be triggered when there are errors serializing or deserializing the message data. Instead, the error will be thrown when the `postMessage` method is called on the sending port.

- The `'close'` event will be emitted on both ports when one of the ports is closed. However, it will not be emitted when the Worker is terminated or when one of the ports is garbage collected.


Now, you can use `.env` files to provide secrets and override environment variables on the `env` object during local development with Wrangler and the Cloudflare Vite plugin.

Previously, if you wanted to provide secrets or environment variables during local development, you had to use `.dev.vars` files. This is still supported, but you can now also use `.env` files, which are more familiar to many developers.

You can create a `.env` file in your project root to define environment variables that will be used when running `wrangler dev` or `vite dev`. The `.env` file should be formatted like a `dotenv` file, such as `KEY="VALUE"`:

`.env`:

```sh
TITLE="My Worker"
API_TOKEN="dev-token"
```

When you run `wrangler dev` or `vite dev`, the environment variables defined in the `.env` file will be available in your Worker code via the `env` object:

```js
export default {
  async fetch(request, env) {
    const title = env.TITLE; // "My Worker"
    const apiToken = env.API_TOKEN; // "dev-token"
    const response = await fetch(
      `https://api.example.com/data?token=${apiToken}`,
    );
    return new Response(`Title: ${title} - ` + (await response.text()));
  },
};
```

If your Worker defines multiple environments, you can set different variables for each environment (for example, production or staging) by creating files named `.env.<environment-name>`.

When you use `wrangler <command> --env <environment-name>` or `CLOUDFLARE_ENV=<environment-name> vite dev`, the corresponding environment-specific file will also be loaded and merged with the `.env` file.

For example, if you want to set different environment variables for the `staging` environment, you can create a file named `.env.staging`:

`.env.staging`:

```sh
API_TOKEN="staging-token"
```

When you run `wrangler dev --env staging` or `CLOUDFLARE_ENV=staging vite dev`, the environment variables from `.env.staging` will be merged onto those from `.env`.

```js
export default {
  async fetch(request, env) {
    const title = env.TITLE; // "My Worker" (from `.env`)
    const apiToken = env.API_TOKEN; // "staging-token" (from `.env.staging`, overriding the value from `.env`)
    const response = await fetch(
      `https://api.example.com/data?token=${apiToken}`,
    );
    return new Response(`Title: ${title} - ` + (await response.text()));
  },
};
```

For more information on how to use `.env` files with Wrangler and the Cloudflare Vite plugin, see the Wrangler and Cloudflare Vite plugin documentation.
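The merge order described in this entry can be illustrated with a small sketch. The `parseDotenv` and `mergeEnv` helpers are hypothetical and handle only simple `KEY="VALUE"` lines. Wrangler's real loader is not shown in the source and likely supports more syntax.

```typescript
// Hypothetical minimal dotenv parser: KEY=VALUE per line, optional quotes,
// lines that do not look like assignments (blank lines, comments) are skipped.
function parseDotenv(text: string): Record<string, string> {
  const vars: Record<string, string> = {};
  for (const line of text.split(/\r?\n/)) {
    const match = line.match(/^\s*([A-Za-z_][A-Za-z0-9_]*)\s*=\s*(.*?)\s*$/);
    if (!match) continue;
    const key = match[1];
    const raw = match[2];
    if (key === undefined || raw === undefined) continue;
    // Strip one pair of matching surrounding quotes, if present.
    vars[key] = raw.replace(/^(["'])(.*)\1$/, "$2");
  }
  return vars;
}

// Environment-specific values are merged onto the base values,
// so later sources win on conflicting keys.
function mergeEnv(baseText: string, envText: string): Record<string, string> {
  return { ...parseDotenv(baseText), ...parseDotenv(envText) };
}

const baseFile = 'TITLE="My Worker"\nAPI_TOKEN="dev-token"\n';
const stagingFile = 'API_TOKEN="staging-token"\n';

console.log(mergeEnv(baseFile, stagingFile));
// TITLE comes from `.env`, API_TOKEN from `.env.staging`
```

The object spread makes the precedence explicit: keys present only in `.env` survive, and keys defined in both files take the environment-specific value.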

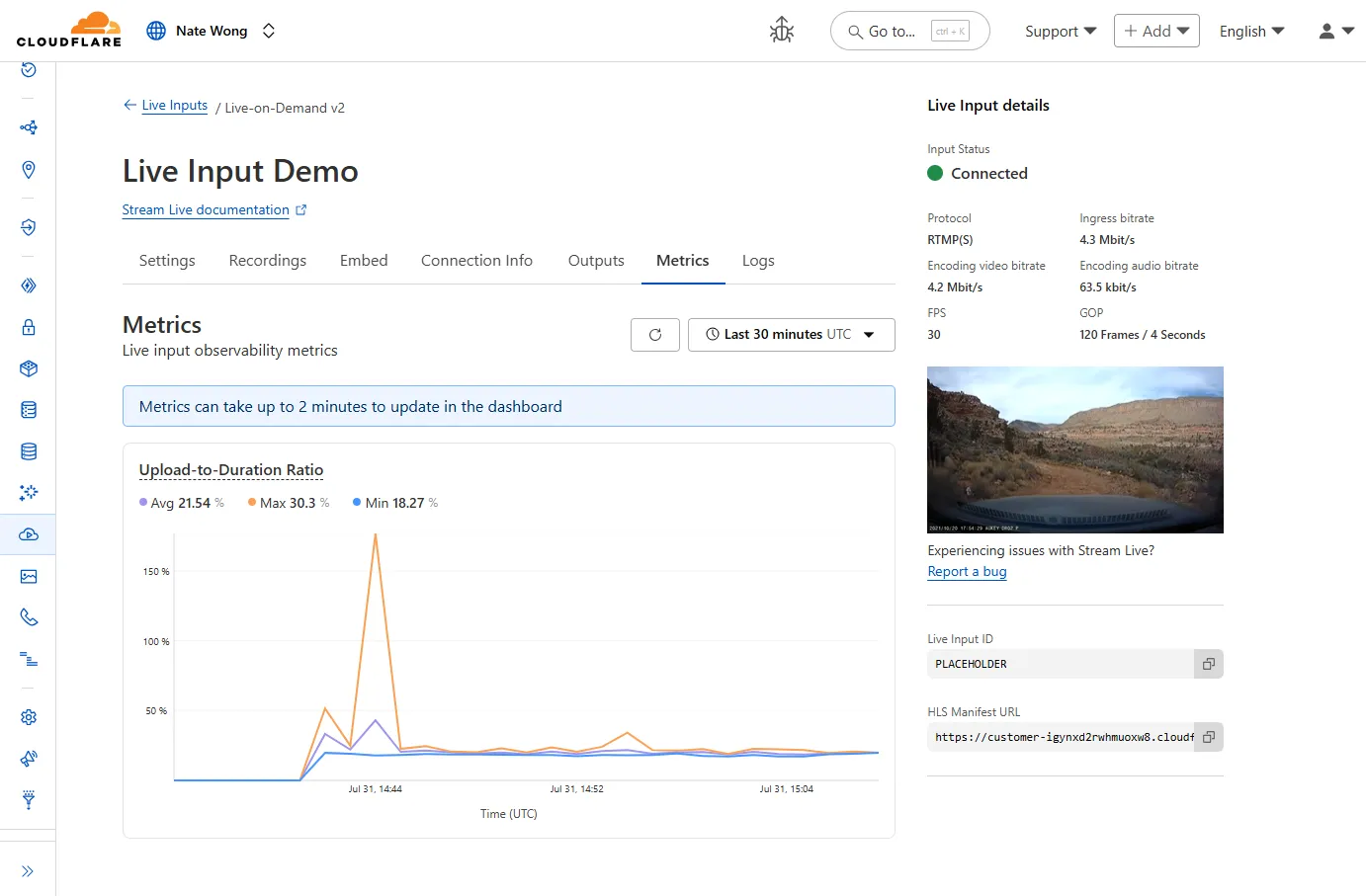

New information about broadcast metrics and events is now available in Cloudflare Stream in the Live Input details of the Dashboard.

You can now easily understand broadcast-side health and performance with new observability, which can help when troubleshooting common issues, particularly for new customers who are just getting started, and platform customers who may have limited visibility into how their end-users configure their encoders.

To get started, start a live stream, then visit the Live Input details page in the dashboard.

See our new live Troubleshooting guide to learn what these metrics mean and how to use them to address common broadcast issues.

You can now import `waitUntil` from `cloudflare:workers` to extend your Worker's execution beyond the request lifecycle from anywhere in your code.

Previously, `waitUntil` could only be accessed through the execution context (`ctx`) parameter passed to your Worker's handler functions. This meant that if you needed to schedule background tasks from deeply nested functions or utility modules, you had to pass the `ctx` object through multiple function calls to access `waitUntil`.

Now, you can import `waitUntil` directly and use it anywhere in your Worker without needing to pass `ctx` as a parameter:

```js
import { waitUntil } from "cloudflare:workers";

export function trackAnalytics(eventData) {
  const analyticsPromise = fetch("https://analytics.example.com/track", {
    method: "POST",
    body: JSON.stringify(eventData),
  });

  // Extend execution to ensure analytics tracking completes
  waitUntil(analyticsPromise);
}
```

This is particularly useful when you want to:

- Schedule background tasks from utility functions or modules

- Extend execution for analytics, logging, or cleanup operations

- Avoid passing the execution context through multiple layers of function calls

```js
import { waitUntil } from "cloudflare:workers";

export default {
  async fetch(request, env, ctx) {
    // Background task that should complete even after response is sent
    cleanupTempData(env.KV_NAMESPACE);
    return new Response("Hello, World!");
  }
};

function cleanupTempData(kvNamespace) {
  // This function can now use waitUntil without needing ctx
  const deletePromise = kvNamespace.delete("temp-key");
  waitUntil(deletePromise);
}
```

For more information, see the `waitUntil` documentation.

By setting the value of the `cache` property to `no-cache`, you can force Cloudflare's cache to revalidate its contents with the origin when making subrequests from Cloudflare Workers.

`index.js`:

```js
export default {
  async fetch(req, env, ctx) {
    const request = new Request("https://cloudflare.com", {
      cache: "no-cache",
    });
    const response = await fetch(request);
    return response;
  },
};
```

`index.ts`:

```ts
export default {
  async fetch(req, env, ctx): Promise<Response> {
    const request = new Request("https://cloudflare.com", { cache: 'no-cache' });
    const response = await fetch(request);
    return response;
  }
} satisfies ExportedHandler<Environment>
```

When `no-cache` is set, the Worker request will first look for a match in Cloudflare's cache, then:

- If there is a match, a conditional request is sent to the origin, regardless of whether or not the match is fresh or stale. If the resource has not changed, the cached version is returned. If the resource has changed, it will be downloaded from the origin, updated in the cache, and returned.

- If there is no match, Workers will make a standard request to the origin and cache the response.
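The two branches above can be modeled with a small in-memory simulation. This is a simplified sketch, not the Workers runtime: the `fetchWithNoCache` function is hypothetical, and using an ETag comparison as the revalidation check is an assumption (the source only says "a conditional request").

```typescript
interface CachedResponse { body: string; etag: string }
interface OriginResource { body: string; etag: string }
interface Outcome {
  body: string;
  originRequest: "conditional" | "standard";
  servedFrom: "cache" | "origin";
}

// Hypothetical model of `cache: "no-cache"` semantics.
function fetchWithNoCache(
  cache: Map<string, CachedResponse>,
  url: string,
  origin: OriginResource,
): Outcome {
  const cached = cache.get(url);

  // No match: standard request to the origin, then cache the response.
  if (cached === undefined) {
    cache.set(url, { ...origin });
    return { body: origin.body, originRequest: "standard", servedFrom: "origin" };
  }

  // Match (fresh or stale): always revalidate with a conditional request.
  if (cached.etag === origin.etag) {
    // Unchanged: the cached version is returned.
    return { body: cached.body, originRequest: "conditional", servedFrom: "cache" };
  }

  // Changed: download from the origin, update the cache, and return it.
  cache.set(url, { ...origin });
  return { body: origin.body, originRequest: "conditional", servedFrom: "origin" };
}

const demoCache = new Map<string, CachedResponse>();
const pageUrl = "https://example.com/";
console.log(fetchWithNoCache(demoCache, pageUrl, { body: "v1", etag: "a" }));
console.log(fetchWithNoCache(demoCache, pageUrl, { body: "v1", etag: "a" }));
console.log(fetchWithNoCache(demoCache, pageUrl, { body: "v2", etag: "b" }));
```

The key property is that the origin is contacted on every request, but the body is only transferred again when the resource has actually changed.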

This increases compatibility with NPM packages and JavaScript frameworks that rely on setting the `cache` property, which is a cross-platform standard part of the `Request` interface. Previously, if you set the `cache` property on `Request` to `'no-cache'`, the Workers runtime threw an exception.

- Learn how the Cache works with Cloudflare Workers

- Enable Node.js compatibility for your Cloudflare Worker

- Explore Runtime APIs and Bindings available in Cloudflare Workers

We’ve shipped a major release for the @cloudflare/sandbox ↗ SDK, turning it into a full-featured, container-based execution platform that runs securely on Cloudflare Workers.

This update adds live streaming of output, persistent Python and JavaScript code interpreters with rich output support (charts, tables, HTML, JSON), file system access, Git operations, full background process control, and the ability to expose running services via public URLs.

This makes it ideal for building AI agents, CI runners, cloud REPLs, data analysis pipelines, or full developer tools — all without managing infrastructure.

Create persistent code contexts with support for rich visual + structured outputs.

Creates a new code execution context with persistent state.

```ts
// Create a Python context
const pythonCtx = await sandbox.createCodeContext({ language: "python" });

// Create a JavaScript context
const jsCtx = await sandbox.createCodeContext({ language: "javascript" });
```

Options:

- language: Programming language ('python' | 'javascript' | 'typescript')

- cwd: Working directory (default: /workspace)

- envVars: Environment variables for the context

Executes code with optional streaming callbacks.

```ts
// Simple execution
const execution = await sandbox.runCode('print("Hello World")', {
  context: pythonCtx,
});

// With streaming callbacks
await sandbox.runCode(
  `
import time

for i in range(5):
    print(f"Step {i}")
    time.sleep(1)
`,
  {
    context: pythonCtx,
    onStdout: (output) => console.log("Real-time:", output.text),
    onResult: (result) => console.log("Result:", result),
  },
);
```

Options:

- language: Programming language ('python' | 'javascript' | 'typescript')

- cwd: Working directory (default: /workspace)

- envVars: Environment variables for the context

Returns a streaming response for real-time processing.

```ts
const stream = await sandbox.runCodeStream(
  "import time; [print(i) for i in range(10)]",
);
// Process the stream as needed
```

Interpreter outputs are auto-formatted and returned in multiple formats:

- text

- html (e.g., Pandas tables)

- png, svg (e.g., Matplotlib charts)

- json (structured data)

- chart (parsed visualizations)

```ts
const result = await sandbox.runCode(
  `
import seaborn as sns
import matplotlib.pyplot as plt

data = sns.load_dataset("flights")
pivot = data.pivot("month", "year", "passengers")
sns.heatmap(pivot, annot=True, fmt="d")
plt.title("Flight Passengers")
plt.show()

pivot.to_dict()
`,
  { context: pythonCtx },
);

if (result.png) {
  console.log("Chart output:", result.png);
}
```

Start background processes and expose them with live URLs.

```ts
await sandbox.startProcess("python -m http.server 8000");
const preview = await sandbox.exposePort(8000);

console.log("Live preview at:", preview.url);
```

Start, inspect, and terminate long-running background processes.

```ts
const process = await sandbox.startProcess("node server.js");
console.log(`Started process ${process.id} with PID ${process.pid}`);

// Monitor the process
const logStream = await sandbox.streamProcessLogs(process.id);
for await (const log of parseSSEStream<LogEvent>(logStream)) {
  console.log(`Server: ${log.data}`);
}
```

- listProcesses() - List all running processes

- getProcess(id) - Get detailed process status

- killProcess(id, signal) - Terminate specific processes

- killAllProcesses() - Kill all processes

- streamProcessLogs(id, options) - Stream logs from running processes

- getProcessLogs(id) - Get accumulated process output

Clone Git repositories directly into the sandbox.

```ts
await sandbox.gitCheckout("https://github.com/user/repo", {
  branch: "main",
  targetDir: "my-project",
});
```

Sandboxes are still experimental. We're using them to explore how isolated, container-like workloads might scale on Cloudflare, and to help define the developer experience around them.

The latest releases of @cloudflare/agents ↗ bring major improvements to MCP transport protocol support and agent connectivity. Key updates include:

MCP servers can now request user input during tool execution, enabling interactive workflows like confirmations, forms, and multi-step processes. This feature uses durable storage to preserve elicitation state even during agent hibernation, ensuring seamless user interactions across agent lifecycle events.

```ts
// Request user confirmation via elicitation
const confirmation = await this.elicitInput({
  message: `Are you sure you want to increment the counter by ${amount}?`,
  requestedSchema: {
    type: "object",
    properties: {
      confirmed: {
        type: "boolean",
        title: "Confirm increment",
        description: "Check to confirm the increment",
      },
    },
    required: ["confirmed"],
  },
});
```

Check out our demo ↗ to see elicitation in action.

MCP now supports HTTP streamable transport which is recommended over SSE. This transport type offers:

- Better performance: More efficient data streaming and reduced overhead

- Improved reliability: Enhanced connection stability and error recovery

- Automatic fallback: If streamable transport is not available, it gracefully falls back to SSE

```ts
export default MyMCP.serve("/mcp", {
  binding: "MyMCP",
});
```

The SDK automatically selects the best available transport method, gracefully falling back from streamable-http to SSE when needed.

Significant improvements to MCP server connections and transport reliability:

- Auto transport selection: Automatically determines the best transport method, falling back from streamable-http to SSE as needed

- Improved error handling: Better connection state management and error reporting for MCP servers

- Reliable prop updates: Centralized agent property updates ensure consistency across different contexts

You can use `.queue()` to enqueue background work, which is ideal for tasks like processing user messages or sending notifications.

```ts
class MyAgent extends Agent {
  doSomethingExpensive(payload) {
    // a long running process that you want to run in the background
  }

  // Must be async because it awaits the queue() calls below
  async queueSomething() {
    await this.queue("doSomethingExpensive", somePayload); // this will NOT block further execution, and runs in the background
    await this.queue("doSomethingExpensive", someOtherPayload); // the callback will NOT run until the previous callback is complete
    // ... call as many times as you want
  }
}
```

Want to try it yourself? Just define a method like processMessage in your agent, and you’re ready to scale.

Want to build an AI agent that can receive and respond to emails automatically? With the new email adapter and onEmail lifecycle method, now you can.

```ts
export class EmailAgent extends Agent {
  async onEmail(email: AgentEmail) {
    const raw = await email.getRaw();
    const parsed = await PostalMime.parse(raw);

    // create a response based on the email contents
    // and then send a reply
    await this.replyToEmail(email, {
      fromName: "Email Agent",
      body: `Thanks for your email! You've sent us "${parsed.subject}". We'll process it shortly.`,
    });
  }
}
```

You route incoming mail like this:

```ts
export default {
  async email(email, env) {
    await routeAgentEmail(email, env, {
      resolver: createAddressBasedEmailResolver("EmailAgent"),
    });
  },
};
```

You can find a full example here ↗.

Custom methods are now automatically wrapped with the agent's context, so calling `getCurrentAgent()` should work regardless of where in an agent's lifecycle it's called. Previously this would not work on RPC calls, but now just works out of the box.

```ts
export class MyAgent extends Agent {
  async suggestReply(message) {
    // getCurrentAgent() now correctly works, even when called inside an RPC method
    const { agent } = getCurrentAgent()!;
    return generateText({
      prompt: `Suggest a reply to: "${message}" from "${agent.name}"`,
      tools: [replyWithEmoji],
    });
  }
}
```

Try it out and tell us what you build!

We're thrilled to be a Day 0 partner with OpenAI ↗ to bring their latest open models ↗ to Workers AI, including support for Responses API, Code Interpreter, and Web Search (coming soon).

Get started with the new models at `@cf/openai/gpt-oss-120b` and `@cf/openai/gpt-oss-20b`. Check out the blog ↗ for more details about the new models, and the `gpt-oss-120b` and `gpt-oss-20b` model pages for more information about pricing and context windows.

If you call the model through:

- Workers Binding, it will accept/return Responses API – `env.AI.run("@cf/openai/gpt-oss-120b")`

- REST API on `/run` endpoint, it will accept/return Responses API – `https://api.cloudflare.com/client/v4/accounts/<account_id>/ai/run/@cf/openai/gpt-oss-120b`

- REST API on new `/responses` endpoint, it will accept/return Responses API – `https://api.cloudflare.com/client/v4/accounts/<account_id>/ai/v1/responses`

- REST API for OpenAI Compatible endpoint, it will return Chat Completions (coming soon) – `https://api.cloudflare.com/client/v4/accounts/<account_id>/ai/v1/chat/completions`

```sh
curl https://api.cloudflare.com/client/v4/accounts/<account_id>/ai/v1/responses \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $CLOUDFLARE_API_KEY" \
  -d '{
    "model": "@cf/openai/gpt-oss-120b",
    "reasoning": {"effort": "medium"},
    "input": [
      {
        "role": "user",
        "content": "What are the benefits of open-source models?"
      }
    ]
  }'
```

The model is natively trained to support stateful code execution, and we've implemented support for this feature using our Sandbox SDK ↗ and Containers ↗. Cloudflare's Developer Platform is uniquely positioned to support this feature, so we're very excited to bring our products together to support this new use case.

We are working to implement Web Search for the model, where users can bring their own Exa API Key so the model can browse the Internet.
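For readers calling the `/responses` endpoint from code rather than curl, the same request can be sketched in TypeScript. The endpoint, headers, and body mirror the curl example in this entry. The `buildResponsesRequest` helper and its parameter names are hypothetical, and the response shape is not described in the source, so the sketch stops at building the request.

```typescript
interface ResponsesRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Hypothetical helper: assembles the URL and fetch options for the
// Workers AI `/responses` endpoint shown in the curl example.
function buildResponsesRequest(
  accountId: string,
  apiToken: string,
  prompt: string,
): ResponsesRequest {
  return {
    url: `https://api.cloudflare.com/client/v4/accounts/${accountId}/ai/v1/responses`,
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiToken}`,
      },
      body: JSON.stringify({
        model: "@cf/openai/gpt-oss-120b",
        reasoning: { effort: "medium" },
        input: [{ role: "user", content: prompt }],
      }),
    },
  };
}

const exampleRequest = buildResponsesRequest(
  "<account_id>",
  "<api_token>",
  "What are the benefits of open-source models?",
);
console.log(exampleRequest.url);
// To send it: const res = await fetch(exampleRequest.url, exampleRequest.init);
```

Keeping request construction separate from the network call makes it easy to inspect or log the payload before sending it.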


As part of the ongoing open beta for Workers Builds, we’ve increased the available disk space for builds from 8 GB to 20 GB for both Free and Paid plans.

This provides more space for larger projects, dependencies, and build artifacts while improving overall build reliability.

| Metric | Free Plan | Paid Plans |
| --- | --- | --- |
| Disk Space | 20 GB | 20 GB |

All other build limits, including CPU, memory, build minutes, and timeout, remain unchanged.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues ↗ reported by the Cloudflare community related to the v5 release. We have committed to releasing improvements on a 2-week cadence to ensure its stability and reliability. We have also pivoted from an issue-to-issue approach to a resource-per-resource approach: we will focus on specific resources for every release, stabilizing the release and closing all associated bugs with that resource before moving on to resolving migration issues.

Thank you for continuing to raise issues. We triage them weekly and they help make our products stronger.

- Resources stabilized:
  - `cloudflare_custom_pages`
  - `cloudflare_page_rule`
  - `cloudflare_dns_record`
  - `cloudflare_argo_tiered_caching`

- Addressed chronic drift issues in `cloudflare_logpush_job`, `cloudflare_zero_trust_dns_location`, `cloudflare_ruleset` & `cloudflare_api_token`

- `cloudflare_zone_subscription` returns expected values `rate_plan.id` from former versions

- `cloudflare_workers_script` can now successfully be destroyed with bindings & migration for Durable Objects now recorded in tfstate

- Ability to configure `add_headers` under `cloudflare_zero_trust_gateway_policy`

- Other bug fixes

For a more detailed look at all of the changes, see the changelog ↗ in GitHub.

- #5666: cloudflare_ruleset example lists id which is a read-only field ↗

- #5578: cloudflare_logpush_job plan always suggests changes ↗

- #5552: 5.4.0: Since provider update, existing cloudflare_list_item would be recreated "created" state ↗

- #5670: cloudflare_zone_subscription: uses wrong ID field in Read/Update ↗

- #5548: cloudflare_api_token resource always shows changes (drift) ↗

- #5634: cloudflare_workers_script with bindings fails to be destroyed ↗

- #5616: cloudflare_workers_script Unable to deploy worker assets ↗

- #5331: cloudflare_workers_script 500 internal server error when uploading python ↗

- #5701: cloudflare_workers_script migrations for Durable Objects not recorded in tfstate; cannot be upgraded between versions ↗

- #5704: cloudflare_workers_script randomly fails to deploy when changing compatibility_date ↗

- #5439: cloudflare_workers_script (v5.2.0) ignoring content and bindings properties ↗

- #5522: cloudflare_workers_script always detects changes after apply ↗

- #5693: cloudflare_zero_trust_access_identity_provider gives recurring change on OTP pin login ↗

- #5567: cloudflare_r2_custom_domain doesn't roundtrip jurisdiction properly ↗

- #5179: Bad request with when creating cloudflare_api_shield_schema resource ↗

If you have an unaddressed issue with the provider, we encourage you to check the open issues ↗ and open a new one if one does not already exist for what you are experiencing.

We suggest holding off on migration to v5 while we work on stabilization. This will help you avoid any blocking issues while the Terraform resources are actively being stabilized.

If you'd like more information on migrating from v4 to v5, please make use of the migration guide ↗. We have provided automated migration scripts using Grit which simplify the transition, although these do not support implementations which use Terraform modules, so customers making use of modules need to migrate manually. Please make use of `terraform plan` to test your changes before applying, and let us know if you encounter any additional issues by reporting to our GitHub repository ↗.

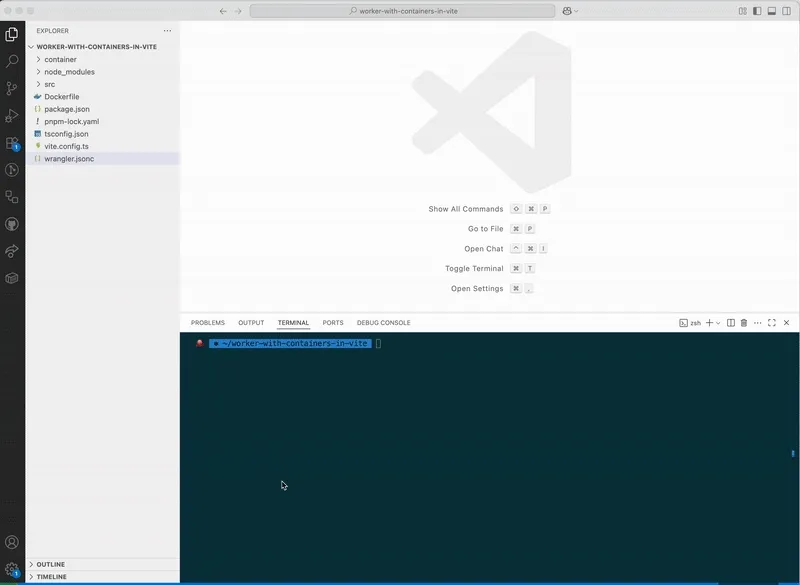

You can now configure and run Containers alongside your Worker during local development when using the Cloudflare Vite plugin. Previously, you could only develop locally when using Wrangler as your local development server.

You can simply configure your Worker and your Container(s) in your Wrangler configuration file:

```jsonc
{
  "name": "container-starter",
  "main": "src/index.js",
  "containers": [
    {
      "class_name": "MyContainer",
      "image": "./Dockerfile",
      "instances": 5
    }
  ],
  "durable_objects": {
    "bindings": [
      {
        "class_name": "MyContainer",
        "name": "MY_CONTAINER"
      }
    ]
  },
  "migrations": [
    {
      "new_sqlite_classes": ["MyContainer"],
      "tag": "v1"
    }
  ],
}
```

```toml
name = "container-starter"
main = "src/index.js"

[[containers]]
class_name = "MyContainer"
image = "./Dockerfile"
instances = 5

[[durable_objects.bindings]]
class_name = "MyContainer"
name = "MY_CONTAINER"

[[migrations]]
new_sqlite_classes = [ "MyContainer" ]
tag = "v1"
```

Once your Worker and Containers are configured, you can access the Container instances from your Worker code:

```ts
import { Container, getContainer } from "@cloudflare/containers";

export class MyContainer extends Container {
  defaultPort = 4000; // Port the container is listening on
  sleepAfter = "10m"; // Stop the instance if requests not sent for 10 minutes
}

// The fetch handler must be exported as part of the Worker's default export
export default {
  async fetch(request, env) {
    const { "session-id": sessionId } = await request.json();
    // Get the container instance for the given session ID
    const containerInstance = getContainer(env.MY_CONTAINER, sessionId);
    // Pass the request to the container instance on its default port
    return containerInstance.fetch(request);
  },
};
```

To develop your Worker locally, start a local dev server by running the following command in your terminal:

```sh
vite dev
```

Learn more about Cloudflare Containers ↗ or the Cloudflare Vite plugin ↗ in our developer docs.

Any template which uses Worker environment variables, secrets, or Secrets Store secrets can now be deployed using a Deploy to Cloudflare button.

Define environment variables and secrets store bindings in your Wrangler configuration file as normal:

```jsonc
{
  "name": "my-worker",
  "main": "./src/index.ts",
  // Set this to today's date
  "compatibility_date": "2026-03-11",
  "vars": {
    "API_HOST": "https://example.com",
  },
  "secrets_store_secrets": [
    {
      "binding": "API_KEY",
      "store_id": "demo",
      "secret_name": "api-key"
    }
  ]
}
```

```toml
name = "my-worker"
main = "./src/index.ts"
# Set this to today's date
compatibility_date = "2026-03-11"

[vars]
API_HOST = "https://example.com"

[[secrets_store_secrets]]
binding = "API_KEY"
store_id = "demo"
secret_name = "api-key"
```

Add secrets to a `.dev.vars.example` or `.env.example` file:

`.dev.vars.example`:

```sh
COOKIE_SIGNING_KEY=my-secret # comment
```

And optionally, you can add a description for these bindings in your template's `package.json` to help users understand how to configure each value:

`package.json`:

```json
{
  "name": "my-worker",
  "private": true,
  "cloudflare": {
    "bindings": {
      "API_KEY": {
        "description": "Select your company's API key for connecting to the example service."
      },
      "COOKIE_SIGNING_KEY": {
        "description": "Generate a random string using `openssl rand -hex 32`."
      }
    }
  }
}
```

These secrets and environment variables will be presented to users in the dashboard as they deploy this template, allowing them to configure each value. Additional information about creating templates and Deploy to Cloudflare buttons can be found in our documentation.

We’ve launched pricing for Browser Rendering, including a free tier and a pay-as-you-go model that scales with your needs. Starting August 20, 2025, Cloudflare will begin billing for Browser Rendering.

There are two ways to use Browser Rendering. Depending on the method you use, here’s how billing will work:

- REST API: Charged for Duration only ($/browser hour)

- Workers Bindings: Charged for both Duration and Concurrency ($/browser hour and # of concurrent browsers)

Included usage and pricing by plan

| Plan | Included duration | Included concurrency | Price (beyond included) |
| --- | --- | --- | --- |
| Workers Free | 10 minutes per day | 3 concurrent browsers | N/A |
| Workers Paid | 10 hours per month | 10 concurrent browsers (averaged monthly) | 1. REST API: $0.09 per additional browser hour. 2. Workers Bindings: $0.09 per additional browser hour and $2.00 per additional concurrent browser |

What you need to know:

- Workers Free Plan: 10 minutes of browser usage per day with 3 concurrent browsers at no charge.

- Workers Paid Plan: 10 hours of browser usage per month with 10 concurrent browsers (averaged monthly) at no charge. Additional usage is charged as shown above.
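As a rough illustration of the Workers Paid numbers above, the following sketch estimates monthly overage. The function name is hypothetical, amounts are kept in cents to avoid floating-point drift, and the sketch assumes whole browser hours and a whole-number monthly average for concurrency. How partial hours are rounded is not stated in the source.

```typescript
const INCLUDED_HOURS = 10;        // browser hours included per month
const INCLUDED_CONCURRENCY = 10;  // concurrent browsers included (monthly average)
const CENTS_PER_EXTRA_HOUR = 9;   // $0.09 per additional browser hour
const CENTS_PER_EXTRA_BROWSER = 200; // $2.00 per additional concurrent browser

// Hypothetical estimator for the Workers Paid plan.
// REST API usage is billed for duration only; Workers Bindings usage is
// billed for both duration and concurrency.
function estimateMonthlyOverageCents(
  method: "rest" | "binding",
  browserHours: number,
  averageConcurrentBrowsers: number,
): number {
  const extraHours = Math.max(0, browserHours - INCLUDED_HOURS);
  let cents = extraHours * CENTS_PER_EXTRA_HOUR;
  if (method === "binding") {
    const extraBrowsers = Math.max(0, averageConcurrentBrowsers - INCLUDED_CONCURRENCY);
    cents += extraBrowsers * CENTS_PER_EXTRA_BROWSER;
  }
  return cents;
}

// 50 browser hours via the REST API: 40 extra hours at $0.09
console.log(estimateMonthlyOverageCents("rest", 50, 0) / 100); // 3.6

// 50 browser hours and 15 average concurrent browsers via Workers Bindings
console.log(estimateMonthlyOverageCents("binding", 50, 15) / 100); // 13.6
```

Usage that stays within the included 10 hours and 10 concurrent browsers yields zero overage in this model.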

You can monitor usage via the Cloudflare dashboard ↗. Go to Compute (Workers) > Browser Rendering.

If you've been using Browser Rendering and do not wish to incur charges, ensure your usage stays within your plan's included usage. To estimate costs, take a look at these example pricing scenarios.

You can now run your Browser Rendering locally using `npx wrangler dev`, which spins up a browser directly on your machine before deploying to Cloudflare's global network. By running tests locally, you can quickly develop, debug, and test changes without needing to deploy or worry about usage costs.

Get started with this example guide that shows how to use Cloudflare's fork of Puppeteer (you can also use Playwright) to take screenshots of webpages and store the results in Workers KV.

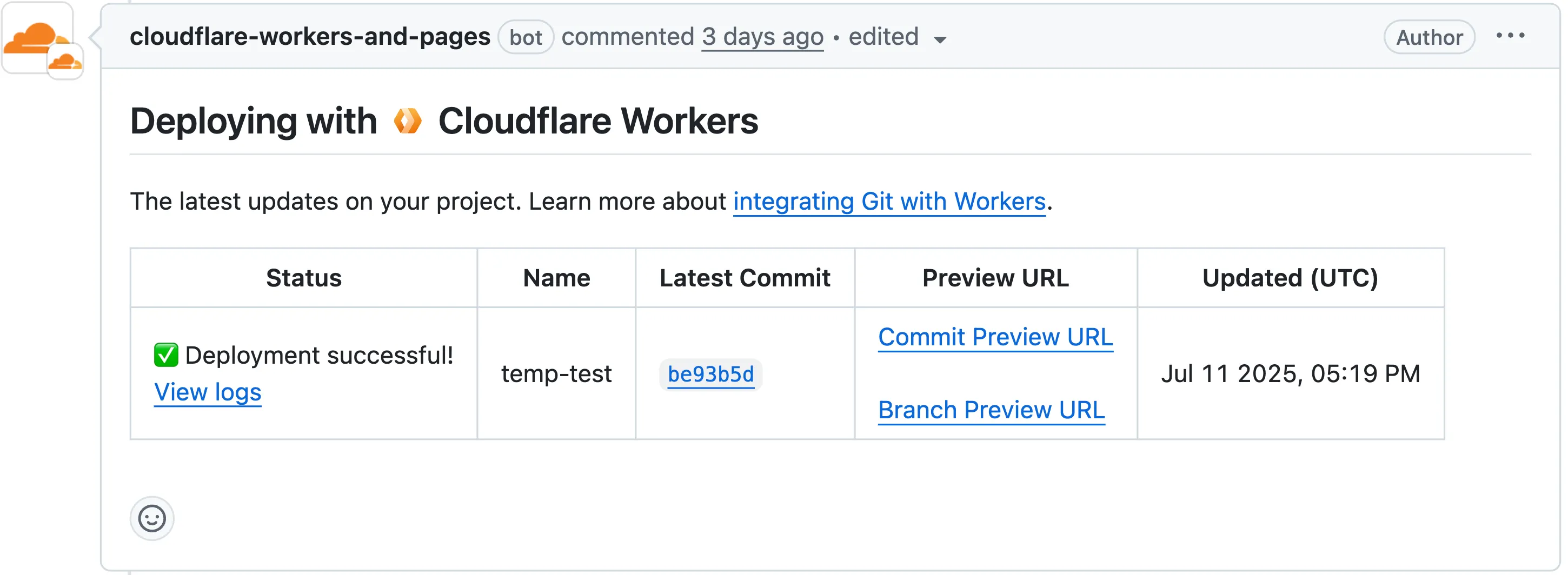

Now, when you connect your Cloudflare Worker to a git repository on GitHub or GitLab, each branch of your repository has its own stable preview URL that you can use to preview code changes before merging the pull request and deploying to production.

This works the same way as Cloudflare Pages: every time you create a pull request, you'll automatically get a shareable preview link where you can see your changes running, without affecting production. These preview URLs are named after your branch and are posted as a comment on each pull request. The URL stays the same with every commit and always points to the latest version of that branch.

Each comment includes two preview URLs as shown above:

- Commit Preview URL: Unique to the specific version/commit (e.g., `<version-prefix>-<worker-name>.<subdomain>.workers.dev`)

- Branch Preview URL: A stable alias based on the branch name (e.g., `<branch-name>-<worker-name>.<subdomain>.workers.dev`)

When you create a pull request:

- A preview alias is automatically created based on the Git branch name (e.g., `<branch-name>` becomes `<branch-name>-<worker-name>.<subdomain>.workers.dev`)

- No configuration is needed; the alias is generated for you

- The link stays the same even as you add commits to the same branch

- Preview URLs are posted directly to your pull request as comments (just like they are in Cloudflare Pages)
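To make the two URL shapes concrete, here is a minimal sketch that assembles them from their parts. The `previewUrls` helper and the sample values are hypothetical. It also assumes the branch name is already DNS-safe, because any rewriting of characters such as `/` is not covered here.

```typescript
// Hypothetical helper: fills in the two URL patterns described above.
interface PreviewUrlParts {
  versionPrefix: string; // identifies one specific version/commit
  branchName: string;    // assumed to be DNS-safe already
  workerName: string;
  subdomain: string;     // your workers.dev subdomain
}

function previewUrls(parts: PreviewUrlParts): { commit: string; branch: string } {
  const host = `${parts.workerName}.${parts.subdomain}.workers.dev`;
  return {
    // Changes with every uploaded version.
    commit: `https://${parts.versionPrefix}-${host}`,
    // Stays the same for the lifetime of the branch.
    branch: `https://${parts.branchName}-${host}`,
  };
}

const urls = previewUrls({
  versionPrefix: "a1b2c3d4",
  branchName: "fix-login",
  workerName: "my-worker",
  subdomain: "acme",
});
console.log(urls.commit);
console.log(urls.branch);
```

Only the commit URL depends on the version prefix, which is why the branch URL can be shared once and reused across commits.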

You can also assign a custom preview alias using the Wrangler CLI by passing the `--preview-alias` flag when uploading a version of your Worker:

    wrangler versions upload --preview-alias staging

- Only available on the workers.dev subdomain (custom domains not yet supported)

- Requires Wrangler v4.21.0+

- Preview URLs are not generated for Workers that use Durable Objects

- Not yet supported for Workers for Platforms


We now support `audio` mode! Use this feature to extract audio from a source video, outputting an M4A file to use in downstream workflows like AI inference, content moderation, or transcription.

For example:

    https://example.com/cdn-cgi/media/<OPTIONS>/<SOURCE-VIDEO>

    https://example.com/cdn-cgi/media/mode=audio,time=3s,duration=60s/<input video with diction>

For more information, learn about Transforming Videos.
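The URL pattern above can be assembled programmatically. The sketch below is a hypothetical helper, `buildAudioUrl`, that covers only the three options shown in the example (`mode`, `time`, `duration`). The source video URL is a placeholder.

```typescript
// Hypothetical option bag; only the options shown in the example URL are covered.
interface AudioOptions {
  time?: string;     // start offset, e.g. "3s"
  duration?: string; // length of audio to extract, e.g. "60s"
}

function buildAudioUrl(
  zoneOrigin: string,
  sourceVideo: string,
  opts: AudioOptions = {},
): string {
  // Options are comma-separated key=value pairs, starting with mode=audio.
  const options = ["mode=audio"];
  if (opts.time) options.push(`time=${opts.time}`);
  if (opts.duration) options.push(`duration=${opts.duration}`);
  return `${zoneOrigin}/cdn-cgi/media/${options.join(",")}/${sourceVideo}`;
}

// Placeholder source video; substitute a video your zone is allowed to transform.
console.log(
  buildAudioUrl("https://example.com", "https://example.com/videos/talk.mp4", {
    time: "3s",
    duration: "60s",
  }),
);
```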

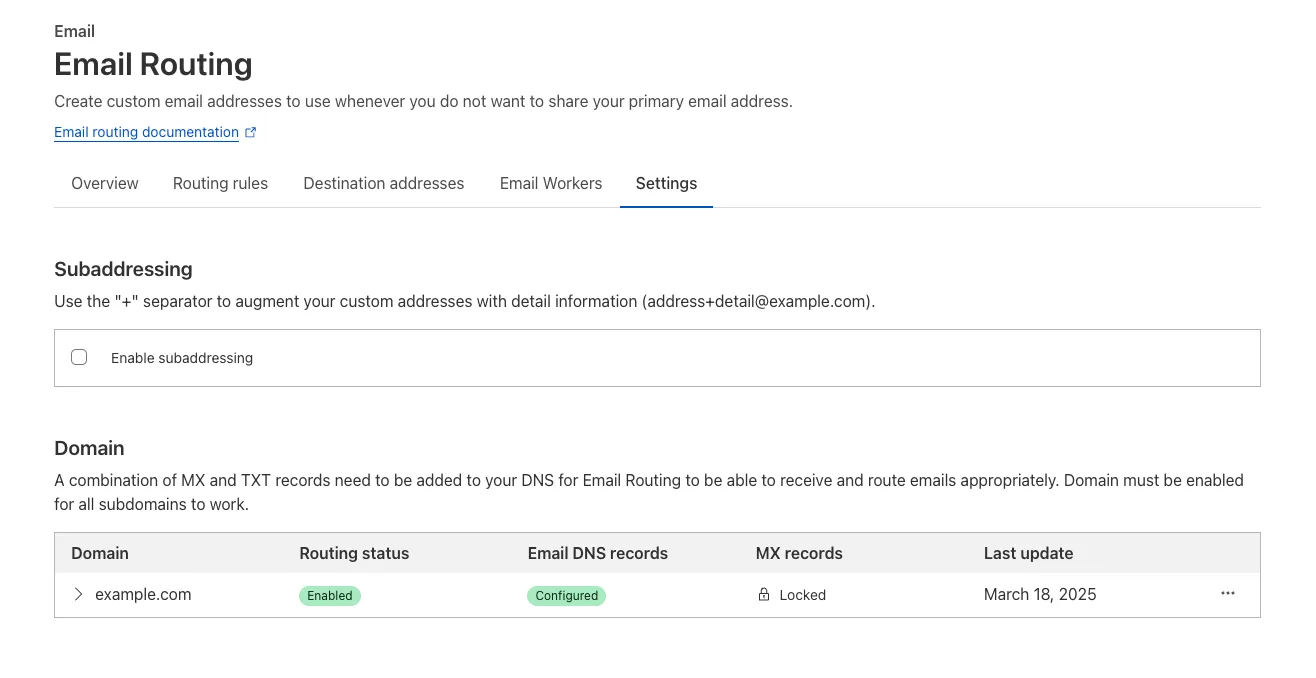

Subaddressing, as defined in RFC 5233 ↗, also known as plus addressing, is now supported in Email Routing. This enables using the "+" separator to augment your custom addresses with arbitrary detail information.

Now you can send an email to `user+detail@example.com` and it will be captured by the `user@example.com` custom address. The `+detail` part is ignored by Email Routing, but it can be captured later in the processing chain: in the logs, an Email Worker, or an Agent application ↗.

Customers can use this feature to dynamically add context to their emails, such as tracking the source of an email or categorizing emails without needing to create multiple custom addresses.

Check our Developer Docs to learn how to enable subaddressing in Email Routing.
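If you want to use the detail part downstream, for example in an Email Worker, you need to split it out of the recipient address yourself. The following is a minimal sketch of that split. The `splitSubaddress` helper is hypothetical and simply cuts the local part at the first `+`. It does not reflect how Email Routing matches addresses internally.

```typescript
// Hypothetical helper: separates the "+detail" part from a recipient address.
function splitSubaddress(address: string): { base: string; detail: string | null } {
  const at = address.lastIndexOf("@");
  if (at < 1 || at === address.length - 1) {
    throw new Error(`Not a valid address: ${address}`);
  }
  const local = address.slice(0, at);
  const domain = address.slice(at + 1);

  // Split the local part at the first "+" separator, if there is one.
  const plus = local.indexOf("+");
  if (plus === -1) {
    return { base: address, detail: null };
  }
  return {
    base: `${local.slice(0, plus)}@${domain}`,
    detail: local.slice(plus + 1),
  };
}

const parsed = splitSubaddress("user+detail@example.com");
console.log(parsed.base, parsed.detail);
```

The `detail` value can then drive routing or tagging logic, such as recording which signup form an address was given to.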

Vite 7 ↗ is now supported in the Cloudflare Vite plugin. See the Vite changelog ↗ for a list of changes.

Note that the minimum Node.js versions supported by Vite 7 are 20.19 and 22.12. We continue to support Vite 6 so you do not need to immediately upgrade.
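A quick way to check whether a given Node.js version meets these minimums is sketched below. The `supportsVite7` helper is hypothetical. Treating Node.js 21.x as unsupported and 23 or later as supported is an assumption drawn from the two stated minimums, so check Vite's own documentation for the authoritative range.

```typescript
// Hypothetical helper: checks a Node.js version string against the Vite 7 minimums.
function supportsVite7(nodeVersion: string): boolean {
  // Accepts "v22.12.0" or "22.12.0"; only major and minor matter here.
  const match = /^v?(\d+)\.(\d+)/.exec(nodeVersion);
  if (!match) return false;

  const major = Number(match[1]);
  const minor = Number(match[2]);

  if (major === 20) return minor >= 19; // 20.19 or later on the 20.x line
  if (major === 22) return minor >= 12; // 22.12 or later on the 22.x line
  return major > 22;                    // assumption: newer majors are fine, 21.x is not
}

console.log(supportsVite7("v20.18.1"));
console.log(supportsVite7("v22.12.0"));
```

In a real project you would pass `process.version` and fall back to Vite 6 when the check fails.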

Your real-time applications running over Cloudflare Tunnel are now faster and more reliable. We've completely re-architected the way `cloudflared` proxies UDP traffic in order to isolate it from other traffic, ensuring latency-sensitive applications like private DNS are no longer slowed down by heavy TCP traffic (like file transfers) on the same Tunnel.

This is a foundational improvement to Cloudflare Tunnel, delivered automatically to all customers. There are no settings to configure: your UDP traffic is already flowing faster and more reliably.

What’s new:

- Faster UDP performance: We've significantly reduced the latency for establishing new UDP sessions, making applications like private DNS much more responsive.

- Greater reliability for mixed traffic: UDP packets are no longer affected by heavy TCP traffic, preventing timeouts and connection drops for your real-time services.

Learn more about running TCP or UDP applications and private networks through Cloudflare Tunnel.

Earlier this year, we announced the launch of the new Terraform v5 Provider. We are aware of the high number of issues ↗ reported by the Cloudflare community related to the v5 release, with 13.5% of resources impacted. We have committed to releasing improvements on a two-week cadence to ensure its stability and reliability, including the v5.7 release.

Thank you for continuing to raise issues and please keep an eye on this changelog for more information about upcoming releases.

- Addressed permanent diff bug on Cloudflare Tunnel config

- State is now saved correctly for Zero Trust Access applications

- Exact match is now working as expected within `data.cloudflare_zero_trust_access_applications`

- `cloudflare_zero_trust_access_policy` now supports OIDC claims & diff issues resolved

- Self hosted applications with private IPs no longer require a public domain for `cloudflare_zero_trust_access_application`.

- New resource: `cloudflare_zero_trust_tunnel_warp_connector`

- Other bug fixes

For a more detailed look at all of the changes, see the changelog ↗ in GitHub.

- #5563: cloudflare_logpull_retention is missing import ↗

- #5608: cloudflare_zero_trust_access_policy in 5.5.0 provider gives error upon apply unexpected new value: .app_count: was cty.NumberIntVal(0), but now cty.NumberIntVal(1) ↗

- #5612: data.cloudflare_zero_trust_access_applications does not exact match ↗

- #5532: cloudflare_zero_trust_access_identity_provider detects changes on every plan ↗

- #5662: cloudflare_zero_trust_access_policy does not support OIDC claims ↗

- #5565: Running Terraform with the cloudflare_zero_trust_access_policy resource results in updates on every apply, even when no changes are made - breaks idempotency ↗

- #5529: cloudflare_zero_trust_access_application: self hosted applications with private ips require public domain ↗

If you have an unaddressed issue with the provider, we encourage you to check the open issues ↗ and open a new one if one does not already exist for what you are experiencing.

We suggest holding off on migrating to v5 while we work on stabilizing the v5 provider. This will ensure Cloudflare can work ahead and avoid any blocking issues.

If you'd like more information on migrating from v4 to v5, please make use of the migration guide ↗. We have provided automated migration scripts using Grit which simplify the transition, although these do not support implementations which use Terraform modules, so customers making use of modules need to migrate manually. Please make use of `terraform plan` to test your changes before applying, and let us know if you encounter any additional issues by reporting to our GitHub repository ↗.

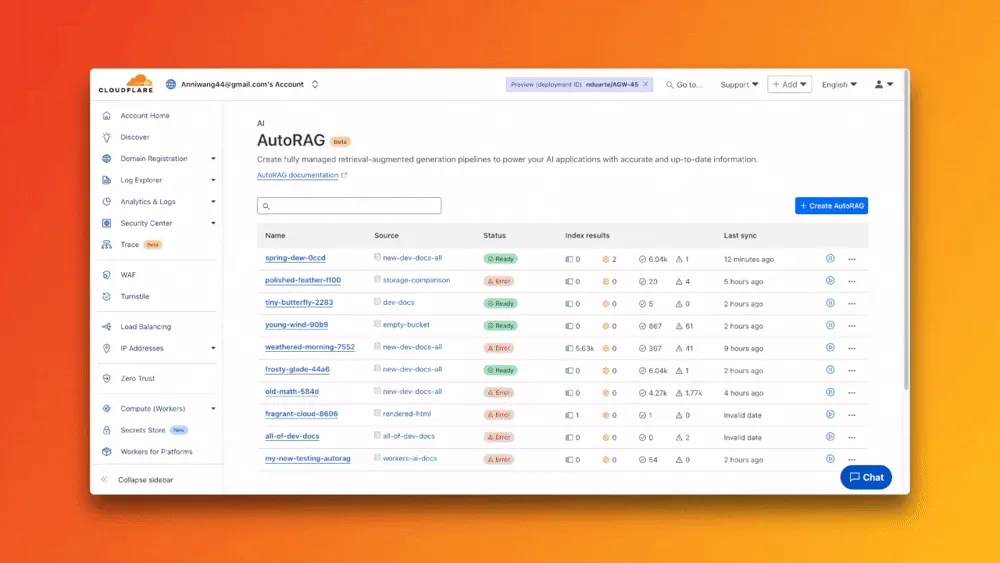

You can now expect 3-5× faster indexing in AutoRAG, and with it, a brand new Jobs view to help you monitor indexing progress.

With each AutoRAG, indexing jobs are automatically triggered to sync your data source (i.e. R2 bucket) with your Vectorize index, ensuring new or updated files are reflected in your query results. You can also trigger jobs manually via the Sync API or by clicking “Sync index” in the dashboard.

With the new jobs observability, you can now:

- View the status, job ID, source, start time, duration and last sync time for each indexing job

- Inspect real-time logs of job events (e.g. `Starting indexing data source...`)

- See a history of past indexing jobs under the Jobs tab of your AutoRAG

This makes it easier to understand what’s happening behind the scenes.

Coming soon: We’re adding APIs to programmatically check indexing status, making it even easier to integrate AutoRAG into your workflows.

Try it out today on the Cloudflare dashboard ↗.