SSH through Wrangler is now enabled by default for Containers. Previously, you had to set `ssh.enabled` to `true` in your Container configuration before you could connect.

This change does not expose any publicly accessible ports on your Container. The SSH service is reachable only through `wrangler containers ssh`, which authenticates against your Cloudflare account. You also need to add an `ssh-ed25519` public key to `authorized_keys` before anyone can connect, so enabling SSH alone does not grant access.

To connect, add a public key to your Container configuration and run `wrangler containers ssh <INSTANCE_ID>`:

```jsonc
{
  "containers": [
    {
      "authorized_keys": [
        {
          "name": "<NAME>",
          "public_key": "<YOUR_PUBLIC_KEY_HERE>",
        },
      ],
    },
  ],
}
```

```toml
[[containers]]

[[containers.authorized_keys]]
name = "<NAME>"
public_key = "<YOUR_PUBLIC_KEY_HERE>"
```

To disable SSH, set `ssh.enabled` to `false` in your Container configuration:

```jsonc
{
  "containers": [
    {
      "ssh": {
        "enabled": false,
      },
    },
  ],
}
```

```toml
[[containers]]

[containers.ssh]
enabled = false
```

For more information, refer to the SSH documentation.

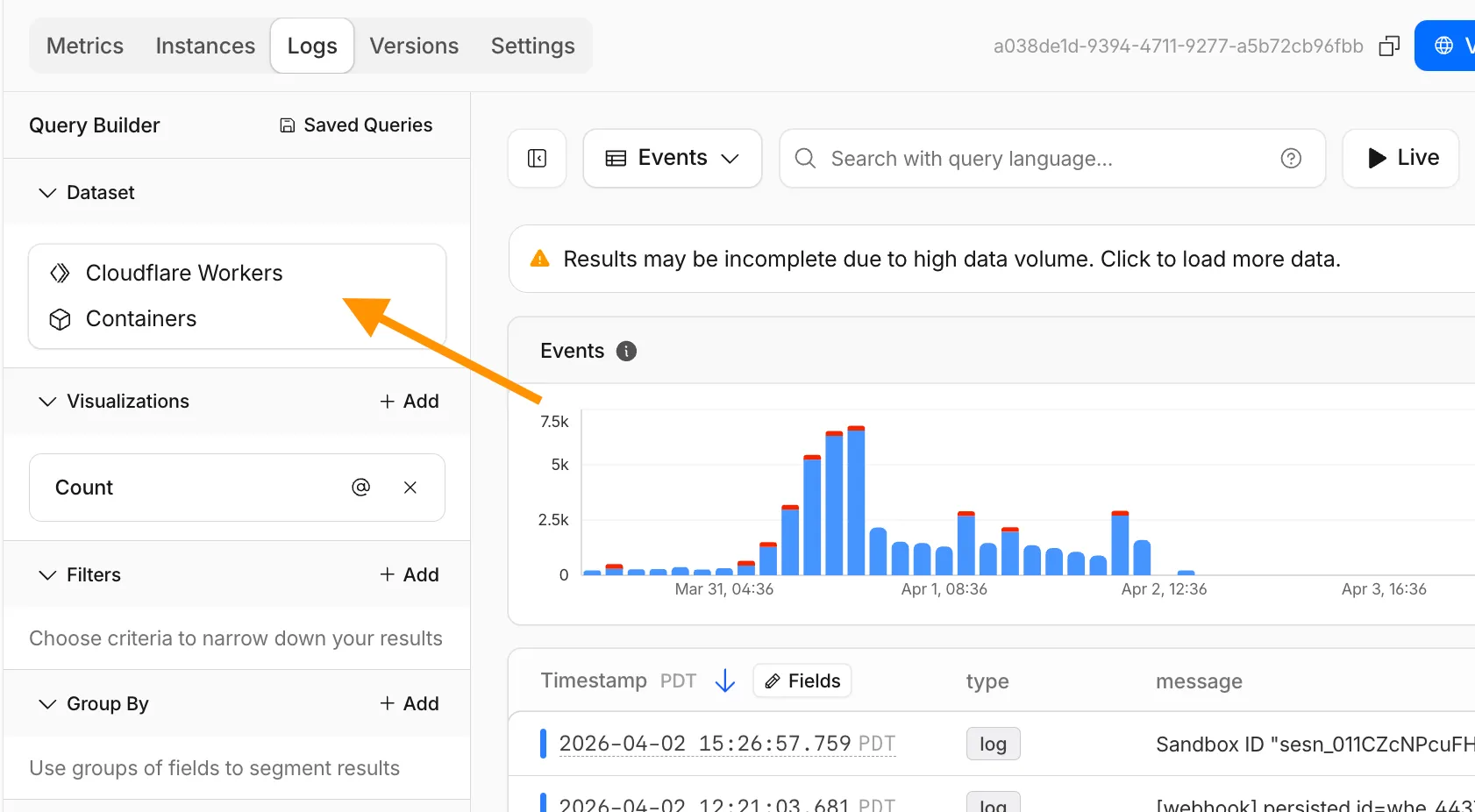

The Container logs page now displays related Worker and Durable Object logs alongside container logs. This co-locates all relevant log events for a container application in one place, making it easier to trace requests and debug issues.

You can filter to a single source when you need to isolate Container, Worker, or Durable Object output.

For information on configuring container logging, refer to How do Container logs work?.

Cloudflare Containers and Sandboxes are now generally available.

Containers let you run more workloads on the Workers platform, including resource-intensive applications, different languages, and CLI tools that need full Linux environments.

Since the initial launch of Containers, there have been significant improvements to Containers' performance, stability, and feature set. Some highlights include:

- Higher limits allow you to run thousands of containers concurrently.

- Active-CPU pricing means that you only pay for used CPU cycles.

- Easy connections to Workers and other bindings via hostnames help you extend your Containers with additional functionality.

- Docker Hub support makes it easy to use your existing images and registries.

- SSH support helps you access and debug issues in live containers.

The Sandbox SDK provides isolated environments for running untrusted code securely, with a simple TypeScript API for executing commands, managing files, and exposing services. This makes it easier to secure and manage your agents at scale. Some additions since launch include:

- Live preview URLs so agents can run long-lived services and verify in-flight changes.

- Persistent code interpreters for Python, JavaScript, and TypeScript, with rich structured outputs.

- Interactive PTY terminals for real browser-based terminal access with multiple isolated shells per sandbox.

- Backup and restore APIs to snapshot a workspace and quickly restore an agent's coding session without repeating expensive setup steps.

- Real-time filesystem watching so apps and agents can react immediately to file changes inside a sandbox.

For more information, refer to Containers and Sandbox SDK documentation.

Outbound Workers for Sandboxes and Containers now support zero-trust credential injection, TLS interception, allow/deny lists, and dynamic per-instance egress policies. These features give platforms running agentic workloads full control over what leaves the sandbox, without exposing secrets to untrusted workloads, like user-generated code or coding agents.

Because outbound handlers run in the Workers runtime, outside the sandbox, they can hold secrets the sandbox never sees. A sandboxed workload can make a plain request, and credentials are transparently attached before a request is forwarded upstream.

For instance, you could run an agent in a sandbox and ensure that any requests it makes to GitHub are authenticated, while the agent itself never has access to the credentials:

```typescript
export class MySandbox extends Sandbox {}

MySandbox.outboundByHost = {
  "github.com": (request: Request, env: Env, ctx: OutboundHandlerContext) => {
    const requestWithAuth = new Request(request);
    requestWithAuth.headers.set("x-auth-token", env.SECRET);
    return fetch(requestWithAuth);
  },
};
```

You can easily inject unique credentials for different instances by using `ctx.containerId`:

```typescript
MySandbox.outboundByHost = {
  "my-internal-vcs.dev": async (
    request: Request,
    env: Env,
    ctx: OutboundHandlerContext,
  ) => {
    const authKey = await env.KEYS.get(ctx.containerId);
    const requestWithAuth = new Request(request);
    requestWithAuth.headers.set("x-auth-token", authKey);
    return fetch(requestWithAuth);
  },
};
```

No token is ever passed into the sandbox. You can rotate secrets in the Worker environment and every request will pick them up immediately.

Outbound Workers now intercept HTTPS traffic. A unique ephemeral certificate authority (CA) and private key are created for each sandbox instance. The CA is placed into the sandbox and trusted by default. The ephemeral private key never leaves the container runtime sidecar process and is never shared across instances.

With TLS interception active, outbound Workers can act as a transparent proxy for both HTTP and HTTPS traffic.

Easily filter outbound traffic with `allowedHosts` and `deniedHosts`. When `allowedHosts` is set, it becomes a deny-by-default allowlist. Both properties support glob patterns.

```typescript
export class MySandbox extends Sandbox {
  allowedHosts = ["github.com", "npmjs.org"];
}
```

Define named outbound handlers then apply or remove them at runtime using `setOutboundHandler()` or `setOutboundByHost()`. This lets you change egress policy for a running sandbox without restarting it.

```typescript
export class MySandbox extends Sandbox {}

MySandbox.outboundHandlers = {
  allowHosts: async (req: Request, env: Env, ctx: OutboundHandlerContext) => {
    const url = new URL(req.url);
    if (ctx.params.allowedHostnames.includes(url.hostname)) {
      return fetch(req);
    }
    return new Response(null, { status: 403 });
  },
  noHttp: async () => {
    return new Response(null, { status: 403 });
  },
};
```

Apply handlers programmatically from your Worker:

```typescript
const sandbox = getSandbox(env.Sandbox, userId);

// Open network for setup
await sandbox.setOutboundHandler("allowHosts", {
  allowedHostnames: ["github.com", "npmjs.org"],
});
await sandbox.exec("npm install");

// Lock down after setup
await sandbox.setOutboundHandler("noHttp");
```

Handlers accept `params`, so you can customize behavior per instance without defining separate handler functions.

Upgrade to `@cloudflare/containers@0.3.0` or `@cloudflare/sandbox@0.8.9` to use these features.

For more details, refer to Sandbox outbound traffic and Container outbound traffic.
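To make the deny-by-default behavior of `allowedHosts` concrete, here is a minimal sketch of how such a check could be evaluated. It is not the SDK's implementation: the helper names (`globToRegExp`, `matchesAnyHost`, `isEgressAllowed`) are hypothetical, and it assumes that `*` matches any run of characters, that matching is case-insensitive, and that a `deniedHosts` match takes precedence over `allowedHosts`.

```typescript
// Illustrative only: hypothetical helpers, not part of @cloudflare/sandbox.

/** Convert a simple glob such as "*.github.com" into an anchored RegExp. */
function globToRegExp(glob: string): RegExp {
  // Escape regex metacharacters (everything except "*"), then expand "*".
  const escaped = glob.replace(/[.+?^${}()|[\]\\]/g, "\\$&");
  return new RegExp("^" + escaped.replace(/\*/g, ".*") + "$", "i");
}

/** True if the hostname matches at least one glob pattern. */
function matchesAnyHost(hostname: string, patterns: string[]): boolean {
  return patterns.some((pattern) => globToRegExp(pattern).test(hostname));
}

/**
 * Decide whether a request to `hostname` may leave the sandbox.
 * Assumption: deny rules win; an allowlist, once set, denies everything else.
 */
function isEgressAllowed(
  hostname: string,
  allowedHosts?: string[],
  deniedHosts: string[] = [],
): boolean {
  if (matchesAnyHost(hostname, deniedHosts)) return false;
  if (allowedHosts === undefined) return true; // no allowlist configured
  return matchesAnyHost(hostname, allowedHosts); // deny by default
}
```

Under these assumptions, `isEgressAllowed("example.com", ["github.com", "npmjs.org"])` is rejected because an allowlist is set and the host is not on it, while `isEgressAllowed("api.github.com", ["*.github.com"])` passes through the glob.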

You can now specify placement constraints to control where your Containers run.

| Constraint | Values | Use case |
| --- | --- | --- |
| `regions` | `ENAM`, `WNAM`, `EEUR`, `WEUR` | Geographic placement |
| `jurisdiction` | `eu`, `fedramp` | Compliance boundaries |

Use `regions` to limit placement to specific geographic areas. Use `jurisdiction` to restrict containers to compliance boundaries: `eu` maps to European regions (EEUR, WEUR) and `fedramp` maps to North American regions (ENAM, WNAM).

Refer to Containers placement for more details.
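The jurisdiction-to-region mapping described above can be written down as a small lookup. The sketch below is illustrative only: `candidateRegions` is a hypothetical helper, and treating a combination of both constraints as an intersection is an assumption, not documented behavior.

```typescript
// Illustrative only: the mapping comes from the text above; the
// intersection rule for combined constraints is an assumption.
type PlacementRegion = "ENAM" | "WNAM" | "EEUR" | "WEUR";
type PlacementJurisdiction = "eu" | "fedramp";

const ALL_REGIONS: PlacementRegion[] = ["ENAM", "WNAM", "EEUR", "WEUR"];

const JURISDICTION_REGIONS: Record<PlacementJurisdiction, PlacementRegion[]> = {
  eu: ["EEUR", "WEUR"],
  fedramp: ["ENAM", "WNAM"],
};

/** Regions that satisfy both constraints (either may be omitted). */
function candidateRegions(
  regions?: PlacementRegion[],
  jurisdiction?: PlacementJurisdiction,
): PlacementRegion[] {
  const byRegion = regions ?? ALL_REGIONS;
  const byJurisdiction = jurisdiction
    ? JURISDICTION_REGIONS[jurisdiction]
    : ALL_REGIONS;
  return byRegion.filter((region) => byJurisdiction.indexOf(region) !== -1);
}
```

For example, `candidateRegions(undefined, "eu")` yields the two European regions, and `candidateRegions(["ENAM"], "eu")` yields an empty list, which signals a configuration that cannot be satisfied under this model.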

Containers and Sandboxes now support connecting directly to Workers over HTTP. This allows you to call Workers functions and bindings, like KV or R2, from within the container at specific hostnames.

Define an `outbound` handler to capture any HTTP request or use `outboundByHost` to capture requests to individual hostnames and IPs.

```javascript
export class MyApp extends Sandbox {}

MyApp.outbound = async (request, env, ctx) => {
  // you can run arbitrary functions defined in your Worker on any HTTP request
  return await someWorkersFunction(request.body);
};

MyApp.outboundByHost = {
  "my.worker": async (request, env, ctx) => {
    return await anotherFunction(request.body);
  },
};
```

In this example, requests from the container to `http://my.worker` will run the function defined within `outboundByHost`, and any other HTTP requests will run the `outbound` handler. These handlers run entirely inside the Workers runtime, outside of the container sandbox.

Each handler has access to `env`, so it can call any binding set in Wrangler config. Code inside the container makes a standard HTTP request to that hostname and the outbound Worker translates it into a binding call.

```javascript
export class MyApp extends Sandbox {}

MyApp.outboundByHost = {
  "my.kv": async (request, env, ctx) => {
    const key = new URL(request.url).pathname.slice(1);
    const value = await env.KV.get(key);
    return new Response(value ?? "", { status: value ? 200 : 404 });
  },
  "my.r2": async (request, env, ctx) => {
    const key = new URL(request.url).pathname.slice(1);
    const object = await env.BUCKET.get(key);
    return new Response(object?.body ?? "", { status: object ? 200 : 404 });
  },
};
```

Now, from inside the container sandbox, `curl http://my.kv/some-key` will access Workers KV and `curl http://my.r2/some-object` will access R2.

Use `ctx.containerId` to reference the container's automatically provisioned Durable Object.

```javascript
export class MyContainer extends Container {}

MyContainer.outboundByHost = {
  "get-state.do": async (request, env, ctx) => {
    const id = env.MY_CONTAINER.idFromString(ctx.containerId);
    const stub = env.MY_CONTAINER.get(id);
    return stub.getStateForKey(request.body);
  },
};
```

This provides an easy way to associate state with any container instance, and includes a built-in SQLite database.

Upgrade to `@cloudflare/containers` version 0.2.0 or later, or `@cloudflare/sandbox` version 0.8.0 or later to use outbound Workers.

Refer to Containers outbound traffic and Sandboxes outbound traffic for more details and examples.
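The routing rule described in this entry (a hostname-specific handler wins, and everything else falls through to the catch-all `outbound` handler) can be modelled in a few lines. This is a simplified sketch, not the library's dispatch code: handlers here are plain synchronous functions over a path string, and `dispatchOutbound` is a hypothetical name.

```typescript
// Illustrative only: a toy model of host-based dispatch with a catch-all.
type SketchHandler = (path: string) => string;

// Stand-ins for entries in `outboundByHost`.
const sketchByHost = new Map<string, SketchHandler>([
  ["my.kv", (path) => `KV lookup for key "${path.slice(1)}"`],
  ["my.r2", (path) => `R2 lookup for object "${path.slice(1)}"`],
]);

// Stand-in for the catch-all `outbound` handler.
const sketchCatchAll: SketchHandler = (path) =>
  `generic outbound handler for ${path}`;

/** Pick the host-specific handler if one exists, else the catch-all. */
function dispatchOutbound(hostname: string, path: string): string {
  const handler = sketchByHost.get(hostname) ?? sketchCatchAll;
  return handler(path);
}
```

The key is derived by dropping the leading slash from the path, mirroring the `pathname.slice(1)` step in the KV and R2 handlers above.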

Containers now support Docker Hub images. You can use a fully qualified Docker Hub image reference in your Wrangler configuration instead of first pushing the image to Cloudflare Registry.

```jsonc
{
  "containers": [
    {
      // Example: docker.io/cloudflare/sandbox:0.7.18
      "image": "docker.io/<NAMESPACE>/<REPOSITORY>:<TAG>",
    },
  ],
}
```

```toml
[[containers]]
image = "docker.io/<NAMESPACE>/<REPOSITORY>:<TAG>"
```

Containers also support private Docker Hub images. To configure credentials, refer to Use private Docker Hub images.

For more information, refer to Image management.

You can now SSH into running Container instances using Wrangler. This is useful for debugging, inspecting running processes, or executing one-off commands inside a Container.

To connect, enable `wrangler_ssh` in your Container configuration and add your `ssh-ed25519` public key to `authorized_keys`:

```jsonc
{
  "containers": [
    {
      "wrangler_ssh": {
        "enabled": true
      },
      "authorized_keys": [
        {
          "name": "<NAME>",
          "public_key": "<YOUR_PUBLIC_KEY_HERE>"
        }
      ]
    }
  ]
}
```

```toml
[[containers]]

[containers.wrangler_ssh]
enabled = true

[[containers.authorized_keys]]
name = "<NAME>"
public_key = "<YOUR_PUBLIC_KEY_HERE>"
```

Then connect with:

```sh
wrangler containers ssh <INSTANCE_ID>
```

You can also run a single command without opening an interactive shell:

```sh
wrangler containers ssh <INSTANCE_ID> -- ls -al
```

Use `wrangler containers instances <APPLICATION>` to find the instance ID for a running Container.

For more information, refer to the SSH documentation.

A new `wrangler containers instances` command lists all instances for a given Container application. This mirrors the instances view in the Cloudflare dashboard.

The command displays each instance's ID, name, state, location, version, and creation time:

```sh
wrangler containers instances <APPLICATION_ID>
```

Use the `--json` flag for machine-readable output, which is also the default format in non-interactive environments such as CI pipelines.

For the full list of options, refer to the `containers instances` command reference.

You can now run more Containers concurrently with significantly higher limits on memory, vCPU, and disk.

| Limit | Previous Limit | New Limit |
| --- | --- | --- |
| Memory for concurrent live Container instances | 400 GiB | 6 TiB |
| vCPU for concurrent live Container instances | 100 | 1,500 |
| Disk for concurrent live Container instances | 2 TB | 30 TB |

This 15x increase enables larger-scale workloads on Containers. You can now run 15,000 instances of the `lite` instance type, 6,000 instances of `basic`, over 1,500 instances of `standard-1`, or over 1,000 instances of `standard-2` concurrently.

Refer to Limits for more details on the available instance types and limits.
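The instance counts quoted above follow from dividing each account-wide limit by the per-instance allocation and taking the smallest result. The sketch below reproduces that arithmetic. It assumes the per-instance figures from the instance types table elsewhere in this changelog, that 1 TiB = 1024 GiB and 1 TB = 1000 GB, and that the three limits are the only constraints; `maxConcurrentInstances` is a hypothetical helper.

```typescript
// Illustrative only: back-of-the-envelope capacity arithmetic.
interface InstanceSpec {
  vcpu: number;
  memoryMiB: number;
  diskGB: number;
}

// New account-wide limits for concurrent live instances.
const ACCOUNT_LIMITS = {
  vcpu: 1500,
  memoryMiB: 6 * 1024 * 1024, // 6 TiB expressed in MiB
  diskGB: 30 * 1000, // 30 TB expressed in GB
};

// Per-instance allocations, taken from the instance types table.
const INSTANCE_SPECS = {
  lite: { vcpu: 1 / 16, memoryMiB: 256, diskGB: 2 },
  basic: { vcpu: 1 / 4, memoryMiB: 1024, diskGB: 4 },
  "standard-1": { vcpu: 1 / 2, memoryMiB: 4096, diskGB: 8 },
  "standard-2": { vcpu: 1, memoryMiB: 6144, diskGB: 12 },
};

/** The scarcest resource determines how many instances fit. */
function maxConcurrentInstances(spec: InstanceSpec): number {
  return Math.floor(
    Math.min(
      ACCOUNT_LIMITS.vcpu / spec.vcpu,
      ACCOUNT_LIMITS.memoryMiB / spec.memoryMiB,
      ACCOUNT_LIMITS.diskGB / spec.diskGB,
    ),
  );
}

const liteCount = maxConcurrentInstances(INSTANCE_SPECS.lite); // 15000 (disk-bound)
const basicCount = maxConcurrentInstances(INSTANCE_SPECS.basic); // 6000 (vCPU-bound)
const standard1Count = maxConcurrentInstances(INSTANCE_SPECS["standard-1"]); // 1536 (memory-bound)
const standard2Count = maxConcurrentInstances(INSTANCE_SPECS["standard-2"]); // 1024 (memory-bound)
```

Under this model, different instance types run into different limits first: disk for `lite`, vCPU for `basic`, and memory for the `standard` types.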

Sandboxes now support `createBackup()` and `restoreBackup()` methods for creating and restoring point-in-time snapshots of directories.

This allows you to restore environments quickly. For instance, in order to develop in a sandbox, you may need to include a user's codebase and run a build step. Unfortunately `git clone` and `npm install` can take minutes, and you don't want to run these steps every time the user starts their sandbox.

Now, after the initial setup, you can just call `createBackup()`, then `restoreBackup()` the next time this environment is needed. This makes it practical to pick up exactly where a user left off, even after days of inactivity, without repeating expensive setup steps.

```typescript
const sandbox = getSandbox(env.Sandbox, "my-sandbox");

// Make non-trivial changes to the file system
await sandbox.gitCheckout(endUserRepo, { targetDir: "/workspace" });
await sandbox.exec("npm install", { cwd: "/workspace" });

// Create a point-in-time backup of the directory
const backup = await sandbox.createBackup({ dir: "/workspace" });

// Store the handle for later use
await env.KV.put(`backup:${userId}`, JSON.stringify(backup));

// ... in a future session...

// Restore instead of re-cloning and reinstalling
await sandbox.restoreBackup(backup);
```

Backups are stored in R2 and can take advantage of R2 object lifecycle rules to ensure they do not persist forever.

Key capabilities:

- Persist and reuse across sandbox sessions — Easily store backup handles in KV, D1, or Durable Object storage for use in subsequent sessions

- Usable across multiple instances — Fork a backup across many sandboxes for parallel work

- Named backups — Provide optional human-readable labels for easier management

- TTLs — Set time-to-live durations so backups are automatically removed from storage once they are no longer needed

To get started, refer to the backup and restore guide for setup instructions and usage patterns, or the Backups API reference for full method documentation.

Sandboxes and Containers now support running Docker for "Docker-in-Docker" setups. This is particularly useful when your end users or agents want to run a full sandboxed development environment.

This allows you to:

- Develop containerized applications with your Sandbox

- Run isolated test environments for images

- Build container images as part of CI/CD workflows

- Deploy arbitrary images supplied at runtime within a container

For Sandbox SDK users, see the Docker-in-Docker guide for instructions on combining Docker with the Sandbox SDK. For general Containers usage, see the Containers FAQ.

Custom instance types are now enabled for all Cloudflare Containers users. You can now set exact vCPU, memory, and disk amounts, rather than being limited to predefined instance types. Previously, only select Enterprise customers were able to customize their instance type.

To use a custom instance type, specify the `instance_type` property as an object with `vcpu`, `memory_mib`, and `disk_mb` fields in your Wrangler configuration:

```toml
[[containers]]
image = "./Dockerfile"
instance_type = { vcpu = 2, memory_mib = 6144, disk_mb = 12000 }
```

Individual limits for custom instance types are based on the `standard-4` instance type (4 vCPU, 12 GiB memory, 20 GB disk). You must allocate at least 1 vCPU for custom instance types. For workloads requiring less than 1 vCPU, use the predefined instance types like `lite` or `basic`.

See the limits documentation for the full list of constraints on custom instance types. See the getting started guide to deploy your first Container.
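As a rough illustration of the two constraints stated here (at least 1 vCPU, and no single dimension above the `standard-4` ceiling), a pre-deploy check might look like the sketch below. `validateCustomInstanceType` is a hypothetical helper, not a Wrangler API. It assumes that 12 GiB equals 12288 MiB and that `disk_mb` uses decimal megabytes (20 GB = 20000 MB), and it does not cover any further constraints listed in the limits documentation.

```typescript
// Illustrative only: checks just the constraints stated in this entry.
interface CustomInstanceType {
  vcpu: number;
  memory_mib: number;
  disk_mb: number;
}

// Ceiling derived from standard-4 (4 vCPU, 12 GiB memory, 20 GB disk).
const STANDARD_4_CEILING: CustomInstanceType = {
  vcpu: 4,
  memory_mib: 12 * 1024,
  disk_mb: 20 * 1000, // assumption: decimal megabytes
};

/** Returns a list of problems; an empty list means the shape looks valid. */
function validateCustomInstanceType(t: CustomInstanceType): string[] {
  const problems: string[] = [];
  if (t.vcpu < 1) {
    problems.push("vcpu must be at least 1; use lite or basic for less");
  }
  if (t.vcpu > STANDARD_4_CEILING.vcpu) {
    problems.push(`vcpu exceeds ${STANDARD_4_CEILING.vcpu}`);
  }
  if (t.memory_mib > STANDARD_4_CEILING.memory_mib) {
    problems.push(`memory_mib exceeds ${STANDARD_4_CEILING.memory_mib}`);
  }
  if (t.disk_mb > STANDARD_4_CEILING.disk_mb) {
    problems.push(`disk_mb exceeds ${STANDARD_4_CEILING.disk_mb}`);
  }
  return problems;
}
```

The configuration shown above (`vcpu = 2`, `memory_mib = 6144`, `disk_mb = 12000`) passes this check with no problems reported.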

Containers now support mounting R2 buckets as FUSE (Filesystem in Userspace) volumes, allowing applications to interact with R2 using standard filesystem operations.

Common use cases include:

- Bootstrapping containers with datasets, models, or dependencies for sandboxes and agent environments

- Persisting user configuration or application state without managing downloads

- Accessing large static files without bloating container images or downloading at startup

FUSE adapters like tigrisfs, s3fs, and gcsfuse can be installed in your container image and configured to mount buckets at startup.

```dockerfile
FROM alpine:3.20

# Install FUSE and dependencies
RUN apk update && \
    apk add --no-cache ca-certificates fuse curl bash

# Install tigrisfs
RUN ARCH=$(uname -m) && \
    if [ "$ARCH" = "x86_64" ]; then ARCH="amd64"; fi && \
    if [ "$ARCH" = "aarch64" ]; then ARCH="arm64"; fi && \
    VERSION=$(curl -s https://api.github.com/repos/tigrisdata/tigrisfs/releases/latest | grep -o '"tag_name": "[^"]*' | cut -d'"' -f4) && \
    curl -L "https://github.com/tigrisdata/tigrisfs/releases/download/${VERSION}/tigrisfs_${VERSION#v}_linux_${ARCH}.tar.gz" -o /tmp/tigrisfs.tar.gz && \
    tar -xzf /tmp/tigrisfs.tar.gz -C /usr/local/bin/ && \
    rm /tmp/tigrisfs.tar.gz && \
    chmod +x /usr/local/bin/tigrisfs

# Create startup script that mounts bucket
RUN printf '#!/bin/sh\n\
    set -e\n\
    mkdir -p /mnt/r2\n\
    R2_ENDPOINT="https://${R2_ACCOUNT_ID}.r2.cloudflarestorage.com"\n\
    /usr/local/bin/tigrisfs --endpoint "${R2_ENDPOINT}" -f "${BUCKET_NAME}" /mnt/r2 &\n\
    sleep 3\n\
    ls -lah /mnt/r2\n\
    ' > /startup.sh && chmod +x /startup.sh

CMD ["/startup.sh"]
```

See the Mount R2 buckets with FUSE example for a complete guide on mounting R2 buckets and/or other S3-compatible storage buckets within your containers.

Containers and Sandboxes pricing for CPU time is now based on active usage only, instead of provisioned resources.

This means that you now pay less for Containers and Sandboxes.

Imagine running the `standard-2` instance type for one hour, which can use up to 1 vCPU, but on average you use only 20% of your CPU capacity.

CPU-time is priced at $0.00002 per vCPU-second.

Previously, you would be charged for the CPU allocated to the instance multiplied by the time it was active, in this case 1 hour.

CPU cost would have been: $0.072 — 1 vCPU * 3600 seconds * $0.00002

Now, since you are only using 20% of your CPU capacity, your CPU cost is cut to 20% of the previous amount.

CPU cost is now: $0.0144 — 1 vCPU * 3600 seconds * $0.00002 * 20% utilization

This can significantly reduce costs for Containers and Sandboxes.
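The comparison above can be reproduced with a short calculation. This is only a sketch of the arithmetic in this entry: it models active usage as average utilization multiplied by provisioned vCPU-seconds, and the function names are hypothetical.

```typescript
// Illustrative only: mirrors the worked example in this entry.
const PRICE_PER_VCPU_SECOND = 0.00002; // USD, as quoted above

/** Previous model: pay for every provisioned vCPU-second. */
function provisionedCpuCost(vcpu: number, seconds: number): number {
  return vcpu * seconds * PRICE_PER_VCPU_SECOND;
}

/** Current model: pay only for the fraction of CPU actually used. */
function activeCpuCost(
  vcpu: number,
  seconds: number,
  utilization: number,
): number {
  return provisionedCpuCost(vcpu, seconds) * utilization;
}

// standard-2 (1 vCPU) for one hour at 20% average utilization.
const costBefore = provisionedCpuCost(1, 3600); // approximately 0.072
const costAfter = activeCpuCost(1, 3600, 0.2); // approximately 0.0144
```

Floating-point rounding means the results are approximate; round them (for example with `toFixed(4)`) before displaying.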

See the documentation to learn more about Containers, Sandboxes, and associated pricing.

New instance types provide up to 4 vCPU, 12 GiB of memory, and 20 GB of disk per container instance.

| Instance Type | vCPU | Memory | Disk |
| --- | --- | --- | --- |
| lite | 1/16 | 256 MiB | 2 GB |
| basic | 1/4 | 1 GiB | 4 GB |
| standard-1 | 1/2 | 4 GiB | 8 GB |
| standard-2 | 1 | 6 GiB | 12 GB |
| standard-3 | 2 | 8 GiB | 16 GB |
| standard-4 | 4 | 12 GiB | 20 GB |

The `dev` and `standard` instance types are preserved for backward compatibility and are aliases for `lite` and `standard-1`, respectively. The `standard-1` instance type now provides up to 8 GB of disk instead of only 4 GB.

See the getting started guide to deploy your first Container, and the limits documentation for more details on the available instance types and limits.
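For tooling that still receives the legacy names, the alias rule above amounts to a lookup before consulting the table. The sketch below is illustrative, with hypothetical names (`LEGACY_ALIASES`, `INSTANCE_TABLE`, `resolveInstanceType`); the figures are copied from the table in this entry.

```typescript
// Illustrative only: resolves legacy aliases to current instance types.
interface InstanceShape {
  vcpu: number;
  memoryMiB: number;
  diskGB: number;
}

const LEGACY_ALIASES = new Map<string, string>([
  ["dev", "lite"],
  ["standard", "standard-1"],
]);

const INSTANCE_TABLE = new Map<string, InstanceShape>([
  ["lite", { vcpu: 1 / 16, memoryMiB: 256, diskGB: 2 }],
  ["basic", { vcpu: 1 / 4, memoryMiB: 1024, diskGB: 4 }],
  ["standard-1", { vcpu: 1 / 2, memoryMiB: 4096, diskGB: 8 }],
  ["standard-2", { vcpu: 1, memoryMiB: 6144, diskGB: 12 }],
  ["standard-3", { vcpu: 2, memoryMiB: 8192, diskGB: 16 }],
  ["standard-4", { vcpu: 4, memoryMiB: 12288, diskGB: 20 }],
]);

/** Map a possibly-legacy name to its canonical name and shape. */
function resolveInstanceType(
  name: string,
): { name: string; shape: InstanceShape } | undefined {
  const canonical = LEGACY_ALIASES.get(name) ?? name;
  const shape = INSTANCE_TABLE.get(canonical);
  return shape ? { name: canonical, shape } : undefined;
}
```

With this lookup, `resolveInstanceType("standard")` resolves to `standard-1` with its 8 GB disk, and an unknown name returns `undefined`.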

You can now run more Containers concurrently with higher limits on CPU, memory, and disk.

| Limit | New Limit | Previous Limit |
| --- | --- | --- |
| Memory for concurrent live Container instances | 400 GiB | 40 GiB |
| vCPU for concurrent live Container instances | 100 | 20 |
| Disk for concurrent live Container instances | 2 TB | 100 GB |

You can now run 1000 instances of the `dev` instance type, 400 instances of `basic`, or 100 instances of `standard` concurrently.

This opens up new possibilities for running larger-scale workloads on Containers.

See the getting started guide to deploy your first Container, and the limits documentation for more details on the available instance types and limits.